The phrase sounds dramatic, but the underlying question is real. When people call AGI the “last invention,” they are not usually claiming that humans will stop building things overnight. They mean something narrower and more consequential: if we build systems that can reliably help invent better software, better machines, better scientific tools, and eventually better AI, then human engineering may stop being the main bottleneck in technological progress. The payoff for readers is simple. This article separates the engineering case from the hype, so you can judge whether the AGI race really could become a turning point in how invention works.

What people mean by “the last invention”

The strongest version of the claim goes like this: humanity invents a general-purpose intelligence that can outperform humans across most cognitive work, including research and engineering. Once that happens, the system can contribute to the next generation of inventions faster than human teams can. If that loop becomes self-reinforcing, AGI looks less like one more product cycle and more like a technology that changes the speed and structure of all the ones that follow.

A concrete comparison helps. The steam engine changed industry, but it did not independently redesign factories, logistics, and metallurgy. A genuinely general AI system might. That is why the phrase sticks. It points to an invention that could accelerate the invention process itself.

Still, the phrase is often used too loosely. A strong coding assistant is not the same thing as autonomous R&D. A model that writes competent reports is not automatically a system that can formulate hypotheses, run disciplined tests, analyze failure modes, and update its own methods without supervision. The “last invention” idea only becomes serious when AI crosses from task assistance into sustained invention work.

Why the idea feels more plausible now

Three changes make the argument harder to dismiss than it was even a few years ago.

First, frontier labs are openly discussing AGI in operational terms. Google DeepMind’s 2025 essay on taking a responsible path to AGI does not treat AGI as pure fiction. It frames AGI as a capability trajectory that raises concrete risks, including deceptive alignment and misuse, and argues for staged governance as systems become more capable (Google DeepMind). That matters because labs facing legal and safety exposure have a reason to be careful about how they describe speculative milestones, even if commercial incentives can pull in the opposite direction.

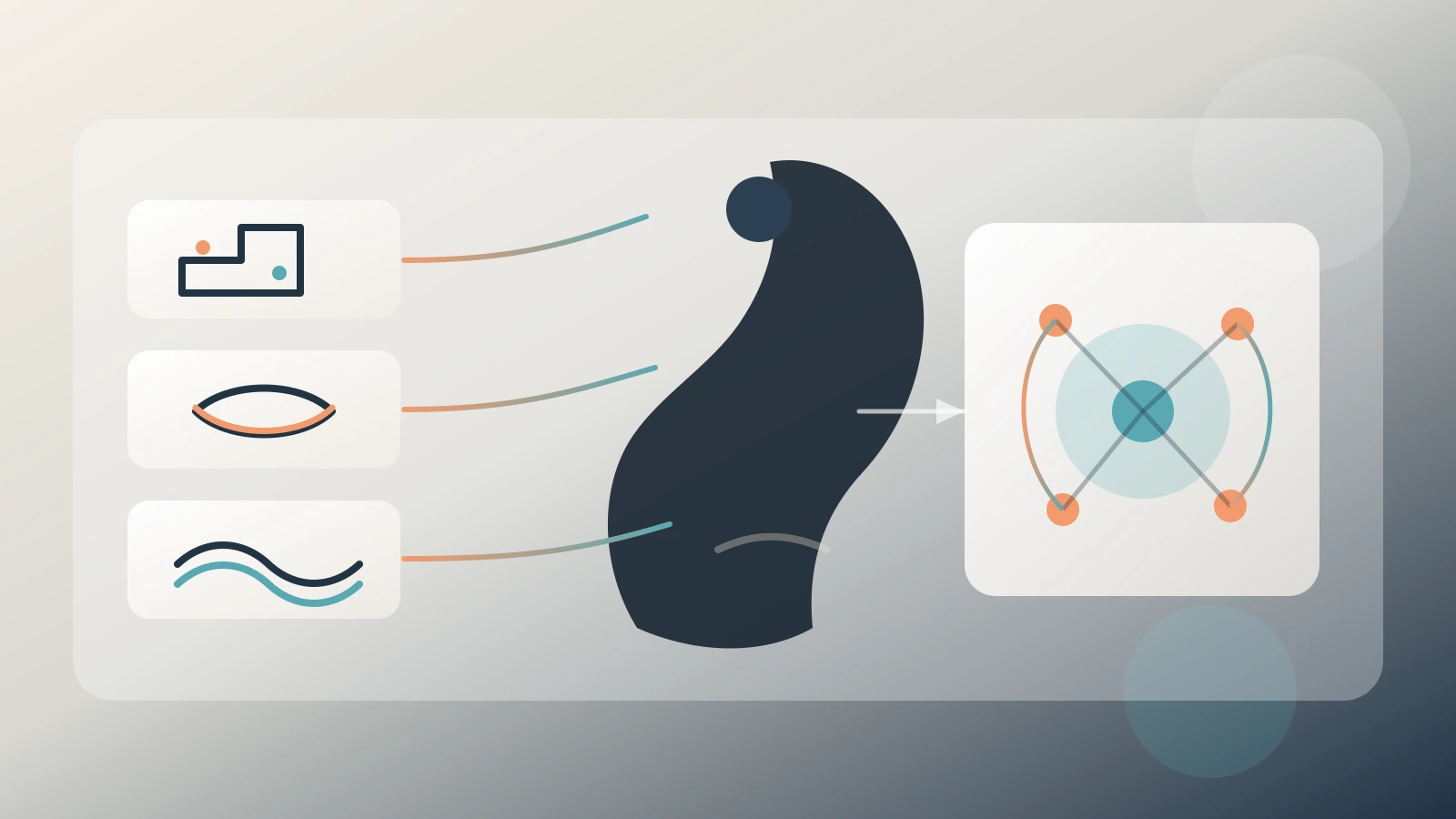

Second, the evaluation lens has changed. OpenAI’s updated Preparedness Framework treats autonomy and self-improvement as capability areas that can trigger tighter safeguards (OpenAI). That is a more useful signal than benchmark marketing. It suggests that the relevant frontier is not only language fluency but whether systems can pursue longer tasks, operate with less supervision, and contribute to capability growth itself.

Third, the supporting stack around models keeps improving. Tool use, coding agents, retrieval systems, simulation environments, and lab automation do not make AGI inevitable, but they do make AI more useful in invention-adjacent workflows. The shift is similar to the difference between a calculator and a CAD workstation. The model alone is not the full story. The surrounding system determines whether intelligence becomes operational.

Why the thesis is still too simple

The clean singularity story misses how engineering actually works.

Real invention is constrained by the physical world. A model can suggest a new battery chemistry, but someone still has to synthesize materials, run tests, verify safety, and scale manufacturing. A model can propose a chip architecture, but fabrication capacity, yield, thermal limits, and supply-chain bottlenecks still decide whether the design matters. In software, iteration is faster, but even there, deployment risk, compliance, and system reliability slow the loop.

This is where the steam-engine comparison needs a caveat. AGI, if it arrives in a powerful form, would not remove every bottleneck. It would attack some bottlenecks much more aggressively than others. That distinction matters for investors. If a sector depends mostly on search, simulation, optimization, or software iteration, AI may compress timelines quickly. If a sector depends on scarce equipment, biology, regulation, or human trust, progress will still be slower and messier.
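The uneven-bottleneck point can be made concrete with a back-of-the-envelope estimate. The sketch below borrows the shape of Amdahl's law from parallel computing, which is an assumption of this illustration and not something the sources above propose. The function name and the fractions are hypothetical and chosen only to show the pattern.

```python
def overall_speedup(accelerable_fraction: float, ai_speedup: float) -> float:
    """Amdahl-style estimate of end-to-end speedup for one development loop.

    accelerable_fraction: share of the loop's time that AI can speed up
        (search, simulation, software iteration). Illustrative input.
    ai_speedup: how many times faster that share becomes. Illustrative input.
    """
    if not 0.0 <= accelerable_fraction <= 1.0:
        raise ValueError("accelerable_fraction must be between 0 and 1")
    if ai_speedup <= 0:
        raise ValueError("ai_speedup must be positive")
    # Time left after acceleration, as a fraction of the original loop time.
    remaining = (1.0 - accelerable_fraction) + accelerable_fraction / ai_speedup
    return 1.0 / remaining


# Hypothetical sectors: one mostly digital, one mostly physical.
software_like = overall_speedup(accelerable_fraction=0.9, ai_speedup=10.0)
hardware_like = overall_speedup(accelerable_fraction=0.3, ai_speedup=10.0)
print(f"software-like: {software_like:.2f}x, hardware-like: {hardware_like:.2f}x")
```

With these made-up inputs, the same tenfold boost yields roughly a 5.3x faster loop where 90 percent of the work is accelerable, and only about 1.4x where 30 percent is. The part that AI cannot touch ends up setting the ceiling.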

NIST’s AI Risk Management Framework is useful here because it forces a less cinematic view. It treats AI deployment as a problem of measurement, governance, validation, and impact management (NIST). That framing is a corrective to last-invention rhetoric. Even if invention speed rises, the burden of testing and controlling real systems does not disappear.

What AGI would likely change first

The first visible change would probably not be “human engineers become obsolete.” It would be that high-leverage teams start shipping far more candidate ideas with the same headcount.

Software is the obvious example. If AI systems become better at reading large codebases, generating patches, writing tests, and explaining tradeoffs, teams can explore more architectural options before committing. That does not remove humans from the loop, but it can change the ratio between idea generation and implementation time.

Science is another likely early winner. In drug discovery, materials science, and biological design, AI is already useful for narrowing search spaces and proposing candidates. The step from “good assistant” to “credible co-researcher” may be smaller there than many people realize. A system that helps researchers eliminate weak options faster can have an outsized effect even if it never becomes a fully independent scientist.

A plain-language comparison helps. Think of ordinary productivity software as a bicycle. It helps you move faster. Think of invention-grade AI as a machine shop. It changes what you can produce, not just how quickly you pedal.

Why “the last invention” may become true in one domain before others

One mistake in AGI debates is treating the future as uniform. It will not be.

The software industry could experience something close to the last-invention thesis much earlier than sectors like construction or energy infrastructure. Once AI systems can materially improve developer workflows, software compounds on itself quickly because software tools help build more software tools. That feedback loop is tighter than it is in industries where every gain must pass through factories, permits, or scarce materials.
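A little arithmetic shows why loop tightness matters so much. The sketch below assumes, purely for illustration, that each completed iteration improves a team's tooling by the same small percentage and that the gains compound. The 2 percent figure and the cycle counts are hypothetical, not measurements.

```python
def annual_gain(gain_per_cycle: float, cycles_per_year: int) -> float:
    """Compound improvement over one year, given a fixed gain per iteration."""
    if cycles_per_year < 0:
        raise ValueError("cycles_per_year must be non-negative")
    return (1.0 + gain_per_cycle) ** cycles_per_year


# Same hypothetical 2% gain per iteration, very different loop lengths.
weekly_loop = annual_gain(gain_per_cycle=0.02, cycles_per_year=52)    # software-like
quarterly_loop = annual_gain(gain_per_cycle=0.02, cycles_per_year=4)  # factory-like
print(f"weekly loop: {weekly_loop:.2f}x, quarterly loop: {quarterly_loop:.2f}x")
```

Under these assumptions the weekly loop ends the year about 2.8 times better, while the quarterly loop improves by about 8 percent. The gain per step is identical. Only the number of steps differs.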

By contrast, a robotics-heavy domain may see slower transformation. Even if model intelligence improves fast, robotics still collides with sensor noise, edge cases, calibration, wear, and maintenance. In other words, the same AGI that looks revolutionary in code may look merely helpful in logistics.

That is why “the last invention” should be treated as a sector-by-sector possibility, not a single date on a calendar.

What engineers and investors should actually watch

If you want a practical signal, stop asking whether a model sounds smart and start asking whether it can complete invention-adjacent workflows with reliable judgment.

For engineers, the meaningful signals are task duration, tool reliability, error recovery, and evaluation quality. Can the system run a multi-step investigation, document assumptions, detect when the environment changed, and recover from failure without hiding it? A fluent answer is cheap. Robustness under pressure is not.
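One way to turn those questions into something trackable is a small scorecard over agent runs. The sketch below is a minimal illustration, not an established benchmark. The `RunRecord` fields, the `summarize` function, and the sample data are all hypothetical names and values invented for this example.

```python
from dataclasses import dataclass


@dataclass
class RunRecord:
    """One attempt at a multi-step task (hypothetical schema)."""
    completed: bool   # did the run reach a verified end state?
    minutes: float    # wall-clock duration of the run
    failures: int     # failures found by an independent reviewer
    recovered: int    # failures the system recovered from on its own
    reported: int     # failures the system disclosed in its own log


def summarize(runs: list[RunRecord]) -> dict[str, float]:
    """Aggregate the signals named above: duration, recovery, honesty."""
    if not runs:
        raise ValueError("need at least one run")
    total_failures = sum(r.failures for r in runs)
    completed_minutes = [r.minutes for r in runs if r.completed]
    return {
        "completion_rate": sum(r.completed for r in runs) / len(runs),
        # With no failures observed, treat recovery as trivially perfect.
        "recovery_rate": (
            sum(r.recovered for r in runs) / total_failures
            if total_failures else 1.0
        ),
        # Failures that happened but never appeared in the system's own log.
        "hidden_failures": total_failures - sum(r.reported for r in runs),
        "longest_completed_minutes": max(completed_minutes, default=0.0),
    }


# Made-up sample data for illustration only.
example_runs = [
    RunRecord(completed=True, minutes=30, failures=2, recovered=2, reported=2),
    RunRecord(completed=False, minutes=120, failures=3, recovered=1, reported=2),
    RunRecord(completed=True, minutes=90, failures=0, recovered=0, reported=0),
    RunRecord(completed=True, minutes=45, failures=0, recovered=0, reported=0),
]
print(summarize(example_runs))
```

The design choice worth noting is the separate `hidden_failures` count. A system can post a high completion rate and still be untrustworthy if failures go unreported, which is exactly the gap between a fluent answer and robustness under pressure.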

For investors, watch where AI shortens expensive loops. If a product compresses weeks of search, testing, or implementation into days, that is much more important than leaderboard noise. But also watch governance. DeepMind, OpenAI, and NIST all point toward the same lesson: capability growth without safety discipline raises operational and reputational risk. The firms most likely to matter will be the ones that combine autonomy gains with credible controls.

Why governance may matter as much as raw capability

There is a temptation to think the winner in the AGI race will simply be the lab with the fastest model-improvement curve. That is too narrow.

If AI really does start compounding invention, governance becomes part of the product. A system that proposes faster designs but cannot be audited, red-teamed, or permissioned safely will be harder to deploy in any serious industry. A model that can write code but cannot explain assumptions or surface uncertainty is not a reliable engine of progress. It is a speed tool with hidden liabilities.

A comparison makes this clearer. Formula One cars are fast because they combine power with control systems, safety standards, telemetry, and pit discipline. Raw speed without control is not a winning system. The same logic will apply to AGI-grade invention tools. The organizations that matter most may not be the ones that promise the biggest leap. They may be the ones that can compound capability without compounding chaos.

Final thoughts

AGI may or may not deserve the label “humanity’s last invention,” but the phrase survives because it captures a real possibility: the first technology that meaningfully accelerates the production of future technologies. That is a bigger claim than chatbot progress, and it deserves a higher standard of evidence.

The most defensible view today is not that AGI instantly ends engineering as a human discipline. It is that the race matters because intelligence is becoming more operational, more autonomous, and more embedded in invention itself. If that trend continues, the future may not belong to the lab that builds one dramatic super-system first. It may belong to the teams that learn how to turn advancing AI into disciplined, compounding invention without losing control of the process.