The dream behind empathy technology is easy to understand. If people could feel even a fraction of what others feel, maybe families would fight less, politics would cool down, and large conflicts would become harder to sustain. That dream gets sharper when neurotechnology enters the conversation. Brain-computer interfaces, biosensors, and affective models make it tempting to imagine a future where emotion can be transmitted, synchronized, or at least made harder to ignore. This article explains what neural sync and emotional BCI could realistically do, where the science stops, and why conflict resolution remains a social and political problem even if the tools get better.

What empathy technology actually means today

The phrase sounds futuristic, but the current field is a mix of several smaller technologies.

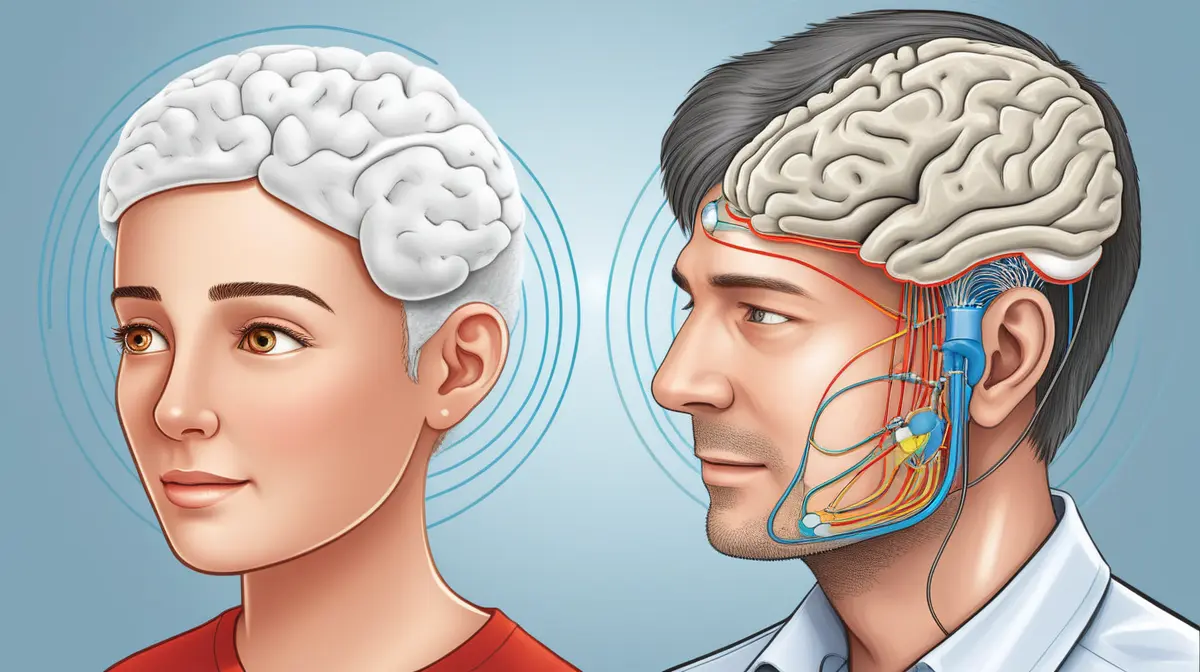

Some systems estimate emotional state from signals such as heart rate variability, facial movement, voice, or brain activity. Some use virtual reality to intensify perspective taking. Some neurotechnology teams explore closed-loop systems that respond to ongoing neural activity. In a few controlled experiments, researchers have even demonstrated limited brain-to-brain signaling between humans.
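To make "estimating state from heart rate variability" a little more concrete, here is a minimal Python sketch of RMSSD, a standard time-domain variability measure computed from the intervals between successive heartbeats. The function name and the sample intervals are illustrative assumptions, not taken from any particular product. A lower value indicates only reduced beat-to-beat variability. It does not identify a specific emotion.

```python
import math

def rmssd(rr_intervals_ms):
    """Root mean square of successive differences between RR intervals (ms)."""
    if len(rr_intervals_ms) < 2:
        raise ValueError("need at least two RR intervals")
    # Difference between each interval and the one that follows it.
    diffs = [b - a for a, b in zip(rr_intervals_ms, rr_intervals_ms[1:])]
    return math.sqrt(sum(d * d for d in diffs) / len(diffs))

# Hypothetical beat-to-beat intervals in milliseconds (made-up values).
relaxed = [800, 850, 790, 860, 805]
tense = [600, 605, 598, 603, 600]

print(rmssd(relaxed) > rmssd(tense))
```

The number is an input to inference, not a reading of feeling. Turning it into a claim about someone's emotional state requires a model and context that this sketch does not attempt to provide.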

A concrete comparison helps. A mood-tracking watch, a VR perspective-taking exercise, and a high-end neural interface can all sit under the broad cultural label of empathy technology, but they are not remotely equivalent. The first offers inference, the second offers simulation, and the third offers signal exchange. None of them amounts to a shared mind.

That matters because people often jump from narrow demonstrations to ideas like collective consciousness. The evidence does not support that jump. What the evidence does support is that technology can sometimes improve the conditions for perspective taking, emotional awareness, or communication. That is valuable, but it is a much smaller claim.

Why empathy is harder than just feeling more

Neuroscience has spent years showing that empathy is not a single switch. Reviews in Nature Neuroscience describe it as a combination of affective sharing, perspective taking, regulation, and interpretation. In plain language, empathy involves both resonance and boundaries. You have to register another person’s state without losing the ability to tell self from other.

This is why simple emotional mirroring is not enough. If a technology only amplifies raw feeling, it can create overload, bias, or panic rather than understanding. An everyday example makes that clear. Hearing someone cry may make you feel distress, but it does not automatically tell you what caused the pain, what response is appropriate, or what structural issue created the harm.

A study in Nature on perceived fairness and empathic neural responses makes the point in another way. Those responses are not neutral. They are shaped by context and social judgment. People do not automatically empathize equally with everyone. A tool that aims to increase empathy has to deal with that bias rather than assume a clean technical fix.

What brain-to-brain experiments actually prove

Controlled demonstrations of direct signaling between people are real, but they should be described honestly.

The University of Washington’s early brain-to-brain interface work showed that one person could influence another’s action through a constrained experimental setup. That is important because it proves information can cross from one nervous system to another through a technological chain. It does not prove shared experience, moral agreement, or mutual understanding.

A practical example helps. Sending a simple motor command is like sending a buzzer signal in a quiz game. It shows a channel exists. It does not show that rich inner states such as grief, trust, fear, or forgiveness can be transferred with the same clarity.

That is where neural sync usually gets overstated. Coordination is not the same thing as empathy. Two people can act in rhythm and still want different outcomes. The science supports the possibility of new communication pathways. It does not support the fantasy that a cable between brains would automatically dissolve political conflict.

Where empathy technology could help in the real world

The strongest use cases are likely to be local, structured, and voluntary.

In therapy, emotion-aware tools may help people notice dysregulation sooner. In education, well-designed simulations may help students understand conditions they have never experienced directly. In mediation, biofeedback dashboards might help participants see escalation patterns before a conversation collapses.

That is a more credible path than trying to solve war through synchronized feeling. Consider a family conflict. A system that shows when both people are physiologically escalating might help a mediator slow the pace, reset the room, and translate more carefully. That is not collective consciousness. It is practical decision support built around emotional data.
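The escalation check described above can be sketched in a few lines of Python. This is a minimal illustration under loud assumptions: heart rate is the only signal, "escalating" means the recent average sits at least 15 percent above the session's opening average, and every function name, parameter, and threshold here is hypothetical rather than drawn from any real mediation tool.

```python
from statistics import mean

def is_escalating(heart_rates, baseline_n=3, recent_n=3, rise_ratio=1.15):
    """True if the recent mean heart rate is at least rise_ratio times the baseline mean."""
    if len(heart_rates) < baseline_n + recent_n:
        return False  # not enough samples to compare baseline and recent windows
    baseline = mean(heart_rates[:baseline_n])   # first samples of the session
    recent = mean(heart_rates[-recent_n:])      # most recent samples
    return recent >= baseline * rise_ratio

def both_escalating(person_a, person_b, **kwargs):
    """Flag only when both participants cross the threshold at the same time."""
    return is_escalating(person_a, **kwargs) and is_escalating(person_b, **kwargs)

# Hypothetical heart-rate samples in beats per minute (made-up values).
speaker = [70, 72, 71, 80, 85, 88]
listener = [65, 66, 64, 66, 65, 67]

if both_escalating(speaker, listener):
    print("Suggest a pause")
else:
    print("No joint escalation detected")
```

The flag is a prompt for the mediator, not a verdict about either person. A raised heart rate can come from many causes, so a design like this would need the participants' consent and a human deciding what, if anything, to do with the signal.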

The same logic may apply in negotiation training, restorative justice settings, or cross-cultural education. Technology can scaffold better listening. It cannot replace the harder work of accountability, incentives, and institutional trust.

Why conflict is not only an empathy problem

This is the point that techno-optimism tends to miss.

Some conflicts persist not because people fail to feel, but because they benefit from the current arrangement, fear punishment, mistrust the system, or reject the legitimacy of the other side. Empathy can influence behavior, but it does not erase power, ideology, scarcity, or strategic incentives.

A comparison helps. A better microphone can make people hear each other clearly in a meeting. It cannot force them to agree on budget, land, or law. Likewise, conflict resolution tech may improve conditions for communication while leaving the core dispute untouched.

That is why the phrase “solve global conflict” needs restraint. Empathy matters. Prosocial behavior research supports that. But political conflict also runs through institutions, narratives, and material interests. Technology may help reduce misunderstanding at the margins. It will not replace diplomacy, reform, or justice.

The ethical risks are serious even if the promise is real

Any system that senses, predicts, or influences emotion creates pressure points.

The first risk is coercion. People may be told to wear empathy systems for workplace harmony, school discipline, or public safety. The second risk is manipulation. A platform that detects your emotional state can also shape it. The third risk is false moral certainty. If a dashboard says someone is calm or sincere, users may trust the system more than the person.

UNESCO’s neurotechnology ethics work is useful here because it frames mental privacy and autonomy as central, not optional. That is the right standard. A technology marketed as prosocial can still become invasive if people lose control over what is measured, who sees it, and how it is interpreted.

The most plausible future is augmented mediation, not merged minds

A realistic future for emotional BCI looks less like telepathy and more like assisted communication.

You might see systems that detect overload during therapy, tools that translate stress cues into clearer prompts for a mediator, or interfaces that help patients with limited speech express their internal state more accurately. Those are meaningful advances. They could change healthcare, caregiving, and some forms of conflict management.

But they are still tools around the edges of human relationship, not replacements for it. A good comparison is subtitles in a foreign-language film. Subtitles can dramatically improve understanding, but they do not make you native to the culture represented on screen. Future empathy tools may work the same way. They can narrow gaps without erasing them.

Why empathy training and empathy enforcement are very different

One reason empathy technology can sound attractive is that empathy itself is usually framed as an unquestioned good. But tools that encourage empathy are not the same as systems that demand emotional transparency.

A concrete example helps. A voluntary VR exercise used in medical training to improve patient understanding is one thing. A workplace system that monitors emotional resonance during meetings and scores employees on it is something else entirely. The first expands perspective. The second can become behavioral management disguised as social progress.

That distinction will matter if emotional BCI moves beyond laboratories. Designers will need to separate assistive use from compulsory use. Mediators and therapists may want tools that help participants slow down or notice dysregulation. Governments, employers, or schools may be tempted by something very different: systems that classify the right amount of concern, the right tone, or the right level of emotional alignment.

Once that happens, the technology stops being about empathy and starts being about compliance. That is why any serious roadmap for conflict resolution tech has to protect refusal, ambiguity, and individual boundaries. Empathy that can only exist under measurement is not empathy in the human sense. It is performance under supervision.

Final thoughts

Shared neural signals may eventually help people notice each other’s emotional state more accurately, regulate conversations more effectively, and design better therapeutic or mediation environments. That would be significant. It would also fall well short of collective consciousness or a technical solution to geopolitics.

The honest promise of empathy technology is not that it will make disagreement disappear. It is that, in narrow and voluntary settings, it may reduce some of the noise that keeps people from understanding what is happening in the room. That is still worth building. It just should not be confused with a shortcut around human institutions, moral responsibility, or the hard architecture of conflict itself.