The phrase “post-smartphone era” gets attention because people are tired of screens but not tired of what screens do for them. We want navigation, messaging, shopping, search, and media without always holding a slab of glass in front of our faces. That is why AR contact lenses keep showing up in future-of-tech conversations. They promise a screenless world where digital information sits directly in view instead of being trapped inside a phone. The practical payoff here is simple: this article explains what AR contact lenses can realistically do, why visual computing is moving forward, and why the smartphone is more likely to be absorbed into wearables than suddenly killed off.

Why the post-smartphone idea keeps returning

Every generation of consumer tech eventually runs into the same friction. The device becomes powerful, but the form factor starts to feel clumsy.

Smartphones are efficient, but they constantly pull attention downward into a private rectangle. That is fine when you are alone. It is awkward when you are walking, shopping, working with other people, or trying to stay aware of your surroundings. A comparison makes the appeal of wearables obvious. Wireless earbuds did not kill the phone, but they removed one recurring point of friction. AR wearables are chasing the same kind of shift on a much larger scale.

This is why the phrase augmented reality future resonates with both consumers and brands. It hints at computing that feels closer to sight itself. The user does not open a screen. The interface appears where attention already is.

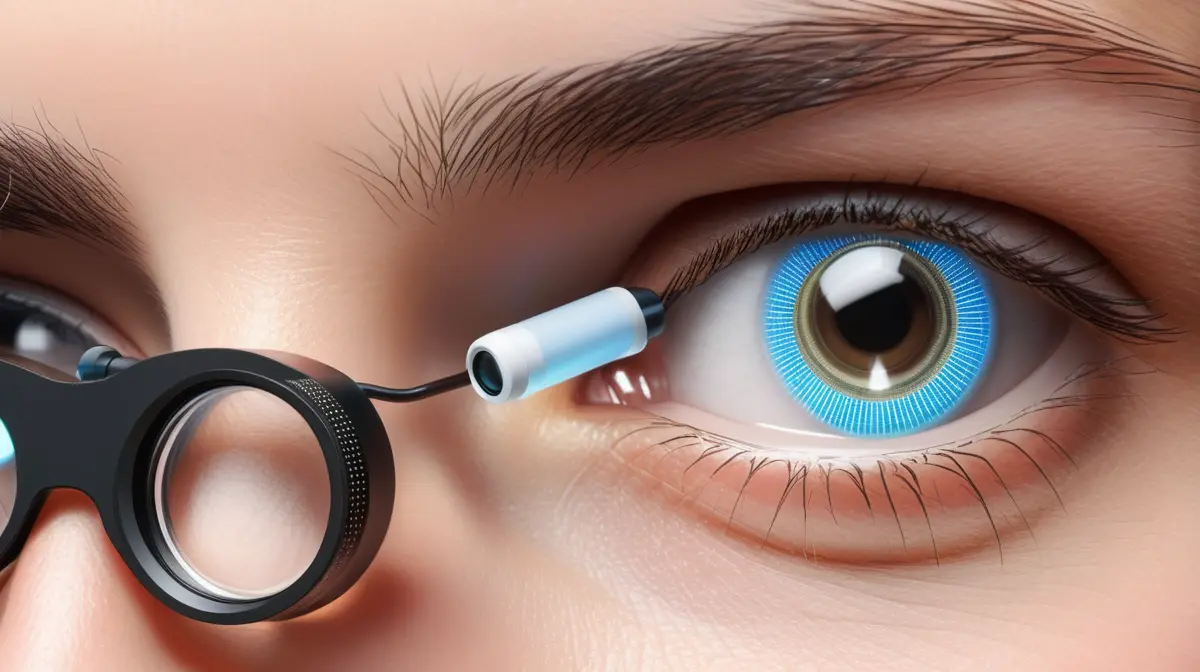

Why AR contact lenses sound more disruptive than smart glasses

AR smart glasses are already familiar enough for people to picture them. Contact lenses feel more radical because they remove the last visible hardware step.

A smart-glasses frame still signals that a person is using a device. A lens promises something quieter. That difference matters socially and commercially. If the interface becomes nearly invisible, the technology starts to feel less like an accessory and more like a layer on reality.

A practical comparison helps. Laptops turned computing into a portable activity. Smartphones made it constant. AR contact lenses would try to make it ambient. That is a much bigger jump in how people experience interfaces, even if the underlying services are the same ones already handled by phones today.

The problem is that the smaller the hardware gets, the harder everything else becomes. Power, heat, optics, safety, wireless links, and user comfort all get more difficult at contact-lens scale.

What AR contact lenses can realistically do first

The strongest early use cases are narrow overlays, not full cinematic mixed reality.

Think glanceable translation, simple prompts, notifications, navigation cues, or low-density information for medical, industrial, and accessibility scenarios. That is a more realistic near-term path than projecting a rich 3D workspace into daily life with no compromises.

A concrete example helps. A warehouse worker might benefit from directional arrows, picking instructions, or hazard prompts placed in their line of sight. A consumer walking through a city might use subtle wayfinding cues or real-time caption support. Those are useful because they deliver just enough information to reduce friction without requiring a full virtual desktop on the eye.

This is where visual computing becomes practical. The best early systems will probably show less than people imagine, not more.
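The "show less" idea can be made concrete with a small sketch. The Python below is illustrative only: the `Cue` type, the priority scale, and the limits of two items and 24 characters are hypothetical choices for this example, not properties of any real lens or AR platform. It keeps only short cues and shows the most urgent few.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Cue:
    text: str
    priority: int  # hypothetical scale: higher means more urgent


def select_glanceable(cues, max_items=2, max_chars=24):
    """Keep the few most urgent cues that are short enough to read at a glance."""
    # Drop anything too long to read in a quick glance (assumed limit).
    short = [c for c in cues if len(c.text) <= max_chars]
    # Most urgent first, then cap how many are shown at once.
    ranked = sorted(short, key=lambda c: c.priority, reverse=True)
    return ranked[:max_items]


cues = [
    Cue("Turn left in 20 m", 8),
    Cue("Hazard: forklift ahead", 10),
    Cue("New message from Sam", 3),
    Cue("Your weekly summary is ready to view in the app", 5),
]

for cue in select_glanceable(cues):
    print(cue.text)
```

With these sample cues, the hazard prompt and the turn instruction are shown, the low-priority message is held back, and the long summary never qualifies. The limits are the design choice here: a low-density display is defined as much by what it refuses to show as by what it renders.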

Why the smartphone still solves problems lenses do not

For all the hype around a screenless world, the phone remains hard to replace because it is doing several jobs at once.

It is a battery pack, a camera system, a secure identity device, a payment terminal, a typing surface, a processing node, and an app platform. AR lenses would have to replace all of those functions or depend on another device that already handles them.

A comparison makes the point clear. Smartwatches became mainstream without replacing phones because they work best as companions. AR contact lenses are likely to follow a similar path for a while. They may become the front-end display while the smartphone, pendant, or pocket compute device handles the heavy work in the background.

That matters because many “phones are dead” headlines ignore system architecture. Even if the screen becomes optional, the smartphone’s computing role may survive longer than the screen-centric mental model suggests.
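The companion split described above can be sketched in a few lines. This is a minimal Python illustration under stated assumptions: `CompanionDevice`, `LensDisplay`, `LensFrame`, and the arrow glyphs are hypothetical names invented for this example, and no real lens exposes this interface. The point is only the division of labor, with the heavy work on the pocket device and a tiny payload sent to the display.

```python
from dataclasses import dataclass

# Hypothetical glyph set the display is assumed to understand.
ARROWS = {"left": "<-", "right": "->", "straight": "^"}


@dataclass(frozen=True)
class LensFrame:
    """Tiny payload sent to the lens (assumed format)."""
    glyph: str
    label: str


class CompanionDevice:
    """Stands in for the phone, pendant, or pocket compute unit."""

    def plan_route(self, steps):
        # Routing, identity, networking, and other heavy work would live here.
        # Only a compact frame per step is handed to the display.
        return [LensFrame(ARROWS[turn], f"{meters} m") for turn, meters in steps]


class LensDisplay:
    """Stands in for the lens: it renders frames and computes nothing else."""

    def __init__(self):
        self.current = None

    def show(self, frame):
        self.current = f"{frame.glyph} {frame.label}"
        return self.current


phone = CompanionDevice()
lens = LensDisplay()
frames = phone.plan_route([("left", 20), ("straight", 150)])
print(lens.show(frames[0]))
```

In this arrangement the screen is optional but the phone is not: remove `CompanionDevice` and the display has nothing to render.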

The hardest engineering problems are not software

Most people think of AR as a content problem, but contact lenses are constrained first by hardware and human biology.

A lens needs safe materials, stable optics, reliable positioning, acceptable comfort, and a power budget small enough to avoid dangerous heat. It also needs a communication path to external compute and some way to maintain visibility under real-world conditions such as blinking, tear film changes, motion, and lighting shifts.

A simple comparison helps. Building a great smartwatch app is difficult. Building a device someone is willing to wear on the surface of the eye for long periods is a different category of challenge entirely. The design constraints are closer to medical-device thinking than ordinary consumer-electronics iteration.

This is why smart glasses remain the more plausible bridge technology. They have more room for batteries, sensors, radios, and processors, which makes product tradeoffs far easier.

Why brands and retailers care anyway

Retail and marketing teams are interested because the shift from screens to overlays could change where digital influence happens.

Today, discovery often starts on a phone feed or a search results page. In a stronger AR ecosystem, some of that discovery may happen in physical context: product information in-store, location-aware recommendations, guided comparisons, or branded overlays tied to place and intent.

A concrete example helps. Instead of searching for shoe details on a product page, a shopper might look at the shelf and see price, sizes, reviews, and fit guidance directly in view. That would not just be a new display. It would change how retail journeys are designed.

This is why wearable tech matters beyond hardware enthusiasts. If interfaces move into the environment, marketers, retailers, and media companies have to rethink what a page, placement, or impression even is.

Privacy and social rules will matter as much as the optics

Invisible interfaces change more than convenience. They also hide cues that other people used to be able to see.

A phone is legible. You can usually tell when someone is using it. AR lenses reduce that social signal. That raises obvious questions about recording, attention, consent, and manipulation. If a person can see prompts or overlays that others cannot detect, interaction norms get more complicated.

A practical comparison helps. Smart glasses already trigger concern when people suspect hidden cameras. AR contact lenses would intensify that discomfort because the hardware disappears almost completely from public view. Even when a device is harmless, people may still feel watched or asymmetrically informed.

This is why the augmented reality future will depend on trust, not only on display quality. Rules, visible indicators, platform restrictions, and setting-specific norms will matter if the technology is ever going to feel socially acceptable.

The likely path is layered, not sudden

The most realistic future is not “contact lenses kill the screen forever” in one dramatic leap. It is a layered transition.

First come better audio interfaces and lighter wearables. Then smarter glasses with improved displays and camera systems. Then, in some sectors, specialized lenses or ultra-light eye-based displays that solve narrow tasks better than phones do. Over time, those layers may reduce how often the phone’s screen is the primary interface, even if the phone itself remains central in the stack.

A comparison with laptops helps. Phones did not erase laptops. They changed when and why people use them. AR wearables may do the same to phones. The device does not vanish. Its role shifts.

What a screenless world would actually feel like

If visual computing matures, the biggest user change may not be spectacle. It may be reduced switching.

People would spend less time unlocking, opening, searching, and bouncing between apps just to answer small questions. Information would arrive more contextually. Interfaces would become more glanceable and less page-based. That kind of change sounds subtle, but it can reshape habits faster than one breakthrough demo ever could.

A practical example helps. Navigation today often means repeatedly glancing between the street and the phone. A mature AR system that quietly places turn guidance where you are already looking could feel instantly better, even if nothing else about your digital life changes.

That is the most believable route to a screenless world: not a total break from today, but a steady removal of unnecessary screen checks.

Final Thoughts

AR contact lenses may become one of the most important symbols of the post-smartphone era, but they are unlikely to kill the phone in the simple way the headline suggests. The better way to understand them is as the most extreme version of a larger shift toward ambient, wearable, and visual computing.

The real question is not whether one gadget destroys another. It is whether interfaces move closer to sight, context, and continuous awareness. If they do, the smartphone may stop being the center of digital life even before it disappears from our pockets. That is a much more plausible future, and it is arguably the more interesting one.