For decades, the story of AI dominating humans in competitive environments has been a digital one. Chess engines crushed grandmasters. AlphaGo stunned the world. Language models passed bar exams. But every single one of those wins happened in a controlled, virtual space — a world of ones and zeroes where the messiness of physical reality never enters the frame.

That changed this week.

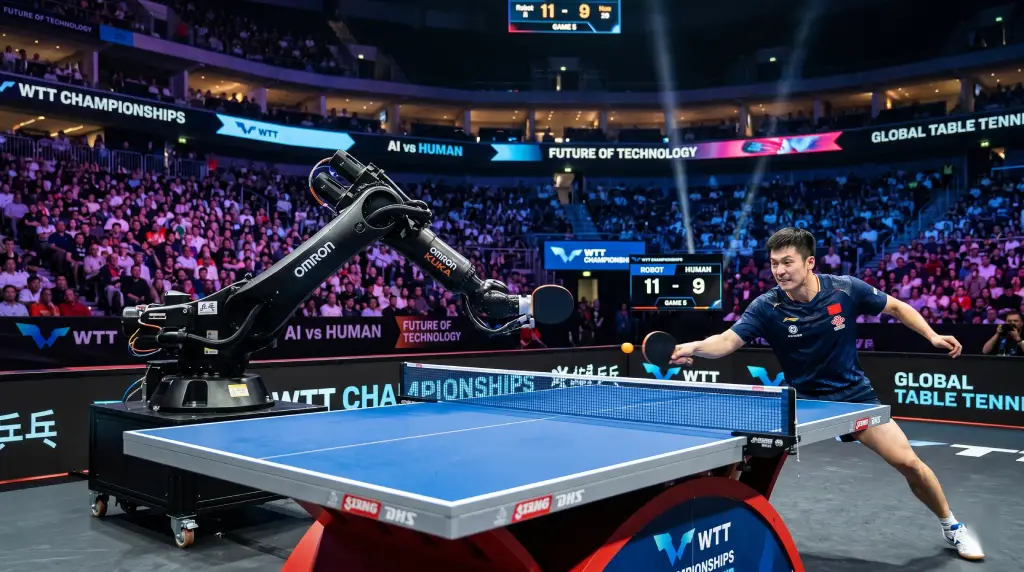

On April 23, 2026, Sony AI published research in Nature introducing Project Ace, a fully autonomous robotic system that can defeat elite and professional-level human table tennis players in real-time competitive matches. According to Sony AI, it is the first time any AI system has achieved human expert-level performance in a commonly played competitive sport in the physical world.

That distinction matters enormously. And if you care about where AI is actually headed, this is a story worth paying attention to.

Why Table Tennis Is a Brutally Hard Problem for AI

Table tennis might look simple on the surface — two players, a small ball, a table. But in terms of the computational and physical demands it places on any system trying to master it, it is extraordinarily punishing.

The ball can travel at more than 100 km/h. It carries heavy spin that bends its path through the air and changes how it bounces. Each shot crosses the table in a fraction of a second. A player’s shot decisions happen faster than most people’s conscious awareness. Opponents adapt, bluff, and shift strategies within a single game. There is no room for lag, no moment to pause and recalculate.

For a robot to compete at this level, it cannot just be fast. It has to perceive the ball’s exact 3D position and spin in real time, make a shot decision, and execute a precise physical response — all within the span of a human blink.
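A rough back-of-envelope calculation shows how tight that window is. The sketch below assumes a regulation 2.74 m table, a straight-line path, and constant speed (real shots arc, slow down from drag, and bounce), so treat it as an order-of-magnitude estimate rather than a measured figure. The function name `crossing_time_ms` is hypothetical, chosen for illustration.

```python
TABLE_LENGTH_M = 2.74  # regulation table length in metres


def crossing_time_ms(speed_kmh: float, distance_m: float = TABLE_LENGTH_M) -> float:
    """Time for a ball to cover `distance_m` at constant speed, in milliseconds.

    Simplifying assumptions: straight-line flight, no drag, no bounce.
    """
    if speed_kmh <= 0:
        raise ValueError("speed must be positive")
    speed_m_per_s = speed_kmh * 1000.0 / 3600.0  # km/h -> m/s
    return distance_m / speed_m_per_s * 1000.0    # s -> ms


if __name__ == "__main__":
    for kmh in (50, 100):
        print(f"{kmh} km/h: ~{crossing_time_ms(kmh):.0f} ms to cross the table")
```

At 100 km/h this comes out to roughly 99 ms, about a tenth of a second, for the ball to travel end to end. Perception, decision, and motion all have to fit inside that budget.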

That has been, as Sony AI themselves note, one of the most significant unsolved challenges in robotics for years.

How Ace Actually Works

Sony AI’s team built Ace from the ground up with three core innovations working together.

The first is a high-speed perception system unlike anything fielded in a robot before. Ace uses nine active pixel sensor cameras to track the ball’s precise position in three-dimensional space. Alongside these, three gaze control systems equipped with event-based vision sensors — technology originally developed by Sony’s semiconductor division — capture the ball’s angular velocity and spin in real time. These are not off-the-shelf components. They were engineered specifically for this task, pushing the limits of what sensors can detect at high speed.
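Sony AI’s paper is the authority on how Ace actually fuses its camera data. As a generic illustration of why several calibrated cameras can pin down a 3D position, the sketch below uses a standard least-squares (midpoint) triangulation: each camera contributes a ray from its optical centre toward the detected ball, and the estimate is the point with the smallest total squared distance to all rays. The `triangulate` function, the camera positions in the usage example, and the assumption that each 2D detection has already been converted into a 3D ray are hypothetical. Spin estimation from the event-based sensors is not modelled here.

```python
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


def _normalize(v: Vec3) -> Vec3:
    n = (v[0] ** 2 + v[1] ** 2 + v[2] ** 2) ** 0.5
    return (v[0] / n, v[1] / n, v[2] / n)


def _det3(m: List[List[float]]) -> float:
    """Determinant of a 3x3 matrix by cofactor expansion."""
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))


def triangulate(rays: List[Tuple[Vec3, Vec3]]) -> Vec3:
    """Least-squares point closest to a set of rays given as (centre, direction).

    Minimises sum_i ||P_i (p - c_i)||^2 with P_i = I - d_i d_i^T, which leads to
    the 3x3 linear system (sum_i P_i) p = sum_i P_i c_i.
    """
    A = [[0.0] * 3 for _ in range(3)]
    b = [0.0] * 3
    for centre, direction in rays:
        d = _normalize(direction)
        for i in range(3):
            for j in range(3):
                # P_ij projects onto the plane perpendicular to this ray
                p_ij = (1.0 if i == j else 0.0) - d[i] * d[j]
                A[i][j] += p_ij
                b[i] += p_ij * centre[j]
    det = _det3(A)
    if abs(det) < 1e-12:
        raise ValueError("rays are (nearly) parallel; position is ill-defined")
    # Cramer's rule: coordinate k = det(A with column k replaced by b) / det(A)
    result = []
    for k in range(3):
        mk = [[b[i] if j == k else A[i][j] for j in range(3)] for i in range(3)]
        result.append(_det3(mk) / det)
    return (result[0], result[1], result[2])


if __name__ == "__main__":
    ball = (1.37, 0.76, 0.30)  # hypothetical ball position in metres
    cameras = [(0.0, -1.0, 2.0), (2.74, -1.0, 2.0), (1.37, 2.5, 2.5)]
    # Noise-free rays from each camera centre toward the ball
    rays = [(c, tuple(bi - ci for bi, ci in zip(ball, c))) for c in cameras]
    print(triangulate(rays))
```

With perfect rays the estimate recovers the true position. With real, noisy detections, using more cameras than the minimum of two lets the least-squares fit average out errors in any single view, which is one plausible benefit of a multi-camera rig, though the article does not say why nine were chosen.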

The second is a control system built on model-free reinforcement learning. Rather than pre-programming Ace with a library of shots or hardcoded response rules, the team used a learning approach that let the robot develop its own strategies through practice. “Model-free” means the controller learns which actions pay off directly from experience, without relying on a fixed internal model of how the world will respond, and that lets it adapt in real time. This is what gives Ace its flexibility under pressure.
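To make “model-free” concrete, here is a deliberately tiny tabular Q-learning sketch on a hypothetical toy task. It is not Sony AI’s method, whose details are in the Nature paper, and a real robot controller would use function approximation over continuous states and actions rather than a lookup table. The agent is never told the rule that decides whether a return succeeds; it only sees rewards and learns action values directly from them. Because each toy episode is a single shot, the update reduces to the one-step (contextual-bandit) case of Q-learning, with no bootstrapped next-state term. All names here (`toy_reward`, `train_q_table`, `greedy_policy`, the three zones) are illustrative assumptions.

```python
import random
from typing import Dict, Tuple

# Hypothetical toy task: the ball arrives in one of three zones and the agent
# picks one of three paddle positions. The success rule lives only inside
# toy_reward; the learner never reads it, which is what makes this model-free.
ZONES = (0, 1, 2)
PADDLE_POSITIONS = (0, 1, 2)

QTable = Dict[Tuple[int, int], float]


def toy_reward(zone: int, position: int) -> float:
    """Hidden environment rule: +1 for a successful return, 0 for a miss."""
    return 1.0 if zone == position else 0.0


def train_q_table(episodes: int = 2000, alpha: float = 0.1,
                  epsilon: float = 0.2, seed: int = 0) -> QTable:
    rng = random.Random(seed)  # seeded for reproducible runs
    q: QTable = {(s, a): 0.0 for s in ZONES for a in PADDLE_POSITIONS}
    for _ in range(episodes):
        zone = rng.choice(ZONES)  # observe where the ball is coming
        # Epsilon-greedy: mostly exploit current estimates, sometimes explore
        if rng.random() < epsilon:
            action = rng.choice(PADDLE_POSITIONS)
        else:
            action = max(PADDLE_POSITIONS, key=lambda a: q[(zone, a)])
        r = toy_reward(zone, action)
        # One-shot episode, so the target is just the observed reward r
        q[(zone, action)] += alpha * (r - q[(zone, action)])
    return q


def greedy_policy(q: QTable) -> Dict[int, int]:
    """Best-known paddle position for each zone."""
    return {s: max(PADDLE_POSITIONS, key=lambda a: q[(s, a)]) for s in ZONES}


if __name__ == "__main__":
    print(greedy_policy(train_q_table()))
```

After training, the greedy policy maps each zone to the matching paddle position, even though that mapping appears only inside `toy_reward` and is never exposed to the learner.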

The third is state-of-the-art high-speed robotic hardware capable of executing precise, rapid physical movements at the edge of human reaction time. Perception and decision-making only matter if the body can actually follow through — and Ace’s hardware was purpose-built to make that possible.

How It Performed Against Real Players

Sony AI didn’t test Ace against amateurs. The robot was pitted against professional and elite-level competitors in genuine match settings.

In December 2025 matches against four new players — two professional and two elite — Ace defeated both elite players and one of the professional players. It lost to the second professional opponent.

By March 2026, when matched against three new professional players, Ace defeated all three of them at least once.

And the performance kept improving. Ace showed higher shot speeds, more aggressive shot placement closer to the table edge, and faster rally pacing compared to earlier evaluations. The robot was not just competing — it was getting better, developing a more aggressive and strategic style of play under pressure.

Peter Dürr, Director of Sony AI in Zürich and the project lead, put it plainly:

“Table tennis is a game of enormous complexity that requires split-second decisions as well as speed and power. This research breakthrough highlights the potential of physical AI agents to perform real-time interactive tasks.”

Why This Matters Beyond the Trophy Case

Here is the part that most coverage will underplay.

Winning at table tennis is not the point. The point is what Ace had to solve in order to win.

Real-time physical perception. Split-second decision-making. Continuous adaptation to an unpredictable adversary. Precise execution in a dynamic, uncontrolled environment. These are not table tennis skills. They are the foundational capabilities that separate genuinely useful physical AI from the fragile, lab-controlled robots that have dominated robotics research for the past twenty years.

Peter Stone, Chief Scientist at Sony AI, said it directly:

“This breakthrough is much bigger than table tennis. It represents a landmark moment in AI research, showing, for the first time, that an AI system can perceive, reason, and act effectively in complex, rapidly changing real-world environments that demand precision and speed.”

The longer-term path from here points toward AI systems that could operate reliably in surgical settings, disaster response scenarios, manufacturing environments where precision matters, and anywhere else that requires a machine to perceive and react to a physical world that doesn’t wait.

The Broader Context: AI Is Moving Off the Screen

Ace does not exist in a vacuum. It arrives at a moment when physical AI is dominating the conversation across the industry.

NVIDIA has been pushing hard on what it calls “physical AI” — robots that can understand natural language instructions and perform complex tasks in real-world environments. At GTC 2026, the company introduced new Isaac GR00T models and Cosmos world simulation tools specifically designed to train robots faster and deploy them more reliably outside lab conditions.

Humanoid robots drew global attention at Hong Kong’s InnoEX 2026 fair in April, where more than 100 robots were shown performing physical tasks that would have seemed science fiction a decade ago — boxing, rescue operations, and highly coordinated movement.

The picture emerging is not of AI as a purely digital phenomenon. It is of AI entering the physical world in a serious, systematic way. Ace is the sharpest demonstration of what that transition looks like when done rigorously and published in peer-reviewed science.

What Comes Next

Sony AI’s research has now set a new public benchmark for what physical AI can achieve. The question researchers are already asking is where the next wall is.

Table tennis is one specific domain. The architecture behind Ace — high-fidelity sensing, real-time adaptive decision-making, precise physical execution — is general enough to be applied far more broadly. The team’s own framing points toward safety-critical environments and real-time human interaction as the natural next frontiers.

The gap between an AI that can beat you at ping pong and an AI that can assist a surgeon or navigate a disaster site is enormous, technically and ethically. But the underlying challenge — perceiving a dynamic physical world and acting on it in real time with precision — is now demonstrably solvable.

That is the actual news here. Not the score of the match. But what the score tells us about where AI’s expanding frontier really is.

Sources: Sony AI Official Announcement, Nature (April 23, 2026), NVIDIA GTC 2026 Physical AI Roadmap.