The phrase “brain hacking” sounds extreme, but the core issue is simple. If a device reads or writes neural signals, it creates a new class of sensitive data and a new attack surface. The immediate challenge is not only preventing dramatic hostile-takeover scenarios. It is also protecting users from surveillance, spoofed inputs, insecure updates, weak authentication, and misuse of intimate neural data. The payoff here is practical: this article explains what neural cybersecurity really means, what realistic attacks would look like, and how a person might one day “ad-block” their own brain by demanding layered safeguards rather than hype.

Why neural data is different from ordinary personal data

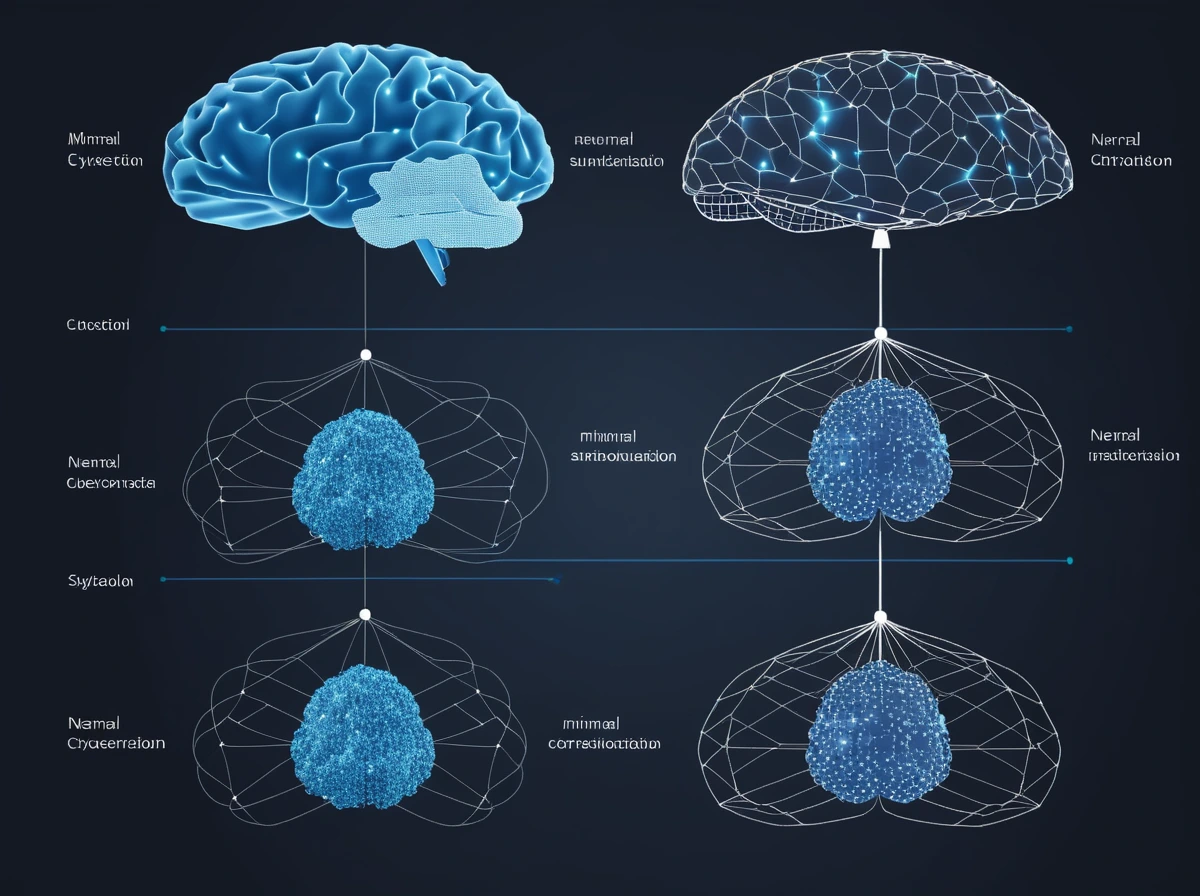

Most digital privacy debates begin with familiar categories: messages, location, purchases, health records. Neural data is different because it sits closer to perception, intention, recognition, and internal state.

That does not mean every BCI can read private thoughts in plain language. Most cannot. But it does mean that brain-linked systems can generate data about attention, motor intent, sensory response, recognition, or mental state that people rightly experience as deeply private.

UNESCO’s neurotechnology work treats mental privacy and brain-data confidentiality as central ethical issues, not optional extras (UNESCO). That framing matters because many security discussions still talk as if neural devices were just another wearable. They are not. A breach involving heart-rate data is serious. A breach involving signals tied to intention or recognition reaches closer to personhood itself.

A practical comparison helps. Password theft exposes what you know. Neural data misuse may expose how you respond before you even have a chance to explain yourself.

What brain hacking would realistically look like

The media version of brain hacking usually jumps to total mind control. Real attack paths are less cinematic and more believable.

One risk is eavesdropping on neural data streams or related metadata. Another is tampering with software updates, calibration settings, or device commands. A third is spoofing inputs so the system responds to false signals. A fourth is data misuse by the platform itself, even without an outside hacker.

One legal and cybersecurity review of BCIs makes this point clearly: attacks could range from eavesdropping on neurological data to manipulating brain activity, and existing law still leaves major gaps (PubMed).

That should change how people imagine the threat. The biggest near-term problem is not a villain taking over your mind remotely. It is a chain of smaller failures: poor authentication, weak software integrity, permissive data collection, insecure cloud handling, and vague user consent.

Why encryption is necessary but not enough

People often respond to this topic by saying “just encrypt it.” Encryption matters, but it is not the whole solution.

The logic of NIST’s Cybersecurity Framework still applies: identify assets, protect them, detect abuse, respond to incidents, and recover safely (NIST). But neural interfaces add extra difficulty because the protected system is not just a file server. It is a device participating in real-time interaction with a person’s body or mind.

A comparison makes the point clear. Encrypting a streaming service protects content in transit. Securing a neural interface also requires trusted hardware, update signing, access control, fail-safe modes, clear consent boundaries, and careful limits on what the device is even allowed to collect.

If the device is collecting far more data than it needs, encryption only protects an oversized risk. Good neural cybersecurity starts earlier, with data minimization.
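A minimal sketch of what data minimization could look like in software, assuming a device that produces samples as simple key-value records. The task name, field names, and the `minimize` helper are hypothetical and chosen only for illustration; a real device would define its own schema. The idea is that anything not on the allowlist for the current task is dropped before encryption or transmission is even considered.

```python
# Hypothetical allowlist: which fields each task is permitted to use.
ALLOWED_FIELDS_BY_TASK = {
    "cursor_control": {"timestamp_ms", "motor_intent_x", "motor_intent_y"},
}


def minimize(sample: dict, task: str) -> dict:
    """Return only the fields the given task is allowed to use.

    Unknown tasks get an empty allowlist, so nothing leaves the device.
    """
    allowed = ALLOWED_FIELDS_BY_TASK.get(task, set())
    return {key: value for key, value in sample.items() if key in allowed}


# Illustrative sample with more signals than cursor control needs.
raw_sample = {
    "timestamp_ms": 1000,
    "motor_intent_x": 0.42,
    "motor_intent_y": -0.10,
    "attention_score": 0.87,            # not needed for cursor control
    "raw_signal_window": [0.1, 0.2, 0.3],  # not needed for cursor control
}

outgoing = minimize(raw_sample, "cursor_control")
print(sorted(outgoing))
```

Only the three cursor-control fields survive in `outgoing`. Encryption would then be applied to that smaller record, so a later breach exposes less.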

The most realistic attacks may start before the implant

Another important correction is that neural cybersecurity is not only about the implant itself.

A weak mobile companion app, a cloud dashboard, a clinician portal, or a badly secured update server may all be easier to attack than the implanted hardware. In practice, many digital compromises begin through the softer edges of a system, not through its most dramatic component.

A comparison helps. People imagine breaking into a vault, but many real breaches begin with phishing, weak credentials, or a poorly configured admin panel. Brain-interface ecosystems will be similar. The neural device may be the crown jewel, but the attack path could begin with ordinary operational sloppiness around it.

That is why brain hacking should be understood as ecosystem hacking. The system includes hospitals, manufacturers, apps, cloud services, technicians, and support channels, not just electrodes.

What a real mental firewall would involve

The phrase “mental firewall” sounds futuristic, but the design principle is already familiar.

A firewall does not only block bad traffic. It defines what traffic is allowed in the first place. A meaningful BCI defense stack would likely include:

- strict authentication for every device and controller

- signed firmware and verified updates

- local processing where possible instead of unnecessary cloud transfer

- limited permissions on what neural data may be stored

- clear user-controlled modes for disconnecting, muting, or restricting functions

- tamper detection and graceful fail-safe states

That last point matters more than people think. If an ordinary app crashes, it is inconvenient. If a brain-linked system crashes or accepts bad input, the consequences may be physical, psychological, or both.
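The default-deny idea and the fail-safe state can be sketched together. The example below assumes a hypothetical controller with two modes and two commands; the command names, the amplitude limit, and the `CommandGate` class are illustrative assumptions, not any real device's interface. Commands are accepted only if they are authenticated and explicitly allowed in the current mode, and an out-of-range request drops the device into a restricted safe mode instead of being applied.

```python
from enum import Enum


class Mode(Enum):
    NORMAL = "normal"
    SAFE = "safe"  # restricted state: status reads only


# Hypothetical command allowlist per mode (default-deny for everything else).
ALLOWED_COMMANDS = {
    Mode.NORMAL: {"read_status", "set_stimulation"},
    Mode.SAFE: {"read_status"},
}
MAX_AMPLITUDE_MA = 2.0  # illustrative limit, not a clinical value


class CommandGate:
    def __init__(self) -> None:
        self.mode = Mode.NORMAL

    def handle(self, command: str, authenticated: bool,
               amplitude_ma: float = 0.0) -> str:
        # 1. Nothing is processed without authentication.
        if not authenticated:
            return "rejected: unauthenticated"
        # 2. Only commands on the allowlist for the current mode pass.
        if command not in ALLOWED_COMMANDS[self.mode]:
            return "rejected: not allowed in mode " + self.mode.value
        # 3. Bad input triggers the fail-safe state instead of being applied.
        if command == "set_stimulation" and not (
            0.0 <= amplitude_ma <= MAX_AMPLITUDE_MA
        ):
            self.mode = Mode.SAFE
            return "rejected: out of range, entering safe mode"
        return "accepted"


gate = CommandGate()
print(gate.handle("set_stimulation", authenticated=True, amplitude_ma=1.0))
print(gate.handle("set_stimulation", authenticated=True, amplitude_ma=50.0))
print(gate.handle("set_stimulation", authenticated=True, amplitude_ma=1.0))
```

The design choice worth noting is that the failure path is explicit: after one suspicious request, the gate refuses further stimulation commands until something outside this sketch (for example, a clinician) restores normal mode.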

FDA guidance for implanted BCIs already treats device design and testing as serious medical matters rather than consumer convenience issues (FDA). Cybersecurity has to be built into that same seriousness.
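One item from the list above, signed firmware and verified updates, can be illustrated with Python's standard library. This is a sketch under a loud assumption: it uses an HMAC with a shared secret as a stand-in for a signature, because that is available without extra packages. A real device would more plausibly use an asymmetric signature scheme so that the device holds only a public verification key. The function names and the `installed` dictionary are hypothetical.

```python
import hashlib
import hmac


def sign_firmware(image: bytes, key: bytes) -> bytes:
    """Vendor side: compute an authentication tag over the firmware image."""
    return hmac.new(key, image, hashlib.sha256).digest()


def verify_and_install(image: bytes, tag: bytes, key: bytes,
                       installed: dict) -> bool:
    """Device side: install the image only if the tag verifies."""
    expected = hmac.new(key, image, hashlib.sha256).digest()
    # compare_digest avoids leaking information through comparison timing.
    if not hmac.compare_digest(expected, tag):
        return False  # keep running the previously installed firmware
    installed["image"] = image
    return True


# Illustrative values only; a real key would never be hard-coded.
key = b"demo-shared-secret"
firmware = b"firmware v2 contents"
tag = sign_firmware(firmware, key)

device = {"image": b"firmware v1 contents"}
print(verify_and_install(firmware, tag, key, device))           # genuine update
print(verify_and_install(firmware + b"!", tag, key, device))    # tampered update
```

The tampered image is refused and the device keeps the last verified firmware, which is the behavior the list item is asking for.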

Why user rights matter as much as technical controls

Security is not only a technical problem. It is also a governance problem.

If a company can legally collect broad neural data for vague future use, then even excellent engineering may still produce a bad privacy outcome. If a worker is pressured to wear neural monitoring for productivity, then the problem is not just hacking. It is coercive design.

That is why neuroprivacy has to include rights:

- the right to know what is collected

- the right to refuse non-essential collection

- the right to disconnect

- the right to delete or restrict stored neural data where possible

- the right to meaningful explanation when algorithms classify or infer mental states

WHO’s neurotechnology landscape report and UNESCO’s ethical framework both point toward this larger governance view. The danger is not only intrusion by criminals. It is also the routine normalization of excessive access to brain-derived data (WHO, UNESCO).

What “ad-blocking” your brain should really mean

The phrase is a useful metaphor if it stays practical.

Ad-blocking your brain should not mean pretending perfect protection exists. It should mean refusing unnecessary data extraction and insisting on narrow, inspectable interfaces. In practice, that would look like:

- only sharing the minimum neural data needed for the task

- limiting background collection

- separating medical use from marketing use

- disabling remote features that are not essential

- demanding security updates and transparent logs

- choosing systems with real offline or local-control modes when possible

A simple comparison helps. Ad blockers work because they stop unwanted requests before they become an attention problem. Neural privacy should work the same way. The best defense is not waiting to clean up abuse after the fact. It is preventing unnecessary access in the first place.
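To make the ad-blocker analogy concrete, here is a small sketch of a request filter that blocks by default and records every decision. It assumes data requests arrive labeled with a requester, a purpose, and a data type; those labels, the `ConsentPolicy` class, and the example names are hypothetical. The user approves specific purpose and data-type pairs, and everything else is refused before any data is released.

```python
from dataclasses import dataclass, field


@dataclass
class ConsentPolicy:
    # (purpose, data_type) pairs the user has explicitly approved.
    approved: set = field(default_factory=set)
    # Every decision is recorded so the user can inspect it later.
    log: list = field(default_factory=list)

    def request(self, requester: str, purpose: str, data_type: str) -> bool:
        """Allow a request only if its purpose and data type were approved."""
        allowed = (purpose, data_type) in self.approved
        decision = "allowed" if allowed else "blocked"
        self.log.append((requester, purpose, data_type, decision))
        return allowed


# The user has approved one narrow medical use and nothing else.
policy = ConsentPolicy(approved={("medical", "motor_intent")})

print(policy.request("clinic_app", "medical", "motor_intent"))
print(policy.request("ad_sdk", "marketing", "motor_intent"))
print(policy.request("ad_sdk", "marketing", "attention_score"))

for entry in policy.log:
    print(entry)
```

Because approval is keyed on purpose as well as data type, the same signal that is released for medical use is still blocked for marketing, which mirrors the separation described in the list above.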

Why neuroprivacy will become a mainstream policy issue

At first this field looks niche. It will not stay niche if neurotechnology spreads across medicine, work, gaming, and consumer devices.

Once brain-linked systems begin collecting richer cognitive or affective signals, regulators will be forced to decide whether existing privacy categories are enough. UNESCO’s language about mental privacy is important because it suggests ordinary data-protection logic may be too weak for brain-derived information that touches thought, identity, and inner response.

That means neural cybersecurity is likely to become part of a much larger debate about what kinds of access to the mind should be off limits by default.

The hardest part is trust, not just code

Even perfect cryptography would not solve the whole problem.

Users also need to trust the manufacturer, the clinic, the update process, the cloud pipeline, and the legal environment around the device. If any part of that chain is weak, then brain-interface security becomes a system problem rather than a purely technical bug.

This is why neural cybersecurity will probably evolve more like aviation safety or medical-device assurance than like ordinary app security. The standards will need to be stricter because the cost of failure is more personal.

There is also a practical governance lesson here. Security promises that cannot be independently checked are weak promises. Users, clinicians, and regulators will need evidence that protections are real, current, and testable rather than buried in marketing language. Verifiable safeguards matter.
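One familiar way to make a record checkable by outsiders is a hash-chained log, where each entry's hash depends on the entry before it. The sketch below is a minimal illustration using the standard library; the event fields and function names are hypothetical. It also has a known limit, stated as an assumption: whoever controls the entire log could rebuild the chain, so the latest hash would need to be published or held by an independent party for the check to mean much.

```python
import hashlib
import json

GENESIS = "0" * 64  # fixed starting value for the chain


def entry_hash(prev_hash: str, event: dict) -> str:
    """Hash the event together with the previous entry's hash."""
    payload = json.dumps(event, sort_keys=True).encode("utf-8")
    return hashlib.sha256(prev_hash.encode("utf-8") + payload).hexdigest()


def append(log: list, event: dict) -> None:
    """Add an event, linking it to the entry before it."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"event": event, "hash": entry_hash(prev_hash, event)})


def verify(log: list) -> bool:
    """Recompute the chain; any edited or reordered entry breaks it."""
    prev_hash = GENESIS
    for record in log:
        if record["hash"] != entry_hash(prev_hash, record["event"]):
            return False
        prev_hash = record["hash"]
    return True


audit_log = []
append(audit_log, {"actor": "clinic_app", "action": "read", "data": "motor_intent"})
append(audit_log, {"actor": "vendor", "action": "firmware_update", "data": "v2"})
print(verify(audit_log))

# Quietly rewriting history is detectable.
audit_log[0]["event"]["actor"] = "someone_else"
print(verify(audit_log))
```

An auditor who holds only the final hash from an earlier date can rerun `verify` and compare, without having to trust the party that stores the log.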

Final Thoughts

If brain interfaces become common, people will not only ask what they can do. They will ask who else can access them, shape them, or profit from them. That is the real meaning of neural cybersecurity.

The strongest defense will not be a single magic technology. It will be a layered approach: minimal data collection, strong authentication, trusted updates, clear user rights, and strict limits on how neural data can be used. In that sense, “ad-blocking your own brain” is a useful ambition. It means insisting that the future of BCI begins with boundaries, not with unlimited access to the most private signals a person can produce.