If you imagine the future as a cable running from the cloud straight into the cortex, the question sounds simple: can the human brain handle high-speed data downloads? The useful answer is less cinematic and more practical. What matters is not only how much signal a device can push into nervous tissue, but whether the brain can interpret, adapt to, and safely use that signal. This article explains what current neural data transfer can already do, where brain bandwidth actually gets constrained, and why “more input” does not automatically become understanding.

Why the “brain download” metaphor breaks so quickly

The phrase makes the brain sound like storage hardware. That is the first mistake.

A hard drive can receive bits without caring what they mean. A brain does not work that way. Neural systems are constantly filtering, predicting, and integrating signals across sensation, attention, memory, and action. A digital file can be copied at high speed because the destination already knows exactly how each bit should be written and read back. The brain is different. A stream of electrical stimulation is only useful if it maps onto patterns the nervous system can interpret.

A simple comparison helps. Sending data to a laptop is a write operation. Sending signals to a brain is closer to trying to teach a living system a new code while it is still busy seeing, listening, balancing, remembering, and making decisions.

That distinction matters because popular coverage often collapses very different goals into one phrase. A neural interface may help decode intention so a person can move a cursor. It may deliver artificial sensation to improve grasp control. It may someday support communication or memory-related functions. None of those is the same as instantly downloading a language, a combat game strategy, or a semester of physiology notes.

WHO’s brain-health framing is useful here because it treats the brain as a system spanning cognitive, sensory, behavioral, motor, and social-emotional domains, not as a passive storage target (WHO). That is exactly why the “brain download” metaphor fails so fast. Throughput is only one small part of the problem.

What current neural data transfer can already do

The best way to stay grounded is to start with what BCIs already do in real research and clinical settings.

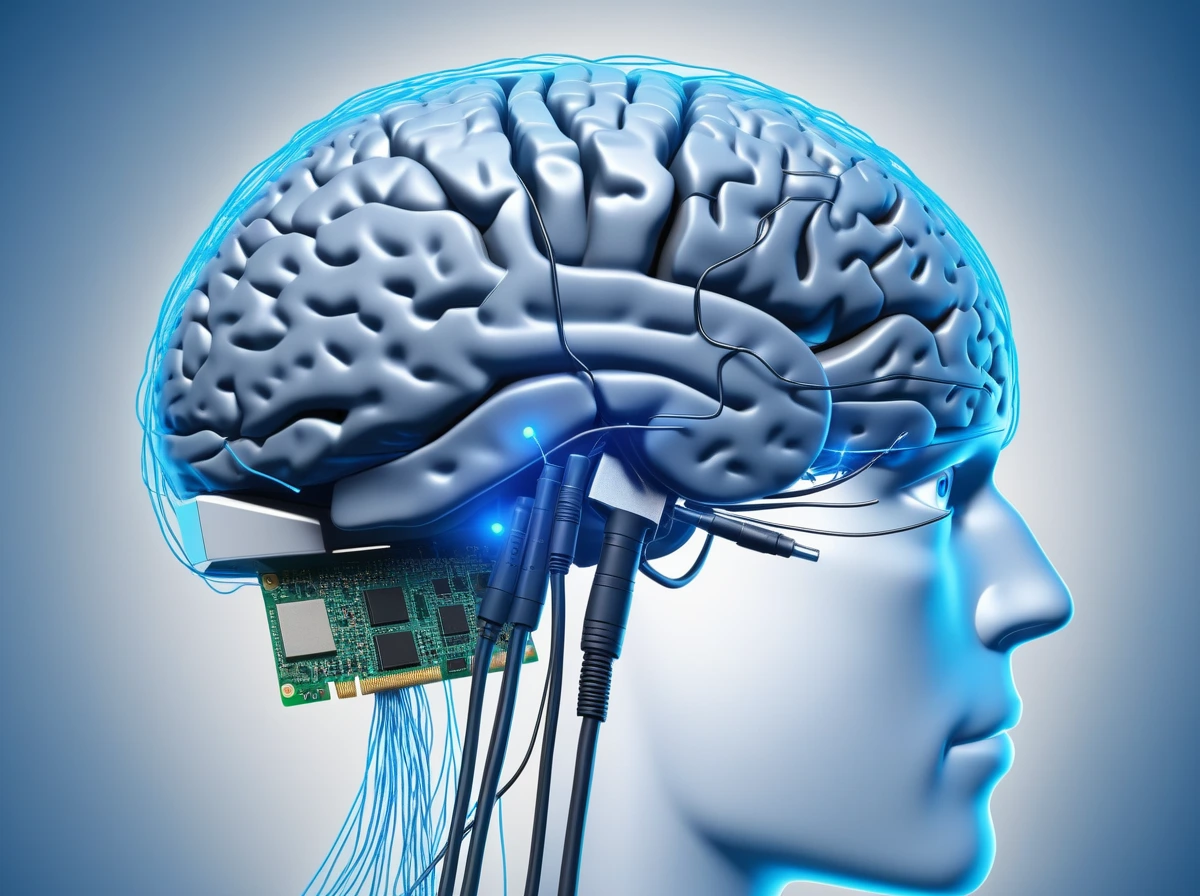

The FDA’s guidance on implanted BCIs describes these systems as neuroprostheses that interface with the nervous system to restore lost motor or sensory capabilities in patients with paralysis or amputation (FDA). That is already a meaningful kind of neural data transfer. It shows that brain activity can be decoded into action and that artificial stimulation can sometimes send useful information back.

Motor control is the clearest example. A person can generate neural signals that a system translates into movement of a cursor, robotic arm, or assistive device. That is not science fiction. It is a narrow, task-specific interface that works through calibration, repeated use, and careful decoding.

Sensory feedback gets closer to what most people imagine as “data going in.” In a human study on intracortical microstimulation, researchers found that stimulation-based feedback improved grasp-force accuracy compared with relying on vision alone (PubMed). That is an important example because it shows that digital input can become behaviorally useful. At the same time, it shows how specific the result is. The added signal helped with one controlled task. It did not amount to general knowledge transfer.

Recent human ICMS safety work points in the same direction. A decade-scale study reported that stimulation could consistently evoke hand-localized sensations across participants, with no serious stimulation-related adverse events reported in that early-feasibility setting (PubMed). That is encouraging evidence for restored sensation. It is not evidence that the brain is ready for broadband-style information dumping.

Brain bandwidth is not just about speed

When people hear “brain bandwidth,” they usually picture a throughput ceiling, as if the main issue were whether neurons can accept enough incoming traffic. That is too simple.

BCI researchers do use information transfer rate metrics, and those metrics matter. They help compare how quickly and accurately a user can control a system. But a performance metric inside a BCI task is not the same thing as measuring how fast the brain can absorb meaning, memory, or expertise. The classic limitation is not merely speed. It is speed plus error, training burden, and interpretation cost (PMC).
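To make “speed plus error” concrete, here is a minimal sketch of one widely used BCI metric, the Wolpaw information transfer rate. It assumes a task with N equally likely targets and errors spread evenly across the wrong targets. The function names and the example numbers are illustrative and do not come from any study cited in this article.

```python
import math


def wolpaw_bits_per_selection(n_targets: int, accuracy: float) -> float:
    """Bits conveyed by one selection under the Wolpaw ITR formula.

    B = log2(N) + P*log2(P) + (1 - P)*log2((1 - P) / (N - 1))
    Conventionally applied when accuracy is at or above chance (1/N).
    """
    if n_targets < 2:
        raise ValueError("need at least two targets")
    if not 0.0 <= accuracy <= 1.0:
        raise ValueError("accuracy must be between 0 and 1")

    bits = math.log2(n_targets)
    # The P*log2(P) term tends to 0 as P approaches 0, so skip it there.
    if accuracy > 0.0:
        bits += accuracy * math.log2(accuracy)
    # The (1-P) term likewise vanishes at perfect accuracy.
    if accuracy < 1.0:
        bits += (1.0 - accuracy) * math.log2((1.0 - accuracy) / (n_targets - 1))
    return bits


def itr_bits_per_minute(n_targets: int, accuracy: float,
                        seconds_per_selection: float) -> float:
    """Scale bits per selection by how many selections fit in a minute."""
    if seconds_per_selection <= 0:
        raise ValueError("seconds_per_selection must be positive")
    per_selection = wolpaw_bits_per_selection(n_targets, accuracy)
    return per_selection * 60.0 / seconds_per_selection


if __name__ == "__main__":
    # Hypothetical four-target task, one selection every two seconds.
    for acc in (1.0, 0.9, 0.7, 0.25):
        rate = itr_bits_per_minute(4, acc, 2.0)
        print(f"accuracy={acc:.2f} -> {rate:.1f} bits/min")
```

With four targets and a selection every two seconds, perfect accuracy works out to 60 bits per minute, while chance-level accuracy (25%) works out to zero. The selection speed is identical in both cases; only the error rate changes. Even then, the number describes control of a task, not how quickly the brain absorbs meaning.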

A comparison makes the difference clearer. Imagine subtitles in a language you do not know. The screen can deliver plenty of information per second. The bottleneck is not the display. The bottleneck is whether the symbols mean anything to you. Neural data transfer works the same way. A richer stream helps only if the code is meaningful, stable, and integrated with what the user already knows.

Encoding is the first bottleneck. If a stimulation pattern does not line up with existing neural organization, the person may experience it as vague, noisy, or ambiguous.

Attention is the second bottleneck. The brain is not a passive pipe. It has to allocate limited processing resources. A fast artificial input stream may compete with vision, touch, balance, working memory, and decision-making.

Meaning is the third bottleneck. Raw signals are not the same as concepts. A carefully timed tactile pulse might improve a grip task. That is very different from delivering the meaning of a textbook chapter or the flexible intuition behind a surgical technique.

Neuroplasticity helps, but it is not instant

This is where people often jump too quickly from “the brain can adapt” to “the brain can be loaded like software.”

Neuroplasticity is real, and it is one of the strongest reasons not to dismiss BCIs. Reviews on brain-computer interfaces and plasticity emphasize that adaptation is central to successful use. The person learns. The decoder learns. Performance improves with repeated interaction (PubMed).

But plasticity is not magic. It is usually slow, effortful, and task dependent.

A practical comparison is rehabilitation versus file transfer. Rehabilitation works partly because the nervous system can reorganize through training. Nobody mistakes that for an instant upload. The same caution applies to digital-to-brain interfaces. Even if future systems become much better at delivering precise stimulation patterns, users may still need days, weeks, or months of practice before those patterns become intuitive.

That matters because a “high-speed” signal can outrun the user’s adaptation rate. In plain language, a device may be capable of delivering more input than the person can meaningfully learn from at that moment.
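A toy calculation can illustrate the point. The sketch below is not a physiological model: it simply assumes that usable throughput is capped by whichever is lower, the device's delivery rate or the user's currently learned capacity, and that learned capacity closes the gap to some ceiling by half every fixed number of training days. Every name and number in it is hypothetical.

```python
def learned_capacity(day: float, start: float, ceiling: float,
                     days_to_halve_gap: float) -> float:
    """Toy learning curve: the gap to the ceiling halves every
    `days_to_halve_gap` days of practice (an assumption, not data)."""
    gap = ceiling - start
    return ceiling - gap * 0.5 ** (day / days_to_halve_gap)


def usable_rate(device_rate: float, capacity: float) -> float:
    """Input the user can actually use: the smaller of what the device
    delivers and what the user has learned to interpret."""
    return min(device_rate, capacity)


if __name__ == "__main__":
    device_rate = 100.0  # arbitrary units; the device is never the limit here
    for day in (0, 10, 20, 40):
        capacity = learned_capacity(day, start=1.0, ceiling=9.0,
                                    days_to_halve_gap=10.0)
        print(f"day {day:>2}: usable = {usable_rate(device_rate, capacity):.1f}")
```

In this toy setup, raising `device_rate` changes nothing, because the learned capacity is the binding constraint on every day shown. Only practice moves the usable figure.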

WHO’s neurotechnology landscape report supports this grounded view. It notes that BCI and related neurotechnologies have progressed rapidly, while adoption in real human-health settings remains limited and challenging (WHO). One plausible reason for that gap is that scaling human interpretation is harder than scaling a demo.

Where neural overload risk would actually show up

If genuinely high-rate neural input ever becomes normal, overload will probably appear in a few predictable places first.

Cognitive overload comes before dramatic science-fiction failure

The first limit is mental workload. Even ordinary sensory life can swamp attention when too much arrives at once. Artificial input would have to compete with natural perception, inner speech, memory retrieval, and decision-making. A user might technically receive a fast stream while still failing to integrate it into useful judgment.

A concrete comparison helps. Think about trying to learn from five overlapping tutorial windows while still playing the game. The screen can show all the information. Your brain still has to select, prioritize, and retain it. More visible information is not the same as more usable skill.

Sensory ambiguity can turn extra input into noise

The second limit is signal clarity. Artificial stimulation may create a sensation, but the sensation has to be distinct and interpretable enough to guide behavior. Human ICMS studies are promising precisely because they show that carefully mapped stimulation can evoke useful tactile percepts. That is encouraging. It is also a reminder that the field is still working hard to make limited sensations stable and specific.

That point is easy to miss in hype-heavy coverage. The problem is not simply “how do we push more bits in?” The problem is “how do we push signals in that the brain can reliably treat as something meaningful?”

Device and tissue safety still set hard boundaries

The third limit is biological and clinical. The FDA’s guidance exists because implanted BCIs are medical devices with real risks and serious study-design constraints. Long-term tissue response, device reliability, electrical safety, and human factors are not footnotes. They are part of the central engineering problem (FDA).

Even in encouraging ICMS work, the lesson is not “the brain can take unlimited input.” The lesson is that narrow, carefully controlled forms of stimulation can be safe and useful enough to justify continued progress. That is a very different claim.

What realistic future progress would look like

A realistic future probably will not arrive as a giant “download knowledge” button.

It is more likely to appear through narrow improvements that are easier to encode, test, and verify. Better sensory feedback for prosthetics. More reliable communication channels for people with paralysis. Closed-loop systems that adjust stimulation to the user’s state. Training systems that help the brain learn structured patterns more efficiently in a limited domain.

That path is less dramatic than the movie version, but it also fits the evidence better.

The strongest current case for neural data transfer is not “we can upload anything.” It is “we can sometimes send highly structured digital signals into the nervous system in ways that improve specific functions.” That is already important. It could change rehabilitation, assistive technology, and human-machine control. It just should not be confused with frictionless mind downloading.

Final Thoughts

The question is not whether neurons can receive artificial signals. They clearly can. The deeper question is whether those signals arrive in a form the brain can safely interpret, adapt to, and turn into reliable performance.

That is why the phrase “high-speed data downloads” hides more than it explains. Current BCIs already prove that neural data transfer is real. They also prove that the hard part is not raw transmission alone. The hard part is coding, calibration, plasticity, attention, and safety. So the short answer is yes, the brain can handle some digitally delivered information. But no, it does not handle it the way a hard drive handles files, and that difference is where the future of neural interfaces will be decided.