Have you ever cringed when you saw someone stub their toe? Or felt a sudden surge of excitement watching an athlete cross a finish line? That instantaneous, visceral connection isn’t just your imagination running wild. Neuroscientists suspect it is the work of a specialized neurological system that helps us empathize. Now, imagine trying to teach that instinct to a machine built on cold, hard silicon.

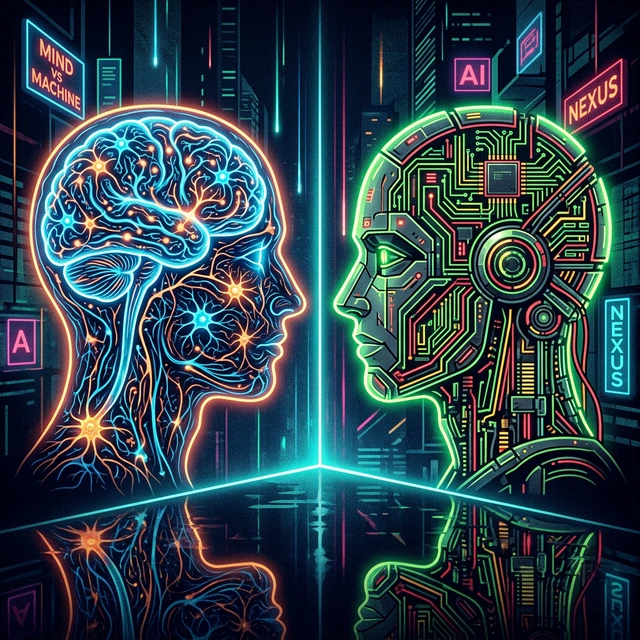

As developers and researchers push artificial intelligence to its limits, the conversation keeps veering back to one fundamental question: what makes us human? In the relentless pursuit of Artificial General Intelligence (AGI), we are not just building smarter algorithms; we are holding up a mirror to our own minds. By examining the stark contrast between biological mirror neurons and the mathematical structures of microchips, we uncover deep truths about human consciousness, empathy, and cognition. If you want to understand the modern frontier of cognitive science, look no further than the server racks attempting to replicate the human mind.

The Biological Mirror: How Humans Connect

To understand what we are trying to build, we must first understand what we already have. In the early 1990s, a team led by Giacomo Rizzolatti at the University of Parma, studying the brains of macaque monkeys, stumbled upon a remarkable discovery. They found that certain neurons fired not only when the monkey performed an action, such as grasping a peanut, but also when the monkey watched a researcher perform the same action. These cells came to be known as “mirror neurons.”

Mirror neurons act as biological simulators: they fire in a pattern that reflects an action in the external world as though the observer were performing it. In humans, where evidence for a comparable system comes mostly from brain-imaging studies, this system is thought to be closely tied to our capacity for empathy and social learning. Many researchers describe it as a neurological bridge between self and other, one that may help us intuit intentions, share emotions, and learn complex skills simply by watching. When a child learns to tie their shoes by observing a parent, their mirror neuron system is likely hard at work, mapping visual input onto motor plans before a single muscle twitches.

Evolution spent millions of years shaping this process. Our brains operate in a chaotic, wet, chemical environment full of noisy signals, yet this system manages to cut through the noise and forge an immediate connection. This biological “hardware” doesn’t need massive datasets to figure out how someone feels; it appears to simulate the sensation internally. It is an intuitive, near-instantaneous response woven into the fabric of our nervous system, and many scientists see it as part of the bedrock of human society, cooperation, and morality. We are not just thinking machines; we are feeling machines, intricately wired to reflect the world around us.

The Silicon Mind: Pattern Recognition vs. Empathy

Now, consider the artificial neural networks (ANNs) attempting to replicate human thought. Stripped of the romanticism of science fiction, the “mind” of an AI is rooted in microchips, electricity, and mathematics. The silicon architecture operates fundamentally differently from biological synapses. Where our brains use a complex cocktail of neurotransmitters to modulate signals, machine learning models use simple mathematical weights and biases, passing numbers through layers of artificial neurons.
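To make the “weights and biases” idea concrete, here is a minimal sketch in plain Python of one pass through a tiny artificial neural network. The network size, the sigmoid nonlinearity, and every parameter value are hypothetical choices for illustration; large modern models learn their parameters from data and have billions of them, but the basic operation (multiply, add, squash, repeat) is the same in spirit.

```python
import math

def sigmoid(x: float) -> float:
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))

def dense_layer(inputs, weights, biases):
    """One layer of artificial neurons: each neuron takes a weighted sum
    of its inputs, adds a bias, then applies a nonlinearity."""
    outputs = []
    for neuron_weights, bias in zip(weights, biases):
        total = sum(w * x for w, x in zip(neuron_weights, inputs)) + bias
        outputs.append(sigmoid(total))
    return outputs

def forward(inputs, layers):
    """Pass numbers through each layer in turn; that is the whole computation."""
    activations = inputs
    for weights, biases in layers:
        activations = dense_layer(activations, weights, biases)
    return activations

# Hypothetical, hand-picked parameters for a 2-input, 2-hidden, 1-output network.
# A trained model would learn these values from data instead.
LAYERS = [
    ([[0.5, -0.4], [0.3, 0.8]], [0.1, -0.2]),  # hidden layer: 2 neurons
    ([[1.2, -0.7]], [0.05]),                   # output layer: 1 neuron
]

print(forward([1.0, 0.0], LAYERS))
```

Notice that nothing in the loop resembles a neurotransmitter: every “signal” is just a floating-point number.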

In a Large Language Model (LLM), there is no hardware dedicated to “feeling.” There are only parameters optimized to predict the next word (more precisely, the next token) in a sequence, based on patterns in vast amounts of training data. When an AI writes a comforting sentence to a user, it isn’t simulating their pain; it has merely computed that words like “I understand your pain” are statistically likely to follow the user’s input. It is exceptional at pattern recognition but utterly devoid of inner life.
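As a deliberately crude illustration of “statistically likely to follow,” the sketch below counts which word follows which in a tiny invented corpus and predicts the most frequent follower. This is a bigram model, not how LLMs actually work: real LLMs use transformer networks conditioned on long stretches of context. The corpus and the helper names are hypothetical. Still, the core idea, picking a continuation because the data makes it likely, carries over.

```python
from collections import Counter, defaultdict

def train_bigrams(corpus):
    """Count, for every word, which words follow it and how often."""
    follows = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for current, nxt in zip(words, words[1:]):
            follows[current][nxt] += 1
    return follows

def predict_next(follows, word):
    """Return the most frequent follower of `word`, or None if unseen."""
    candidates = follows.get(word.lower())
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]

# A tiny made-up corpus (hypothetical); real models train on vastly more text.
CORPUS = [
    "i understand your pain",
    "i understand your frustration",
    "i understand your pain completely",
    "i hear you",
]

model = train_bigrams(CORPUS)
print(predict_next(model, "your"))  # → pain
```

The program “says” pain only because that word followed “your” more often than anything else in its data.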

Can We Hard-Code Compassion?

Many in the field of AI and cognitive architecture ask whether we can build an analog to mirror neurons in silicon. Can we design an artificial agent that not only predicts an action but internally simulates the “experience” of the user it interacts with?

Some researchers are trying to do just that by building neural architectures in which an AI model maintains an internal state representing the mental state of its human counterpart, an approach often called machine Theory of Mind. By giving the AI explicit feedback mechanisms that align this internal state with human emotional markers, they hope to simulate empathy.

Yet this remains a version of what philosopher David Chalmers called the “hard problem” of consciousness. An algorithm can be trained on millions of images of human faces to label sadness with impressive accuracy, yet it still does not know sadness. The microchip doesn’t care; it simply categorizes pixels. We can build phenomenally complex simulation layers that mimic the outputs of mirror neurons, but creating the actual inner experience, the “qualia” of empathy, remains entirely elusive. The silicon mind simulates the behavior without experiencing the state.
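The phrase “it simply categorizes pixels” can be made concrete, too. Assume a trained image classifier has already turned a face photo into three raw scores; the hypothetical sketch below converts those scores into probabilities with a softmax and picks the largest. The label set and the scores are invented for illustration and do not come from any real model.

```python
import math

LABELS = ["happy", "neutral", "sad"]  # hypothetical label set

def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def classify(logits):
    """Pick the label with the highest probability; nothing more happens."""
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    return LABELS[best], probs[best]

# Hypothetical scores a trained network might emit for one face image.
label, confidence = classify([0.2, 1.1, 2.9])
print(label, round(confidence, 2))
```

Whatever “sad” means to us, to the program it is just the list entry whose score happened to be largest.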

What AI’s Limitations Teach Us About Ourselves

Herein lies the profound lesson: observing the limitations of artificial intelligence throws our own humanity into sharp relief. In the quest to build machines that think like us, we’ve discovered exactly how un-machine-like our thinking truly is.

If building AGI with true empathy is so incredibly difficult, it underscores how miraculous our biological hardware is. Humans tend to take their own cognitive abilities for granted. We process nuanced social cues, read body language, and intuit the emotional weather of a room the moment we walk into it. This feels effortless, perhaps in part because systems like the mirror neuron network do the heavy lifting below the threshold of conscious awareness. AI acts as a negative-space mirror: by detailing what AI struggles to do, we map the shape of human uniqueness.

Our struggles with machine consciousness remind us that human intelligence is embodied. We do not exist as pure logical processors floating in a void. Our minds are deeply integrated with our physical forms, our senses, and our neurochemical systems. A machine learning model has no body, no fear of death, no evolutionary history of social dependence. Therefore, its “intelligence” will always be alien compared to ours, regardless of how convincingly it talks.

Beyond the Turing Test: The Next Frontier

For decades, the Turing Test was considered the ultimate benchmark for AI: if a machine could converse so well that a human couldn’t tell it was a machine, it had achieved a form of intelligence. Now that LLMs can pass some versions of the Turing Test, we are quickly realizing that sounding human is entirely different from being human.

The next frontier of AI philosophy isn’t whether machines can trick us into thinking they are empathetic. It is understanding the fundamental boundary between simulated behavior and actual consciousness. More importantly, it means acknowledging that biological empathy, facilitated by organic structures like mirror neurons, may be an emergent property of life itself, one that cannot simply be programmed into a non-biological substrate, no matter how much compute power we throw at it.

The Future: Synergy Over Simulation

We find ourselves at a crucial juncture in history. The realization that AI cannot easily replicate the human “soul”—our empathetic, embodied cognition—should not be seen as a failure of computer science, but as a liberation.

Instead of obsessively trying to build human replicas or mechanical replacements for human interaction, we should pivot toward synergy. AI is magnificent at tasks humans struggle with: processing millions of documents in seconds, finding hidden statistical correlations, and simulating complex physical systems. Humans, on the other hand, are magnificent at the very things AI lacks: moral reasoning, ethical judgment, empathy, and creative leaps of faith.

This leads to the “centaur” model of human-AI collaboration. Just as a centaur combines the strength of a horse with the intellect of a human, the most powerful cognitive systems of the future will combine the raw data-processing power of silicon microchips with the empathetic, intuitive leadership of the human brain. We provide the moral compass and the mirror neurons; the AI provides the computational engine. In the end, the attempt to build an artificial mind didn’t just teach us how to create better computers. It taught us how irreplaceable we truly are.

Join the Conversation

The intersection of artificial intelligence and cognitive neuroscience is moving faster than ever, and understanding it is key to navigating the future. If you want to keep exploring how technology is reshaping our understanding of the human mind, subscribe to our newsletter for more deep dives into the philosophy of AI, neural networks, and the future of human cognition.