If you run a company, build software, or invest in AI startups, mixing up AGI and narrow AI creates expensive confusion. You can overpay for ordinary automation, expect human-level autonomy from systems that still need tight supervision, or miss the places where current AI already delivers value. This guide explains the distinction in plain language, shows where today’s systems actually sit, and gives you a practical way to evaluate products, roadmaps, and investment claims without getting pulled into hype.

AGI and narrow AI solve different kinds of problems

Narrow AI is a specialist

Narrow AI, often called weak AI, is built for a bounded task or a small family of related tasks. In IBM’s widely used industry taxonomy, it is the only form of AI that exists today. That does not make it weak in performance. It means its capability is scoped.

A narrow system can still be exceptional inside its lane. A fraud model can scan more transactions than an analyst. A computer vision model can inspect more units per minute than a human on a production line. A coding assistant can generate boilerplate, tests, or refactor suggestions faster than a developer starting from scratch. That is the same pattern behind many of the AI tools that already outperform humans at specific tasks and are already shaping daily work.

The limit is transfer. A fraud model cannot become a pricing strategist on demand. A forecasting model does not understand contract review. A support bot may answer common requests well, then fail when a customer asks something that falls outside the patterns the system was tuned for. As IBM’s AI taxonomy explains, narrow AI demonstrates intelligence in specialized domains, not across the full range of tasks humans handle.

That is why narrow AI is still the right mental model for most business deployments. Invoice routing, call summarization, quality inspection, code review support, document extraction, and demand forecasting all benefit from systems that work inside clear boundaries. Businesses do not need to apologize for this. A specialist tool that works is often more valuable than a supposedly general tool that does not.

AGI is a hypothetical general problem-solver

AGI, or artificial general intelligence, describes a system that can match or exceed human cognitive performance across a broad range of tasks. The key idea is generality. In plain language, that means the ability to transfer knowledge across domains, learn new tasks in unfamiliar settings, and perform with flexibility closer to a capable human than to a specialized tool.

The easiest way to see the gap is with a comparison. A tax calculator may outperform a person on one narrow task. A strong chief of staff can move from market research to planning, then shift into writing, prioritization, ambiguity resolution, and stakeholder management without bespoke retraining. That kind of cross-domain adaptation is what businesses usually imagine when they hear general intelligence. If you want the broader conceptual backdrop, see our related explainer on AI vs human intelligence.

IBM’s AGI explainer frames the difference directly: narrow AI covers nearly all current systems, while AGI remains hypothetical and would need non-domain-restricted problem solving. That makes AGI strategically important, but operationally tricky. There is no universal benchmark everyone accepts, and no settled date for when such systems might arrive. A deeper definition of the term itself is also covered in our guide to what artificial general intelligence means in practice.

A quick comparison

| Dimension | Narrow AI | AGI |
|---|---|---|
| Task scope | One bounded task or workflow | Broad range of cognitive tasks |
| Transfer | Limited outside training or tooling boundaries | Expected to adapt to new contexts |
| Supervision | Usually needs guardrails and human review | Would require much less task-specific oversight |
| Reliability | Can be strong in a defined lane | Would need reliable performance across many lanes |
| Business meaning | Useful for targeted automation now | Important strategic concept, not a normal product category today |

Why the distinction changes business decisions

This is not just a terminology debate. The label changes how teams budget, how leaders set expectations, how buyers evaluate vendors, and how much risk an organization accepts when it lets software make decisions or take action.

Product and operations planning

If you treat current AI as narrow, you design around task boundaries. That usually leads to better products and better outcomes. You pick one workflow, define success clearly, constrain the inputs, add a human escalation path, and measure error rates over time.

Take customer support. A realistic narrow-AI deployment might classify tickets, summarize conversation history, and draft a response for a human agent to review. That can shorten handle time without pretending the system understands every policy nuance or customer edge case. The same pattern works in software engineering: a code assistant can draft tests or explain a code path, while a human still decides what enters production.

If you treat those systems as if they were AGI, the planning logic changes in dangerous ways. You might assume the support bot can own the full relationship or the coding agent can safely modify production systems with light supervision. That is where performance gaps become operational failures.

Procurement and vendor claims

The distinction also matters when vendors describe products as general, agentic, or AGI-like. A lot of these products are still workflow layers built on top of a foundation model, retrieval system, prompt stack, and a handful of APIs. That can still be useful. It just is not the same thing as general intelligence.

A simple test helps: what happens when the system encounters a task it has not effectively seen before? Does it transfer robustly, or does it degrade once the workflow moves outside the narrow context it was designed for?

This is where many buyers get misled. A single chat interface can write copy, summarize a document, answer a question, and generate code, which makes it feel general. But surface flexibility is not the same as durable competence. For procurement, production evidence matters more than breadth-of-demo theater. A month of workflow-level results is far more meaningful than a dazzling live prompt sequence.
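One way to turn the transfer test into a number during a pilot is to compare acceptance rates on tasks the product was designed for against tasks just outside that scope. The following is a minimal sketch, not a standard metric: the `EvalResult` record, the `transfer_gap` name, and the pilot figures are all hypothetical and exist only for illustration.

```python
from dataclasses import dataclass


@dataclass
class EvalResult:
    """One evaluated task from a pilot (hypothetical record shape)."""
    in_scope: bool  # True if the task resembles what the product was designed for
    passed: bool    # True if a human reviewer accepted the output


def pass_rate(results: list[EvalResult], in_scope: bool) -> float:
    """Share of accepted outputs among tasks with the given scope label."""
    subset = [r for r in results if r.in_scope == in_scope]
    if not subset:
        raise ValueError("no results for this scope label")
    return sum(r.passed for r in subset) / len(subset)


def transfer_gap(results: list[EvalResult]) -> float:
    """In-scope pass rate minus out-of-scope pass rate (larger means weaker transfer)."""
    return pass_rate(results, True) - pass_rate(results, False)


# Made-up pilot log: 10 in-scope tasks (9 accepted), 10 out-of-scope tasks (4 accepted).
pilot = (
    [EvalResult(True, True)] * 9 + [EvalResult(True, False)]
    + [EvalResult(False, True)] * 4 + [EvalResult(False, False)] * 6
)

gap = transfer_gap(pilot)
print(f"in-scope {pass_rate(pilot, True):.0%}, "
      f"out-of-scope {pass_rate(pilot, False):.0%}, gap {gap:.0%}")
```

A large gap does not make a product bad. It suggests the product is a specialist and should be priced, scoped, and supervised as one.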

Risk, governance, and accountability

Risk management changes as autonomy rises. NIST’s AI Risk Management Framework explicitly targets organizations that design, develop, deploy, or use AI systems. That framing matters because risk is not just a model issue. It is a system issue.

With narrow AI, the risk surface is easier to bound. You can ask where the model is used, what kinds of mistakes it makes, and which human role catches those mistakes. A defect detection system might trigger extra inspection. A document extraction model might send uncertain cases to a specialist. The workflow remains legible.
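The "send uncertain cases to a specialist" pattern can be sketched as a simple confidence threshold. This is an illustration under assumptions: the `Extraction` record, the `REVIEW_THRESHOLD` value of 0.85, and the sample invoices are hypothetical, and the sketch assumes the model reports a usable confidence score, which not every system does.

```python
from dataclasses import dataclass


@dataclass
class Extraction:
    """One extracted field plus the model's self-reported confidence (0.0 to 1.0)."""
    document_id: str
    field: str
    value: str
    confidence: float


# Hypothetical cutoff; in practice it would be tuned against measured error rates.
REVIEW_THRESHOLD = 0.85


def route(item: Extraction, threshold: float = REVIEW_THRESHOLD) -> str:
    """Return 'auto_accept' for confident results and 'human_review' otherwise."""
    return "auto_accept" if item.confidence >= threshold else "human_review"


# Made-up batch of extracted invoice fields.
batch = [
    Extraction("inv-001", "total", "1,240.00", 0.97),
    Extraction("inv-002", "total", "88.10", 0.62),
    Extraction("inv-003", "due_date", "2025-03-01", 0.85),
]

queues: dict[str, list[str]] = {"auto_accept": [], "human_review": []}
for item in batch:
    queues[route(item)].append(item.document_id)

print(queues)
```

The design choice here is that the workflow, not the model, decides who catches mistakes. That keeps the risk surface legible even when the model itself is opaque.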

As systems gain more autonomy, the governance problem changes. An AI that drafts code is different from an AI that merges pull requests. An AI that summarizes claims is different from an AI that approves payouts. The more action authority you grant, the less useful vague labels like “smart” become. You need clearer limits, clearer audit trails, and clearer ownership.

Where current AI sits today

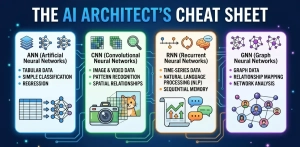

Machine learning’s evolution widened the interface

Modern AI feels broader than earlier machine learning because the interface changed. Classic machine learning often meant one model for one task: churn prediction, spam detection, demand forecasting, image classification. Deep learning widened what models could represent. Foundation models widened what users could ask for through natural language and multimodal interaction.

IBM’s overview of AI is useful here because it places generative AI inside a longer arc of machine learning and deep learning, rather than treating it as a separate kind of intelligence. That evolution has made AI interfaces broader and easier to use, but interface breadth is not the same thing as general intelligence.

This is why today’s tools can feel surprisingly capable while still remaining bounded. A model may write a memo, explain a spreadsheet formula, summarize a meeting, and draft code from one prompt window. That is a large jump in usability. It is not proof that the system can reliably transfer across open-ended business environments with human-like judgment.

Broad capability is not the same as general intelligence

The Google DeepMind paper Levels of AGI offers a stronger framework than hype-driven labels because it separates performance, generality, and autonomy. The authors argue that those factors should be evaluated separately and that shared definitions matter for comparison, risk assessment, and deployment decisions.

That perspective helps explain why modern models are easy to overread. A system might look broad because it can discuss many topics and complete many task types through language. But if the performance is inconsistent, or if success depends on prompts, workflow wrappers, tool routing, retrieval, and human correction, then it still has not crossed the threshold most businesses should call AGI.

Agents make the confusion worse. Add memory, planning loops, and tool use, and a system appears more independent. It can query a CRM, search internal documents, draft follow-ups, and open tickets. That can create real business value. It still may be narrow if it only succeeds inside one carefully bounded workflow. More autonomy does not automatically equal more general intelligence.

What the business data actually says

The adoption numbers are real, but they still describe a narrow-AI economy rather than an AGI economy. In Stanford HAI’s 2025 AI Index, 78% of surveyed organizations said they used AI in 2024, up from 55% in 2023. The same report says 71% used generative AI in at least one business function.

That should change how businesses think about the market. AI is no longer a side experiment. It is part of mainstream operating software. But the same report adds an important constraint: most companies that report financial benefits still describe those gains as relatively low. In other words, adoption is broad, while impact remains uneven and highly dependent on fit, workflow design, and execution.

That pattern is exactly what you would expect from a technology that is already commercially useful but still bounded. It also explains why calling current systems AGI is such a poor business shortcut. It compresses two truths into one slogan: yes, models are getting broader; no, that does not mean they can reliably do most economically valuable work with human-level flexibility.

A practical evaluation framework for entrepreneurs, investors, and developers

Five questions to ask any AI product or thesis

- What is the task boundary?

If the workflow can be defined clearly and measured cleanly, narrow AI may already be enough. That is good news because most business value comes from bounded tasks, not open-ended intelligence.

- How much transfer is real?

Can the system handle genuinely new contexts, or is it mainly remixing patterns inside the same domain? Drafting three kinds of marketing copy is not the same as moving from marketing planning to legal review to incident response.

- How much supervision is still required?

A system that drafts first-pass work may be valuable. A system that acts without review creates a different risk profile. Do not confuse reduced typing with delegated judgment.

- What is the cost of error?

AI that gets a social caption wrong is inconvenient. AI that misstates a contract clause, deploys broken code, or rejects a legitimate claim can create financial or regulatory damage quickly.

- What are the unit economics after human review?

A product can look magical in demo form and still fail the business case once you include verification, exception handling, retries, and integration overhead. If savings disappear after supervision, the system is not delivering AGI-like leverage. It is delivering assisted workflow support.
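The unit-economics question is easier to argue about with arithmetic on the table. The sketch below is a deliberately simplified model: the function name, its parameters, and every figure in the two scenarios are illustrative assumptions, not benchmarks. It ignores integration and retry costs, which would make the assisted path look worse.

```python
def net_saving_per_task(
    manual_minutes: float,
    review_minutes: float,
    exception_rate: float,
    exception_minutes: float,
    hourly_cost: float,
    ai_cost_per_task: float,
) -> float:
    """Estimated saving per task once human review and exception handling are included."""
    cost_per_minute = hourly_cost / 60
    baseline = manual_minutes * cost_per_minute
    # Every task gets reviewed; a fraction also needs manual exception handling.
    assisted_minutes = review_minutes + exception_rate * exception_minutes
    assisted = assisted_minutes * cost_per_minute + ai_cost_per_task
    return baseline - assisted


# Scenario A (made-up numbers): quick review, few exceptions.
light_review = net_saving_per_task(
    manual_minutes=12, review_minutes=3, exception_rate=0.2,
    exception_minutes=15, hourly_cost=60, ai_cost_per_task=0.10,
)

# Scenario B (made-up numbers): same tool, but review is slower and exceptions are more common.
heavy_review = net_saving_per_task(
    manual_minutes=12, review_minutes=8, exception_rate=0.3,
    exception_minutes=15, hourly_cost=60, ai_cost_per_task=0.10,
)

print(f"light review: {light_review:+.2f} per task")
print(f"heavy review: {heavy_review:+.2f} per task")
```

With these assumed inputs, the first scenario saves money on every task and the second loses money on every task, even though the model and the demo would look identical. The difference is entirely in supervision cost.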

What each audience should focus on

Entrepreneurs should usually resist the urge to build for AGI. A tighter strategy is to find one painful workflow where current AI is already good enough to save time, reduce error, or increase throughput. A startup that automates one ugly, high-friction process with strong evaluation can create more value than a broad assistant with weak reliability.

Investors should separate demo surface area from deployment depth. A wide product demo can hint at future upside, but the more relevant questions are retention, workflow fit, switching costs, failure modes, and whether the product performs under real operating constraints.

Developers should engineer with boundaries. Treat models as components inside systems, not as magical replacements for systems. Add retrieval when knowledge freshness matters. Add tests when code quality matters. Add approval flows when action authority matters. Build for observability and rollback because today’s useful AI is still uneven.
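As one illustration of "add approval flows when action authority matters," an agent's proposed actions can pass through a gate that blocks high-risk steps until a named human signs off, and that logs every decision. This is a minimal sketch: the `Risk` levels, the `ProposedAction` shape, the action names, and the reviewer address are hypothetical, and a real system would persist the audit log rather than keep it in memory.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Risk(Enum):
    LOW = 1   # e.g. drafting text that a human will read anyway
    HIGH = 2  # e.g. merging code or approving a payout


@dataclass
class ProposedAction:
    """An action the AI wants to take (hypothetical shape for illustration)."""
    name: str
    risk: Risk
    approved_by: Optional[str] = None  # set once a named human signs off


audit_log: list[str] = []


def execute(action: ProposedAction) -> bool:
    """Run low-risk actions directly; block high-risk ones until a human approves."""
    if action.risk is Risk.HIGH and action.approved_by is None:
        audit_log.append(f"BLOCKED {action.name}: approval required")
        return False
    approver = action.approved_by or "auto"
    audit_log.append(f"EXECUTED {action.name} (approved_by={approver})")
    return True


results = [
    execute(ProposedAction("draft_pr_description", Risk.LOW)),
    execute(ProposedAction("merge_pull_request", Risk.HIGH)),
    execute(ProposedAction("merge_pull_request", Risk.HIGH,
                           approved_by="reviewer@example.com")),
]

for line in audit_log:
    print(line)
```

The gate sits outside the model, so it keeps working the same way when the underlying model is swapped or upgraded.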

What to watch in the future of AI

Signals that would point toward general intelligence

If you want to watch genuine progress toward AGI, focus on transfer and reliability rather than spectacle. A strong signal would be a system that can enter a new domain, learn the task with limited additional training, maintain performance over long task chains, and do so with far less scaffolding than today’s agents require.

Another signal would be stronger performance under uncertainty. Humans handle messy instructions, changing goals, and incomplete information with memory, abstraction, and judgment. Current systems can imitate parts of that behavior, but they often become brittle once the structure disappears. Real movement toward general intelligence would mean less brittleness, not just more fluent output.

What still separates useful agents from AGI

The future of AI will almost certainly include more capable agents. They will plan longer sequences, use more tools, operate across more applications, and become easier to integrate into business systems. That should increase practical usefulness.

But more useful is not the same as general. A revenue operations agent that updates CRM records, drafts outreach, and flags renewal risk may save a team hours each week. It still may not understand the business the way a human operator does. It may not transfer cleanly to finance, compliance, product strategy, or crisis response. That gap is exactly why the distinction matters.

Businesses do not need to downplay progress to stay precise. Current AI can be commercially important and still not be AGI. That is the most useful position for planning.

Final Thoughts

AGI vs Narrow AI is not a semantic side quest. It is a planning distinction. Narrow AI is already useful, already spreading through companies, and already reshaping how teams work. AGI remains strategically important because it changes long-term expectations, risk models, and market structure, but it is not a category businesses can buy off the shelf today. The clearest advantage comes from separating present capability from future possibility, then building, buying, and governing accordingly.