If you are asking about AGI in business management, the useful question is not whether a robot will sit in the corner office. The real question is more practical: how much of management can software already do, and what happens when that software gets better?

That matters because the first version of an “AI boss” is not a humanoid CEO. It is a system that sets shifts, monitors work, scores performance, routes candidates, and nudges managers toward decisions they may not fully understand. In other words, the boss may not be a machine in the legal sense. It may be a manager whose judgment is increasingly shaped by algorithmic management.

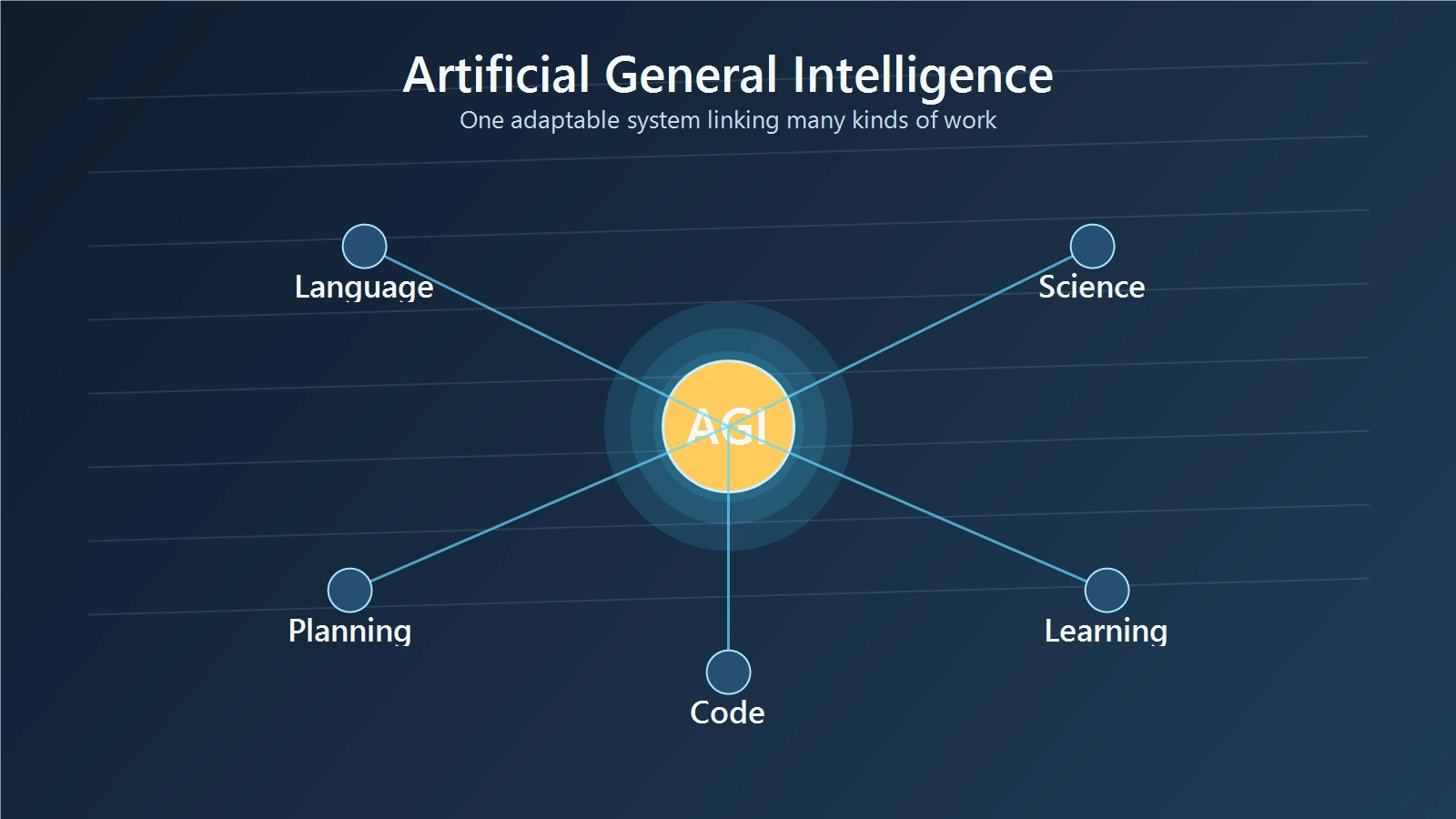

What an AI CEO actually means

An AI CEO is a metaphor, but it points to a real shift. A CEO is supposed to own strategy, allocate capital, set direction, absorb accountability, and represent the company to the outside world. Management software can do pieces of that work. It can prioritize tasks, surface risks, recommend hires, flag outliers, and pressure teams to hit targets. It cannot, at least today, fully absorb responsibility for what happens next.

That difference is not just semantic. The ILO describes algorithmic management as software used to automate aspects of management, including work schedules, work monitoring, and worker targets. It also notes that in extreme cases low- and middle-management functions can be reduced because algorithms can implement rules directly (ILO). In other words, boss-like behavior is being automated one management task at a time, not one job title at a time.

So when people say “AI CEO,” they usually mean one of three things:

- software that advises human leaders

- software that constrains what managers can do

- software that gradually takes over routine management decisions

The first is a tool. The second is a control system. The third is the part business leaders should take seriously.

Why algorithmic management is already here

You do not need AGI to get algorithmic management. You only need digital systems that can collect data, apply rules, and output actions at scale.

The ILO’s work on algorithmic management shows how this already plays out in scheduling, monitoring, and performance control. In platform settings, algorithms coordinate labor transactions. In more traditional settings, they increasingly shape standardization, reporting, and supervision. The report also warns that algorithmic systems can become opaque enough that even the people running them may not fully understand the rules being applied (ILO).

The OECD adds a blunt data point: the use of algorithmic management tools is increasing significantly, with adoption rates of 90% among US firms and 79% among firms in the European Union, according to the OECD’s 2025 civil-service report (OECD). That does not mean every firm has a robot manager. It means that management is already partially automated in a lot of places.

Concrete examples help:

- a warehouse system assigns shifts based on predicted demand

- a recruiting platform screens applicants and ranks them

- a sales dashboard flags who is behind target

- a performance tool suggests who should get more work or more oversight

In each case, a human may still sign off. But the software is doing more of the sorting, judging, and steering than many workers realize.

Which management tasks are easiest to automate

Not all management is equally automatable. The easiest tasks are the ones with structured inputs, repeated patterns, and clear metrics.

Screening is one obvious example. If a company wants to triage resumes, sort support tickets, or assign cases by urgency, software is very good at handling the first pass. The OECD notes that AI can speed HR processes, better target recruitment campaigns, and generate insights for HR managers and senior leaders (OECD).

Monitoring is another. If a team lives in dashboards, tickets, and productivity tools, the software can already see who is active, who is late, who is overloaded, and who is falling behind. The ILO points out that algorithmic management often affects work organization through monitoring and evaluation, not just task assignment (ILO).

Target-setting is also easy to automate because it turns judgment into rules. A system can say that a sales rep needs a certain call volume, a service agent needs a response time, or a logistics worker needs a delivery count. That is efficient, but it can also become a narrow version of management that values what is easy to measure over what is actually important.
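To make the "judgment into rules" point concrete, here is a minimal sketch of a call-volume target expressed as code. Everything in it is hypothetical: the `Rep` record, the `CALL_TARGET` threshold, and the sample numbers are illustrative assumptions, not taken from any real tool or from the reports cited here.

```python
from dataclasses import dataclass


@dataclass
class Rep:
    name: str
    calls_per_day: int  # easy to measure, so it becomes the target
    deals_closed: int   # closer to what matters, but the rule never sees it


# Hypothetical threshold, chosen only for illustration.
CALL_TARGET = 40


def below_call_target(reps, target=CALL_TARGET):
    """Return the names of reps whose daily call volume is under the target."""
    return [r.name for r in reps if r.calls_per_day < target]


reps = [
    Rep("Avery", calls_per_day=55, deals_closed=1),
    Rep("Blake", calls_per_day=30, deals_closed=6),
]

flagged = below_call_target(reps)
print(flagged)  # ['Blake']
```

In this toy data the rule flags the rep who closes the most deals, because deals are not part of the rule. That is the narrowness problem in miniature: the system is consistent, but only about the thing it was told to count.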

The practical comparison is simple:

- humans are better at context, tradeoffs, and nuance

- software is better at repetition, scale, and pattern detection

The risk appears when a company starts mistaking the second advantage for the first.

Why the CEO role is still hard to automate

The CEO role is harder to automate than scheduling or performance scoring because leadership is not just a bundle of metrics.

A CEO owns judgment under uncertainty. That includes choosing strategy, handling conflict, setting culture, answering to the board, representing the company publicly, and taking responsibility when a decision fails. Software can recommend, but it cannot credibly absorb blame.

The ILO’s report captures the danger well. It notes that algorithmic systems can shift human intervention to a higher level of abstraction, and that the rules may even be unknown to those who run the system. It calls that a serious accountability problem (ILO).

That is why the CEO metaphor can mislead. A management system may tell you what to optimize. It cannot decide what kind of company you want to be. For example:

- should growth outrank employee trust?

- should a sales target be raised if it damages quality?

- should the company accept a short-term gain that creates long-term turnover?

Those are leadership questions, not dashboard questions.

There is also a cultural point. A real leader does more than issue instructions. Leaders explain tradeoffs, absorb uncertainty, and build consent. Algorithmic systems can be strict without being wise. They can be consistent without being fair.

What the law already says

The law is already signaling that workplace AI deserves special treatment.

The EU AI Act says AI systems used in employment, workers management, and access to self-employment should be classified as high-risk when they affect recruitment, promotion, termination, task allocation, monitoring, or evaluation. The reason is straightforward: these systems can affect future career prospects, livelihoods, and workers’ rights (EU AI Act recital 57).

That matters for managers because it means workplace AI is not legally treated like a harmless productivity toy. If a system helps decide who gets hired, who gets promoted, who gets monitored, or who gets cut loose, the compliance bar rises.

The OECD pushes the same logic from the management side. It recommends transparency, explanation, the ability to contest automated decisions, and human accountability for final decisions. It also warns that AI can reduce manager autonomy, hard-code historical bias, and intensify surveillance if it is used to optimize productivity too aggressively (OECD).

The comparison with ordinary software is important. A calendar app is not a high-risk employment system. A tool that shapes hiring, promotion, firing, or work allocation is much closer to the center of employment law and workplace rights.

How managers can survive an algorithmic boss

The safest strategy is not to fight software blindly. It is to stay useful where software is weakest.

First, become the reviewer, not the rubber stamp. If a system recommends a hire, a target, or a disciplinary action, ask what data drove the result and what it missed. The OECD specifically calls for well-qualified humans interpreting results and making the final decisions (OECD).

Second, demand explainability and contestability. If nobody can explain why a tool produced a recommendation, it is not ready to govern people. Managers who understand the model’s inputs, limitations, and failure modes stay more valuable than managers who only repeat the output.

Third, protect team autonomy. The OECD warns that AI tools can devalue expertise, reduce motivation, and increase stress when they are used to direct how people spend their time or monitor their work too closely. In other words, the more the software behaves like a tiny dictator, the worse it may perform in the long run (OECD).

Fourth, learn to translate between business goals and system rules. If the model is optimized for speed, but the company actually cares about quality and retention, someone has to say so. That person is usually the human manager.

Fifth, keep human judgment visible. Document overrides. Explain why you accepted or rejected a recommendation. Build a habit of checking whether the machine is improving the decision or just making it faster.
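One way to keep overrides visible is a simple decision log. The sketch below is an assumption about how a team might record this, not a description of any particular product: the `Decision` record, its field names, and the `override_rate` helper are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Decision:
    subject: str         # who or what the decision is about
    recommendation: str  # what the system suggested
    final_action: str    # what the human actually decided
    reason: str          # the manager's explanation, written at decision time
    decided_at: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)
    )

    @property
    def overridden(self) -> bool:
        """True when the human did something other than the recommendation."""
        return self.final_action != self.recommendation


def override_rate(log):
    """Share of logged decisions where the human overrode the system."""
    if not log:
        return 0.0
    return sum(d.overridden for d in log) / len(log)


log = [
    Decision("candidate-17", "reject", "interview",
             "Career gap is explained by caregiving leave"),
    Decision("candidate-18", "interview", "interview",
             "Agreed with the ranking after reading the application"),
]

print(override_rate(log))  # 0.5
```

An override rate near zero can be a warning sign of rubber-stamping, while a very high rate suggests the tool is not adding much. Either way, the recorded reasons are what let someone review later whether the machine improved the decision or only sped it up.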

In practice, the best managers in an AI-heavy workplace will be the ones who can do three things well:

- interpret data without worshiping it

- protect people from bad automation

- use software to extend judgment instead of replacing it

Deep Dive: Reclaiming Your Strategic Edge

If the survival strategy for 2026 is to stay useful where software is weakest, then the most important skill to build is strategic discernment. Most managers treat AI as a way to get tasks done faster; the leaders who survive will treat it as a way to think better.

Featured Resource: The AI-Driven Leader

For those looking for a concrete playbook on how to manage under the “algorithmic boss,” The AI-Driven Leader by Geoff Woods is a practical manual for the current era. It moves beyond the hype of chatbots and focuses on how executives can use AI to sharpen their own decision-making loops.

Where this book aligns with our survival guide is its emphasis on human accountability. It teaches you how to set the direction for the algorithm, rather than just reacting to the dashboard. It is a guide to moving from being a “cog in the system” to being the person who actually steers it.

Best for: Managers and executives who need to bridge the gap between technical data and human strategy.

Key Takeaway: AI can offer a recommendation, but only a leader can offer a reason. This book helps you find that reason.

Final Thoughts

The “AI CEO” headline sounds extreme, but the underlying trend is real. Management is becoming more software-mediated, especially in scheduling, monitoring, recruiting, and performance control.

That does not mean leaders disappear. It means leaders spend more time reviewing systems, explaining decisions, and defending judgment against automation bias. The companies that handle this well will not be the ones that hand over everything to algorithms. They will be the ones that use software to sharpen management without hollowing it out.

If you want the short version, it is this: the next boss may not be an algorithm in name, but the algorithm is already starting to boss the workflow.