Artificial neural networks can sound intimidating when you first meet the term. If you keep seeing ANNs mentioned in articles about machine learning, deep learning, image recognition, or AI basics and still do not have a clear picture in your head, this guide is meant to fix that. You will learn what artificial neural networks are, how they work, what the main parts do, how training happens, and where different network types fit. By the end, terms like ANN, backpropagation, CNN, and deep learning should feel much easier to follow.

What artificial neural networks are in simple terms

An artificial neural network, or ANN, is a model that learns patterns from data and uses those patterns to make a prediction. IBM’s overview of neural networks and NVIDIA’s ANN explainer both describe the same core idea: inputs move through connected layers, the model applies learned weights, and the network produces an output.

The brain analogy is useful as a starting point, but it can also confuse beginners. ANNs are inspired by biological neurons, not copied from them. They are better understood as adjustable pattern-finding systems.

Think about a music app trying to guess whether you will like a song. It might look at clues such as tempo, genre, artist, and your past listening behavior. An ANN learns how strongly each clue should matter. Over time, it becomes better at combining those signals into a useful prediction.

That is the simplest answer to the question of what artificial neural networks are: they are layered systems that learn which input patterns lead to which outputs.

How an ANN is built

To understand neural network basics, it helps to look at the building blocks before worrying about training.

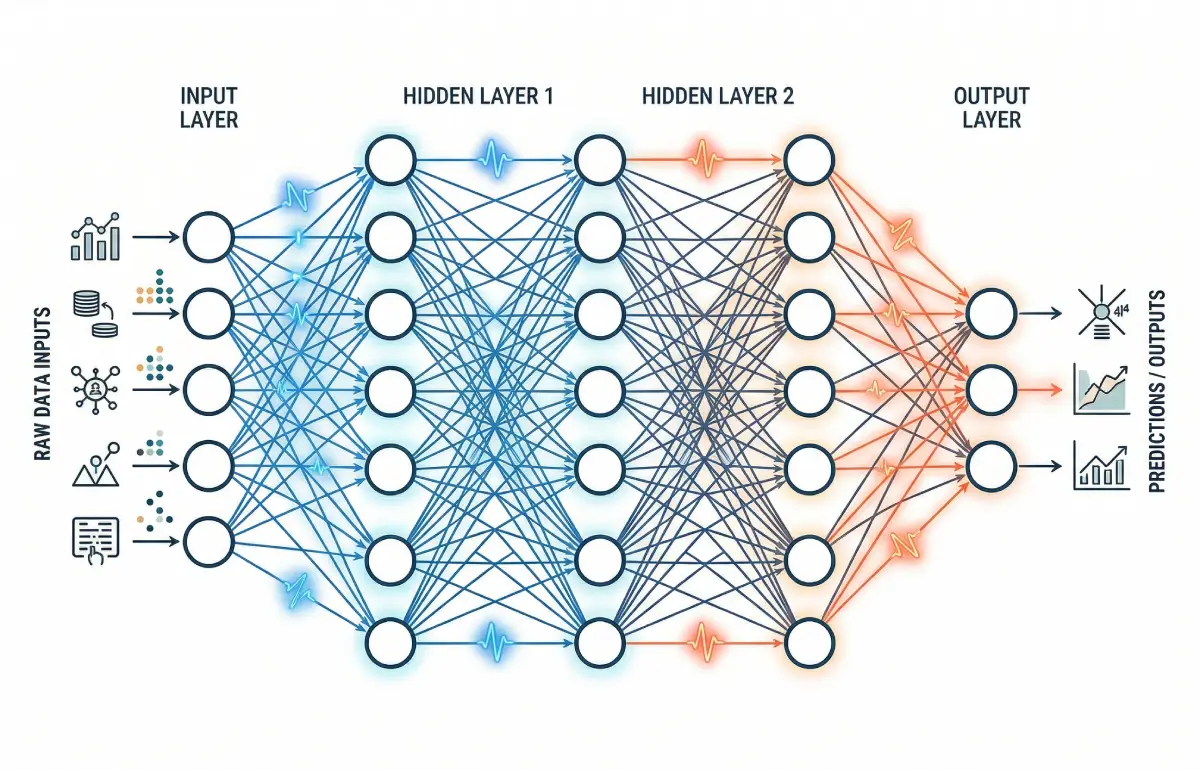

Input layer, hidden layers, and output layer

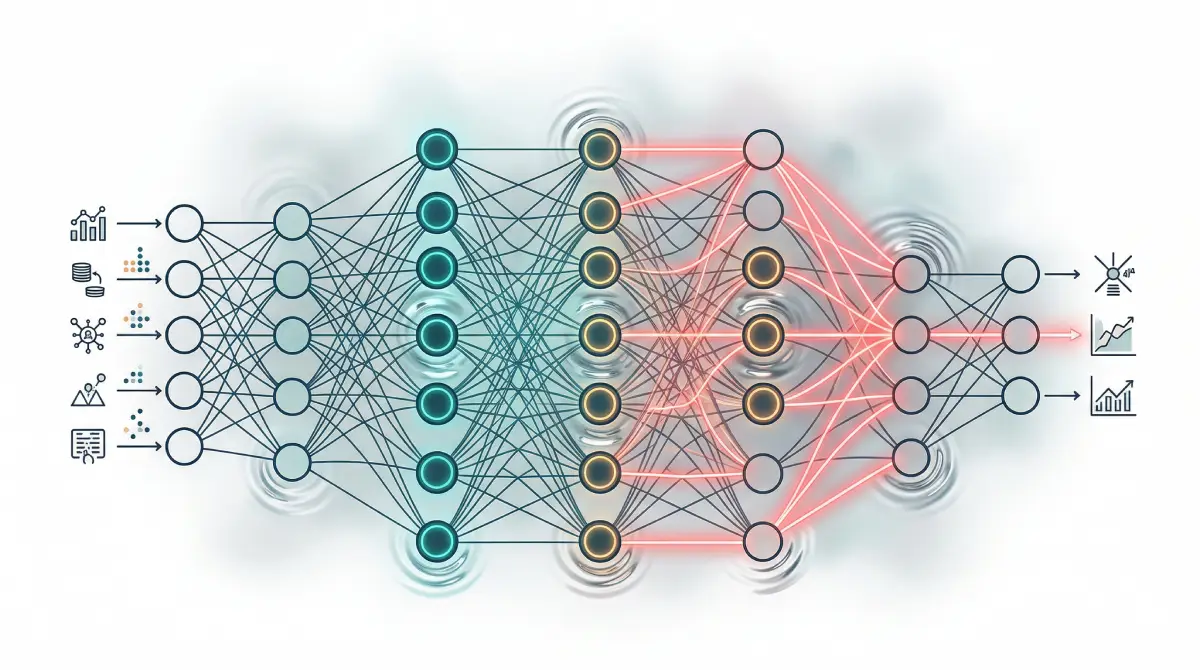

A basic ANN has an input layer, one or more hidden layers, and an output layer.

- The input layer receives the data.

- The hidden layers transform that data.

- The output layer returns the final result.

Take a spam filter as a concrete example. The input layer might receive signals such as whether the sender is unknown, how many links appear in the email, and how often suspicious words show up. Those signals move into hidden layers, where the network combines them in different ways. The output layer may then return a spam score.

Weights and bias in plain language

Every connection in the network has a weight. A weight tells the model how strongly one signal should influence the next step.

If the phrase “claim your prize” is a strong spam clue, the network may learn a larger weight for that feature. If the sender is in your contact list, the network may learn a weight that lowers the spam score.

Bias is another trainable value. It acts like a starting push before all the input evidence has been added together. In practice, weights decide how much each clue matters, while bias helps shift the starting point of the decision.
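
The way weights and bias combine can be sketched as a single weighted sum. The feature names, weights, and bias below are made-up values chosen for illustration, not numbers from a trained model.

```python
# Hypothetical spam-filter features for one email (illustrative values only).
features = {"has_prize_phrase": 1.0, "link_count": 3.0, "sender_in_contacts": 0.0}

# Hypothetical learned weights: positive pushes toward spam, negative away from it.
weights = {"has_prize_phrase": 2.0, "link_count": 0.5, "sender_in_contacts": -3.0}

# The bias shifts the score before any evidence is counted.
bias = -1.0


def weighted_sum(features, weights, bias):
    """Return bias + sum(weight * feature value) over all features."""
    return bias + sum(weights[name] * value for name, value in features.items())


score = weighted_sum(features, weights, bias)
print(score)  # 2.5
```

If the same email came from a known contact (`sender_in_contacts` set to 1.0), the negative weight would pull the score down to -0.5.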

What an activation function does

After a neuron combines its inputs, the network usually applies an activation function. That sounds abstract, but the role is straightforward: it turns the combined input into the neuron's output, which decides how strongly the neuron responds.

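Activation functions matter because many real problems are not linear. Fraud detection, language tasks, and image recognition do not follow one neat straight-line rule. Without a non-linear activation, stacking layers would gain nothing, because a chain of purely linear steps collapses into a single linear step. Activation functions let ANNs capture more complicated relationships in the data.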
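
As a rough illustration, here are two widely used activation functions, sigmoid and ReLU, written in plain Python. The article does not single out a specific activation, so treat these as common examples rather than the only options.

```python
import math


def sigmoid(z):
    """Squash any real number into the range (0, 1)."""
    # Note: math.exp can overflow for very large negative z; fine for a sketch.
    return 1.0 / (1.0 + math.exp(-z))


def relu(z):
    """Pass positive values through unchanged and clip negatives to zero."""
    return max(0.0, z)


for z in (-2.0, 0.0, 2.0):
    print(z, round(sigmoid(z), 3), relu(z))
```

Neither function is a straight line across its whole range, which is what lets stacked layers model non-linear relationships.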

How artificial neural networks learn

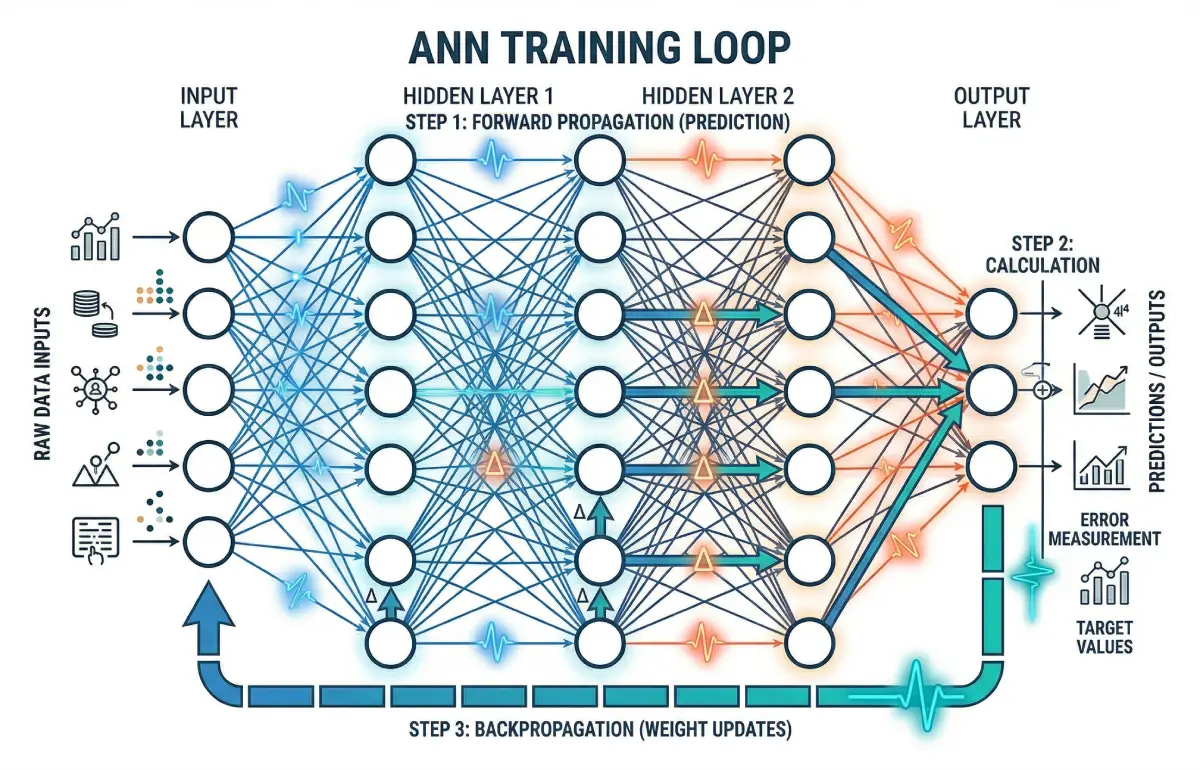

The structure explains what an ANN looks like. The training loop explains how it learns.

Forward pass

During the forward pass, data moves from the input layer through the hidden layers to the output layer. The network uses its current weights and bias values to produce a prediction.

At the start of training, those values are typically set to small random numbers, so they are not very useful yet. The network is essentially making rough guesses.
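
A forward pass through a tiny network can be sketched as follows. The layer sizes (three inputs, two hidden neurons, one output), the choice of ReLU and sigmoid, and the feature values are all assumptions made for this example.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def relu(z):
    return max(0.0, z)


def dense(inputs, weights, biases, activation):
    """One layer: each neuron takes a weighted sum of the inputs plus its bias."""
    return [
        activation(sum(w * x for w, x in zip(neuron_weights, inputs)) + b)
        for neuron_weights, b in zip(weights, biases)
    ]


def forward(inputs, params):
    """Input layer -> hidden layer (ReLU) -> output layer (sigmoid)."""
    hidden = dense(inputs, params["w_hidden"], params["b_hidden"], relu)
    output = dense(hidden, params["w_out"], params["b_out"], sigmoid)
    return output[0]


random.seed(0)  # fixed seed so the untrained guess is repeatable
params = {
    "w_hidden": [[random.uniform(-1, 1) for _ in range(3)] for _ in range(2)],
    "b_hidden": [0.0, 0.0],
    "w_out": [[random.uniform(-1, 1) for _ in range(2)]],
    "b_out": [0.0],
}

# Hypothetical features: prize phrase present, link count, sender known.
email = [1.0, 3.0, 0.0]
print(forward(email, params))  # a value between 0 and 1, not yet meaningful
```

Because the weights are random, the printed score says nothing about the email yet. Training is what makes it informative.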

Error or loss

Next, the system compares the prediction with the correct answer. The difference is measured by a loss function.

If the network labels a clearly safe email as spam, the loss will be high. If it predicts well, the loss will be lower. TensorFlow’s beginner classification tutorial uses the same basic pattern: define layers, choose a loss function, choose an optimizer, and train the model on examples.
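
To make the idea of loss concrete, here is a sketch using binary cross-entropy, one common loss for yes/no predictions. The article does not name a specific loss function, so this choice and the example scores are assumptions.

```python
import math


def binary_cross_entropy(predicted, actual, eps=1e-12):
    """Loss for one example: small when the prediction agrees with the label."""
    # Clamp to avoid log(0) when a prediction is exactly 0 or 1.
    p = min(max(predicted, eps), 1.0 - eps)
    return -(actual * math.log(p) + (1 - actual) * math.log(1.0 - p))


# A safe email (label 0) scored as very likely spam: high loss, about 3.0.
print(binary_cross_entropy(0.95, 0))
# The same email scored as unlikely spam: low loss, about 0.05.
print(binary_cross_entropy(0.05, 0))
```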

Backpropagation and weight updates

Then comes the core learning step. Google’s Machine Learning Glossary describes backpropagation as the process used to reduce loss by adjusting weights during the backward pass.

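In plain language, backpropagation asks a practical question: which parts of the network helped cause this mistake, and how should their weights change next time? It answers by working backward from the output and calculating how much a small change in each weight and bias would change the loss. An optimizer such as gradient descent then nudges those weights and biases so the next prediction is a little better.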

This loop repeats over many examples:

- make a prediction

- measure the error

- send that error backward

- update the weights and biases

That repeated correction loop is the engine behind ANN learning.
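
The four steps can be sketched with the smallest possible network: a single sigmoid neuron trained by gradient descent. With only one neuron, sending the error backward reduces to one application of the chain rule, so this is a simplified stand-in for full backpropagation. The toy dataset, learning rate, and epoch count are assumptions chosen for illustration.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def predict(x, weights, bias):
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)


def mean_loss(data, weights, bias):
    """Average binary cross-entropy over the dataset."""
    total = 0.0
    for x, y in data:
        p = predict(x, weights, bias)
        total += -(y * math.log(p) + (1 - y) * math.log(1.0 - p))
    return total / len(data)


def train(data, epochs=500, learning_rate=0.5):
    weights, bias = [0.0] * len(data[0][0]), 0.0
    n = len(data)
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * len(weights), 0.0
        for x, y in data:
            # Steps 1 and 2: make a prediction and measure the error.
            error = predict(x, weights, bias) - y
            # Step 3: send the error backward. For a sigmoid neuron with
            # cross-entropy loss, d(loss)/d(w_i) = error * x_i.
            for i, xi in enumerate(x):
                grad_w[i] += error * xi
            grad_b += error
        # Step 4: update weights and bias against the average gradient.
        weights = [w - learning_rate * g / n for w, g in zip(weights, grad_w)]
        bias -= learning_rate * grad_b / n
    return weights, bias


# Hypothetical features: [prize phrase, many links, sender known]; label 1 = spam.
emails = [
    ([1.0, 1.0, 0.0], 1),
    ([1.0, 0.0, 0.0], 1),
    ([0.0, 1.0, 0.0], 1),
    ([0.0, 0.0, 1.0], 0),
    ([0.0, 1.0, 1.0], 0),
    ([0.0, 0.0, 0.0], 0),
]

before = mean_loss(emails, [0.0, 0.0, 0.0], 0.0)
weights, bias = train(emails)
after = mean_loss(emails, weights, bias)
print(before, after)  # the loss after training is lower than before
```

A real network repeats the same idea across many neurons and layers, with a framework computing the gradients automatically.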

A simple ANN example

A spam detector is one of the easiest ANN examples to follow because the inputs are familiar.

Imagine a training dataset with thousands of labeled emails. Some are marked spam. Others are marked safe. The input layer receives a set of features for each email, such as the number of links, the presence of suspicious phrases, whether the sender is known, and whether the message format looks unusual.

At first, the ANN does not know which clues matter most. It might treat all of them roughly the same. So when it sees an email saying “URGENT: Claim your free reward now” from an unknown sender, it may still make the wrong prediction.

That error becomes feedback. During training, the model may increase the influence of suspicious language and repeated links while reducing the influence of weaker clues. After enough examples, the ANN becomes better at separating likely scams from regular messages.

What matters here is the logic. The model is not reading the message like a human. It is learning a pattern from examples and adjusting itself when the pattern leads to the wrong answer.

Types of artificial neural networks

ANN is a family name, not one single architecture. Different network types are built for different kinds of data.

Feedforward networks

In a feedforward network, information moves one way from input to output. There are no feedback loops during prediction. This is the classic beginner architecture and a useful way to learn the basics of weights, layers, and activation functions.

Feedforward networks are often a good fit for structured data and straightforward classification problems.

Convolutional neural networks (CNNs)

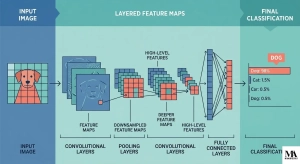

CNNs are a type of ANN designed for images and other grid-like data. Instead of handling every pixel as an isolated input, they look for repeating local patterns such as edges, curves, and textures.
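
The local pattern matching at the heart of a CNN can be sketched by sliding a small filter over an image. The 4x4 image and the hand-written edge filter are assumptions for this example, and the operation shown is the cross-correlation form that deep learning libraries usually call convolution.

```python
def convolve2d(image, kernel):
    """Slide the kernel over the image (no padding, stride 1) and sum products."""
    k_rows, k_cols = len(kernel), len(kernel[0])
    out_rows = len(image) - k_rows + 1
    out_cols = len(image[0]) - k_cols + 1
    return [
        [
            sum(
                image[r + i][c + j] * kernel[i][j]
                for i in range(k_rows)
                for j in range(k_cols)
            )
            for c in range(out_cols)
        ]
        for r in range(out_rows)
    ]


# A 4x4 grayscale patch: dark on the left, bright on the right.
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]

# A hand-written vertical-edge filter. In a real CNN these values are learned.
kernel = [
    [-1, 1],
    [-1, 1],
]

feature_map = convolve2d(image, kernel)
print(feature_map)  # [[0, 2, 0], [0, 2, 0], [0, 2, 0]]
```

The middle column of the output lights up exactly where the image switches from dark to bright, which is the edge the filter was built to find.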

If you want the image-specific version of this topic, read MindoxAI’s guide to convolutional neural networks. That article goes deeper into filters, feature maps, and why CNNs work so well for visual tasks.

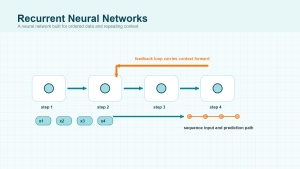

Recurrent neural networks (RNNs)

RNNs are built for sequence data, where order matters. NVIDIA’s explanation of recurrent networks highlights the key difference: they include feedback or memory-like loops, which makes them useful for sequences such as language, speech, and time series.

A simple comparison helps. A feedforward network treats each input as a fresh case. An RNN can carry information from earlier steps into later ones, which matters when context changes the meaning.
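
The difference can be sketched with a one-number hidden state that is carried from step to step. The weights and the tanh activation below are illustrative assumptions, not values from a trained model.

```python
import math


def rnn_step(x, hidden, w_input, w_hidden, bias):
    """One recurrent step: mix the new input with the previous hidden state."""
    return math.tanh(w_input * x + w_hidden * hidden + bias)


def run_sequence(sequence, w_input=1.0, w_hidden=0.5, bias=0.0):
    hidden = 0.0  # no memory before the first step
    for x in sequence:
        hidden = rnn_step(x, hidden, w_input, w_hidden, bias)
    return hidden


# Same final input (0.0), different earlier context: the final states differ.
print(run_sequence([1.0, 0.0]))
print(run_sequence([-1.0, 0.0]))
```

Setting `w_hidden` to 0.0 removes the memory, and both sequences then end in the same state, which mirrors how a feedforward network treats each input as a fresh case.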

Deep neural networks

When a neural network has many hidden layers, it is often called a deep neural network. That is where deep learning fits in. IBM’s deep learning overview makes the distinction clearly: neural networks and deep learning are closely related, but they are not identical terms.

ANN vs neural networks vs deep learning

This is where many beginner articles stay fuzzy, so it is worth stating plainly.

The terms “artificial neural network” and “neural network” are usually interchangeable in general conversation. Most readers can treat them as the same basic idea.

Deep learning usually refers to training deeper neural networks with multiple hidden layers so they can learn more complex feature hierarchies.

A simple way to remember the relationship is:

- ANN or neural network: the broad category

- CNN and RNN: specific types within that category

- deep learning: the branch that focuses on deeper multi-layer networks

So if you are comparing deep learning with neural networks, the clean answer is that deep learning is usually a subset of neural-network-based machine learning, not a separate universe.

Common ANN applications

Artificial neural networks are useful when the relationship between input and output is too messy to capture with a short list of hand-written rules.

One common application is classification. ANNs can help decide whether a transaction looks fraudulent, whether an email is spam, or whether an image likely contains a certain object.

Another is prediction. Neural networks can estimate demand, forecast patterns, or score the chance that a user will click, buy, or cancel.

Specialized network types expand the range of tasks. CNNs support image recognition and visual inspection. Sequence-aware models support speech and text tasks. Recommendation systems often use neural networks to combine user behavior, item features, and context.

If you want a broader comparison between machine pattern recognition and human reasoning, MindoxAI’s piece on AI vs Human Intelligence is a helpful follow-up.

Strengths and limits of ANNs

Artificial neural networks are powerful, but they also come with tradeoffs.

What ANNs do well

They are good at learning complex, non-linear patterns from large amounts of data. That makes them useful when rule-based systems break down or become too brittle.

They also reduce the need to manually write every decision rule. Instead, the model learns from examples and feedback.

Where they struggle

ANNs often need substantial data and computing resources, especially as networks become deeper. They can also be harder to interpret than simpler models because the reasoning is spread across many weights rather than a short list of explicit rules.

Another risk is overfitting, where the model learns the training examples too closely and performs worse on new data.
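
One common way to spot overfitting is to track the loss on held-out validation data alongside the training loss. The sketch below uses made-up loss curves and a hypothetical helper name purely for illustration.

```python
def first_overfitting_epoch(train_losses, val_losses):
    """Return the first epoch where training loss falls but validation loss rises."""
    for epoch in range(1, len(train_losses)):
        train_improved = train_losses[epoch] < train_losses[epoch - 1]
        val_worsened = val_losses[epoch] > val_losses[epoch - 1]
        if train_improved and val_worsened:
            return epoch
    return None


# Made-up loss curves for illustration only (index 0 is the first epoch).
train_losses = [0.90, 0.60, 0.40, 0.25, 0.15, 0.08]
val_losses = [0.92, 0.65, 0.50, 0.48, 0.55, 0.63]

print(first_overfitting_epoch(train_losses, val_losses))  # 4
```

From that epoch on, the model keeps improving on examples it has seen while getting worse on examples it has not, which is the signature of overfitting.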

It also helps to keep expectations realistic. A neural network can be very good at narrow pattern recognition without having human-like understanding. For a wider look at that distinction, see MindoxAI’s article on why AI still can’t think like a human mind.

Final Thoughts

The clearest way to understand artificial neural networks is to stop thinking of them as digital brains and start thinking of them as learnable pattern detectors. Inputs go in, weights shape the signal, layers transform the data, the network makes a prediction, and training pushes the model to make fewer mistakes.

Once that cycle makes sense, the rest of the vocabulary becomes easier. Feedforward networks, CNNs, RNNs, and deep learning are all related ideas built on the same basic foundation: learning useful patterns from data.