If you want to understand OpenAI’s AGI push, you have to read it in two ways at once. AGI is a technical idea about systems that can do broad, valuable work. But in OpenAI’s relationship with Microsoft, AGI has also been a contract term that can change who gets what rights, when, and under whose judgment. This article explains both sides in plain language. You will see how OpenAI defines AGI, how the Microsoft partnership turned that definition into a business trigger, what changed on three dates (January 21, 2025; October 28, 2025; and February 27, 2026), and why the issue now sits at the center of OpenAI’s strategy and AGI governance.

If you want a broader primer before getting into the contract layer, see our AGI explainer.

OpenAI’s AGI definition sounds precise until you try to use it

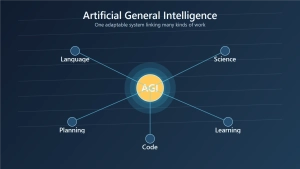

OpenAI’s Charter offers one of the most quoted definitions in the industry: AGI means highly autonomous systems that outperform humans at most economically valuable work. It sounds crisp. It sounds measurable. It sounds like the kind of threshold a lab could point to and say, ‘we are there.’

But ‘most economically valuable work’ is not one benchmark. It is a changing bundle of tasks. Software engineering counts. So do sales, legal drafting, logistics, scientific research, management, customer service, and a long list of jobs where judgment and context matter as much as raw output. A model can dominate several digital workflows and still fail at long-horizon planning, embodied work, or reliable decision-making in messy real settings.

That is why AGI is better understood as a category judgment than a finish line. A decathlon is a useful comparison. You do not decide who the better athlete is from one sprint. You look across events, and the scoring system shapes the result. AGI works the same way. What you count, how you weigh it, and which kinds of work you treat as central all affect the label.
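To make the decathlon point concrete, here is a minimal Python sketch. Everything in it is an assumption made for illustration: the domain names, the scores, the weights, and the reading of ‘most’ as more than half of weighted work are invented, and none of it comes from OpenAI or any benchmark. It shows only that the same system can pass or fail the label depending on how the work is weighted.

```python
# Illustrative only: domains, scores, and weights are invented for this sketch.
# A score above 1.0 means "outperforms a typical human" in that domain.
HYPOTHETICAL_SCORES = {
    "software_engineering": 1.3,
    "customer_service": 1.2,
    "legal_drafting": 1.1,
    "scientific_research": 0.9,
    "embodied_work": 0.4,
}

# Two hypothetical ways of weighting the same bundle of work.
DIGITAL_HEAVY = {
    "software_engineering": 3,
    "customer_service": 3,
    "legal_drafting": 2,
    "scientific_research": 1,
    "embodied_work": 1,
}
PHYSICAL_HEAVY = {
    "software_engineering": 1,
    "customer_service": 1,
    "legal_drafting": 1,
    "scientific_research": 2,
    "embodied_work": 5,
}


def share_outperformed(scores, weights):
    """Weighted share of work where the system beats the human baseline."""
    total = sum(weights.values())
    beaten = sum(w for domain, w in weights.items() if scores[domain] > 1.0)
    return beaten / total


def clears_most(scores, weights, threshold=0.5):
    """Assumption: 'most' is read as more than half of the weighted work."""
    return share_outperformed(scores, weights) > threshold


if __name__ == "__main__":
    for label, weights in [("digital-heavy", DIGITAL_HEAVY),
                           ("physical-heavy", PHYSICAL_HEAVY)]:
        share = share_outperformed(HYPOTHETICAL_SCORES, weights)
        verdict = clears_most(HYPOTHETICAL_SCORES, weights)
        print(f"{label}: share={share:.2f}, clears 'most'={verdict}")
```

With these made-up numbers, the digital-heavy weighting clears the threshold and the physical-heavy weighting does not, even though the underlying scores never change.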

OpenAI’s own public writing shows the slippage. In ‘Planning for AGI and beyond’, published on February 24, 2023, OpenAI described AGI more broadly as AI systems that are generally smarter than humans. That points in the same direction as the Charter, but it is not identical. One phrasing is tied to economically valuable work. The other sounds closer to a broad intelligence comparison. Even inside OpenAI’s own materials, the term is doing more than one job.

Outside OpenAI, the debate is even less settled. The 2025 AAAI Presidential Panel report and the arXiv paper ‘Stop Treating AGI as the North-Star Goal of AI Research’ both reflect the same underlying reality: AGI is still a disputed concept, not a universally accepted technical checkpoint.

That matters because the rest of the OpenAI story depends on a term that is already fuzzy before any contract language enters the room.

In the Microsoft deal, AGI was never just philosophy

The AGI debate would matter on its own. In OpenAI’s case, it matters more because the company built itself through unusually deep partnerships, especially with Microsoft.

When OpenAI announced on January 23, 2023 that Microsoft was extending the partnership with a multiyear, multibillion-dollar investment, the public summary focused on research, products, and infrastructure. Azure would remain OpenAI’s exclusive cloud provider across research, API services, and products. That already made the relationship unusually tight.

The AGI wrinkle made it tighter and stranger. Public reporting over time suggested that the arrangement did not treat AGI like ordinary pre-AGI technology. The clearest later summary came from the Associated Press on October 28, 2025. It explained that OpenAI had previously said its nonprofit board would decide when AGI had been reached, and that such a decision would effectively end the old Microsoft arrangement as people had understood it. Even without the full contract text in public, the pattern was clear: AGI was not just a label. It was a trigger.

The easiest comparison is a long-term supply agreement with a trigger clause. Before the trigger, certain rights apply. After the trigger, the map changes. Once you see AGI that way, a lot of OpenAI’s history makes more sense. The argument is no longer only about benchmark scores or model capabilities. It is also about licensing, exclusivity, bargaining power, and timing.

OpenAI’s governance structure adds another layer. The Charter says OpenAI’s primary fiduciary duty is to humanity, not to investors in the ordinary corporate sense. That means an AGI declaration was never going to look like a normal boardroom event. It would carry mission language, governance consequences, and commercial fallout all at once.

So Microsoft did not just back a lab that talked about AGI. It tied itself to an organization whose AGI threshold could reshape the partnership itself.

The deal changed shape in 2025

The easiest way to understand the current OpenAI-Microsoft agreement is to follow the dates.

On January 21, 2025, Microsoft said in an official blog post that the key elements of the partnership would remain in place through 2030. Microsoft listed them plainly: access to OpenAI IP for Microsoft’s products, revenue sharing that flows both ways, and API exclusivity on Azure. It also said new capacity would move to a right-of-first-refusal model. In practical terms, Microsoft no longer had to be the automatic provider for every future expansion, but it still got the first chance to supply it.

That alone was a notable shift. It suggested a relationship that stayed commercially close while becoming more flexible on infrastructure.

The bigger change came on October 28, 2025, when OpenAI published ‘The next chapter of the Microsoft-OpenAI partnership’. This was not a vague reaffirmation. It described a definitive agreement with more explicit terms.

Under OpenAI’s public summary, once OpenAI declares AGI, an independent expert panel verifies that declaration. Microsoft keeps exclusive rights to productize OpenAI’s model and product IP through 2032, including post-AGI technology. Microsoft’s access to OpenAI’s confidential research and infrastructure IP lasts until AGI verification or through 2030, whichever comes first. OpenAI also said Microsoft keeps a 20% revenue share through 2032. At the same time, Microsoft is no longer OpenAI’s right-of-first-refusal compute provider, and OpenAI can develop open-weight models and products that use other cloud providers.

That is a major structural rewrite. The earlier public story looked like a hard cutoff. AGI happens, and the old arrangement stops. The October 2025 structure looks more like several clocks running at once. Product IP has one clock. Research IP has another. Revenue sharing has another. Cloud capacity has another. AGI still matters, but it no longer acts like one simple switch.
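The ‘several clocks’ idea can be sketched as a small data model. This is a reading of the public summary as described in this article, not of the contract itself. The names `Right`, `is_active`, and `active_rights` are hypothetical, and treating ‘through 2030’ and ‘through 2032’ as the last day of that year is an assumption. Cloud capacity is left out because the summary gives no end date for it.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class Right:
    name: str
    fixed_end: Optional[date]        # last day of the calendar clock, if any
    ends_on_agi_verification: bool   # True if verification also stops the clock


# Assumption: "through YEAR" is read as December 31 of that year.
RIGHTS = [
    Right("product_ip", date(2032, 12, 31), False),
    Right("research_ip", date(2030, 12, 31), True),   # whichever comes first
    Right("revenue_share", date(2032, 12, 31), False),
]


def is_active(right: Right, on: date,
              agi_verified_on: Optional[date] = None) -> bool:
    """Return True if the right is still running on the given day."""
    if right.fixed_end is not None and on > right.fixed_end:
        return False
    if (right.ends_on_agi_verification
            and agi_verified_on is not None
            and on >= agi_verified_on):
        return False
    return True


def active_rights(on: date, agi_verified_on: Optional[date] = None) -> list:
    """Names of all rights still running on the given day."""
    return [r.name for r in RIGHTS if is_active(r, on, agi_verified_on)]


if __name__ == "__main__":
    # Hypothetical scenario: the panel verifies AGI on January 1, 2028.
    print(active_rights(date(2029, 6, 1)))
    print(active_rights(date(2029, 6, 1), agi_verified_on=date(2028, 1, 1)))
```

In the hypothetical scenario, verification stops only the research-IP clock. The product-IP and revenue-share clocks keep running to their calendar dates, which is the difference between several clocks and one switch.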

Then came the February 27, 2026 update. In a joint statement from OpenAI and Microsoft, the two companies said that new funding and new partners did not change the Microsoft relationship they had already described. Their IP relationship was unchanged. Their commercial terms and revenue share arrangement were unchanged. Azure remained the exclusive cloud provider of OpenAI’s stateless APIs. And the AGI definition and process remained unchanged as well. At the same time, OpenAI kept the ability to work with Stargate and other providers for additional compute.

That sequence matters because it turns a fuzzy public story into a readable timeline. January 21, 2025 kept the partnership strong through 2030. October 28, 2025 rewrote what post-AGI and pre-AGI rights actually look like. February 27, 2026 confirmed that the rewritten structure still holds.

Why the issue is still tricky after the rewrite

The October 2025 agreement clarified a lot. It did not make the subject simple.

The first reason is visibility. The public still does not have the full agreement set. We have official summaries from OpenAI and Microsoft, plus credible reporting, but not a public contract packet that outside analysts can audit line by line. That matters. Reading a partnership through blog posts is like reading a merger through a press release instead of the full filing. You can understand the broad structure, but you should not pretend every clause, exception, and definition is visible.

The second reason is that definition and governance are now braided together. Once AGI is tied to expert-panel verification, licensing windows, and revenue-sharing timelines, the label stops being a purely technical boast. It becomes part of the machinery that allocates rights.

Imagine a model that clearly outperforms human workers across coding, support, and research assistance but still fails in high-reliability physical settings. Is that AGI under the Charter? Under the 2023 planning-post language? Under the expert-panel process in the 2025 definitive agreement? Those are not only academic questions. The answers can influence how long certain Microsoft rights continue and how OpenAI frames its own governance choices.

The ordinary public conversation often misses that procedural layer. People say ‘OpenAI reached AGI’ as if the moment would be self-evident. The public documents now suggest something more administrative and more consequential: there is a declaration, there is verification, and different rights continue on different schedules. That is less dramatic than science fiction, but it is much closer to how real institutions work.

So the rewrite solved one problem and revealed another. It made the contract easier to summarize, but it also made it harder to pretend that AGI is just a neutral technical term.

What this reveals about OpenAI strategy and AGI governance

Once you stop treating AGI as only a research slogan, OpenAI’s broader strategy becomes easier to read.

OpenAI is trying to balance three pressures at once: mission control, capital intensity, and partner flexibility. Its updated structure page says the nonprofit, now called the OpenAI Foundation, remains in control while the operating business runs through a public benefit corporation, or PBC. A PBC is a company form meant to pursue a stated public mission alongside business objectives. In OpenAI’s case, that structure is supposed to make room for vast fundraising while keeping the Foundation in command of the mission.

The triangle of mission, money, and compute is a useful way to picture it.

Mission means OpenAI wants to preserve the claim that AGI should benefit humanity broadly, not just one investor or one corporate partner. Money means frontier AI costs too much for mission language alone to carry the business. Compute means no serious frontier lab wants to depend on one supplier forever if it can help it. The January 2025, October 2025, and February 2026 partnership terms fit that triangle almost perfectly.

For example, OpenAI can now say all of the following at once without obvious contradiction. The Foundation still controls the company. Microsoft still has large rights and commercial upside. OpenAI can commit to massive Azure purchases while also using other providers where the agreement allows it. That sounds awkward only if you expect a clean startup story or a clean nonprofit story. OpenAI is trying to finance frontier AI while preserving a governance identity that is deliberately unusual.

The governance lesson goes beyond one company. If an AGI label affects licensing rights, revenue share, expert-panel review, or nonprofit control, then AGI is not just a forecast about the future. It is a present-day rule for allocating power.

That is why the debate deserves more than the usual ‘who wins the AGI race?’ framing. AGI at OpenAI is tricky because the term is carrying two burdens at once. It has to describe a future class of systems, and it has to organize a real partnership in the present.

If you want the adjacent risk debate, our piece on the AGI alignment problem is the most natural follow-on read.

Final Thoughts

The simplest way to read AGI at OpenAI is also the least accurate. It is not just a label for very powerful AI, and it is not just a promise about the future. In OpenAI’s case, AGI sits at the intersection of concept, governance, and contract.

That is why the story stays complicated. The concept is not fully settled. The contracts have evolved. The public record is still partial. And the stakes are high enough that every definition choice carries commercial consequences. If you want to understand OpenAI strategy, the right question is not only whether AGI will arrive. It is also who gets to define it, verify it, and live with the contractual consequences once that happens.