If you are trying to separate real AI-driven business automation from sci-fi marketing, this guide gives you the practical answer. It shows where AI agents already work inside an office, where a fully autonomous enterprise still breaks down, what the real cost of AGI looks like, and why human-in-the-loop design remains the safer default. The short version is this: a company can automate more office work than most people think, but a true 0% human office still fails on governance, liability, exception handling, and trust.

The confusion starts with how people picture a company. They imagine a pile of tasks: answer support emails, route approvals, write reports, update records, pay invoices, draft presentations. That picture is only half right. A company is also a legal body, a strategy system, and a reputation system. It does not just move information. It sets priorities, accepts responsibility, resolves conflict, and decides what to do when the script no longer fits.

What a 0% Human Office Would Actually Have to Do

The phrase “0% human office” sounds simple, but it hides four very different kinds of work:

- repetitive workflow execution

- judgment under ambiguity

- legal and financial accountability

- relationship-heavy communication with employees, customers, regulators, and partners

Automation is strongest in the first category. The other three are where the idea of a fully autonomous enterprise becomes much harder.

This is also where terminology matters. Artificial general intelligence, or AGI, usually means a system with broad capability across many tasks rather than a tool built for one narrow job. Even if AGI-style agents become broadly useful, a company still has to answer a harder question: who owns the consequences when an important decision goes wrong?

A plain comparison helps.

- Invoice routing: a digital worker can match an invoice to a purchase order, flag a missing field, and send it to the right queue.

- Firing an employee: the system has to interpret context, check policy, assess legal exposure, consider morale, and carry the consequences.

Both actions happen in an office. Only one is mostly a workflow problem.

That is the first reality check for anyone evaluating AI workforce claims. Running steps is not the same as running a company.
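The invoice-routing half of that comparison is simple enough to sketch in a few lines. This is a minimal illustration, not a reference implementation: the field names, queue names, and the `route_invoice` function are all assumptions made up for this example.

```python
# Hypothetical invoice schema; real systems will have their own field names.
REQUIRED_FIELDS = ("invoice_id", "po_number", "amount", "vendor")


def route_invoice(invoice: dict, purchase_orders: dict) -> dict:
    """Match an invoice to a purchase order and pick a queue for it."""
    # Flag missing fields before attempting any match.
    missing = [f for f in REQUIRED_FIELDS if invoice.get(f) in (None, "")]
    if missing:
        return {"queue": "needs_info", "missing": missing}

    po = purchase_orders.get(invoice["po_number"])
    if po is None:
        return {"queue": "no_matching_po", "missing": []}

    # Any disagreement with the purchase order goes to a person.
    if po["vendor"] != invoice["vendor"] or po["amount"] != invoice["amount"]:
        return {"queue": "mismatch_review", "missing": []}

    return {"queue": "ready_for_approval", "missing": []}
```

Nothing comparable can be written for the termination case, because the inputs (context, morale, legal exposure) are not fields in a record.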

Where AI-Driven Business Automation Already Works

This is the part that hype critics often miss. AI-driven business automation is already useful, measurable, and worth deploying in many settings.

When companies talk about digital workers, they usually mean software agents that log into systems, retrieve data, generate a first pass, and move work to the next step. An AI workforce is simply the larger version of that model: many digital workers supporting finance, operations, support, research, recruiting, and reporting.

The best evidence still comes from scoped workflows rather than whole-company autonomy. In the NBER paper Generative AI at Work, researchers studying 5,179 customer support agents found that access to a generative AI assistant increased productivity by 14% on average, with much larger gains for novice and lower-skilled workers (NBER w31161). That is a strong business result, but notice what it actually shows: AI can improve performance in a well-defined workflow with measurable outputs and clear feedback.

Anthropic’s Economic Index points in the same direction. It reports that current AI use leans more toward augmentation than full automation: roughly 57% of observed usage is augmentation and 43% is automation (Anthropic Economic Index). In plain language, AI is more often helping people do the work than replacing the entire task chain from start to finish.

That matches where AI agents are strongest today:

- summarizing long documents

- drafting standard emails and reports

- routing customer tickets

- extracting information from forms

- searching policy libraries

- preparing first-pass analysis

These systems perform best when the work is mostly digital, repeated often, easy to check, and low enough risk that mistakes can be caught before they spread.

That last point is important. An AI workforce is closer to a fast junior operations layer than to a self-governing management team. If you want a wider view of where narrow AI already beats humans on speed or pattern recognition, see AI tools that outperform humans at specific tasks. That article makes the same underlying point: strong task performance does not automatically translate into broad, accountable autonomy.

Why a Fully Autonomous Enterprise Still Breaks in Practice

A fully autonomous enterprise sounds plausible until the workflow leaves the happy path.

That is the real bottleneck. Businesses are not made only of standard cases. They are full of missing information, conflicting goals, edge cases, policy gaps, emotional reactions, legal risk, and situations where nobody can say with confidence that one rule applies cleanly.

Take a simple refund example. An AI agent can:

- read the order

- check the refund window

- verify payment status

- trigger the refund

That looks clean. Now add one complication: the order came through a third-party marketplace, the customer used two payment methods, and the customer is threatening to escalate to a regulator. The issue is no longer just a refund workflow. It has become a policy, legal, and brand problem.
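The refund example can be sketched as a happy path plus an explicit list of conditions that take the case out of the agent's hands. This is an illustrative sketch only: the `Order` fields, the 30-day window, and the action names are assumptions, not any real system's policy.

```python
from dataclasses import dataclass
from datetime import date, timedelta

REFUND_WINDOW_DAYS = 30  # hypothetical policy value


@dataclass
class Order:
    order_id: str
    purchased_on: date
    payment_settled: bool
    channel: str = "direct"          # "direct" or "marketplace"
    payment_methods: int = 1
    regulator_threat: bool = False


def escalation_reasons(order: Order) -> list[str]:
    """Return the reasons this refund falls off the happy path."""
    reasons = []
    if order.channel != "direct":
        reasons.append("third-party marketplace order")
    if order.payment_methods > 1:
        reasons.append("multiple payment methods")
    if order.regulator_threat:
        reasons.append("customer threatening regulatory escalation")
    return reasons


def handle_refund(order: Order, today: date) -> dict:
    # Check for complications first: any one of them hands the case to a person.
    reasons = escalation_reasons(order)
    if reasons:
        return {"action": "escalate_to_human", "reasons": reasons}

    # Happy path: check the window, verify payment, trigger the refund.
    in_window = today - order.purchased_on <= timedelta(days=REFUND_WINDOW_DAYS)
    if in_window and order.payment_settled:
        return {"action": "refund", "reasons": []}
    return {"action": "deny", "reasons": ["outside window or payment not settled"]}
```

The design choice worth noticing is the order of checks: the agent looks for reasons to stop before it looks for permission to act.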

This is why current agents still need strong boundaries. NIST’s AI Risk Management Framework is organized around four functions (govern, map, measure, and manage) rather than blind autonomy (NIST AI RMF 1.0). OpenAI’s practical guide to building agents makes a similar point in implementation terms: start with clear workflows, use guardrails, evaluate performance, and hand off when needed (OpenAI practical guide).

That guidance matters because it reveals the real state of the field. If the builders themselves still recommend scoped workflows and handoffs, a self-running company is not a mature operating model yet.

The real question is not whether an agent can produce a plausible output. It is whether the system can:

- detect unusual cases

- stop when confidence is low

- escalate cleanly

- preserve a useful audit trail

- avoid turning one small mistake into a chain of expensive decisions

That is why a company can automate a process far more easily than it can automate ownership of the process.
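Several of the checks in that list can be expressed as a thin wrapper around whatever the agent proposes. The sketch below assumes the agent returns a proposal, a confidence score between 0 and 1, and a list of detected anomalies. That result shape, the 0.85 threshold, and the function name are hypothetical.

```python
import json
from datetime import datetime, timezone

CONFIDENCE_FLOOR = 0.85  # hypothetical threshold; tune per workflow


def run_with_guardrails(task_id, agent_result, audit_log):
    """Decide whether to act on an agent's proposal or hand it to a person.

    agent_result is assumed to be a dict with 'proposal', 'confidence'
    (0.0 to 1.0), and 'anomalies' (a list of strings).
    """
    if agent_result["anomalies"]:
        decision = "escalate"
        reason = "unusual case: " + ", ".join(agent_result["anomalies"])
    elif agent_result["confidence"] < CONFIDENCE_FLOOR:
        decision = "escalate"
        reason = "confidence below floor"
    else:
        decision = "execute"
        reason = "within limits"

    # Every decision is recorded, including the ones that were not acted on.
    audit_log.append(json.dumps({
        "task_id": task_id,
        "time": datetime.now(timezone.utc).isoformat(),
        "proposal": agent_result["proposal"],
        "confidence": agent_result["confidence"],
        "decision": decision,
        "reason": reason,
    }))
    return decision
```

A wrapper like this does not make the agent's judgment better. It only makes the stopping and escalation rules explicit and leaves a record a person can review later.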

For readers thinking at the capability level, AI vs human intelligence is relevant here. The business problem is really a judgment problem.

Why Law Still Keeps Humans in the Loop

Human-in-the-loop means a person reviews, approves, or can override an AI system at important decision points. In some presentations, that sounds like a temporary patch. In real organizations, it is still one of the clearest markers of responsible deployment.

One reason is corporate law. Delaware remains the most influential corporate law jurisdiction in the United States, and Section 141(b) of the Delaware General Corporation Law requires each member of a board of directors to be a natural person (Delaware Code, Section 141). That alone undercuts the literal version of the 0% human company idea. A bot can prepare a board memo. It cannot legally be the board.

Regulation points in the same direction. The EU AI Act entered into force on August 1, 2024, with obligations phasing in after that date, and the European Commission’s public guidance keeps human oversight central for high-risk systems (European Commission timeline; AI Act FAQ). Employment-related AI is one of the clearest examples. If a system influences hiring, worker management, or access to work, the oversight question is not optional.

A simple comparison keeps this grounded:

- Resume screening: AI can extract skills, rank candidates, and draft a shortlist.

- Hiring decision: a human should still check context, spot missing evidence, challenge bias, and own the final call.

That is not anti-AI. It is what accountability looks like when the decision has consequences beyond efficiency.

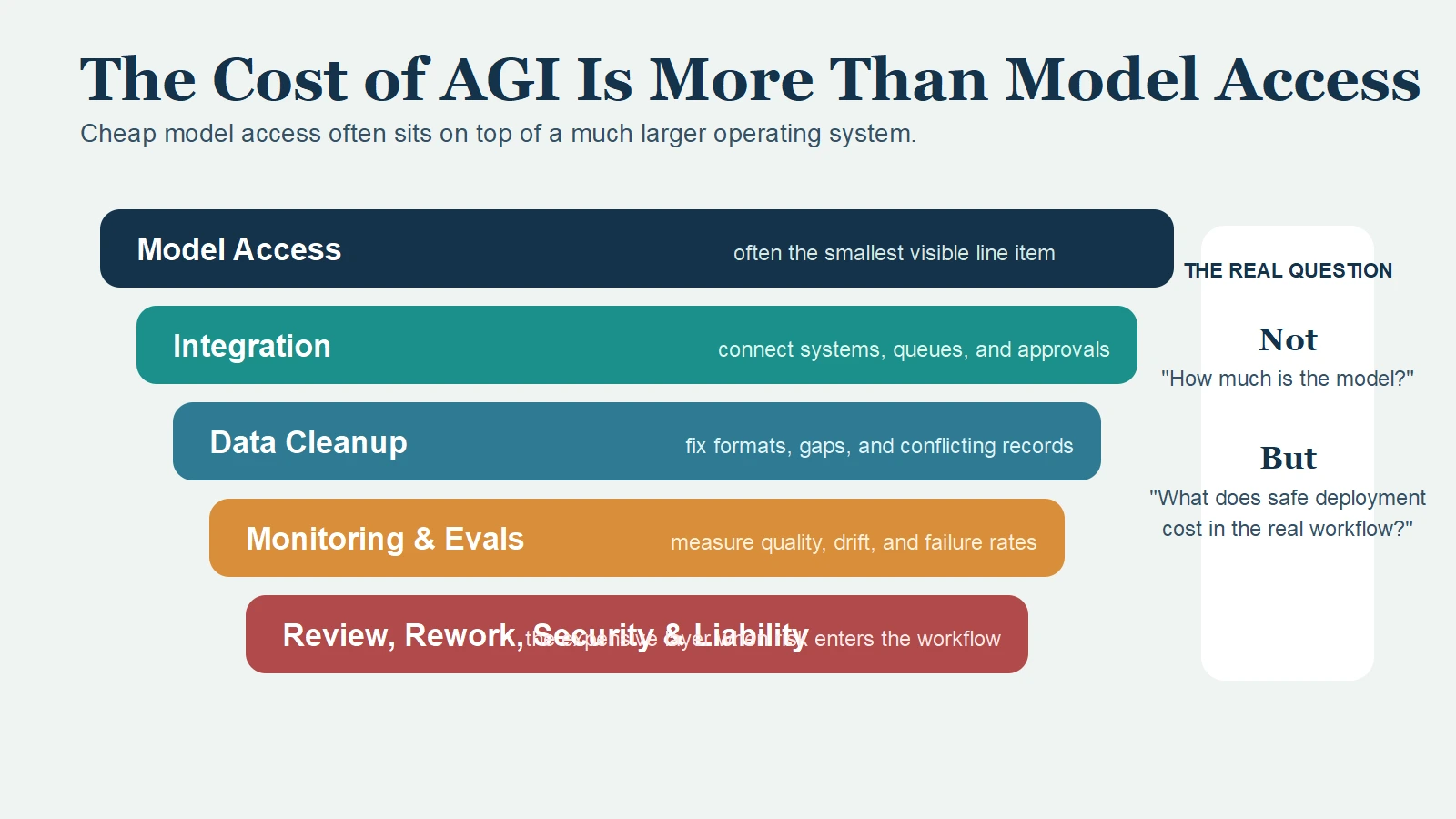

The Cost of AGI Is Mostly in the System Around It

When people talk about the cost of AGI, they often reduce the question to model pricing. That is the wrong frame for an operating decision.

Model access may be the cheapest part of the stack. The larger costs usually sit around it:

- workflow integration

- data cleanup

- identity and permissions

- monitoring and evaluation

- review queues

- security controls

- rework when outputs are wrong

- legal or brand exposure when failures reach the real world

This is why “the model is cheap” often turns into “the program is expensive.”

The comparison below is more useful than any generic pricing screenshot:

| Goal | Looks cheap at first | Gets expensive in practice |
|---|---|---|
| Replace first drafts of reports | model access and prompting | review standards and workflow integration |
| Automate invoice coding | extraction and routing | exceptions, approvals, and audit trails |
| Automate recruiting support | parsing and ranking | fairness review, documentation, and accountability |
| Automate management decisions | dashboard summaries | liability, ethics, and trust |

This is the hidden weakness in the fully autonomous enterprise idea. As risk rises, the supporting system around the AI grows larger. In many cases, that supporting system still includes people.

The better question is not “How much does the model cost?” It is “What does it cost to run this workflow safely, reliably, and accountably at scale?”
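One way to make that question concrete is to write the per-task cost as a small formula: model spend, plus the expected cost of human review, plus the expected cost of rework, plus a share of the fixed program costs. The sketch below is a simplification under stated assumptions. The `WorkflowCost` class and every number in the example are placeholders for illustration, not benchmarks or measured figures.

```python
from dataclasses import dataclass


@dataclass
class WorkflowCost:
    model_cost_per_task: float     # API or inference spend
    review_rate: float             # share of tasks a person reviews (0 to 1)
    review_cost_per_task: float    # cost of one human review
    error_rate: float              # share of tasks that need rework (0 to 1)
    rework_cost_per_error: float   # cost to fix one bad output
    fixed_monthly_cost: float      # integration, monitoring, security
    tasks_per_month: int

    def cost_per_task(self) -> float:
        variable = (
            self.model_cost_per_task
            + self.review_rate * self.review_cost_per_task
            + self.error_rate * self.rework_cost_per_error
        )
        return variable + self.fixed_monthly_cost / self.tasks_per_month


# Placeholder inputs for illustration only; not benchmarks.
example = WorkflowCost(
    model_cost_per_task=0.02,
    review_rate=0.20,
    review_cost_per_task=3.00,
    error_rate=0.05,
    rework_cost_per_error=20.00,
    fixed_monthly_cost=5000.00,
    tasks_per_month=10_000,
)
print(f"{example.cost_per_task():.2f}")
```

With these made-up inputs the model line is a small fraction of the per-task total. The point is the shape of the formula, not the specific values: review, rework, and fixed costs are separate terms that do not shrink when model pricing does.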

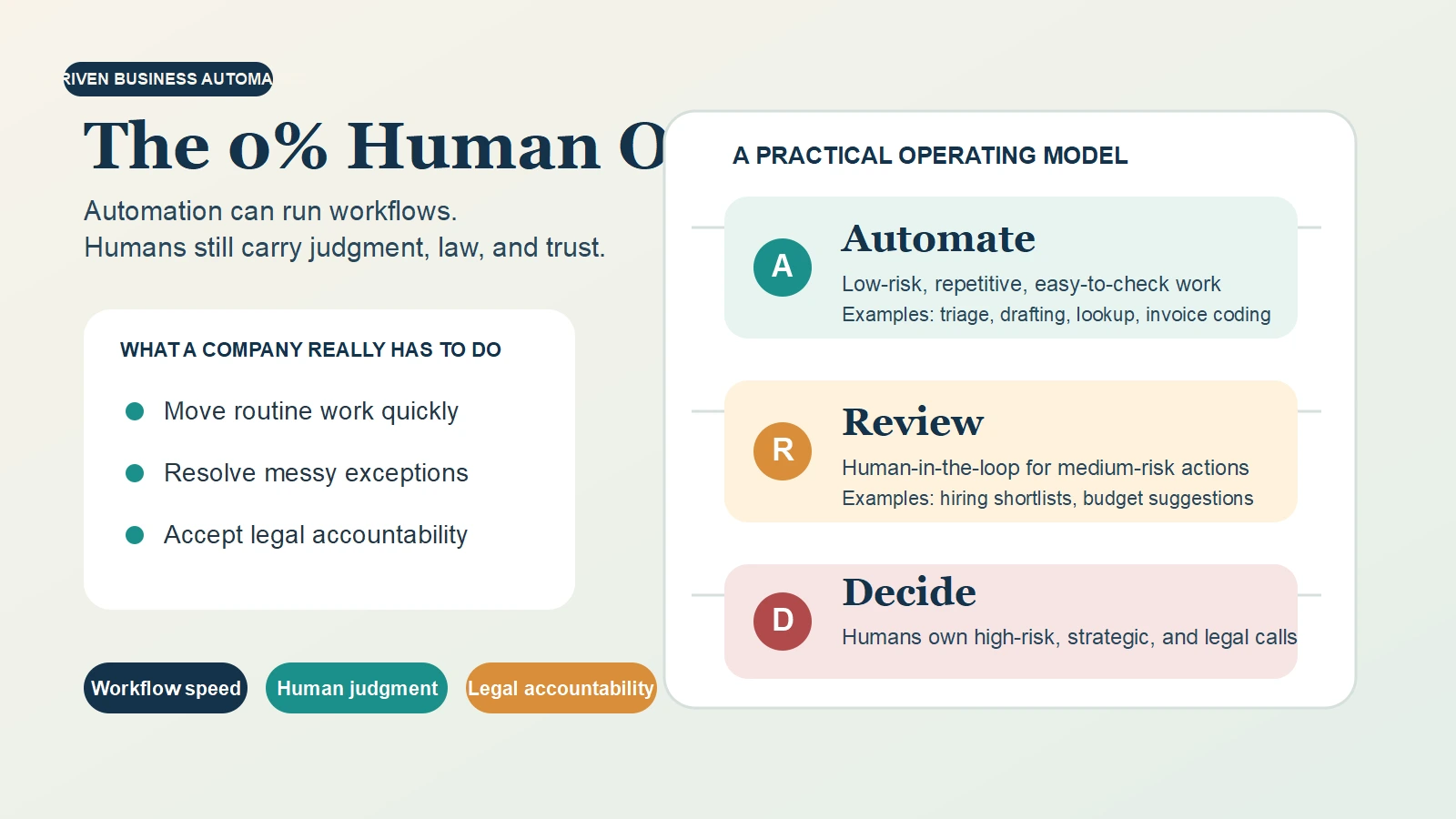

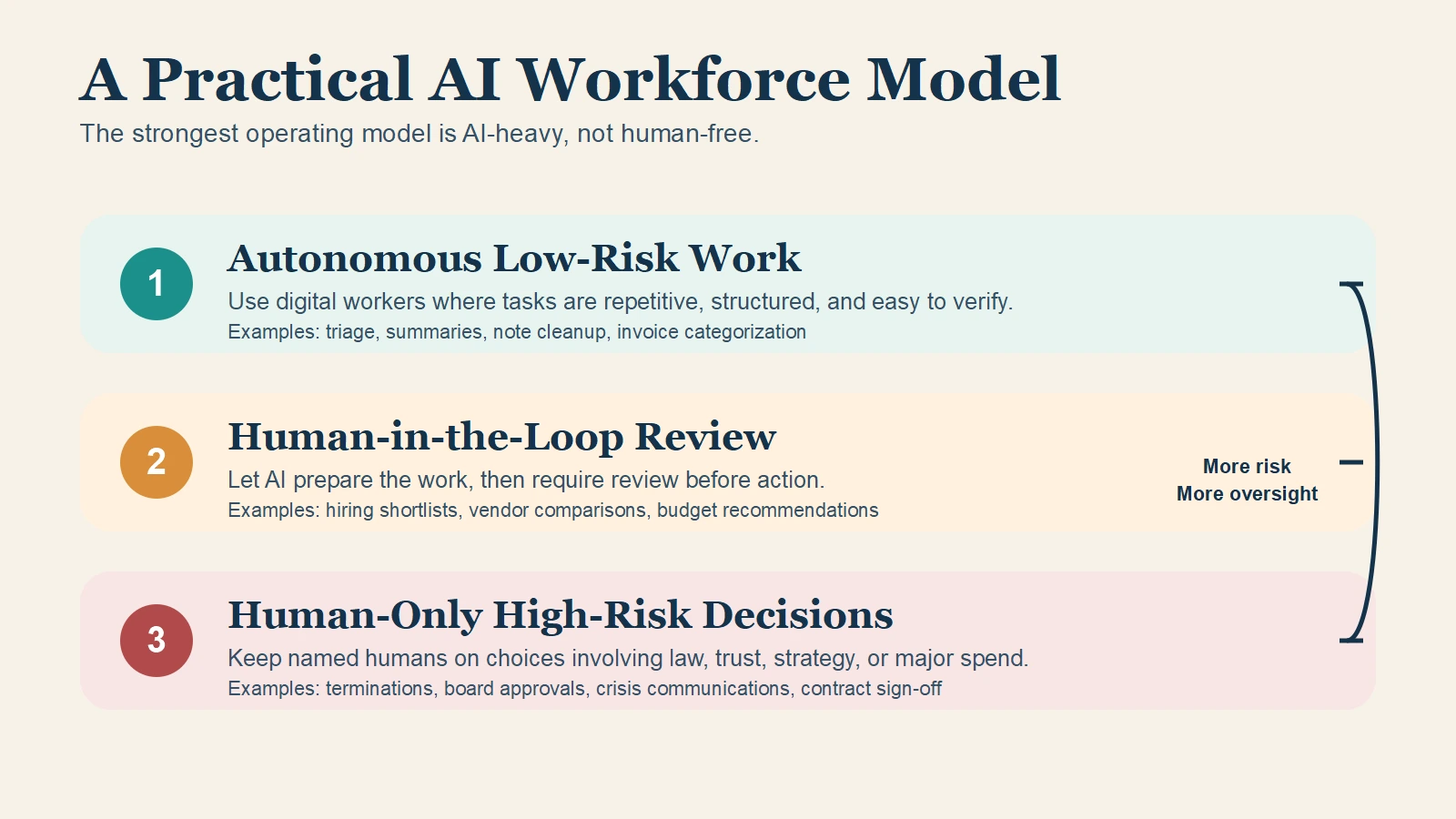

The Practical Model Is an AI Workforce with Human-in-the-Loop Design

The strongest near-term model is not a 0% human office. It is a human-led company with an AI-heavy operating layer.

The cleanest way to run that model is to sort work into three levels:

1. Autonomous low-risk work

Use AI agents where the work is repetitive, structured, and easy to verify.

Examples:

- inbox triage

- meeting note cleanup

- FAQ drafting

- policy lookup

- invoice categorization

2. Human-in-the-loop medium-risk work

Use AI to prepare the work, then require review before action.

Examples:

- candidate shortlists

- vendor comparisons

- customer exception handling

- performance review summaries

- budget recommendations

3. Human-only high-risk work

Keep accountable humans in charge where the decision changes legal exposure, employment status, major spending, or strategic direction.

Examples:

- terminations

- board resolutions

- financing approvals

- crisis communications

- major contract sign-off

This model sounds less dramatic than the fully autonomous enterprise pitch. That is exactly why it is more credible. It fits the evidence, the law, and the way real businesses absorb risk.
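The three levels can be written down as an explicit lookup so that the tier of each task is a reviewable decision rather than an implicit habit. This is a minimal sketch: the task keys are taken from the examples above, while the `Tier` enum and function names are assumptions made for illustration.

```python
from enum import Enum


class Tier(Enum):
    AUTONOMOUS = "autonomous"
    HUMAN_IN_THE_LOOP = "human_in_the_loop"
    HUMAN_ONLY = "human_only"


# Task names mirror the examples in the three levels; the keys are illustrative.
TIER_BY_TASK = {
    "inbox_triage": Tier.AUTONOMOUS,
    "meeting_note_cleanup": Tier.AUTONOMOUS,
    "faq_drafting": Tier.AUTONOMOUS,
    "policy_lookup": Tier.AUTONOMOUS,
    "invoice_categorization": Tier.AUTONOMOUS,
    "candidate_shortlist": Tier.HUMAN_IN_THE_LOOP,
    "vendor_comparison": Tier.HUMAN_IN_THE_LOOP,
    "customer_exception": Tier.HUMAN_IN_THE_LOOP,
    "performance_review_summary": Tier.HUMAN_IN_THE_LOOP,
    "budget_recommendation": Tier.HUMAN_IN_THE_LOOP,
    "termination": Tier.HUMAN_ONLY,
    "board_resolution": Tier.HUMAN_ONLY,
    "financing_approval": Tier.HUMAN_ONLY,
    "crisis_communication": Tier.HUMAN_ONLY,
    "major_contract_signoff": Tier.HUMAN_ONLY,
}


def tier_for(task: str) -> Tier:
    """Unknown tasks default to the most restrictive tier."""
    return TIER_BY_TASK.get(task, Tier.HUMAN_ONLY)


def may_act_without_review(task: str) -> bool:
    return tier_for(task) is Tier.AUTONOMOUS
```

Defaulting unlisted tasks to human-only is a deliberate choice in this sketch: a new kind of work has to be explicitly classified before an agent is allowed to act on it alone.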

For readers who want the capability background before the management conclusion, this primer on what artificial general intelligence is helps separate broad intelligence claims from the much harder problem of self-governing corporate autonomy.

What CEOs, HR Managers, and Business Students Should Watch Next

The right takeaway depends on where you sit.

For CEOs

Do not ask, “Can AI replace the office?” Ask:

- Which workflows are repetitive enough to automate well?

- Where do errors become expensive?

- Which decisions require a named human owner?

- What is the fallback path when the agent is wrong?

The best pilot is usually narrow, measurable, and attached to a queue, not a grand promise to replace management.

For HR Managers

The real shift is job redesign, not simple removal. The World Economic Forum’s Future of Jobs Report 2025 is useful because it frames AI as a force that changes task mix and skill demand, not just headcount arithmetic (WEF 2025 PDF). That means HR has to define:

- which tasks can be automated

- which tasks need review

- what escalation rules look like

- how fairness is checked

- how employees are trained to supervise the work, not only perform it

For Business Students

The best preparation is not learning prompting in isolation. It is learning how organizations actually work. Process mapping, compliance basics, decision rights, operations design, and data quality will matter more as AI systems become normal. The people who understand both business structure and AI limits will be more valuable than the people who only know the tools.

Final Thoughts

Can a company run entirely on AGI agents? Not in the strong sense that most readers mean.

A company can automate a surprising amount of office work. It can build a serious AI workforce. It can use digital workers to compress cycle time, reduce routine effort, and improve first-pass output. But a true 0% human office still hits three hard walls: messy exceptions, legal accountability, and the trust required in consequential decisions.

That is why the smarter target is not “no humans.” It is knowing exactly where AI-driven business automation creates leverage, exactly where humans need to stay in the loop, and exactly what the full cost of AGI really is.

For a broader long-range perspective, the debate around superintelligence risks is the right next read after this one.

Sources

- NIST AI RMF 1.0

- European Commission: AI Act enters into force

- European Commission AI Act FAQ

- Delaware General Corporation Law, Section 141

- Anthropic: The Anthropic Economic Index

- NBER: Generative AI at Work

- NBER: The Rapid Adoption of Generative AI

- World Economic Forum, Future of Jobs Report 2025

- OpenAI: A Practical Guide to Building Agents