Reader payoff: By the end of this article you’ll know exactly which aspects of intelligence—learning, reasoning, and consciousness—are still uniquely human and which are already mastered by today’s most advanced AI systems. You’ll also get a clear roadmap of the research frontier, so you can decide whether to bet on AGI, collaborate with it, or stay focused on human‑centric innovation.

1. Defining Intelligence: Human vs. Machine

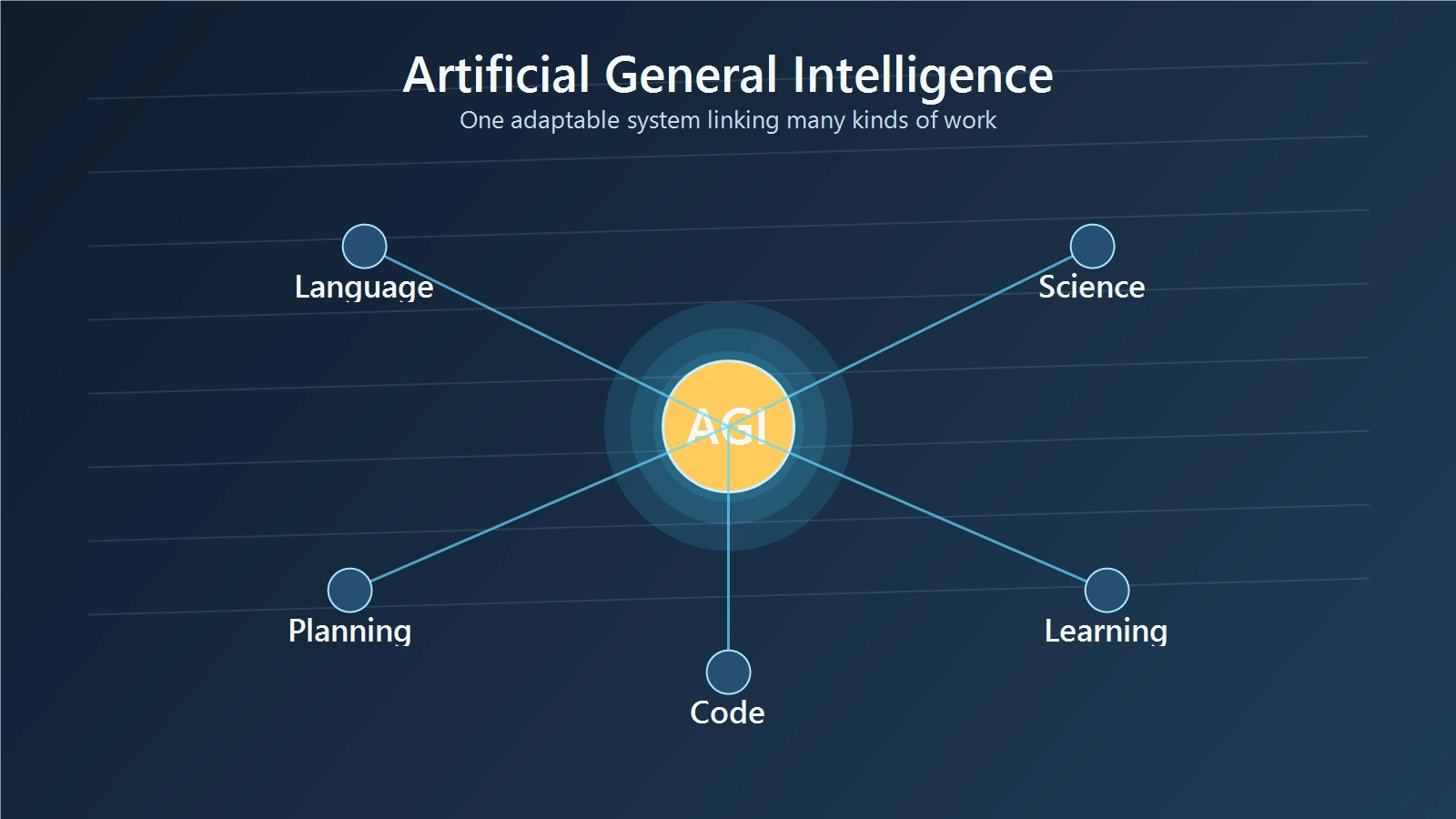

Psychologists have long measured human intelligence with the g‑factor, a statistical construct that captures performance across diverse cognitive tasks (Spearman, 1904). In contrast, AI researchers define “Artificial General Intelligence” (AGI) as a system that can perform *any* intellectual task a human can, without task‑specific tuning (Bostrom, 2014). The distinction matters: human intelligence is a blend of reasoning, perception, and consciousness, while current AGI prototypes excel at pattern‑recognition and large‑scale inference.
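To make "statistical construct" concrete: when scores on different tests correlate positively, one shared component explains much of the variance. The Python sketch below approximates that component with the first principal component of a correlation matrix, found by power iteration. This is a simplification of Spearman's factor analysis, and the correlations in `TOY_CORR` are invented for illustration, not real test data.

```python
import math

def first_principal_component(corr, iterations=200):
    """Power iteration: return (eigenvalue, unit eigenvector) of the dominant component."""
    n = len(corr)
    v = [1.0 / math.sqrt(n)] * n  # start from an even weighting of all tests
    eigenvalue = 0.0
    for _ in range(iterations):
        # Multiply the matrix by the current vector, then renormalise.
        w = [sum(corr[i][j] * v[j] for j in range(n)) for i in range(n)]
        eigenvalue = math.sqrt(sum(x * x for x in w))
        v = [x / eigenvalue for x in w]
    return eigenvalue, v

# Hypothetical correlations between three made-up tests (verbal, spatial, memory).
TOY_CORR = [
    [1.0, 0.6, 0.5],
    [0.6, 1.0, 0.4],
    [0.5, 0.4, 1.0],
]

eigenvalue, loadings = first_principal_component(TOY_CORR)
# The trace of a correlation matrix equals the number of tests,
# so eigenvalue / n is the share of variance the shared component explains.
variance_share = eigenvalue / len(TOY_CORR)
print(f"loadings={loadings}, variance share={variance_share:.2f}")
```

All three loadings come out positive, which is the pattern a g-like factor predicts: every test draws on the shared component to some degree.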

Key distinction

- Breadth vs. depth: Humans can switch domains effortlessly (e.g., from poetry to physics). Most AI systems require massive data and fine‑tuning for each new domain.

- Adaptivity: Human cognition reorganises neural pathways (plasticity) throughout life; AI weights are static after training unless retrained.

2. The Architecture of Thought: Brain vs. Transformer

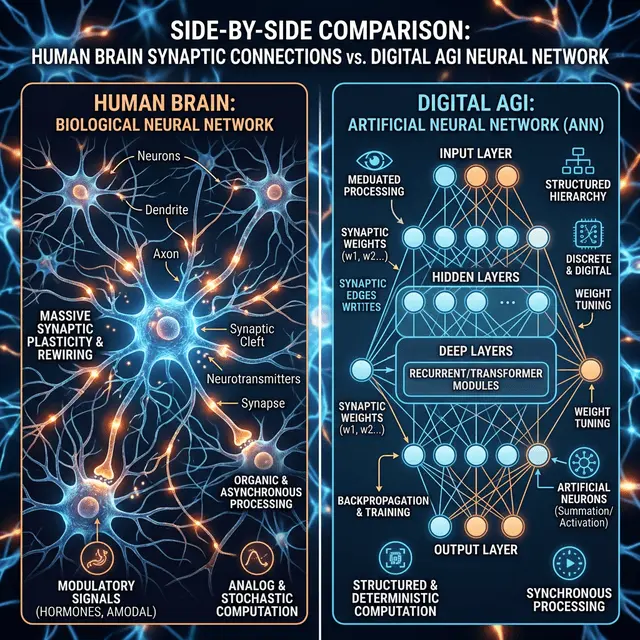

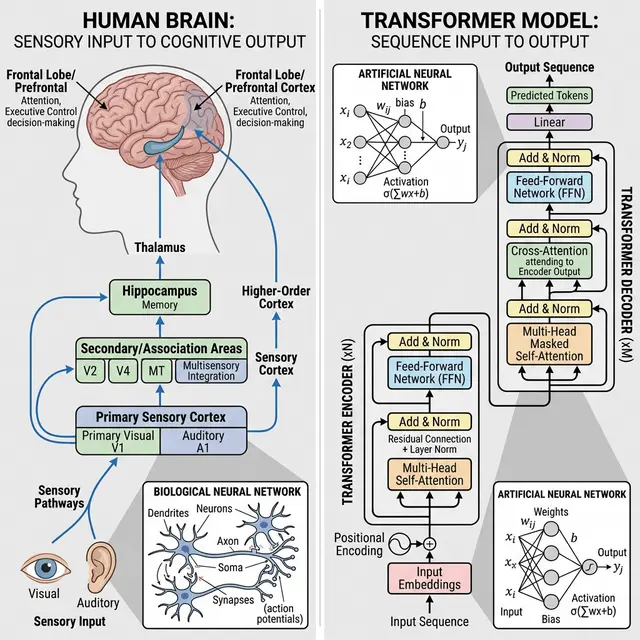

The human brain contains roughly 86 billion neurons, each forming thousands of synapses. Neuronal firing follows electrochemical gradients, enabling parallel, low‑energy computation (Markram et al., 2015). Modern AGI models, most notably those built on the transformer architecture, use “attention heads” that weigh the importance of each token in a sequence (Vaswani et al., 2017). While both systems process information hierarchically, the brain’s wiring is sparse and heavily recurrent, whereas a standard transformer applies dense, all‑to‑all attention within each layer and passes information strictly feed‑forward through its stack of layers.

Concrete comparison

Imagine a cortical column (roughly half a millimetre wide and spanning the 2–3 mm thickness of the cortex) as a “mini‑processor” that repeatedly refines sensory input. A transformer block performs a loosely analogous refinement, but it does so by computing scaled dot‑product attention across the entire input sequence, an operation that can be executed in milliseconds on a GPU.
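Scaled dot‑product attention is compact enough to write out by hand. The sketch below follows the formula from Vaswani et al. (2017), softmax(QKᵀ / √d_k) · V, in plain Python with no GPU or batching. The function names and the tiny 2‑dimensional vectors are assumptions made for readability; real models use optimised tensor libraries and many heads in parallel.

```python
import math

def softmax(scores):
    """Turn raw scores into weights that sum to 1 (shifted by the max for stability)."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def scaled_dot_product_attention(queries, keys, values):
    """For each query, return a weighted average of the value vectors."""
    d_k = len(keys[0])
    outputs = []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys]
        weights = softmax(scores)
        # Blend the value vectors according to the attention weights.
        blended = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(blended)
    return outputs

# Two toy tokens: each query matches its own key best.
tokens = [[1.0, 0.0], [0.0, 1.0]]
attended = scaled_dot_product_attention(tokens, tokens, tokens)
print(attended)
```

Each output row is a mixture of both tokens, weighted towards the one whose key best matches the query. That mixing step is the "refinement" a transformer block repeats layer after layer.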

3. Learning Mechanisms: Experience vs. Data

Human learning follows a developmental trajectory: infants first acquire sensorimotor skills, then language, then abstract reasoning. Critical periods, windows of heightened plasticity, shape lifelong abilities (Kuhl, 2004). AGI, by contrast, learns from massive static datasets. GPT‑3, for example, drew on text filtered from roughly 45 TB of raw web crawl data (Brown et al., 2020); OpenAI has not disclosed the size of GPT‑4’s training set, saying only that the model was pre‑trained on public and licensed data and then fine‑tuned with human feedback (OpenAI, 2023). The model does not “experience” the world; it detects statistical regularities in text.

Example

A child learns the concept of “gravity” by watching objects fall, feeling the force, and receiving corrective feedback. GPT‑4 “learns” gravity only because billions of sentences mention it, never feeling the pull itself.
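What "detecting statistical regularities" means can be shown with a deliberately tiny model. The sketch below counts which word follows which in a four‑sentence corpus and turns the counts into next‑word probabilities. It is a bigram counter, vastly simpler than GPT‑4, and the corpus and function names are made up for this illustration.

```python
from collections import Counter, defaultdict

def train_bigram(corpus):
    """Count, for every word, how often each other word follows it."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        tokens = sentence.lower().split()
        for prev, nxt in zip(tokens, tokens[1:]):
            counts[prev][nxt] += 1
    return counts

def next_word_probs(counts, word):
    """Convert raw follow-up counts for `word` into probabilities."""
    following = counts.get(word, Counter())
    total = sum(following.values())
    if total == 0:
        return {}  # the model has never seen this word
    return {w: c / total for w, c in following.items()}

# Hypothetical toy corpus: the only "physics" the model gets is word order.
TOY_CORPUS = [
    "the apple falls down",
    "the rock falls down",
    "the ball falls down",
    "the balloon floats up",
]

model = train_bigram(TOY_CORPUS)
print(next_word_probs(model, "falls"))    # {'down': 1.0}
print(next_word_probs(model, "gravity"))  # {}
```

The model "knows" that things fall down only in the sense that "down" always follows "falls" in its data. It has no entry at all for "gravity", because the word never appeared.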

4. Understanding & Consciousness

Philosophers differentiate functional understanding (the ability to manipulate symbols) from phenomenal consciousness (subjective experience). Thomas Nagel’s famous “what is it like to be a bat?” argument holds that even a complete objective account of bat sonar would leave out the bat’s first‑person perspective (Nagel, 1974). Current AGI systems exhibit functional understanding: they can answer questions, translate languages, and generate poetry. There is, however, no evidence that they have any form of subjective experience.

Thought experiment

In Frank Jackson’s thought experiment (Jackson, 1982), Mary, a neuroscientist, knows every physical fact about colour vision but has never seen red. When she finally sees red, she gains new *qualia*, a type of knowledge that, on Jackson’s argument, no amount of data could provide. By the same reasoning, an AGI with perfect knowledge of colour theory would not acquire such qualia unless it had a “what‑it‑is‑like” substrate.

5. Real‑World Benchmarks: Where Humans Win

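Human cognition still holds an edge in some forms of common‑sense reasoning. On WinoGrande, a large‑scale successor to the Winograd Schema Challenge, human annotators score about 94 % (Sakaguchi et al., 2020), while GPT‑4 reaches 87.5 % (OpenAI, 2023). The gap has closed on other tests: on the ARC (AI2 Reasoning Challenge), which consists of grade‑school science questions, GPT‑4 is reported at 96.3 % (OpenAI, 2023). Where humans still clearly win is in robustness: small rewordings that leave a person’s answer unchanged can still flip a model’s.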

Concrete example

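Question: “The city councilmen refused the demonstrators a permit because they feared violence. Who feared violence?” Humans instantly infer that *they* refers to the *councilmen*, not the *demonstrators*; replace “feared” with “advocated” and the answer flips (Levesque et al., 2012). Nothing in the grammar settles the question, so a model that relies on surface cues can still mis‑resolve the pronoun.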
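Winograd‑style items come in twin pairs that differ by a single word, and that design is what defeats shallow shortcuts. The sketch below encodes the classic councilmen pair from Levesque et al. (2012) and scores a naive "pick the nearest noun" baseline against it. The `WinogradItem` class and the `nearest_candidate` heuristic are hypothetical names invented for this illustration, not part of any benchmark toolkit.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class WinogradItem:
    sentence: str
    pronoun: str
    candidates: tuple  # the two possible referents
    answer: str        # the referent humans choose

# One schema, two twins: only the verb after the pronoun changes.
SCHEMA_PAIR = [
    WinogradItem(
        "The city councilmen refused the demonstrators a permit because they feared violence.",
        "they", ("councilmen", "demonstrators"), "councilmen",
    ),
    WinogradItem(
        "The city councilmen refused the demonstrators a permit because they advocated violence.",
        "they", ("councilmen", "demonstrators"), "demonstrators",
    ),
]

def nearest_candidate(item):
    """Surface heuristic: choose the candidate mentioned closest before the pronoun."""
    words = item.sentence.rstrip(".").split()
    pronoun_pos = words.index(item.pronoun)
    positions = {c: words.index(c) for c in item.candidates}
    before = {c: p for c, p in positions.items() if p < pronoun_pos}
    return max(before, key=before.get)

def accuracy(resolver, items):
    """Fraction of items where the resolver picks the human-preferred referent."""
    return sum(resolver(item) == item.answer for item in items) / len(items)

print(accuracy(nearest_candidate, SCHEMA_PAIR))  # 0.5
```

The heuristic gives the same answer for both twins, so it can never beat chance on a pair. Resolving both correctly requires knowing something about councils, demonstrators, and who tends to fear what.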

6. Real‑World Benchmarks: Where AGI Wins

AGI excels at massive pattern extraction. AlphaZero learned chess, shogi, and Go from scratch through self‑play, and within hours to days of training it defeated the strongest existing programs in each game, programs that already outplayed the best human players (Silver et al., 2018). GPT‑4 can generate coherent code, draft legal briefs, and summarise scientific papers in seconds, tasks that would take humans hours or days of focused effort.

Side‑by‑side example

Writing a 10‑page literature review on “neuroplasticity” takes a graduate student weeks; GPT‑4 can draft a first pass in minutes, citing dozens of papers. Every one of those citations must be verified, because language models can produce plausible‑looking references that do not exist.

7. The Road Ahead: Hybrid Cognition

Researchers are exploring **neuromorphic hardware** that mimics neuronal spiking (Merolla et al., 2014) and **brain‑computer interfaces** that blend biological and digital processing (Neuralink, 2022). These hybrid systems could combine the brain’s energy‑efficient parallelism with AI’s raw data throughput, potentially narrowing the gap in *understanding*.
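To give a feel for what "neuronal spiking" means computationally, here is a minimal leaky integrate‑and‑fire neuron, the textbook model that spiking hardware builds on. It is a sketch in arbitrary units, not the neuron model of any particular chip; the parameter names and default values are assumptions chosen to keep the arithmetic easy to follow.

```python
def simulate_lif(input_current, dt=1.0, tau=10.0,
                 v_rest=0.0, v_threshold=1.0, v_reset=0.0):
    """Simulate a leaky integrate-and-fire neuron; return the time steps at which it spikes."""
    v = v_rest
    spikes = []
    for step, current in enumerate(input_current):
        # Euler step of dv/dt = (-(v - v_rest) + current) / tau:
        # the membrane potential leaks towards rest while integrating input.
        v += dt * (-(v - v_rest) + current) / tau
        if v >= v_threshold:
            spikes.append(step)  # emit a spike...
            v = v_reset          # ...and reset the membrane potential
    return spikes

strong = simulate_lif([1.5] * 50)  # input strong enough to cross threshold
weak = simulate_lif([0.5] * 50)    # input too weak to ever fire
print(strong)  # [10, 21, 32, 43]
print(weak)    # []
```

The neuron stays silent unless its input is strong enough, and it communicates only through the timing of sparse spikes. That event‑driven behaviour is where neuromorphic designs get their energy savings: nothing is computed when nothing fires.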

Implication for founders

Startups that integrate neuromorphic chips for edge‑AI (e.g., low‑power vision) may reach a level of energy efficiency that GPU‑based designs struggle to match, opening new markets in autonomous robotics and medical diagnostics.

8. Final Thoughts

AGI has already surpassed humans in raw data processing, pattern recognition, and speed. Yet **true understanding**—the blend of functional competence, common‑sense reasoning, and conscious experience—remains a uniquely human domain. The most realistic near‑future scenario is a **hybrid ecosystem** where AGI augments human cognition, while research into neuromorphic and brain‑computer interfaces strives to bridge the remaining gaps.

For readers, the actionable insight is clear: leverage AGI for scale‑intensive tasks, but continue to cultivate the human qualities—creativity, ethical reasoning, and experiential learning—that machines cannot yet replicate.