If you want to use AI without turning work into a copy-paste exercise, the right model is augmented intelligence. In practical terms, that means using AI as a co-processor: a system that helps with search, synthesis, drafting, and coordination while people still set goals, interpret nuance, and own the outcome. By the end of this guide, you will have a workable framework for human-AI collaboration, a clear way to think about cognitive tools and digital brain systems, and a sharper sense of where AI should support work rather than quietly take it over.

The short version is this: AI delivers the most value when it handles the parts of knowledge work that are repetitive, expansive, or computationally heavy, while humans keep the parts that need judgment, context, accountability, and social trust. That is a better fit for the evidence than either full-replacement rhetoric or vague copilot branding.

What augmented intelligence actually means

Why the term matters more than it sounds

Augmented intelligence is often treated like a softer way to say AI. That misses the point. The useful meaning is operational: the system is extending human capability rather than trying to replace the human role altogether.

That framing aligns well with NIST’s AI Use Taxonomy, which argues for describing AI in terms of human goals and outcome-based human-AI activities. That is a better lens for workplace use than model-centric language because work is not only prediction. It is also sequencing, prioritizing, interpreting, and deciding.

The comparison is simple. Automation tries to remove the person from a defined task. Augmented intelligence keeps the person in the loop but changes what they can handle per hour, how much context they can manage, and how quickly they can move from raw information to a usable output. A summarizer that prepares a brief for a manager is augmented intelligence. A rule-based system that processes claims with no human touch is closer to automation.

The co-processor analogy in plain English

The co-processor idea is more precise than calling AI a helper or teammate. In computing, a co-processor takes on a specialized class of operations so the larger system can work faster or more effectively. The main processor still matters. The co-processor does not become the whole machine.

That is a strong metaphor for modern workplace AI. A model can scan a large document set faster than a human, draft a first pass more quickly, or reorganize notes into a cleaner structure. It still depends on human direction when the work requires deciding what matters, what can be ignored, which tradeoff to accept, or how the result fits an organizational context.

Take a market analyst preparing a weekly briefing. The AI can search prior notes, summarize announcements, and cluster repeated themes. The analyst still decides what belongs in the final recommendation and what the business should do next. That is augmented intelligence in practice.

Why AI works best as a co-processor, not a replacement

What the evidence says about human augmentation

The main reason to favor a co-processor model is that the research is more nuanced than the common claim that humans plus AI are automatically best.

A 2024 meta-analysis in Nature Human Behaviour examined 106 experiments and found that, on average, human-AI systems performed better than humans alone. That is human augmentation. But on average those combined systems performed worse than the better of humans alone or AI alone. They helped, but they were not automatically the top-performing arrangement.

The task type mattered too. Decision tasks were more likely to show performance losses from combining humans and AI, while creation tasks looked more promising. That is a useful correction for workplace teams. If the job is narrow, high-stakes, and decision-heavy, the combined workflow needs more design and more review than people usually expect.

Field evidence shows the upside when the task and interface are a better fit. In Generative AI at Work, Brynjolfsson, Li, and Raymond studied 5,179 customer support agents and found a 14% average productivity increase from AI assistance, with a 34% improvement for novice and lower-skilled workers. Customer sentiment improved too. That looks less like replacement and more like a system spreading stronger operating practice across the workforce.

Why workflow design matters more than raw model power

The same pattern shows up in team settings. Harvard Business School’s 2025 summary of the Cybernetic Teammate experiment describes 776 professionals at Procter & Gamble working individually or in teams, with or without AI support. Individuals using AI produced stronger work, and AI-enabled teams produced the highest-quality solutions overall.

The important point is not simply that AI helped. It helped by changing how expertise moved. AI gave individuals access to some of the benefits of a team, and it helped teams mix technical and commercial thinking more effectively. That is why replacement is often the wrong frame. The better question is which parts of cognition should stay human-led and which parts should be accelerated by a machine.

For entrepreneurs, this matters directly. Product advantage does not come only from a better model. It also comes from a better handoff between human and model. A weaker model inside a well-designed workflow can outperform a stronger model inside a sloppy one.

The four work patterns where augmented intelligence creates leverage

Retrieval and synthesis

The first high-value pattern is retrieval and synthesis. Knowledge work slows down when people spend more time hunting for material than reasoning about it. AI can pull notes, summarize documents, extract action items, and compress a long trail of context into something a human can use quickly.

A consultant is a good example. Before a client meeting, the co-processor can search prior call notes, summarize last quarter’s deck, extract themes from emails, and produce a short prep memo. The consultant still decides which insight is real and which point belongs in the room. The leverage comes from starting with a map instead of a mess.

Drafting and transformation

The second pattern is drafting and transformation. This is where AI turns rough material into a usable first pass.

A founder might dictate notes after a customer conversation and have AI turn them into a follow-up email, a product brief, and a CRM update. An HR manager might do the same with policy notes, turning them into a first draft of a job description or training memo. The model is saving transformation time, not replacing the final reviewer.

This is also where tools can feel most impressive, which is why they are easy to over-trust. Drafting speed is real. Final judgment still matters. Readers who want adjacent examples can see MindoxAI’s roundup of AI tools that already outperform humans at specific tasks.

Structured analysis

The third pattern is structured analysis. AI is useful when the data is too large or repetitive for fast human scanning but still needs a human conclusion.

Imagine an HR team reviewing hundreds of open-ended survey responses. A model can cluster comments by theme, highlight recurring complaints, and pull representative examples. What it should not do alone is decide which issue deserves policy change, how severe the problem really is, or what message leadership should send in response.

The same logic applies to support-ticket analysis, research coding, contract comparison, and competitive monitoring. AI can shrink the search space. The human should still own the conclusion.
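The clustering step described above can be sketched in a few lines. This is a minimal illustration, not a recommended method: it assumes a hypothetical keyword map (`THEME_KEYWORDS`) in place of a real model, and the function names `group_by_theme` and `summarize` are invented for this example. A production setup would more likely use embeddings or a language model for the grouping.

```python
from collections import defaultdict

# Hypothetical theme keywords, chosen only for illustration.
# A real system would likely use a model instead of substring matching.
THEME_KEYWORDS = {
    "workload": ["overtime", "workload", "burnout"],
    "management": ["manager", "feedback", "leadership"],
    "pay": ["salary", "pay", "bonus"],
}


def group_by_theme(comments):
    """Group comments under every theme whose keywords they mention."""
    themes = defaultdict(list)
    for comment in comments:
        text = comment.lower()
        matched = [
            theme
            for theme, keywords in THEME_KEYWORDS.items()
            if any(keyword in text for keyword in keywords)
        ]
        # Comments that match nothing are kept, not dropped.
        for theme in matched or ["unclassified"]:
            themes[theme].append(comment)
    return dict(themes)


def summarize(themes, examples_per_theme=2):
    """Return (theme, count, representative examples), most frequent first."""
    ranked = sorted(themes.items(), key=lambda item: len(item[1]), reverse=True)
    return [(theme, len(items), items[:examples_per_theme]) for theme, items in ranked]
```

The output is a ranked list of themes with counts and sample comments. It narrows what a reviewer has to read; deciding which theme warrants a policy change stays with the HR team.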

Coordination and memory

The fourth pattern is coordination and memory. This is where digital brain systems become useful rather than trendy.

Many teams do not lose time because the core work is intellectually impossible. They lose time because context is scattered. Meetings end without clean follow-ups. Decisions live inside chat threads nobody can find. The same question gets answered repeatedly because no shared memory layer exists.

The NBER working paper on the labor-market effects of generative AI found that, in the November 2024 wave, workers who used generative AI reported average time savings of about 2.2 hours per week. Those savings do not come from one brilliant prompt. They come from repeated reductions in friction across searching, summarizing, rewriting, and follow-up work.

Build a digital brain without outsourcing judgment

What a digital brain is and is not

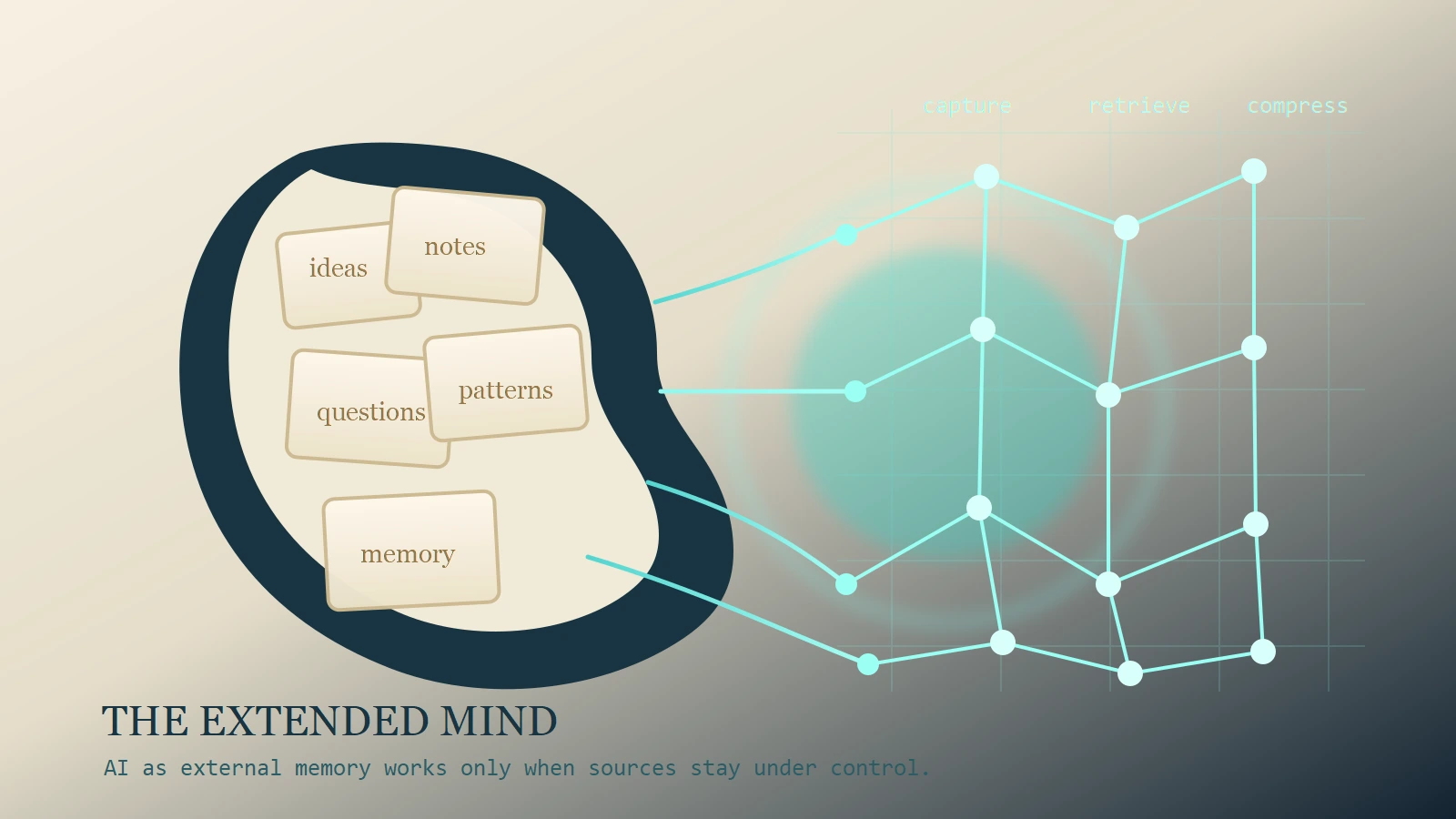

Digital brain is a useful phrase if it stays grounded. It should not mean a second mind that thinks for you. It should mean an external knowledge layer: notes, transcripts, documents, meeting summaries, bookmarks, and retrieval systems organized so that people and AI can find the right context at the right time.

That is why the term cognitive tools fits here. A cognitive tool extends memory, attention, or pattern detection. It does not remove the need for thinking. The most useful digital-brain systems do not replace judgment. They reduce the cost of recall.

Compare two workflows. In the first, a model with no company context is asked to generate a board memo from scratch. In the second, the same model has access to curated notes, previous memos, meeting transcripts, and approved strategic language. The second setup is not better because the model got smarter overnight. It is better because the human built a better knowledge environment around it.

A simple operating model for capture, retrieval, and review

The practical version is straightforward.

- Capture the raw material: notes, transcripts, source documents, customer conversations.

- Structure it enough that retrieval works: tags, naming, folders, or a searchable knowledge base.

- Use AI for recall and first-pass synthesis.

- Keep humans responsible for final interpretation and sign-off.
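The four steps above can be sketched as a small in-memory workflow. This is a toy model under stated assumptions: `KnowledgeBase`, `Note`, `Draft`, `first_pass`, and `sign_off` are hypothetical names, tag lookup stands in for real search, and `first_pass` only concatenates notes where a real system would call a model.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Set


@dataclass
class Note:
    title: str
    text: str
    tags: Set[str] = field(default_factory=set)


@dataclass
class Draft:
    body: str
    sources: List[str]
    approved_by: Optional[str] = None  # stays None until a person signs off


class KnowledgeBase:
    """Hypothetical store; tags stand in for any retrieval structure."""

    def __init__(self):
        self.notes: List[Note] = []

    def capture(self, title, text, tags=()):
        """Capture and structure: store raw material with tags for later recall."""
        self.notes.append(Note(title, text, set(tags)))

    def retrieve(self, tag):
        """Recall: return every note carrying the given tag."""
        return [note for note in self.notes if tag in note.tags]


def first_pass(notes):
    """Placeholder synthesis; a real system would call a model here."""
    body = "\n".join(f"- {note.title}: {note.text}" for note in notes)
    return Draft(body=body, sources=[note.title for note in notes])


def sign_off(draft, reviewer):
    """Review: a named human takes responsibility for the final version."""
    draft.approved_by = reviewer
    return draft
```

The design choice worth noting is that `approved_by` starts empty and only a separate, explicit call fills it, so an unreviewed draft is always distinguishable from a signed-off one.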

That last step matters more than most teams think. A March 15, 2026 study in Scientific Reports found that passive AI use, such as copying AI-generated content with minimal engagement, reduced self-efficacy, ownership, and meaning. Active collaboration preserved workers’ psychological connection to the task much better.

So the right digital-brain workflow is not “ask the model, paste the answer, move on.” It is “ground the model in real context, use it to accelerate thinking, then make a visible human decision.” For a more conceptual version of that idea, see MindoxAI’s take on the extended mind.

What managers, founders, and HR should redesign

Training, review thresholds, and task ownership

The implementation lesson is clear: buying an AI tool is not the same thing as deploying augmented intelligence.

Managers need to decide which tasks are AI-assisted, which tasks are AI-drafted but human-approved, and which tasks should remain mostly human-led. A sales follow-up draft is one kind of task. A performance review, disciplinary memo, or compensation recommendation is another. The review threshold should not be the same across those three categories.
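One way to make those categories explicit is to write them down as a policy table. The sketch below is illustrative only: the task names and their assigned levels are hypothetical choices, not a recommendation from any cited source, and `REVIEW_POLICY`, `review_level`, and `may_ai_draft` are invented for this example.

```python
from enum import Enum


class ReviewLevel(Enum):
    AI_ASSISTED = 1                # human does the work, AI helps along the way
    AI_DRAFTED_HUMAN_APPROVED = 2  # AI writes a first pass, a human approves it
    HUMAN_LED = 3                  # AI stays out of the drafting seat


# Hypothetical assignments; each organization would set its own.
REVIEW_POLICY = {
    "meeting_summary": ReviewLevel.AI_ASSISTED,
    "sales_follow_up": ReviewLevel.AI_DRAFTED_HUMAN_APPROVED,
    "performance_review": ReviewLevel.HUMAN_LED,
    "disciplinary_memo": ReviewLevel.HUMAN_LED,
    "compensation_recommendation": ReviewLevel.HUMAN_LED,
}


def review_level(task_type):
    """Look up a task's level; unknown task types get the strictest one."""
    return REVIEW_POLICY.get(task_type, ReviewLevel.HUMAN_LED)


def may_ai_draft(task_type):
    """True only when the policy allows an AI-written first pass."""
    return review_level(task_type) is ReviewLevel.AI_DRAFTED_HUMAN_APPROVED
```

Defaulting unknown task types to the strictest level means a new kind of work has to be classified deliberately before AI can draft it.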

Founders should think similarly. The useful question is not “where can I drop a chatbot?” It is “where does a co-processor reduce friction without weakening accountability?” In most organizations, that points to information-heavy work before it points to action-heavy automation.

Why consultation improves outcomes

HR leaders should care about consultation because it changes outcomes, not just sentiment. OECD survey work across seven countries found that workers using AI were more likely to report better performance and better working conditions when employers had consulted workers or worker representatives about the adoption of new technology. One concrete example from the report: workers in consulted organizations were 9 percentage points more likely to say AI improved health and safety.

That does not mean consultation fixes every rollout. It does mean implementation quality and worker voice are part of the productivity story. The ILO policy brief on generative AI points in the same direction: augmentation and task transformation are generally more plausible near-term outcomes than sudden full replacement.

The strongest teams will design training around judgment, verification, and workflow decomposition. They will not train people only to prompt. They will train them to decide what to delegate, how to review, and when to override the system. Readers who want the broader contrast between flexible human reasoning and specialized machine capability can see MindoxAI’s explainer on AI vs human intelligence.

Where augmented intelligence breaks down

Passive dependence weakens ownership

The most obvious failure mode is passive dependence. If the model becomes the first drafter, the final drafter, and the invisible thinker behind the work, the human role can degrade into approval theater.

That is not only a quality problem. It is a capability problem. The 2026 Scientific Reports study is useful here because it shifts attention from output speed to the worker’s relationship with the work. When people rely on AI passively, self-efficacy and ownership can drop. Over time, that matters as much as a temporary time saving.

High-stakes decisions need tighter human control

The second failure mode is overusing AI in decision-heavy, high-stakes contexts. The Nature Human Behaviour meta-analysis found that decision tasks were more likely than creation tasks to show performance losses from human-AI combinations. That does not mean AI is useless in those settings. It means the combined system needs much tighter design.

A hiring shortlist is a good example. AI can summarize applicant materials, normalize formatting, and surface keywords. It should not quietly become the real decision-maker. The same is true in legal escalation, compliance review, performance management, or sensitive people operations. Once the model is steering consequential judgments, human oversight has to get tighter, not looser.

If the model has weak context, the failure mode gets worse. A generic tool with no grounded organizational memory can still produce fluent nonsense. That is why a well-designed augmented-intelligence workflow usually beats a free-floating prompt workflow, even if both use the same base model.

Final thoughts

The strongest case for augmented intelligence is not that AI has become a magical teammate. It is that knowledge work contains a large layer of retrieval, drafting, comparison, and coordination that machines can accelerate well.

What that does not remove is the need for judgment. It makes judgment more valuable, because the faster the first pass becomes, the more important it is to decide what is true, what matters, and what should happen next. That is why the co-processor model works so well. It gives teams a way to adopt AI seriously without pretending the machine has become the whole worker.