AI vs Human Intelligence: Key Differences and Similarities

If you are trying to understand what AI can do, what humans still do better, and where both should work together, this guide gives you a practical map. You will get clear definitions, evidence-based comparisons, and a simple framework for choosing AI-led, human-led, or hybrid work. The goal is not hype. The goal is decision clarity.

The key point is straightforward: AI and human intelligence overlap in some abilities, but they are not interchangeable. AI can be faster and more scalable in many narrow tasks. Human intelligence remains stronger in context, values, and accountable judgment, especially when stakes are high or goals conflict.

First, What Do We Mean by “Intelligence”?

Comparisons fail when definitions stay vague. So start here.

Human intelligence is the ability to learn, reason, adapt, communicate, and make decisions across changing social and physical contexts. It includes judgment, emotional interpretation, and value-based choices.

Machine intelligence in current AI systems is the ability to detect patterns, predict likely outputs, and optimize results for defined objectives. It can feel broad in conversation, but its performance depends on training data, architecture, and evaluation design.

A practical comparison: a teacher can explain the same concept differently for a confident student, an anxious student, and a distracted student. An AI tutor can generate many explanations quickly, but the teacher still carries the responsibility for emotional context, fairness, and long-term learning outcomes.

The AGI-levels framework is useful because it separates performance, generality, and autonomy rather than mixing everything into one label: Levels of AGI (Morris et al.).

7 Core Differences Between AI and Human Intelligence

1) Learning style: data-heavy optimization vs lived experience

Humans can often learn from fewer examples by combining memory, social cues, and previous knowledge. AI models generally require larger datasets and repeated training signals to become reliable across task variants.

That does not mean humans are always efficient or AI is always inefficient. It means the mechanisms differ. Human learning is embodied and socially embedded. Machine learning is statistical optimization.

Cognitive science has long highlighted this gap in sample-efficient concept formation: Building Machines That Learn and Think Like People.

2) Generalization: narrow excellence vs broad adaptability

AI already delivers outstanding narrow-domain results. AlphaFold transformed protein structure prediction workflows in biology: Nature (2021). GraphCast showed strong performance in medium-range weather forecasting: DeepMind publication.

Those are real breakthroughs. But they are not proof that one system can automatically transfer those abilities to unrelated tasks like negotiation, ethical conflict resolution, and public communication.

Concrete comparison: a hospital team can integrate diagnosis, family communication, policy constraints, and uncertainty in one decision flow. Current AI systems usually handle slices of that process, not the full context stack.

3) Speed and consistency vs context and intent

AI can process large volumes quickly and repeatedly. This is a major advantage in classification, summarization, retrieval, and pattern-heavy workflows.

The 2025 AI Index reports rapid capability and adoption growth while noting that complex reasoning remains a challenge in many settings: Stanford HAI AI Index 2025.

Example: AI can summarize 100 incident reports in minutes. A human operations lead still decides what is material, who is affected, and which action is ethically and legally appropriate.

4) Creativity type: recombination vs purpose-driven originality

AI can generate high-quality drafts, images, and code by recombining learned patterns. Humans also recombine ideas, but often with deeper purpose, social meaning, and accountability to consequences.

Comparison: an AI can write a persuasive apology email quickly. A person decides whether that message should be sent at all, how it will be received in a specific relationship, and whether the wording is ethically honest.

5) Reasoning under ambiguity and value conflict

AI works best when goals and metrics are explicit. Humans become more valuable as ambiguity rises and value tradeoffs become unavoidable.

The “jagged technological frontier” study found that AI assistance improved performance on tasks that fell within the model's capabilities but reduced it on a task that fell outside them: Jagged Technological Frontier (SSRN).

Example: AI can produce several policy options. Humans still decide which option is acceptable when communities carry different risks and benefits.

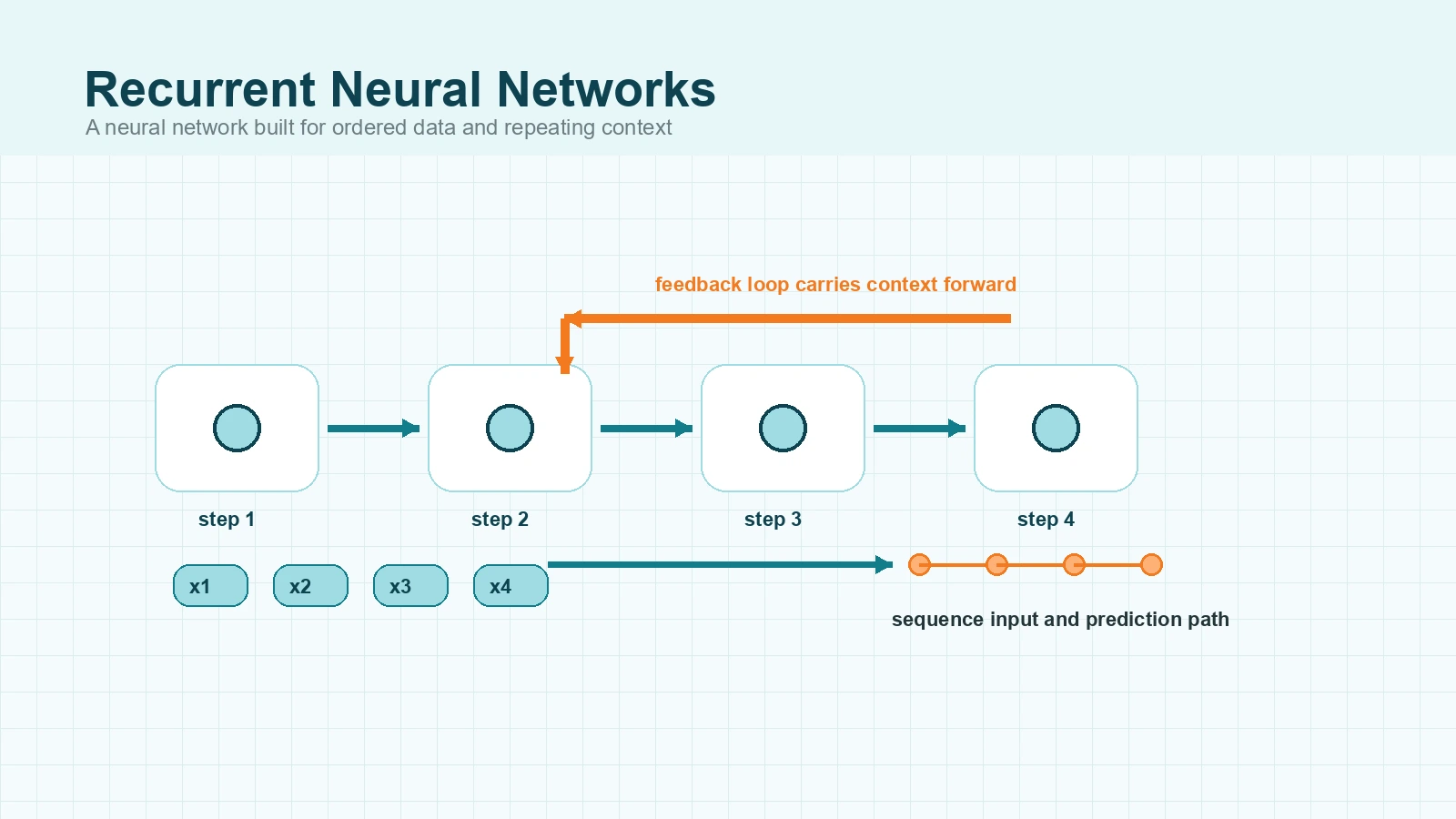

6) Memory and attention profile

Human working memory is constrained. A foundational review estimates a core short-term capacity of about four chunks in many tasks: Cowan (2001).

AI systems can work with long contexts and external retrieval. But longer context does not guarantee stable multi-step reasoning or factual reliability. A fluent answer can still contain hidden inconsistencies.

So this is not a “who has more memory” contest. It is two architectures with different strengths and different failure modes.

7) Agency and accountability

Humans can be morally and legally accountable. AI systems cannot bear responsibility in that sense. Responsibility remains with people and institutions that design, deploy, and govern those systems.

NIST’s AI Risk Management Framework is explicit that trustworthy AI requires four ongoing functions across the lifecycle, which it names Govern, Map, Measure, and Manage: NIST AI RMF 1.0.

Comparison: if an AI-supported hiring process causes unfair outcomes, accountability does not disappear into the model. It belongs to the organization and decision-makers.

5 Real Similarities Between AI and Human Intelligence

A useful comparison should also acknowledge overlap.

1) Both learn from feedback

Humans improve through coaching and reflection. AI models can be refined with human feedback signals, including reinforcement learning from human feedback methods: InstructGPT.

2) Both rely on pattern recognition

Humans detect patterns in language, social behavior, and environment. AI detects patterns in tokens, images, logs, and sensor data. In practice, both are doing signal extraction under uncertainty.

Example: in fraud review, AI can flag large-scale anomalies while human investigators evaluate motive, context, and consequences.

3) Both can make confident mistakes

Humans show bias and overconfidence. AI can produce confident but incorrect outputs. The shared lesson is process discipline: confidence should not be mistaken for correctness.

4) Both improve with structure

Clear goals, better feedback, and explicit evaluation improve both human and machine performance. Poorly scoped tasks degrade results for both.

Example: teams that define success criteria before using AI tend to get higher-quality outcomes than teams that accept first-pass fluent output without checks.

5) Both perform better in collaboration

Evidence from workplace studies supports this in context-specific ways. The NBER study of customer support agents found that access to a generative AI assistant raised productivity by about 14% on average, with the largest gains among novice and lower-skilled agents: Generative AI at Work.

This does not mean AI improves every task equally. It means hybrid design often outperforms all-or-nothing thinking.

AI Compared to Humans in Everyday Tasks

Most readers need practical choices, not abstract debate. Use this matrix.

| Task type | AI is usually stronger at | Humans are usually stronger at | Best mode |
|---|---|---|---|
| High-volume writing support | Draft speed, restructuring, first-pass variation | Audience fit, nuance, reputational judgment | AI draft, human final edit |
| Pattern-heavy analysis | Large-scale anomaly detection | Causal interpretation and tradeoff choices | AI signal, human decision |
| Education support | Instant explanation and practice generation | Mentorship, motivation, social-emotional context | AI tutor plus human teacher |
| High-stakes domains | Decision support and documentation assistance | Ethics, consent, legal responsibility | Human-led with AI support |

A concrete example in healthcare: AI can prioritize likely risk patterns quickly, but clinicians still integrate patient history, ethics, and legal accountability before final action.

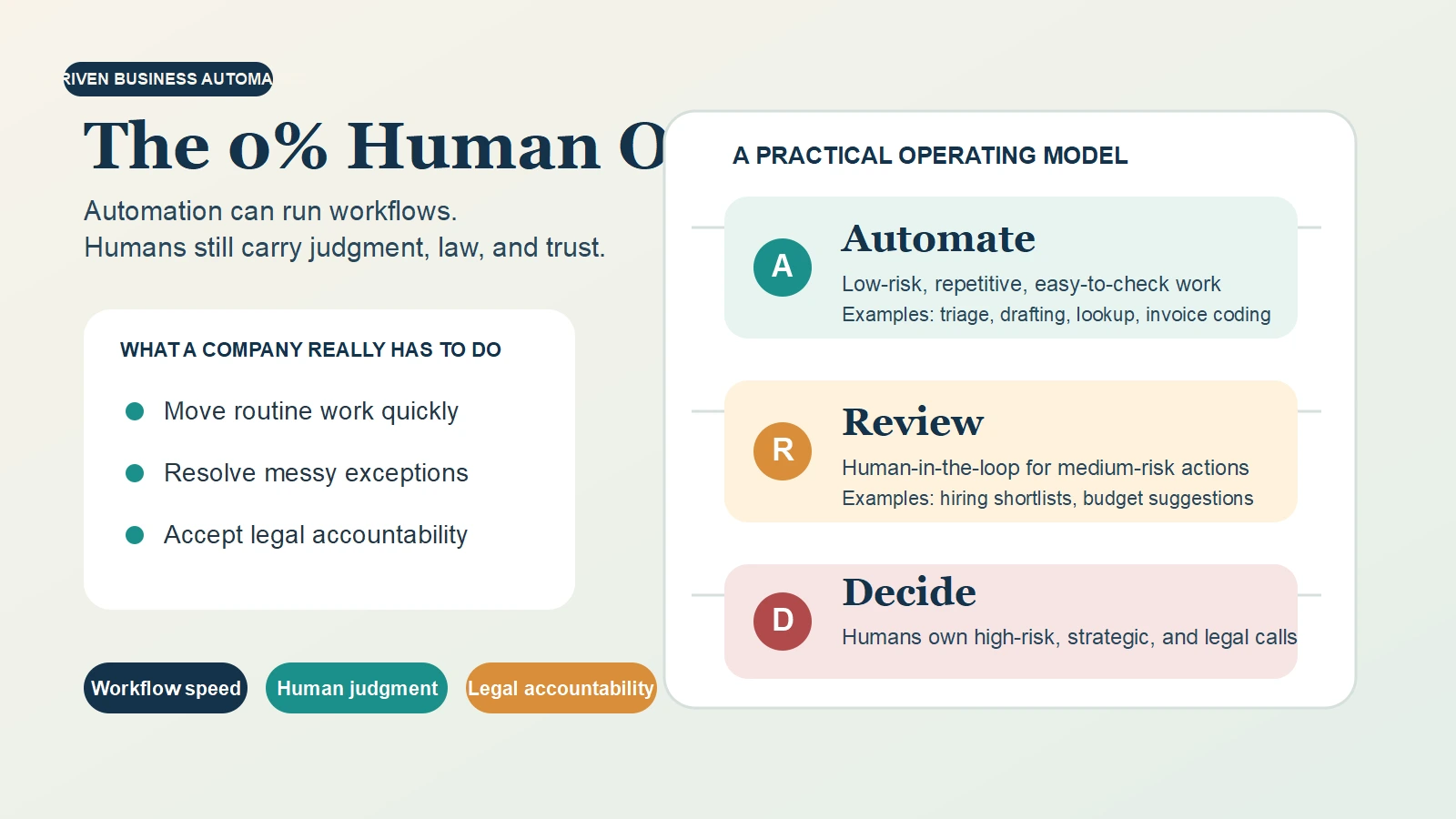

Human Intelligence and AI Together: A Practical Hybrid Model

For most knowledge work in 2026, hybrid intelligence is the most robust default.

A simple division of labor

- Use AI for retrieval, summarization, classification, and first-pass generation.

- Use humans for problem framing, value judgments, edge cases, and final accountability.

A decision rule you can apply today

- AI-led when tasks are repetitive, measurable, and low-risk.

- Human-led when stakes are high, ambiguity is high, or ethics/legal issues are central.

- Hybrid for most workflows that need both speed and judgment.
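If your team wants this rule in a checklist or intake form, it can be written down as a few lines of code. The sketch below is one possible encoding, not a standard: the `Task` fields, the `Mode` names, and the `choose_mode` function are all hypothetical, and the yes/no flags stand in for judgments that a person still has to make about each task.

```python
from dataclasses import dataclass
from enum import Enum


class Mode(Enum):
    AI_LED = "AI-led"
    HUMAN_LED = "human-led"
    HYBRID = "hybrid"


@dataclass(frozen=True)
class Task:
    """Hypothetical task profile; every field is an illustrative assumption."""
    repetitive: bool
    measurable: bool
    high_stakes: bool
    high_ambiguity: bool
    ethics_or_legal_central: bool


def choose_mode(task: Task) -> Mode:
    # Risk is checked first so that it always overrides convenience.
    if task.high_stakes or task.high_ambiguity or task.ethics_or_legal_central:
        return Mode.HUMAN_LED
    # Reaching this point means the task is low-risk; AI-led additionally
    # requires that it be repetitive and measurable.
    if task.repetitive and task.measurable:
        return Mode.AI_LED
    # Everything else needs both speed and judgment.
    return Mode.HYBRID


if __name__ == "__main__":
    ticket_tagging = Task(
        repetitive=True,
        measurable=True,
        high_stakes=False,
        high_ambiguity=False,
        ethics_or_legal_central=False,
    )
    print(choose_mode(ticket_tagging).value)  # AI-led
```

The ordering is the design choice that matters here: the human-led check runs before anything else, so a repetitive, measurable task that is also high-stakes never ends up AI-led.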

Common failure modes and fixes

- Automation bias: trusting polished output too quickly. Fix: mandatory verification checkpoints.

- Scope creep: using AI outside validated tasks. Fix: clear boundaries and escalation rules.

- No audit trail: unclear ownership of decisions. Fix: role-based approval logs and traceability.

This approach aligns with risk-based governance models such as NIST AI RMF.
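The three fixes above can also be wired into a single release step. The following is a minimal sketch under stated assumptions: `VALIDATED_TASKS`, `ApprovalLog`, `EscalationRequired`, and `release_output` are hypothetical names invented for illustration, and a real deployment would persist the log somewhere durable instead of keeping it in memory.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical allow-list of task types the AI has been validated for.
VALIDATED_TASKS = {"summarization", "classification"}


class EscalationRequired(Exception):
    """Raised when AI output is requested for a task outside the validated scope."""


@dataclass
class ApprovalLog:
    """In-memory, append-only record of who reviewed what (audit trail)."""
    entries: list = field(default_factory=list)

    def record(self, task_type: str, reviewer: str, role: str, approved: bool) -> None:
        self.entries.append({
            "task_type": task_type,
            "reviewer": reviewer,
            "role": role,
            "approved": approved,
            "timestamp": datetime.now(timezone.utc).isoformat(),
        })


def release_output(task_type, ai_output, reviewer, role, approved, log):
    # Scope boundary: anything outside the validated list is escalated,
    # not processed.
    if task_type not in VALIDATED_TASKS:
        raise EscalationRequired(f"'{task_type}' is outside validated scope")
    # Verification checkpoint: a named reviewer's decision is logged
    # whether or not the output is approved.
    log.record(task_type, reviewer, role, approved)
    # Only approved output is released; rejected output returns None.
    return ai_output if approved else None
```

In this sketch a polished draft cannot leave the workflow without a named reviewer and role attached to it, which is the point of all three fixes: the output is verified, the scope is enforced, and ownership is recorded.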

Governance and Ethics: Why This Comparison Matters in 2026

Capability growth is only half the story. Governance determines real-world safety and trust.

- OECD AI Principles emphasize human-centered, trustworthy AI: OECD.

- UNESCO’s AI ethics recommendation was adopted on November 23, 2021, creating a global normative reference: UNESCO.

- The EU AI Act is rolling out obligations in phases across 2025, 2026, and 2027: European Commission overview and implementation timeline.

As of March 11, 2026, with these obligations still phasing in, organizations need both technical capability and governance readiness. A strong model with weak oversight is still a weak system.

Final Thoughts

“AI vs human intelligence” sounds like a contest, but in practice it is a systems-design question.

AI is strongest where scale and speed dominate. Human intelligence is strongest where meaning, accountability, and value tradeoffs dominate. The best outcomes usually come from combining both intentionally, with clear boundaries and clear ownership.

If you keep one takeaway, use this: let AI expand your options, but keep human judgment in charge when consequences are real.

Sources

- Stanford HAI: 2025 AI Index Report

- NIST AI RMF 1.0

- Levels of AGI (arXiv)

- AlphaFold Nature paper

- GraphCast publication page

- Generative AI at Work (NBER)

- Jagged Technological Frontier (SSRN)

- Lake et al., Behavioral and Brain Sciences

- Cowan (2001), PubMed

- OECD AI Principles

- UNESCO AI Ethics Recommendation

- EU AI Act overview

- EU AI Act implementation timeline