The phrase “AI can read our scrambled inner thoughts” sticks because it sounds intimate, unsettling, and a little unbelievable. It also compresses several different breakthroughs into one headline. Researchers are now decoding inner speech from implanted brain signals, generating scene descriptions from fMRI activity, and reconstructing the gist of language from non-invasive scans. This article explains what those systems are actually reading, how close they are to usable thought-to-text technology, and why the biggest story is not sci-fi mind reading but a new frontier in accessibility, neuroscience, and mental privacy.

What “scrambled inner thoughts” really means

The most important clarification is that thoughts are not stored in the brain like subtitles waiting to be copied out.

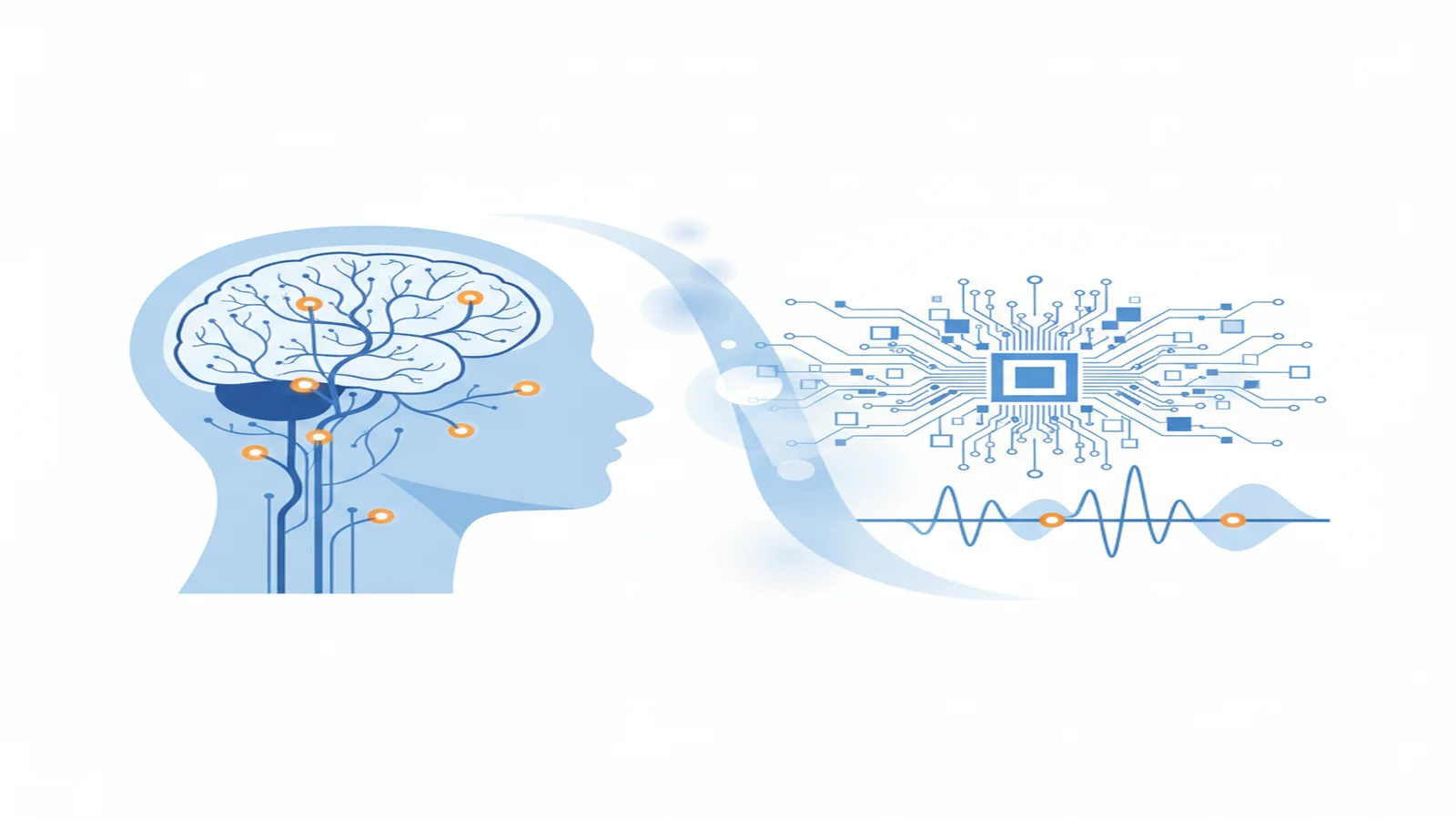

When researchers talk about inner speech AI, they usually mean systems that decode the silent words a person is trying to say in their head. Mind captioning means something different: turning brain activity into a short text description of what someone is seeing, remembering, or imagining. Neural decoding is broader still. It refers to methods that map brain signals to meaning, intention, or language.

Those categories overlap, but they do not point to the same technical problem.

The word “scrambled” makes sense because the signal itself is mixed and indirect. In an fMRI study, scientists measure changes in blood oxygenation, not words. In an implant study, they record electrical activity from neurons involved in movement or speech planning, not a complete feed of subjective thought. A 2024 Nature Human Behaviour study showed that internal speech has detectable structure at the single-neuron level, which helps explain why the field is advancing. But even there, the signal is partial, task-dependent, and distributed across systems.

An everyday comparison helps. Speech-to-text starts with a direct external signal: sound. Thought decoding starts with traces of planning, meaning, imagery, or recall. It is less like copying a transcript and more like rebuilding a sentence from scattered clues.

That is why the current wave of AI mind reading headlines is simultaneously too strong and not strong enough. Too strong, because these systems do not read everything. Not strong enough, because the headlines miss how different kinds of internal content are starting to become legible in different ways.

How scientists are turning neural activity into text

The fastest way to understand the field is to look at three concrete examples side by side.

Inner speech decoding is reading speech intent, not every thought

In the 2025 Cell paper “Inner speech in motor cortex and implications for speech neuroprostheses”, Stanford-led researchers worked with four BrainGate2 participants who had severe dysarthria. Using microelectrode arrays implanted in the motor cortex, the team recorded neural activity while participants produced self-paced imagined sentences.

That detail matters. The system was not scanning for any hidden thought. It was decoding speech-related neural activity from a person who was intentionally trying to communicate.

The study showed real-time decoding from both a smaller vocabulary and a large open vocabulary. It also introduced user-control ideas that deserve more attention than they usually get. The authors described a keyword-based strategy to unlock decoding and an imagery-silenced training mode to reduce false outputs during inner speech. In other words, the work did not treat privacy and agency as optional add-ons.
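The keyword-unlock idea can be illustrated with a toy gate. This is a minimal sketch under stated assumptions, not the authors' implementation: the class name `GatedDecoder`, the two keywords, and the re-lock step are all hypothetical, and the "decoded words" are plain strings standing in for the output of a real neural decoder.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class GatedDecoder:
    """Toy gate: decoded words are released only after an unlock keyword."""
    unlock_keyword: str   # hypothetical phrase the user imagines to start output
    lock_keyword: str     # hypothetical phrase that stops output (an assumption)
    unlocked: bool = False
    released: List[str] = field(default_factory=list)

    def feed(self, decoded_word: str) -> None:
        word = decoded_word.lower()
        if not self.unlocked:
            # While locked, everything is discarded except the unlock keyword.
            if word == self.unlock_keyword:
                self.unlocked = True
            return
        if word == self.lock_keyword:
            self.unlocked = False
            return
        self.released.append(word)


def run_demo() -> List[str]:
    gate = GatedDecoder(unlock_keyword="openchannel", lock_keyword="closechannel")
    stream = ["private", "musing", "openchannel", "i", "need", "water",
              "closechannel", "more", "private"]
    for word in stream:
        gate.feed(word)
    return gate.released


print(run_demo())  # ['i', 'need', 'water']
```

The design point is that words decoded outside the unlocked window are dropped rather than buffered, so nothing decoded while the gate is closed can surface later.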

The best plain-language comparison is a speech prosthesis. This line of thought-to-text technology is aimed at translating intended utterances from neural speech signals, not exposing a person’s entire mental stream.

Mind captioning works more like semantic scene description

The 2025 Science Advances paper “Mind captioning: Evolving descriptive text of mental content from human brain activity” used fMRI rather than implants. Researchers recorded whole-brain activity while six participants watched video clips and later recalled or imagined them. The system decoded semantic features from those brain patterns and used a language-generation process to build descriptions that matched the underlying scene.

This is one reason recent reporting has leaned on the phrase mind captioning. The model is not transcribing spoken words. It is generating caption-like descriptions from brain activity tied to perception or memory.

For viewed scenes, the paper reports about 50 percent accuracy when choosing the correct description from 100 candidate texts, compared with a 1 percent chance level. Recall was harder, but stronger participants still approached 40 percent accuracy among 100 candidates. That is not perfect language generation, but it is far beyond random guessing.
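To make the 100-candidate figure concrete: with 100 candidates, chance is 1/100, so 50 percent accuracy is about 50 times chance and 40 percent is about 40 times chance. The sketch below shows one common way such an identification score can be computed, by checking whether the decoded feature vector is most similar to the correct candidate. It is an illustration under assumptions, not the paper's pipeline: the feature vectors are random numbers, cosine similarity is an assumed matching rule, and the function names are hypothetical.

```python
import math
import random


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def identification_accuracy(decoded, candidates):
    """Fraction of trials where the true candidate (same index) is the top match."""
    hits = 0
    for i, vec in enumerate(decoded):
        scores = [cosine(vec, cand) for cand in candidates]
        best = max(range(len(candidates)), key=scores.__getitem__)
        hits += (best == i)
    return hits / len(decoded)


def simulate(n_candidates=100, dim=32, noise=1.0, seed=0):
    """Simulated decoding: each decoded vector is its true candidate plus noise."""
    rng = random.Random(seed)
    candidates = [[rng.gauss(0, 1) for _ in range(dim)] for _ in range(n_candidates)]
    decoded = [[x + rng.gauss(0, noise) for x in cand] for cand in candidates]
    return identification_accuracy(decoded, candidates)


chance_level = 1 / 100                      # one correct answer among 100 candidates
print("chance:", chance_level)
print("noisy decoding:", simulate(noise=2.0))
print("noise-free decoding:", simulate(noise=0.0))
```

Raising `noise` pushes the simulated score toward chance, which is a rough analogue of why recall, with its weaker signal, scores lower than direct viewing.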

The right comparison is not “AI now reads minds like a diary.” It is “AI can sometimes infer the best matching scene description from brain-based semantic traces.”

Non-invasive semantic decoding shows the importance of cooperation

The 2023 Nature Neuroscience paper “A semantic decoder for language from non-invasive brain recordings” pushed another frontier. After roughly 16 hours of subject-specific training, the decoder could reconstruct the gist of perceived speech, imagined stories, and even some silent-video content from fMRI activity.

That result drew wide attention, and for good reason. It showed that a non-invasive system could recover meaningful language-level information from brain recordings.

But the same paper also supplied one of the best reality checks in the field: the decoder required subject cooperation. The authors note that it can only be trained with a participant’s willing help and that resistance methods can interfere with decoding. That is a crucial detail for anyone worried that AI thought decoding already works like secret surveillance.

Put those three studies together and a clearer picture appears:

- inner-speech systems decode speech-related intent

- mind-captioning systems decode scene-level semantic content

- non-invasive semantic decoders recover gist-level language with cooperation

That is real progress. It is also very different from saying AI can freely read any thought.

What these systems can actually do today

Current systems are much better at recovering structured fragments than at producing exact inner transcripts.

That distinction gets lost because “mind reading” sounds cleaner than “statistical reconstruction of narrow internal signals under controlled conditions.” But the second phrase is closer to the science.

Take the difference between speech recognition and thought decoding. Speech recognition listens to explicit words already present in sound. Brain-based decoders usually infer a probable meaning from indirect signals. The result may preserve the gist while missing the exact wording. A mind-captioning model may identify the right scene description from many candidates without generating a polished sentence from scratch. An inner-speech prosthesis may decode intended words from speech motor signals without revealing unrelated beliefs or memories.

Three limits still define the state of the art.

First, these systems are task-bound. They work in settings such as imagined speech, story listening, or scene recall, not on unconstrained private thought.

Second, they are person-specific. Many of the strongest results depend on training a model to one participant’s signal patterns.

Third, they are hardware-intensive. The headline-grabbing breakthroughs rely on implanted arrays or fMRI scanners, not lightweight consumer devices.

So the fairest interpretation is this: AI can sometimes read our scrambled inner thoughts in the sense that it can recover pieces of inner language or semantic content from neural activity. It still cannot read the whole private mind on demand.

Why the accessibility case matters more than the hype

The most serious reason to care about this research is communication, not spectacle.

The Cell inner-speech study focused on people with severe dysarthria. For them, decoding speech-related neural intent could become a practical route to expression when spoken language is no longer reliable. That is a very different story from a headline about futuristic telepathy.

A concrete comparison helps here. Existing assistive tools such as eye-tracking keyboards or switch systems can be life-changing, but they are often slower and more effortful than natural speech. A successful speech neuroprosthesis could shorten the path from intention to text or speech output.

Mind captioning points to a related possibility. If a system can translate brain activity into rough descriptions of what someone is seeing or imagining, it may eventually support people who struggle to express nonverbal mental content through ordinary language. The authors of that study mention possible future relevance for language disabilities such as aphasia. That is promising, but it is still early-stage science, not a finished accessibility product.

This balance matters. The assistive promise is real enough to take seriously and early enough to discuss carefully.

The privacy question is now practical

Once researchers can convert meaningful parts of neural activity into text, mental privacy becomes a design problem, a legal problem, and a governance problem.

That does not mean the current systems are ready for covert mind reading. Far from it. Today’s strongest examples still require surgery, large scanners, long calibration, or active cooperation. In the semantic-decoder study, willing participation was necessary for training, and deliberate resistance could interfere with decoding. In the inner-speech study, the system included an unlock keyword and suppression methods to reduce unintended decoding.

Still, the trajectory matters. A 2024 Neuron review on cognitive biometrics and mental privacy argues that the privacy debate should not wait until decoding systems become effortless. As AI systems learn more from neural and adjacent biological data, the ability to infer language, intention, or cognitive state may expand well beyond the current lab setup.

The practical questions are straightforward:

- Does the user have to opt in?

- Can the user stop or gate decoding?

- Is the model limited to a chosen task?

- How is brain-derived data stored and reused?

Those questions belong in the same conversation as accuracy numbers.
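One way to keep those four questions next to the accuracy numbers is to treat them as an explicit checklist in software. The sketch below is purely illustrative: `DecodingPolicy`, its fields, and `unmet_safeguards` are hypothetical names, not part of any real brain-computer interface toolkit or any of the studies discussed here.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class DecodingPolicy:
    """Hypothetical settings for one decoding session (all names are illustrative)."""
    user_opted_in: bool              # did the user explicitly agree?
    user_can_pause: bool             # can the user stop or gate decoding?
    allowed_task: Optional[str]      # e.g. "intended speech"; None means unrestricted
    retention_days: Optional[int]    # None means data is kept indefinitely
    reuse_requires_consent: bool     # must the user approve secondary uses?


def unmet_safeguards(policy: DecodingPolicy) -> List[str]:
    """Return the checklist questions this policy fails to answer well."""
    problems = []
    if not policy.user_opted_in:
        problems.append("no explicit opt-in")
    if not policy.user_can_pause:
        problems.append("user cannot stop or gate decoding")
    if policy.allowed_task is None:
        problems.append("decoding is not limited to a chosen task")
    if policy.retention_days is None or not policy.reuse_requires_consent:
        problems.append("storage or reuse of brain-derived data is unbounded")
    return problems


careful = DecodingPolicy(True, True, "intended speech", 30, True)
print(unmet_safeguards(careful))  # []
```

A session would only start when the returned list is empty, which makes each safeguard a precondition rather than an afterthought.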

What to watch next in AI and neuroscience

If you want to follow this field intelligently, watch the bottlenecks rather than the headlines.

One bottleneck is portability. fMRI is powerful, but it is expensive and slow. Implants can be precise, but they require surgery. A major next step will be improving decoding with less burdensome hardware.

Another is generalization. Today’s systems still learn heavily from one person at a time. A big advance would be a decoder that transfers more cleanly across users or tasks.

The third is user control. The best future brain-computer interface systems will not just decode more mental content. They will let people decide when decoding happens, what type of signal is in scope, and how the output is used.

That is why the future of AI and neuroscience will depend on both model quality and human safeguards. The science is strong enough to demand attention. It is not yet mature enough to justify sensational language.

Where to go next

If this topic matters to you, the next useful step is to read across the field rather than around one headline. Start with how brain-computer interfaces work, what fMRI can and cannot tell us, and mental privacy in the age of AI. That broader view is what turns a striking research story into durable understanding.