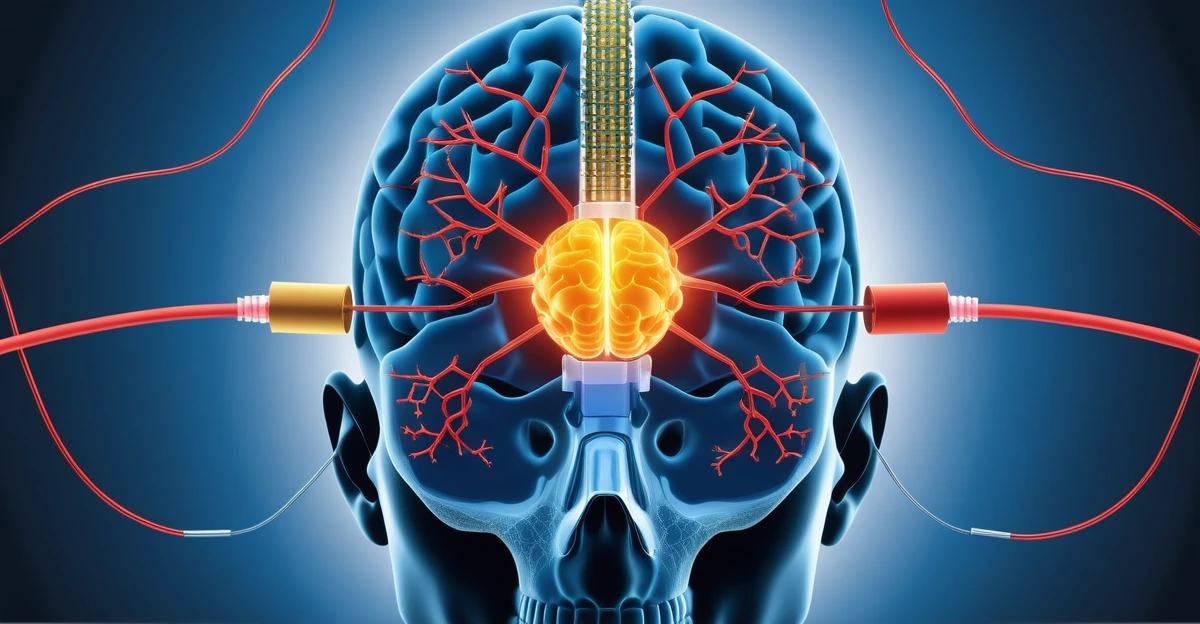

What if your tools could keep up with your intent before it faded? That question sits underneath the excitement around brain-computer interfaces, or BCIs. People often jump straight to the dramatic version: mind-controlled apps, silent typing, or instant capture of thought. Those ideas may arrive in limited forms over time. The deeper lesson is already useful now. The best interfaces reduce the distance between intention and action.

That matters to founders and knowledge workers because research work is full of translation loss. A good question turns into ten tabs. Ten tabs turn into scattered highlights, copied quotes, AI summaries, and half-finished notes. By the time you need to brief a team or write a memo, the source is harder to find and the reasoning is harder to trust.

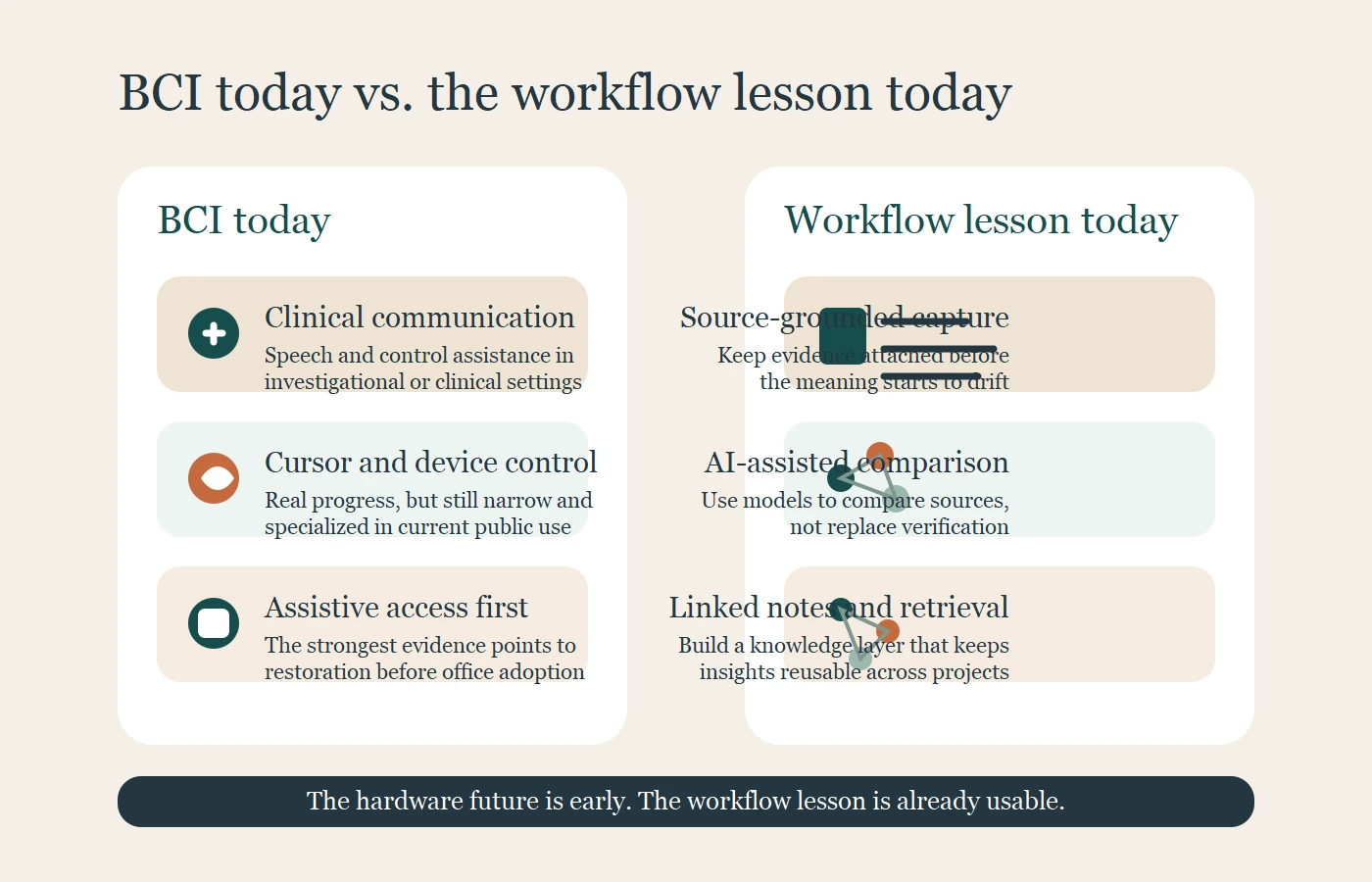

As of March 8, 2026, public BCI progress is real, but still early. A 2025 review in Nature Reviews Bioengineering identified 28 implantable BCI clinical trials and 67 participants through 2023. Companies such as Neuralink and Synchron are pushing the field forward, yet current public use cases still center on communication, cursor control, and device access in investigational or clinical settings. That is important progress. It is not the same thing as everyday office workers replacing keyboards next quarter.

That is why this topic is worth taking seriously without overselling it. BCIs give us a clean way to think about the future of productivity. They ask what happens when the interface preserves more of the original signal and adds less friction on the way to action.

For most teams, the practical version of that future is not an implant. It is AI note-taking for research that keeps sources visible, helps compare ideas across a bounded set of material, and turns raw reading into reusable knowledge. In other words, the neural handshake is already starting to show up in well-designed AI research workflows.

Why brain-computer interfaces matter to knowledge work

A brain-computer interface is a system that translates brain activity into commands for an external device. In clinical contexts, that can mean communication support, cursor movement, environmental control, or basic interaction with personal devices when normal motor pathways are impaired.

At first glance, that seems far removed from a founder reviewing market reports or a strategist comparing papers and policy notes. But the underlying design challenge is similar. In both cases, the system has to preserve intent across noisy inputs and turn that signal into something useful.

That is why BCIs matter beyond the clinic. They show that the future of productivity will not be defined only by faster typing or prettier dashboards. It will be defined by how well tools carry intent through a workflow without losing context.

For knowledge work, the bottleneck is usually not idea generation. It is the handoff between stages:

- A question appears.

- Sources are collected.

- Notes are created.

- A claim is formed.

- The claim is used in a decision, memo, or draft.

Every break in that chain creates waste. You end up re-reading, re-checking, or redoing work that should have compounded.

What the field can do today and what it still cannot do

Real progress is happening in clinical settings

It is a mistake to treat BCIs as fantasy. Synchron’s public materials show a clear investigational goal: help people with severe motor impairment control digital devices hands-free. Neuralink’s patient brochure frames its early work around people with major unmet medical need while also signaling a broader long-term ambition around human-AI interaction.

That tells us two important things. First, there is real technical progress. Second, the strongest use cases today are still assistive and clinical. This is the difference between taking the field seriously and turning it into hype.

Mainstream productivity use is still early

The same evidence also tells us to stay disciplined. Trial counts remain small. Long-term durability, safety, calibration burden, user training, and regulation still matter. Many current systems are investigational. Even if performance improves quickly, everyday knowledge workers already have cheaper and safer ways to interact with software.

The responsible reading is straightforward: mainstream BCI productivity is possible in the long run, but current public evidence supports medical restoration first and broader workplace adoption later. That is an inference from today’s trial and company materials, not a firm timetable.

Privacy and autonomy are part of the product question

WHO and UNESCO both frame neurotechnology as a governance issue as well as a technical one. Privacy, agency, dignity, and freedom of thought are not side notes. They are adoption constraints.

This matters because any interface that moves closer to the mind also moves closer to highly sensitive data. The more personal the signal, the higher the trust requirement. That lesson already applies to today’s AI tools. If a workflow handles strategic research, customer conversations, or internal analysis, data discipline matters before any discussion of future neural interfaces begins.

The real lesson of BCI is lower cognitive friction

Productivity is a handoff problem

Most knowledge workers are not blocked by raw input speed. They are blocked by the number of handoffs a thought has to survive. A useful question becomes a search. The search becomes a reading list. The reading list becomes highlights. The highlights become a rough synthesis. Then the original purpose gets blurred.

That is why so many systems feel productive in the moment and weak a week later. They create artifacts, but they do not preserve meaning.

The best systems preserve context, not just speed

BCIs are difficult because signals are noisy, context matters, and the system has to keep learning what the user means. Knowledge work is different, but the same rule holds. If the interface strips away context, the output becomes less trustworthy.

In research, context usually means:

- where the claim came from

- what the source actually said

- what interpretation you added

- what question the note was meant to answer

- where the idea could be used next

This is why fast summaries are not enough. A polished answer without a source trail is a fragile asset.

The neural handshake is a design principle

The most useful way to interpret the phrase neural handshake is as a design principle. A good system should preserve as much of the user’s intent as possible while reducing unnecessary translation. It should help the person think with the tool, not fight the tool.

That principle is what makes brain-computer interface productivity a useful topic even before BCIs become normal workplace hardware. You can apply the lesson right now by designing workflows that stay close to the source, clarify the meaning of a note, and keep the next action visible.

The practical version today is AI note-taking for research

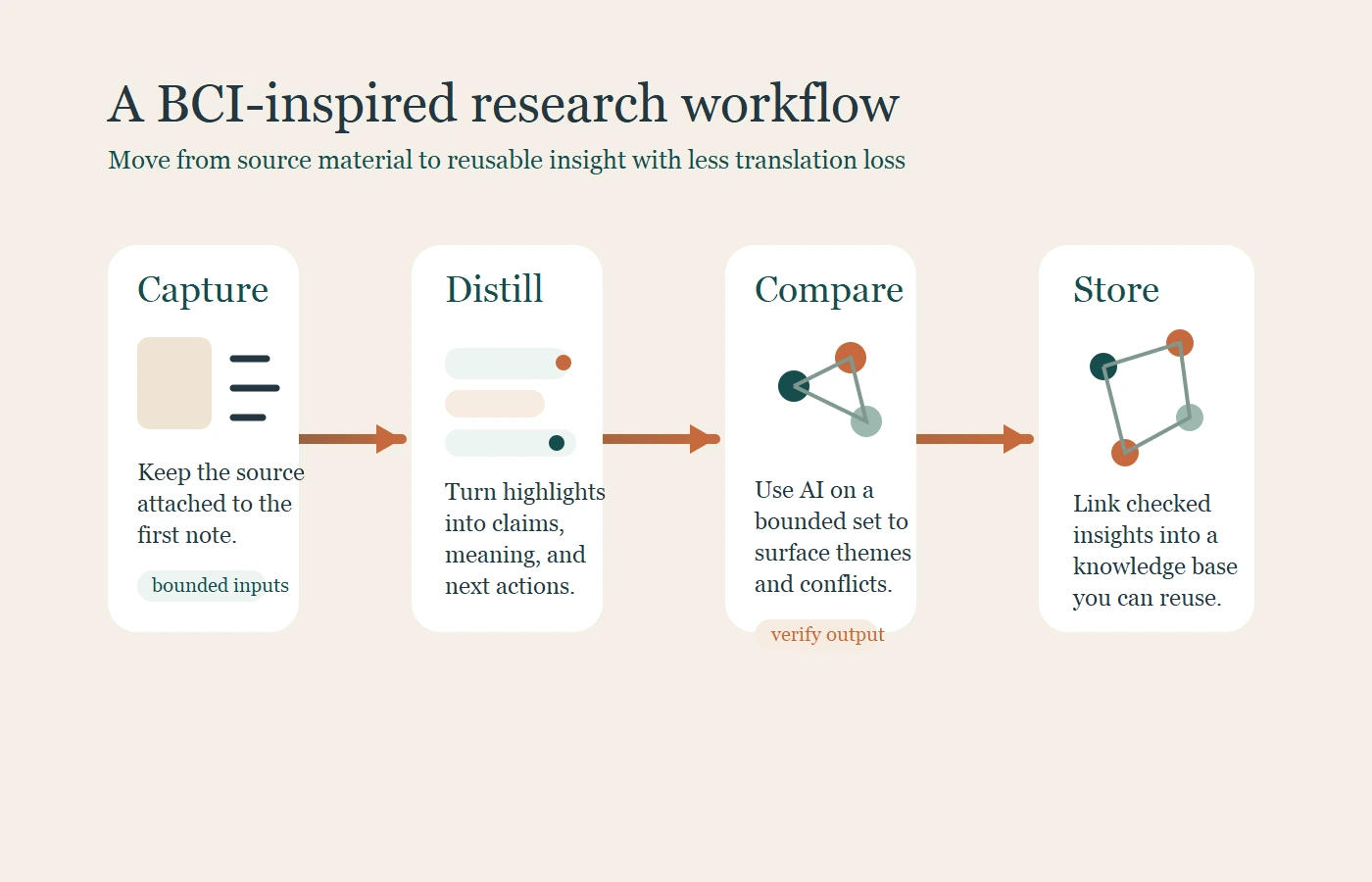

Capture the source before the idea drifts

The first move is simple: start with the source, not the summary. If you cannot trace a note back to the paper, report, interview, or article that produced it, you are already carrying risk.

This is where AI note-taking for research differs from generic note capture. NotebookLM is useful when you want to work from a bounded set of material and ask grounded questions against static copies of those sources. Zotero is useful when you need citation control, PDF reading, and annotations that remain attached to the source context. Both support the same habit: preserve evidence before interpretation drifts.

For a founder, that may mean loading a tight source set around a market question instead of asking an open-ended chatbot to summarize an entire category from memory. That sounds less magical, but it is much more useful.

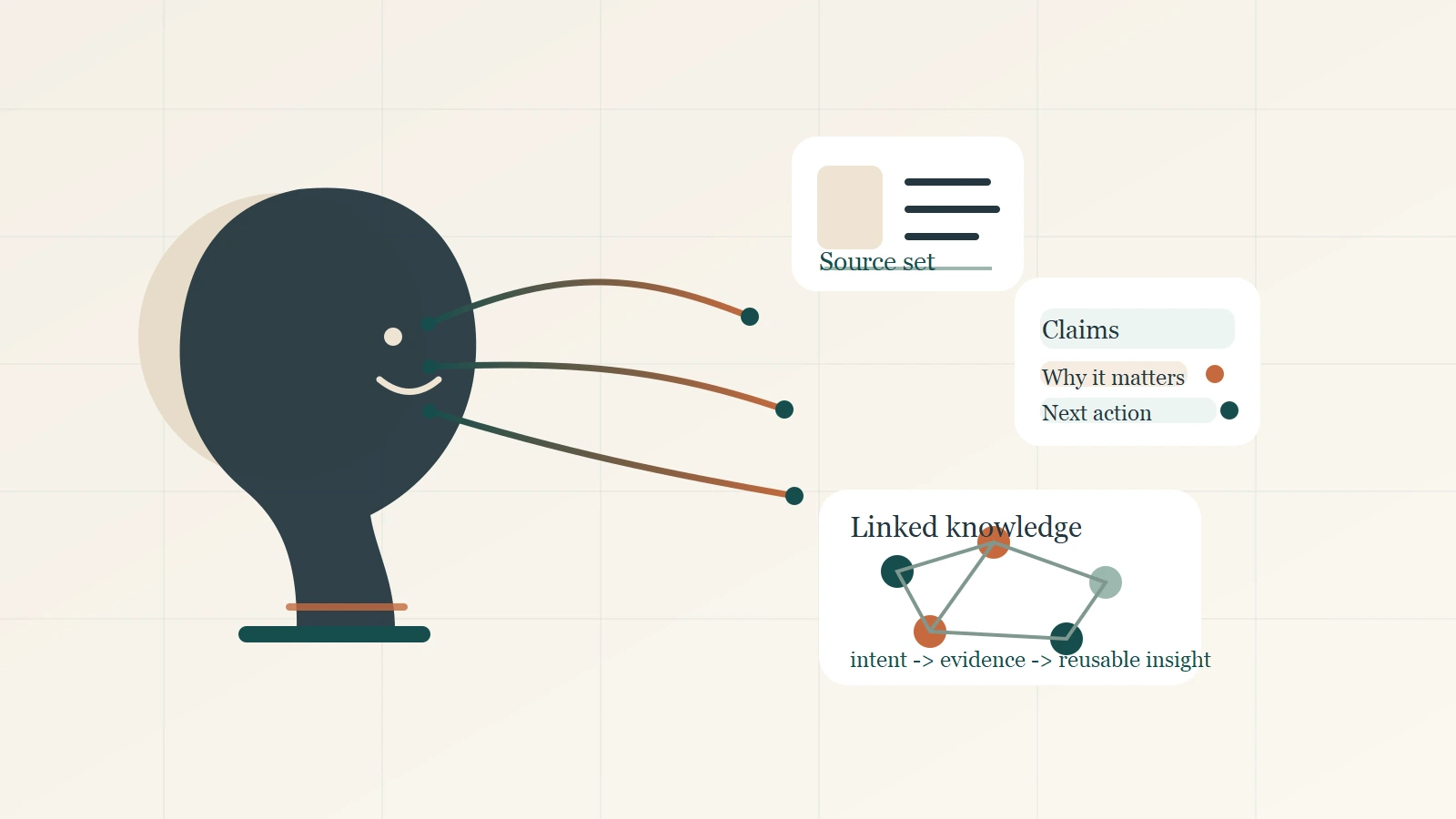

Distill notes into claims you can reuse

Raw highlights are not knowledge. They are inputs. The durable part comes when you rewrite the point in plain language and explain why it matters.

A practical note often fits four fields:

- Source

- Claim

- Why it matters

- Next action

That structure works because it forces a decision. Are you storing evidence, interpretation, or a task? Once that is clear, the note becomes portable.

Source: 2026 market analysis on AI adoption in operations

Claim: Teams gain more value from AI after they standardize the workflow around review and evidence handling.

Why it matters: Process clarity matters more than adding one more tool.

Next action: Audit where research context gets lost between reading and decision-making.

This is the part most note-taking tools underserve. They are good at capture, but weaker at forcing clear thinking. AI can help compress wording, compare similar notes, or suggest themes. You still need to decide which claim is worth keeping.
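As a minimal sketch, the four-field note from this section can be expressed as a small data structure. The class name `ResearchNote` and its helper methods are hypothetical, chosen for illustration only; they do not belong to any of the tools mentioned in this article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ResearchNote:
    """Hypothetical four-field note: source, claim, why it matters, next action."""
    source: str          # where the claim came from
    claim: str           # the point, rewritten in plain language
    why_it_matters: str  # the interpretation you added
    next_action: str     # where the idea gets used next

    def missing_fields(self) -> list[str]:
        """Return the names of any fields that were left blank."""
        return [name for name, value in vars(self).items() if not value.strip()]

    def is_portable(self) -> bool:
        """Treat a note as portable only when all four fields are filled in."""
        return not self.missing_fields()


# The example note from this section, stored as a structured record.
note = ResearchNote(
    source="2026 market analysis on AI adoption in operations",
    claim=(
        "Teams gain more value from AI after they standardize the workflow "
        "around review and evidence handling."
    ),
    why_it_matters="Process clarity matters more than adding one more tool.",
    next_action="Audit where research context gets lost between reading and decision-making.",
)

# A half-finished note is flagged instead of silently entering the archive.
draft = ResearchNote(source="", claim="Placeholder claim", why_it_matters="Unclear", next_action=" ")
```

Checking `draft.missing_fields()` before filing a note is one way to force the decision described above: a note without a source or a next action is not yet ready for reuse.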

Use AI for comparison, not blind trust

The safest place to use AI aggressively is after you have bounded the material. Once the source set is controlled, ask the model to compare arguments, cluster themes, surface contradictions, or flag gaps.

That is where AI in a research workflow becomes a force multiplier. The tool is no longer pretending to know everything. It is helping you work faster on material you can inspect.

For an AI literature review, Elicit is useful because it now organizes work around distinct research workflows and semantic search rather than a single generic chat box. NotebookLM is useful when you already have the source set and want grounded comparison. The better question is not "Which tool thinks for me?" It is "Which tool reduces friction without breaking the evidence chain?"

Store finished insights in a knowledge management layer

A note is only valuable if it can be retrieved later with its meaning intact. That is where knowledge management matters.

Obsidian and other linked-note systems become useful after the reading stage. One note about BCI governance can link to another on AI trust. A third note on product strategy can pull both together. This is how isolated reading turns into a reusable knowledge base.
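To make the linking idea concrete, here is a rough sketch that builds a backlink index from plain-text notes. It assumes notes use wiki-style `[[Note title]]` links, the internal-link syntax Obsidian supports. The function `build_backlinks` and the three sample notes are hypothetical; this is not Obsidian's API, only an illustration of how linked notes become navigable.

```python
import re
from collections import defaultdict

# Matches [[Target]], [[Target|alias]] and [[Target#heading]]; captures only the target title.
WIKILINK = re.compile(r"\[\[([^\]|#]+)(?:[|#][^\]]*)?\]\]")


def build_backlinks(notes: dict[str, str]) -> dict[str, set[str]]:
    """Map each linked note title to the set of note titles that link to it."""
    backlinks: dict[str, set[str]] = defaultdict(set)
    for title, body in notes.items():
        for target in WIKILINK.findall(body):
            backlinks[target.strip()].add(title)
    return dict(backlinks)


# Hypothetical notes mirroring the example in the paragraph above.
notes = {
    "BCI governance": "Privacy and agency are adoption constraints. See [[AI trust]].",
    "AI trust": "The more personal the signal, the higher the trust requirement.",
    "Product strategy": "Pulls together [[BCI governance]] and [[AI trust|trust]].",
}

backlinks = build_backlinks(notes)
for target, sources in sorted(backlinks.items()):
    print(f"{target} <- {sorted(sources)}")
```

A note that nothing links to, such as "Product strategy" here, does not appear in the index at all, which is a quick signal that an insight has not yet been connected to anything else.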

If your system makes capture easy but retrieval hard, it is incomplete. A strong workflow lets you answer three questions fast:

- What did this come from?

- What does it mean?

- Where could I use it next?

A BCI-inspired AI research workflow stack

The most practical way to evaluate a stack is by job, not by brand category.

| Layer | Best job | Example tools | Why it fits |
|---|---|---|---|
| Source-grounded capture | Keep evidence attached to the note | Zotero, NotebookLM | Protects traceability from the start |
| Comparison and synthesis | Compare sources and surface patterns | NotebookLM, Elicit | Works best on bounded material |
| Durable knowledge layer | Store clarified insights for reuse | Obsidian | Makes retrieval and linking part of the workflow |

Source-grounded capture tools

Use source-first tools when the cost of losing context is high. This matters for strategy research, technical reviews, policy scans, and anything that may feed a public claim.

AI literature review and synthesis tools

Use AI after the material is constrained. This is where the model can help with speed while you keep control over trust.

Long-term note-taking tools and retrieval

Treat the knowledge base as the home for checked insights, not as a dumping ground for every highlight. A smaller set of clarified notes usually beats a larger archive of clutter.

What founders should do before BCIs arrive at work

Standardize note structure

If research matters in your company, standardize the unit of knowledge. A simple template is enough:

- source

- claim

- why it matters

- next action

This creates cleaner handoffs across people and projects.

Separate evidence from interpretation

Do not let quotes, summaries, and opinions blur together. Label them. That one habit improves trust more than adding another AI layer.
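One way to make that labeling habit explicit is to tag every passage with its kind. The sketch below is an assumption about how a team might do this; the names `Kind`, `Passage`, and `unsourced_evidence` are hypothetical and not taken from any tool named in this article.

```python
from dataclasses import dataclass
from enum import Enum


class Kind(Enum):
    QUOTE = "quote"      # the source's exact words
    SUMMARY = "summary"  # your paraphrase of the source
    OPINION = "opinion"  # your own interpretation


@dataclass(frozen=True)
class Passage:
    kind: Kind
    text: str
    source: str = ""  # may stay empty only for opinions


def unsourced_evidence(passages: list[Passage]) -> list[Passage]:
    """Return quotes and summaries that have no source attached."""
    return [
        p for p in passages
        if p.kind is not Kind.OPINION and not p.source.strip()
    ]


passages = [
    Passage(Kind.QUOTE, "Privacy and agency are adoption constraints.", source="Governance guidance"),
    Passage(Kind.SUMMARY, "Trial counts remain small."),  # paraphrase with no source recorded
    Passage(Kind.OPINION, "We should standardize our note template first."),
]

flagged = unsourced_evidence(passages)
```

Here only the unsourced summary is flagged. Opinions are allowed to stand without a source because they are labeled as interpretation rather than evidence.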

Build for trust before automation

This is the deeper lesson from BCI. Better interfaces are not just faster. They are better at preserving signal. If you build that standard into your current research stack, you will be ready for more advanced tools later. If you ignore it, a stronger interface will only scale confusion.

The point is not to wait for neurotechnology. The point is to behave as if interface quality already matters, because it does. Teams that can move from source to claim to action with less friction will learn faster than teams that collect more information without a system.

Source notes

- WHO publication page on neurotechnology for health and well-being for the public-health and governance framing.

- UNESCO guidance on neurotechnology governance for privacy, dignity, and freedom of thought concerns.

- Nature Reviews Bioengineering review of implantable BCI trials for the current scale of clinical progress.

- Neuralink PRIME Study brochure for the company’s early patient-facing framing.

- Synchron technology page for the assistive device-control use case.

- NotebookLM Help for static source copies and bounded-source workflow details.

- Google Blog on NotebookLM for source-grounded responses with citations and relevant quotes.

- Zotero PDF reader and note editor docs for annotation-to-note traceability.

- Elicit support docs for workflow-specific literature review support.

- Obsidian internal links docs and graph view docs for connected knowledge management.

Final takeaway

The future of BCI is not only a hardware story. It is a story about how little friction should exist between a human intention and a useful action. That is why it belongs in the same conversation as AI note-taking for research.

You do not need an implant to apply the lesson. Tighten the workflow you already control. Keep each note tied to a source. Rewrite evidence into clear claims. Use AI to compare, not to guess. Then store finished insights where they can compound.

If you want a simple next step, run one active project through a four-field note template this week: source, claim, why it matters, next action. That is a practical version of the neural handshake you can use right now.