AI is no longer just a technical story. It helps people write, compare options, study, brainstorm, and plan. So the real question is not only what AI can do. It is what happens to human thinking when we start thinking with it.

This guide explains where AI strengthens work, where it weakens judgment, what research says about creativity and decision-making, and how to use AI without outsourcing your mind.

What “Mind and AI” really means

When people hear AI, they often picture automation. But the bigger shift is cognitive. AI now helps organize thought before a human decides. It helps search, draft, compare, summarize, and simulate.

We have seen lighter versions of this before. A calculator changed arithmetic. GPS changed navigation. Search changed recall. Generative AI goes further because it does not only retrieve information. It creates candidate answers, outlines, and explanations. Instead of facing a blank page, you face a first version. A 2024 Nature Human Behaviour review on generative AI and learning is useful here because it treats AI as something that changes the learning environment, not just the speed of output.

That changes the human job. The hard part is often no longer starting. It is asking a better question, spotting weak logic, and checking what deserves trust. Students, founders, and designers using AI all face the same new task: judging polished suggestions.

How AI changes human thinking

A useful phrase from cognitive science is cognitive offloading. It means moving mental work to an external aid. A grocery list does this. So does a reminder app. AI extends that habit into harder tasks such as summarizing a document, clustering notes, or producing a first draft.

That can be genuinely helpful. In “Generative AI at Work,” researchers Erik Brynjolfsson, Danielle Li, and Lindsey Raymond found that a generative AI assistant increased productivity for customer support agents, with the biggest gains going to less experienced workers. One reasonable inference is that AI can spread expert patterns faster than traditional apprenticeship alone.

But AI shifts effort rather than removing it. If the model drafts first, the human must do more second-pass thinking: comparing, rejecting, and verifying. That is valuable only if the person still does it. If not, offloading becomes surrender.

Consider a student writing a paper on climate policy. AI can suggest a structure in seconds. That saves time. Yet if the student accepts the outline before testing the argument or checking the sources, the result may look organized while the thinking stays thin. The same pattern appears at work. A founder can ask AI to summarize user interviews, then miss the disagreement or edge cases the summary smoothed away. The danger is not mere use. It is mistaking fluent output for finished thinking.

What AI does to creativity

Creativity is often less about sudden inspiration than about generating and selecting options. That is one reason AI feels useful on creative work.

A 2024 Science Advances study on short-story writing found that AI assistance improved judged creativity, especially for weaker writers. AI can raise the floor. It can help people who struggle to start or who need more possible directions before they can choose.

The same study also found a cost: outputs became more similar overall. Individual creativity improved, but collective diversity fell. That is the tradeoff many readers already sense. AI helps people start faster, but shared model patterns can narrow the range of voices.

You can see this in something as ordinary as headline brainstorming. The first ten ideas may be useful. The next ten often echo the same verbs and structures. Use AI for divergence, then shift to human taste. Ask for contrasts, counterexamples, or weird alternatives, then rewrite in your own cadence. AI is strong at expanding options. It is weaker at deciding which option deserves to represent you.
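If you want a rough check for sameness in a brainstorm, word overlap is one place to start. The sketch below is only an illustration: the headlines are invented, the 0.5 threshold is an arbitrary assumption, and shared words are a crude proxy for echoing verbs and structures, not a measure of creativity.

```python
from itertools import combinations

PUNCTUATION = ".,!?:;\"'"


def word_set(text: str) -> set[str]:
    """Lowercase a headline and reduce it to its set of distinct words."""
    words = (w.strip(PUNCTUATION).lower() for w in text.split())
    return {w for w in words if w}


def jaccard(a: str, b: str) -> float:
    """Share of words two headlines have in common, from 0.0 to 1.0."""
    sa, sb = word_set(a), word_set(b)
    if not sa and not sb:
        return 0.0
    return len(sa & sb) / len(sa | sb)


def near_duplicates(headlines: list[str], threshold: float = 0.5):
    """Return (headline, headline, score) for pairs at or above the threshold."""
    pairs = []
    for a, b in combinations(headlines, 2):
        score = jaccard(a, b)
        if score >= threshold:
            pairs.append((a, b, round(score, 2)))
    return pairs


# Hypothetical brainstorm output, invented for illustration.
ideas = [
    "How AI Changes the Way You Think",
    "How AI Changes the Way You Work",
    "Thinking With Machines: A Field Guide",
]

for a, b, score in near_duplicates(ideas):
    print(f"{score}: {a!r} vs {b!r}")
```

Pairs that score high are candidates for a rewrite in your own cadence. The judgment about which one to keep still belongs to you.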

AI decision-making: faster is not the same as wiser

Decision-making with AI is not only a technical problem. It is also a trust problem. Behavioral research shows that people can both overtrust and undertrust algorithms.

Researchers call the first tendency algorithm appreciation. In “Algorithm Appreciation,” study participants often preferred algorithmic judgment to human judgment in estimation tasks. The second is algorithm aversion. In “Algorithm Aversion,” people became less willing to use algorithms after seeing them err, even when the algorithms still beat human forecasters on average. These tendencies can live side by side.

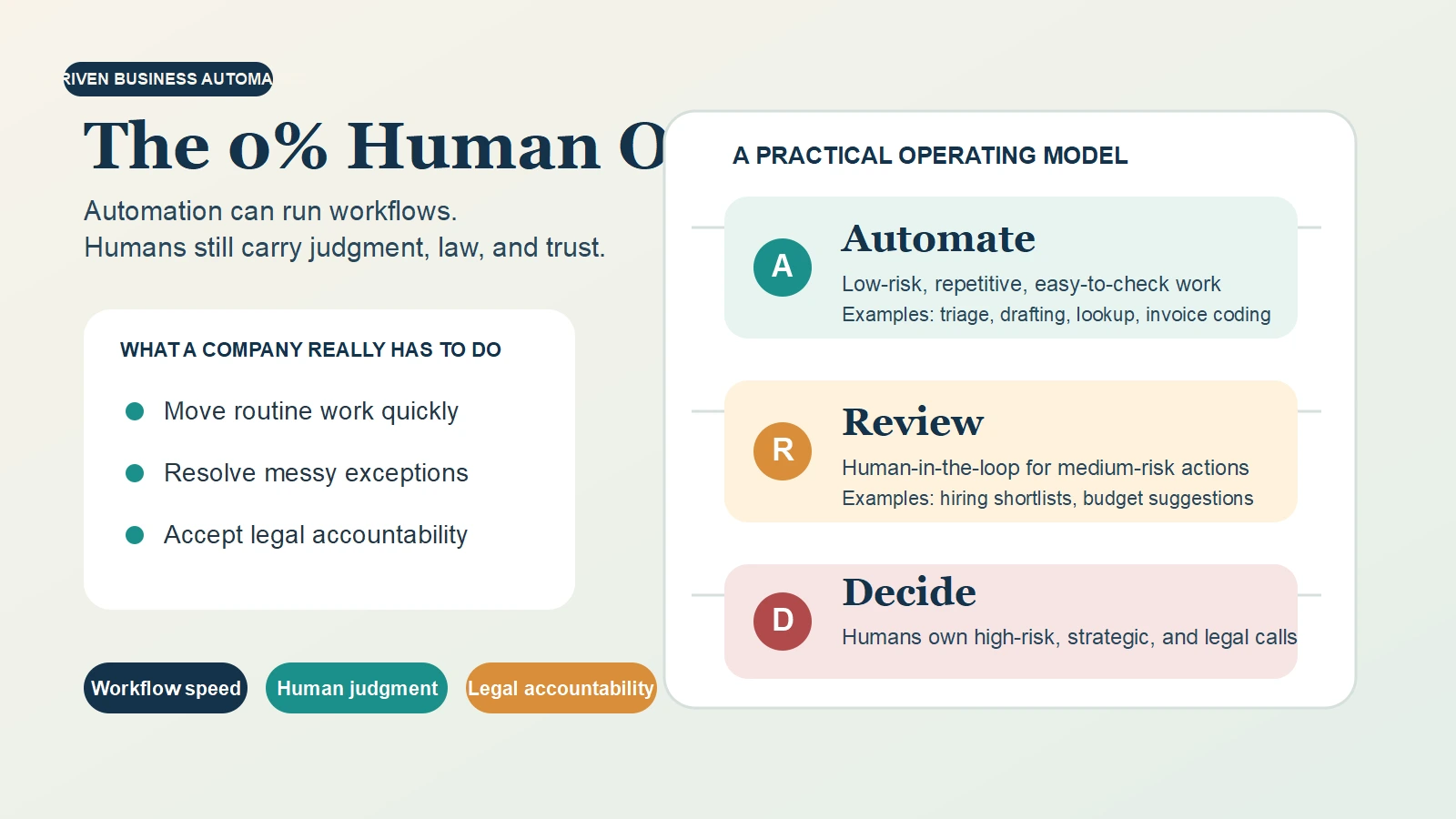

Think about a hiring shortlist. AI can speed first review. It can also carry old patterns from historical data and make them look objective. Once the output appears as a score, people may treat it as neutral rather than constructed.

That is why the NIST AI Risk Management Framework frames AI as a socio-technical system. Risk does not sit in the model alone. It sits in data, workflow, incentives, and oversight. In high-stakes settings, AI is best used as structured input. Accountability remains human.

The best results usually come from collaboration, not handoff

The strongest pattern in the evidence is that collaboration beats blind handoff, not that humans plus AI always win.

A 2024 systematic review and meta-analysis in Nature Human Behaviour covered 106 experimental studies and found that results were conditional. Human-AI combinations tended to help when the humans alone outperformed the AI. When the AI already outperformed the humans, naive combination could reduce performance.

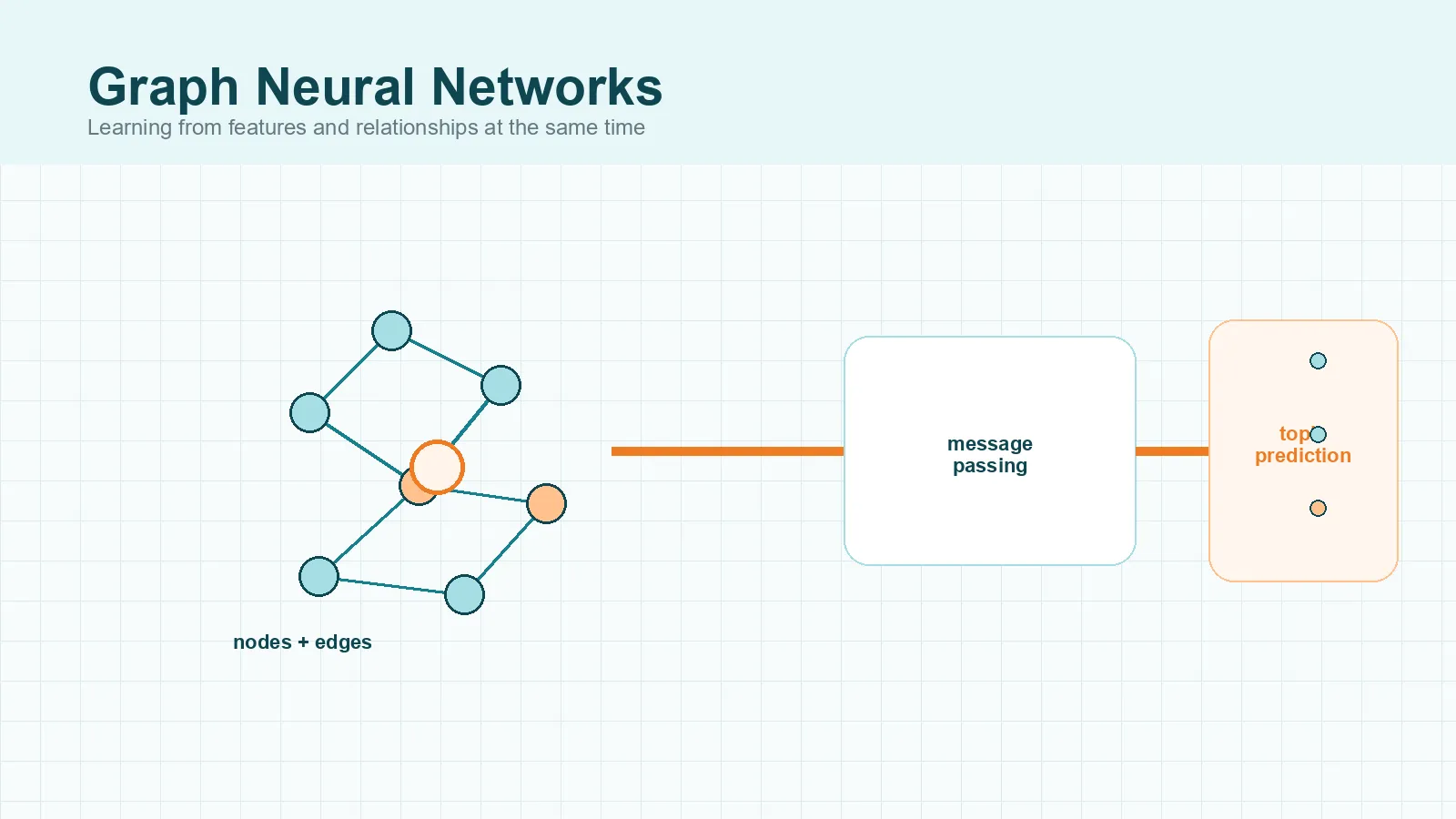

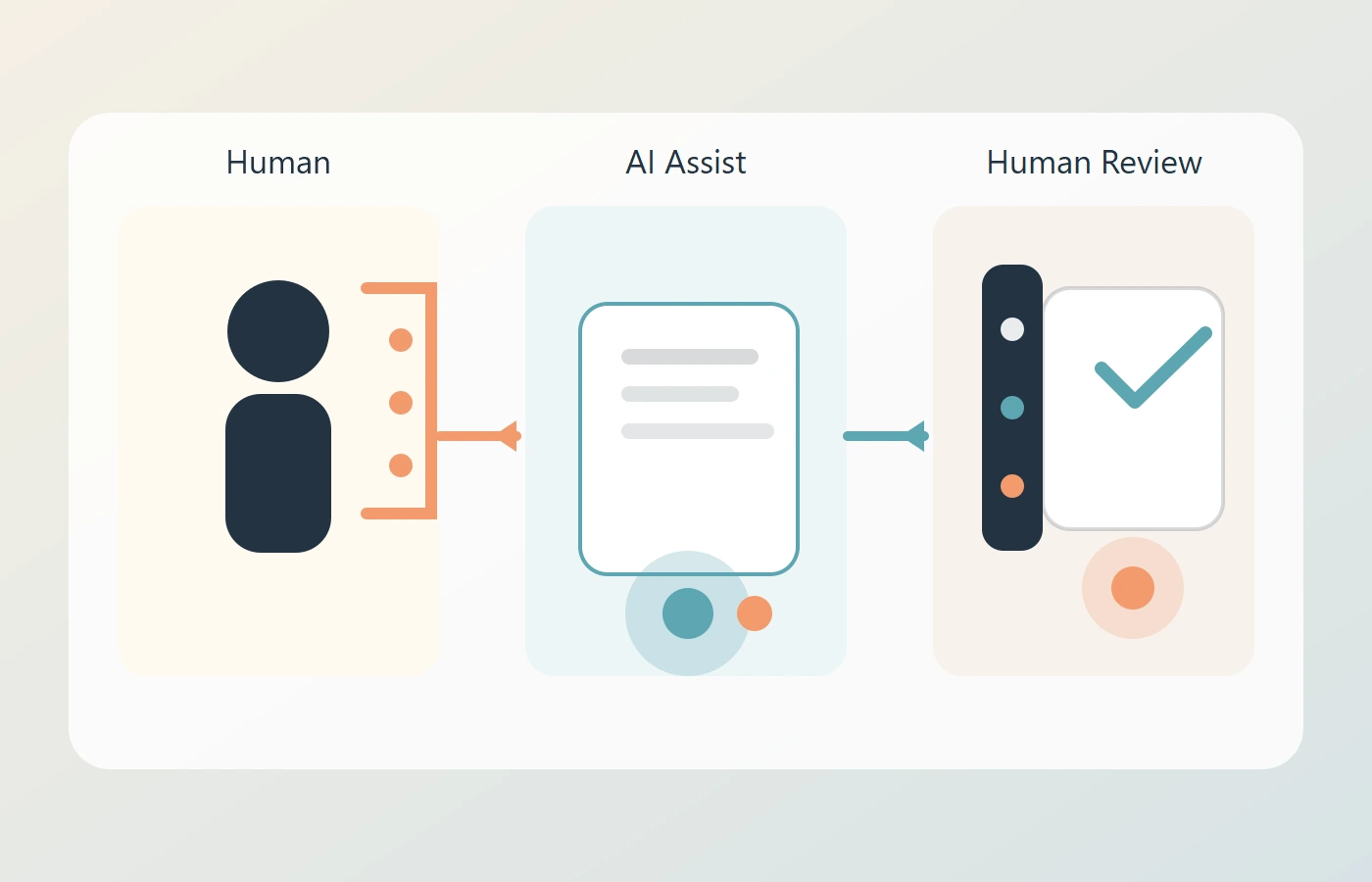

In practical terms, good collaboration usually follows a sequence:

- Human frames the problem.

- AI proposes options or drafts.

- Human checks assumptions and sources.

- Human makes the final call.

Take a product team. AI can summarize fifty interviews before planning. That saves time. But people still need to ask what got flattened, which complaints were rare but important, and what context the summary dropped. AI compresses information. Humans decide what the compression means. This also matters for groups. Research on collective intelligence suggests large language models can reshape how teams share and combine knowledge.

Does AI make us less intelligent?

Not automatically. But it can make us less practiced in certain skills if we let it.

GPS can reduce our feel for routes if we follow it blindly. AI can do something similar with summarizing, writing, or problem solving.

If you let AI do every first draft, you may get worse at hearing when an argument is thin. If you let it answer every hard question instantly, you may skip the productive struggle that helps learning stick.

This is why deliberate friction matters. Try solving a problem briefly before asking AI. Predict what a source will say before reading a summary. Keep track of which claims came from you, which came from the tool, and which were verified elsewhere.
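One lightweight way to keep that record is a small claim log. The sketch below is a hypothetical structure, not a standard tool: the `Claim` fields, the `Origin` labels, and the sample entries are all assumptions made for illustration.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum


class Origin(Enum):
    MINE = "mine"  # a claim you came up with yourself
    TOOL = "tool"  # a claim suggested by the AI tool


@dataclass
class Claim:
    text: str
    origin: Origin
    verified: bool = False  # set to True only after checking the source


def unverified_tool_claims(claims: list[Claim]) -> list[Claim]:
    """Claims that came from the tool and have not been checked yet."""
    return [c for c in claims if c.origin is Origin.TOOL and not c.verified]


def summary(claims: list[Claim]) -> Counter:
    """Count claims by (origin, verified) so the balance is visible."""
    return Counter((c.origin.value, c.verified) for c in claims)


# Hypothetical entries, invented for illustration.
log = [
    Claim("The paper should open with the policy timeline", Origin.MINE),
    Claim("Most interviewees asked for faster onboarding", Origin.TOOL),
    Claim("The report was published last year", Origin.TOOL, verified=True),
]

for claim in unverified_tool_claims(log):
    print("Check before relying on:", claim.text)
```

A spreadsheet or a notes file with the same three columns would work just as well. What matters is that unverified tool claims stay visible until someone checks them.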

The better question is not whether AI makes people smarter or dumber. It is which skills you are practicing and which you are outsourcing. A healthy habit is skill rotation: sometimes use AI to accelerate, sometimes work without it, and always mark what you personally verified.

Is AI conscious or just convincing?

Consciousness means subjective experience: there is something it is like to be that system. Current AI is very good at producing language about feelings and inner states, which makes the public debate slippery.

A system can sound reflective without being conscious. An actor can describe grief without grieving. A model can generate sentences about fear or desire because it learned human patterns in text. Language about experience is not the same as experience.

Recent scientific work, including “Identifying Indicators of Consciousness in AI Systems,” argues for stricter standards than surface fluency. A related review, “Consciousness in Artificial Intelligence: Insights from the Science of Consciousness,” argues that current systems do not show strong evidence of consciousness under its framework. The question remains open, and there is no scientific consensus that today’s chatbots are conscious.

This matters because people anthropomorphize easily. When a system sounds human, users may trust it too much or confuse style with moral status.

What the future of human intelligence may look like

The future of human intelligence looks less like a competition and more like a redesign of everyday cognition around tools that draft, search, and simulate.

Workplace evidence already hints at this. In “Shifting Work Patterns with Generative AI,” active users spent less time on email and after-hours work. That suggests routine communication is getting easier, which raises the value of framing, verification, taste, ethics, and responsibility.

For different readers, that means different things:

- Students need stronger source judgment.

- Managers need better review loops.

- Creators need stronger voice and taste.

- Founders need better strategy questions.

The scarce skill is less likely to be raw output and more likely to be wise selection. Seen this way, future human intelligence is not just memory or speed. It is the ability to direct powerful tools without quietly being directed by them.

How to use AI without outsourcing your mind

You do not need to reject AI to protect human thinking. You need rules.

- Start with your own view before you prompt.

- Use AI for options, not verdicts.

- Make uncertainty visible.

- Check factual claims at the source.

- Keep one human pass before you publish or decide.

- Protect high-stakes decisions with real review.

- Watch for sameness in tone, structure, and assumptions.

The goal is not to prove that you can work without AI. It is to make sure AI expands your capability without flattening your attention, standards, or voice.

If this is the kind of AI coverage you want, continue with the next layer of the topic: AI bias in everyday tools, practical prompting for knowledge work, and human review in AI-assisted workflows.