If you want a clear answer to where brain-computer interfaces are headed, this guide gives you the practical picture. You will see what BCIs can do today, what AI actually adds, where constraints still dominate, and why this field matters to the future of artificial intelligence. The key point is simple: recent BCI progress is not just a hardware story. AI decoders now convert difficult neural signals into usable communication and control, but performance still depends heavily on context, calibration, and governance.

For readers who want extra background before diving in, this companion explainer provides broader context: The Neural Handshake: The Future of Brain-Computer Interfaces.

What a Brain-Computer Interface Actually Is

A brain-computer interface (BCI) is a system that records brain activity and translates it into commands for digital devices or assistive systems. The BCI Society describes BCIs as direct interfaces that bypass conventional neuromuscular pathways, allowing users to interact through neural signals rather than muscle movement (BCI Society).

A concrete comparison helps:

– A keyboard converts finger movement into text input.

– A microphone converts sound into audio commands.

– A BCI converts neural activity into text, speech, cursor movement, or device control.

You will also see the term brain-machine interface (BMI). In practice, many researchers and institutions use BCI and BMI interchangeably, while treating both as part of broader neurotechnology. The U.S. GAO emphasizes that this is a family of technologies, not one single product category (GAO Technology Assessment 2025).

Invasive, minimally invasive, and non-invasive systems

Most public confusion comes from mixing these classes together.

| Approach | Signal source | Typical strength | Typical limitation |
|---|---|---|---|
| Invasive | Implanted electrodes in/near cortex | Highest signal fidelity and faster decoding potential | Neurosurgery burden and long-term implant considerations |
| Endovascular/minimally invasive | Electrodes delivered through blood vessels | Lower surgical burden than open-cranium implants | Usually lower signal granularity than intracortical arrays |
| Non-invasive | EEG/fMRI/MEG outside skull | No implant surgery, broader accessibility for research | Noisier signals and lower practical bandwidth |

A reliable rule of thumb: cleaner neural signals generally require more invasive access.

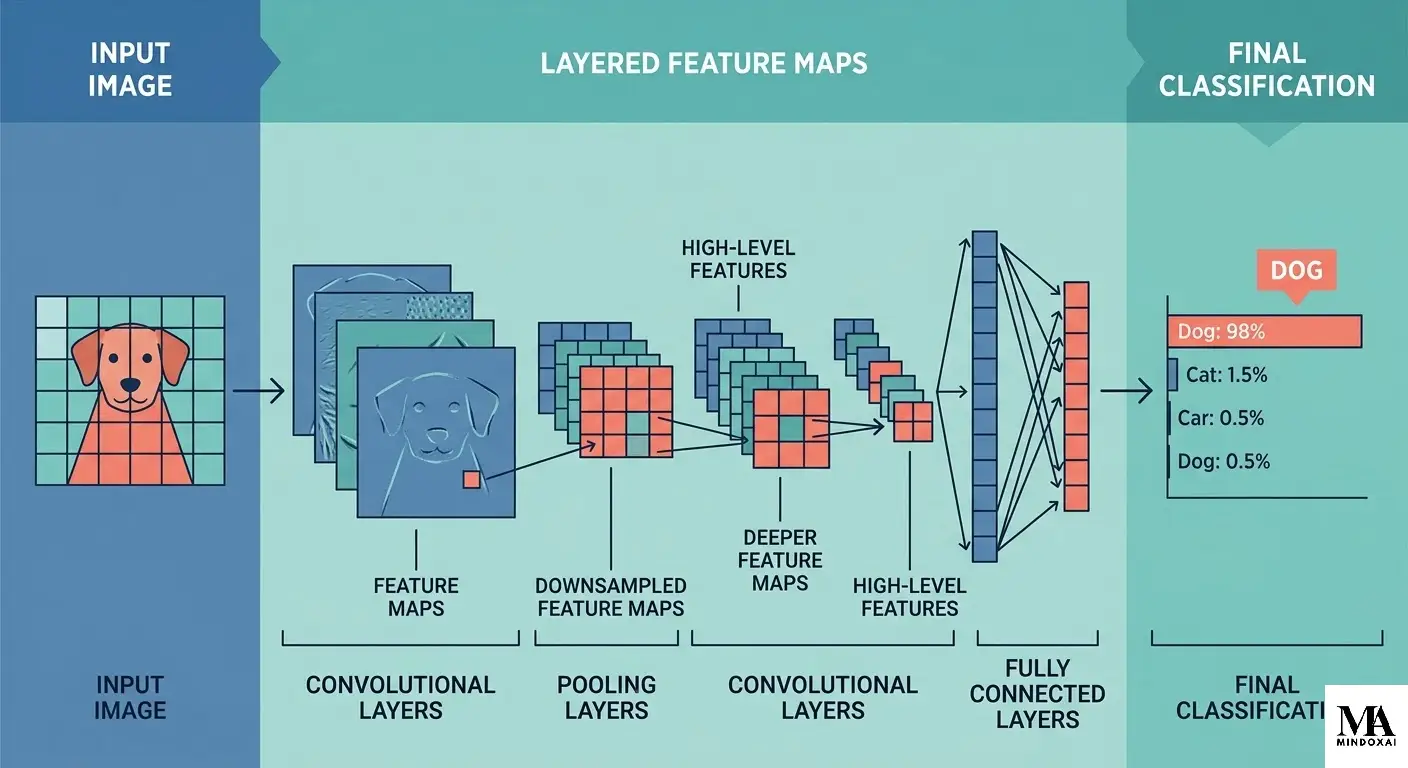

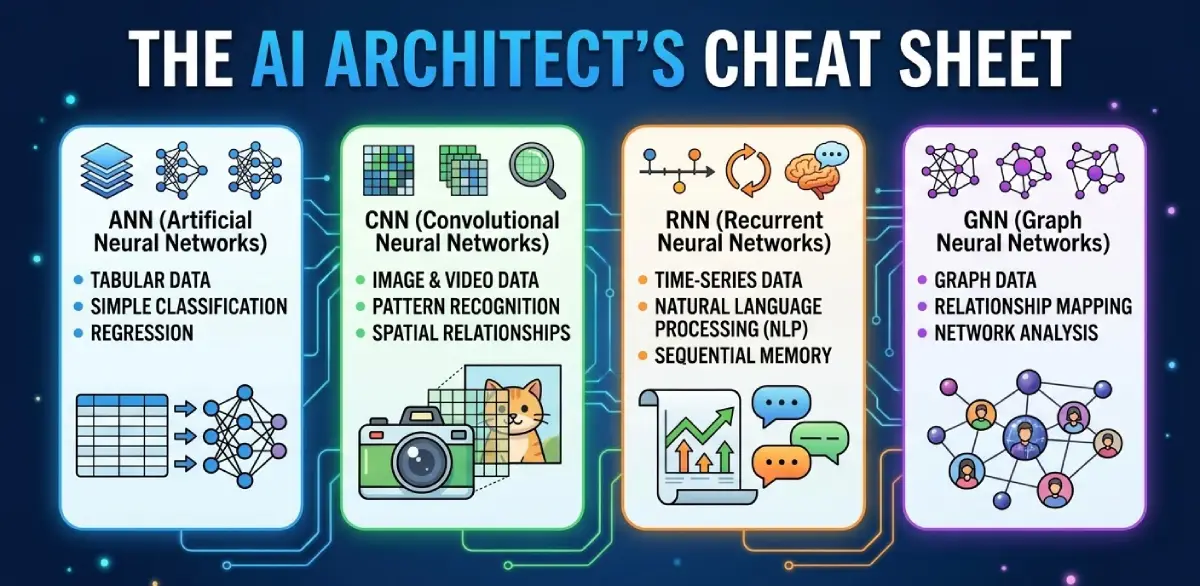

Why AI Changed BCI Performance

BCIs need two layers to work:

1. Signal acquisition (hardware)

2. Signal interpretation (AI models)

Earlier systems leaned on hand-engineered features and narrow classifiers. Modern AI pipelines can model high-dimensional neural patterns, adapt to noise, and use language priors to produce more coherent outputs.

The split resembles translation software, where one layer captures the raw input and another infers what it means:

– Sensors answer: “What electrical pattern did we record?”

– AI decoders answer: “What was the likely intention in this context?”

Without strong decoding models, many BCI outputs remain too noisy for useful communication.
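To make the two-layer split concrete, here is a minimal sketch in plain Python. Everything in it is an illustrative assumption rather than how any cited system works: the recordings are synthetic, `extract_features` stands in for the acquisition side, and `NearestCentroidDecoder` is a deliberately simple stand-in for the far richer models used in published BCIs.

```python
import math
import random

def extract_features(samples, n_channels):
    """Layer 1 stand-in: mean absolute amplitude per channel (a crude activity proxy)."""
    return [
        sum(abs(row[ch]) for row in samples) / len(samples)
        for ch in range(n_channels)
    ]

class NearestCentroidDecoder:
    """Layer 2 stand-in: maps a feature vector to the closest calibrated intent."""

    def __init__(self):
        self.centroids = {}

    def calibrate(self, labelled_features):
        # labelled_features: intent label -> list of feature vectors from calibration trials
        for label, vectors in labelled_features.items():
            n = len(vectors)
            self.centroids[label] = [sum(col) / n for col in zip(*vectors)]

    def decode(self, features):
        return min(
            self.centroids,
            key=lambda label: math.dist(features, self.centroids[label]),
        )

def simulate_trial(rng, active_channel, n_channels=4, n_samples=50):
    """Synthetic recording: one channel is noisier (more 'active') than the rest."""
    return [
        [rng.gauss(0, 3.0 if ch == active_channel else 1.0) for ch in range(n_channels)]
        for _ in range(n_samples)
    ]

rng = random.Random(0)
intents = {"left": 0, "right": 1}  # hypothetical intents tied to synthetic channels

# Per-user calibration: collect labelled trials, then fit the decoder.
calibration = {
    label: [extract_features(simulate_trial(rng, ch), 4) for _ in range(20)]
    for label, ch in intents.items()
}
decoder = NearestCentroidDecoder()
decoder.calibrate(calibration)

# Decode a fresh trial in which channel 1 is the active one.
new_trial = extract_features(simulate_trial(rng, active_channel=1), 4)
decoded = decoder.decode(new_trial)
print(decoded)
```

The point of the sketch is the division of labor: the feature step only summarizes what was recorded, while the decoder is the part that has to be calibrated and that decides what the user meant.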

Example: imagined handwriting to text

A 2021 Nature study showed high-performance brain-to-text communication by decoding imagined handwriting from intracortical activity (Willett et al., Nature 2021). NIH’s summary reported around 90 characters per minute, with raw character-level accuracy above 94% and above 99% with autocorrect (NIH Research Matters).

That is a practical shift from letter-by-letter scanning toward much faster communication.
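The jump from raw accuracy to autocorrected accuracy reflects a general idea: combine the decoder's per-character guesses with a language prior. The sketch below is a toy version of that idea, not the method used in the study. The probabilities, the three-word vocabulary, and the function names are all made up for illustration.

```python
import math

def raw_decode(char_probs):
    """Pick the most likely character at each position, ignoring context."""
    return "".join(max(probs, key=probs.get) for probs in char_probs)

def rescore_with_prior(char_probs, word_prior, floor=1e-6):
    """Score each candidate word by decoder log-likelihood plus log prior."""
    best_word, best_score = None, -math.inf
    for word, prior in word_prior.items():
        if len(word) != len(char_probs):
            continue
        score = math.log(prior)
        for ch, probs in zip(word, char_probs):
            # Characters the decoder never proposed get a small floor probability.
            score += math.log(probs.get(ch, floor))
        if score > best_score:
            best_word, best_score = word, score
    return best_word

# Hypothetical decoder output for a five-letter word.
char_probs = [
    {"h": 0.90, "b": 0.10},
    {"e": 0.80, "c": 0.20},
    {"l": 0.45, "i": 0.55},  # the decoder slightly prefers the wrong letter here
    {"l": 0.90, "i": 0.10},
    {"o": 0.95, "a": 0.05},
]
word_prior = {"hello": 0.6, "cello": 0.3, "jello": 0.1}  # toy vocabulary

raw_text = raw_decode(char_probs)
corrected_text = rescore_with_prior(char_probs, word_prior)
print(raw_text, "->", corrected_text)
```

One uncertain character is enough to break the raw output, while the prior pulls the result back to a real word. Production systems use much larger language models, but the trade-off is the same: the prior fixes errors and can also overwrite unusual words the user actually intended.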

Example: speech neuroprosthesis advances

Two 2023 Nature papers reported fast speech neuroprostheses. One decoded attempted speech at 62 words per minute in a participant with ALS (Willett et al., PubMed). A related study on speech decoding and avatar control reported a median rate of roughly 78 words per minute in a participant with severe paralysis (Metzger et al., PubMed).

Compared with traditional spelling boards, this points toward more natural conversational interaction rather than purely serial character selection.
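The handwriting result is quoted in characters per minute and the speech result in words per minute, so a quick conversion helps compare them. The sketch assumes the common typing convention of five characters per word, which is an assumption added here and not a figure from either study.

```python
CHARS_PER_WORD = 5  # assumed convention, not taken from the cited papers

def cpm_to_wpm(chars_per_minute, chars_per_word=CHARS_PER_WORD):
    """Convert a characters-per-minute rate into approximate words per minute."""
    return chars_per_minute / chars_per_word

handwriting_wpm = cpm_to_wpm(90)  # imagined-handwriting rate quoted above
speech_wpm = 78                   # speech neuroprosthesis rate quoted above
ratio = speech_wpm / handwriting_wpm

print(f"handwriting: about {handwriting_wpm:.0f} words per minute")
print(f"speech is roughly {ratio:.1f}x faster under this assumption")
```

Under that assumption the handwriting system lands near 18 words per minute, which puts the speech result at a bit more than four times faster. The studies involved different participants, tasks, and error rates, so treat this as a rough scale comparison only.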

Example: non-invasive semantic reconstruction

A 2023 Nature Neuroscience paper reconstructed semantic content from non-invasive recordings using fMRI plus model-based decoding (Tang et al., Nature Neuroscience 2023).

Important constraints remained clear:

– expensive imaging infrastructure,

– substantial participant-specific training,

– controlled conditions rather than everyday environments.

So the right interpretation is progress, not magic. If you want a deeper look at this exact boundary between strong decoding and overstatement, this internal analysis is useful: AI Reading Minds: What Neural Tech Can Actually Do.

Where BCIs Stand in 2026: Real Capability vs Real Constraint

A disciplined way to assess any BCI claim is to ask:

– What did the system do in a measured setting?

– How reproducible is that performance outside that setting?

What works today

Several capabilities now have strong peer-reviewed support:

– Assistive motor control: BrainGate-era work demonstrated robotic reach-and-grasp control in people with tetraplegia (Hochberg et al., Nature 2012).

– Assistive communication: Intracortical text and speech pipelines now support practical communication in specialized clinical contexts.

– Less-invasive clinical pathway: Endovascular BCI work showed users performing digital tasks such as messaging and online activity in early human studies (Oxley et al., JAMA Neurology).

What still blocks scale

1) Personal calibration is still heavy

Many high-performing systems are tailored to each user. Cross-user generalization remains a major engineering challenge.

2) Neural signals drift over time

Signal quality can change with fatigue, medications, stress, and day-to-day physiology. Decoders must adapt continuously.

3) Hardware burden remains high

Implants require specialist workflows; non-invasive high-resolution setups are still expensive and operationally complex.

4) Safety and governance are now core constraints

The GAO identifies privacy, equitable access, and oversight as policy priorities that should be addressed before broad commercialization (GAO Technology Assessment 2025).
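The drift problem in item 2 is the easiest of these constraints to illustrate in code. One simple adaptation strategy, shown here as a sketch on synthetic data, is to normalize each feature against a running mean and variance that slowly forget old samples. The class name, the `alpha` value, and the drift pattern are assumptions for illustration; real systems use more elaborate recalibration.

```python
import random

class AdaptiveNormalizer:
    """Tracks a running mean and variance per feature with exponential forgetting."""

    def __init__(self, n_features, alpha=0.05):
        self.alpha = alpha  # higher alpha adapts faster but gives noisier estimates
        self.mean = [0.0] * n_features
        self.var = [1.0] * n_features

    def update_and_normalize(self, x):
        normalized = []
        for i, value in enumerate(x):
            delta = value - self.mean[i]
            # Exponentially weighted updates of mean and variance.
            self.mean[i] += self.alpha * delta
            self.var[i] = (1 - self.alpha) * (self.var[i] + self.alpha * delta * delta)
            normalized.append((value - self.mean[i]) / (self.var[i] ** 0.5 + 1e-8))
        return normalized

rng = random.Random(1)
normalizer = AdaptiveNormalizer(n_features=1)
raw, adapted = [], []
n_steps = 400
for t in range(n_steps):
    baseline = 5.0 * t / (n_steps - 1)  # synthetic slow drift from 0 to 5
    sample = [baseline + rng.gauss(0, 1)]
    raw.append(sample[0])
    adapted.append(normalizer.update_and_normalize(sample)[0])

raw_tail = sum(raw[-50:]) / 50
adapted_tail = sum(adapted[-50:]) / 50
print(f"raw mean over last 50 samples: {raw_tail:.2f}")
print(f"adapted mean over last 50 samples: {adapted_tail:.2f}")
```

A decoder trained on the early, undrifted values would see the raw feature wander far outside its training range, while the normalized feature stays close to zero. The cost is a lag: the normalizer always trails the true baseline slightly, and a faster `alpha` trades that lag for noise.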

A practical comparison: the field has moved from “can this work in principle?” to “can this be robust, affordable, and governable in real-world systems?”

The Future of AI Through Neural Interfaces

The future likely unfolds in stages, not a single leap.

Near term (next 3 to 5 years)

Most credible advances are likely to concentrate in medical and assistive use cases:

– more natural communication support for people with severe motor/speech impairment,

– better decoder adaptation with reduced retraining burden,

– stronger closed-loop systems that adjust to changing neural signals.

A 2025 study combining fMRI data with a fine-tuned language model improved reconstruction quality, showing how modern model design can push non-invasive pipelines forward while still operating within strict setup limits (Liu et al., Communications Biology 2025).

Mid term (about 5 to 10 years)

If the current research trajectory holds, we may see:

– richer multimodal control (neural signals + gaze + residual speech/motor channels),

– stronger intent ranking and context-aware decoding,

– expanded neurorehabilitation systems that personalize therapy continuously.
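Multimodal control and intent ranking can be sketched as a weighted fusion of per-channel probabilities. The example below uses a log-linear combination, which is one common way to merge independent evidence. The channel names, candidate actions, probabilities, and weights are all hypothetical.

```python
import math

def fuse_and_rank(channel_probs, weights, floor=1e-6):
    """Combine per-channel intent probabilities and return candidates ranked by fused score."""
    candidates = set()
    for probs in channel_probs.values():
        candidates.update(probs)

    # Weighted sum of log-probabilities per candidate (log-linear fusion).
    scores = {
        c: sum(
            weights[name] * math.log(probs.get(c, floor))
            for name, probs in channel_probs.items()
        )
        for c in candidates
    }

    # Softmax turns fused scores back into a normalized ranking.
    top = max(scores.values())
    exp_scores = {c: math.exp(s - top) for c, s in scores.items()}
    total = sum(exp_scores.values())
    return sorted(
        ((c, e / total) for c, e in exp_scores.items()),
        key=lambda pair: pair[1],
        reverse=True,
    )

channel_probs = {
    "neural": {"open_email": 0.40, "call_nurse": 0.45, "lights_off": 0.15},
    "gaze": {"open_email": 0.70, "call_nurse": 0.20, "lights_off": 0.10},
}
weights = {"neural": 1.0, "gaze": 0.5}  # assumed trust levels per channel

ranked = fuse_and_rank(channel_probs, weights)
for intent, prob in ranked:
    print(f"{intent}: {prob:.2f}")
```

Here the neural channel alone narrowly favors `call_nurse`, but gaze evidence moves `open_email` to the top of the ranking. In a real system the weights would need careful validation, since a wrong fusion choice on a safety-relevant action matters more than one on a routine action.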

The best comparison here is interface design history. Early BCIs resemble command-line systems: explicit, narrow, and effortful. Future systems may become more like adaptive operating layers for communication and control.

Broader AI implications

Neural interfaces matter for AI strategy because they connect AI to human intent at the input layer, not only the output layer. That shifts the conversation from “AI as external tool” to “AI as embedded assistive mediation” in health, communication, and eventually work.

For readers tracking long-range AI capability debates, this internal primer helps frame the boundary between practical systems and broader AGI claims: What Is Artificial General Intelligence? AGI Explained. For a wider risk perspective, see: Superintelligence: AGI, Risks, and What Comes Next.

Ethics, Privacy, and Governance Before Consumer Scale

Governance has to move before BCIs reach consumer scale, because neural data is not ordinary behavioral telemetry.

Why neural data needs stronger protections

Neural signals can encode deeply personal patterns and may be difficult to treat like revocable app data. Neuroethics literature has argued for explicit protections such as cognitive liberty, mental privacy, and mental integrity (Ienca & Andorno, 2017).

Concrete comparison:

– With a social platform, you can usually delete an account and rotate credentials.

– With a neural model trained on personal brain-derived data, practical revocation is harder if derivatives and embeddings already exist.

Governance signals now in motion

– UNESCO’s General Conference adopted the first global recommendation focused on neurotechnology ethics in November 2025 (UNESCO).

– The EU AI Act continues phased implementation (European Commission).

– NIST AI RMF gives organizations a practical risk-management structure for high-impact AI systems (NIST AI RMF 1.0).

A pragmatic checklist for teams building BCI-AI systems:

1. Collect only neural data needed for functional performance.

2. Isolate identity-linked records from model-development pipelines when possible.

3. Define auditable human override for consequential actions.

4. Treat safety incidents and model failures as reportable events with clear response ownership.
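The checklist can be sketched as a few small building blocks. This is a minimal illustration under stated assumptions, not a compliance implementation: the field names, the `ActionGate` class, the list of consequential actions, and the demo key are all hypothetical.

```python
import hashlib
import hmac
from dataclasses import dataclass, field

ALLOWED_FIELDS = {"session_id", "features"}  # item 1: keep only what the model needs

def minimize(record):
    """Drop every field that is not required for functional performance."""
    return {k: v for k, v in record.items() if k in ALLOWED_FIELDS}

def pseudonymize(user_id, secret_key):
    """Item 2: replace identity with a keyed hash so training data carries no names."""
    digest = hmac.new(secret_key, user_id.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()[:16]

@dataclass
class ActionGate:
    """Items 3 and 4: human override for consequential actions, plus an incident record."""
    consequential: frozenset
    audit_log: list = field(default_factory=list)
    incidents: list = field(default_factory=list)

    def execute(self, action, confirmed_by_human=False):
        executed = action not in self.consequential or confirmed_by_human
        # Every decision is logged, whether or not the action went ahead.
        self.audit_log.append(
            {"action": action, "confirmed": confirmed_by_human, "executed": executed}
        )
        return executed

    def report_incident(self, description, owner):
        self.incidents.append(
            {"description": description, "owner": owner, "status": "open"}
        )

record = {
    "session_id": "s-001",
    "features": [0.2, 0.7],
    "full_name": "Example User",
    "raw_audio": b"\x00\x01",
}
training_row = minimize(record)
training_row["subject"] = pseudonymize("Example User", secret_key=b"demo-key-not-for-production")

gate = ActionGate(consequential=frozenset({"send_payment"}))
blocked = gate.execute("send_payment")                          # no confirmation yet
allowed = gate.execute("send_payment", confirmed_by_human=True)
routine = gate.execute("move_cursor")                           # low-stakes action
gate.report_incident("decoder emitted unintended command", owner="clinical-safety-lead")
```

Keeping the pseudonymization key outside the model-development environment is what makes the separation meaningful. A keyed hash alone does not anonymize neural features, which may themselves be identifying.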

Trust will not come from model quality alone. It will come from model quality plus governance quality.

Final Thoughts

Brain-computer interfaces and the future of AI are converging through one central mechanism: better decoding. Hardware enables access to neural signals, but AI is what turns those signals into useful communication and control.

The strongest path forward is evidence-first optimism. Clinical and assistive milestones are real and meaningful. At the same time, personalization burden, safety pathways, and governance constraints remain substantial.

If this field keeps maturing on both tracks, capability and responsibility, BCIs could become one of the most consequential ways AI extends human agency in the next decade.

Sources

- BCI Society

- GAO Technology Assessment 2025

- Hochberg et al., Nature 2012

- Willett et al., Nature 2021

- NIH summary of imagined handwriting BCI

- Willett et al., Nature 2023 speech neuroprosthesis

- Metzger et al., Nature 2023 speech decoding + avatar control

- Tang et al., Nature Neuroscience 2023

- Liu et al., Communications Biology 2025

- Oxley et al., JAMA Neurology

- Ienca & Andorno 2017

- UNESCO neurotechnology recommendation (2025)

- European Commission AI Act framework

- NIST AI RMF 1.0