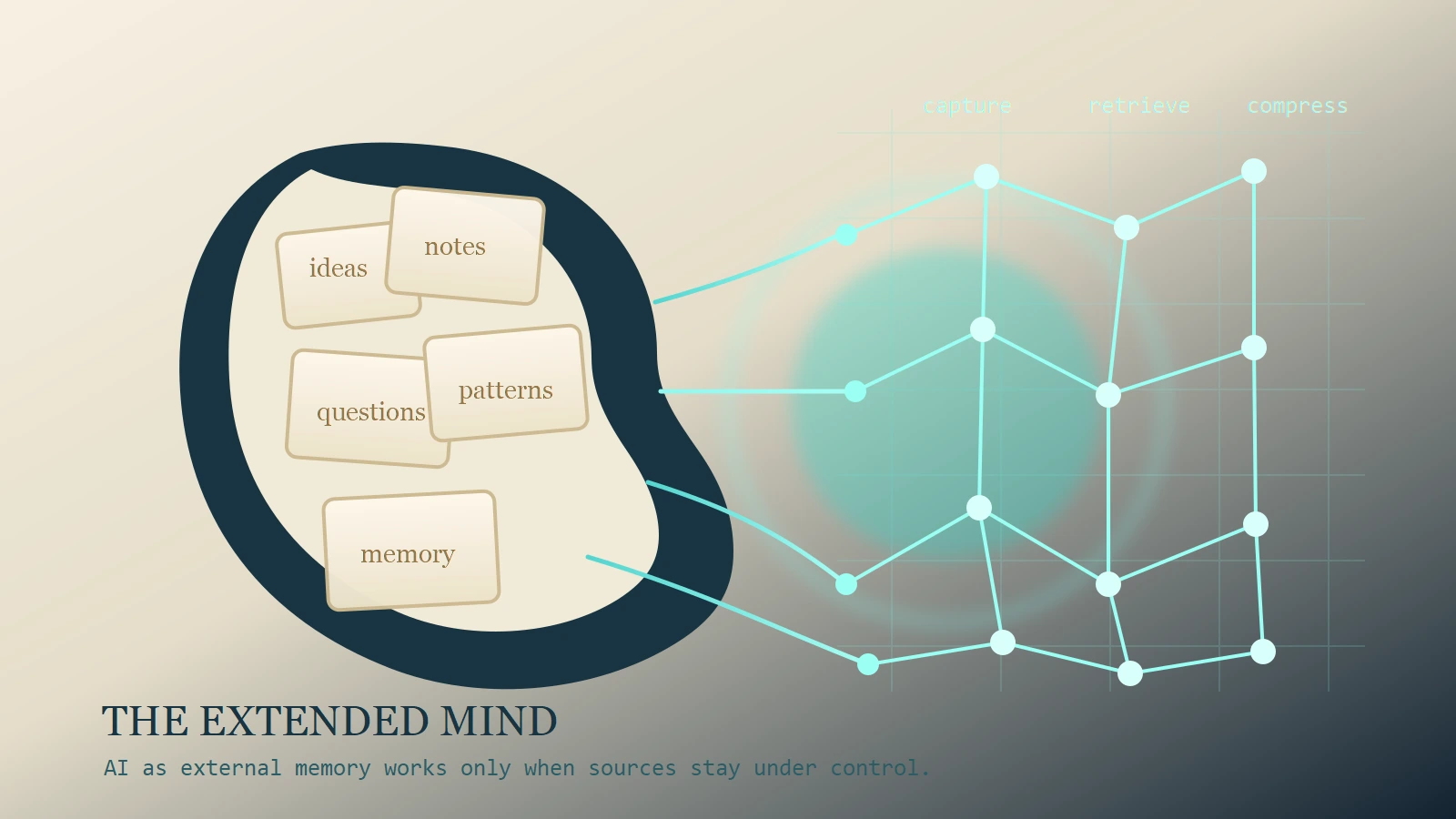

Many people already use AI as if it were a strange new memory organ. They paste meeting notes into it, ask it to resurface forgotten ideas, have it summarize books they only half remember, and expect it to hold context across projects that would otherwise spill out of the human brain in every direction. The habit feels natural because human cognition has never been sealed inside the skull. We have always used notebooks, calendars, whiteboards, search engines, and other people as memory supports. AI compresses all of those habits into a single, more interactive interface.

That is why the real question is not whether you should ever rely on AI for memory. You already do, or soon will. The better question is what kind of memory system you are building when you do it. If you get the architecture right, AI can become a useful external memory layer. If you get it wrong, it becomes a fluent machine for confusion, dependency, and false confidence.

What the extended mind actually means

The key intellectual framework here is the extended mind thesis. In their 1998 paper, Andy Clark and David Chalmers argued that cognition can extend into the world when external resources are reliably available and tightly integrated into how a person thinks. Their famous example compares Inga, who recalls a museum's address from biological memory, with Otto, who has Alzheimer's disease and relies on a notebook instead. If Otto consults that notebook in the same dependable way Inga consults her memory, then the notebook is not merely a convenience. It is functioning as part of the thinking system.

Whether or not one accepts the strongest philosophical version of that claim, the practical lesson is durable: humans think through tools. A calendar changes how we plan. A notebook changes what we bother holding in working memory. A bookmarking system changes how we revisit ideas. External supports do not sit outside cognition like dead accessories. In many cases, they reshape the cognitive process itself.

There is a social version of the same insight in transactive memory research. Daniel Wegner and others showed that people in close relationships and teams often remember by knowing where knowledge lives. They do not each store every fact internally. Instead, they rely on a network in which one person remembers the legal detail, another remembers the client history, and another remembers the financial constraint. A good team remembers through coordinated access.

AI makes that logic personal. Instead of asking a colleague where the key insight from January lives, you may ask an assistant with access to your notes, transcripts, and project archive. Instead of opening fifteen tabs to reconstruct your own argument, you ask the system to surface it. In that operational sense, AI can absolutely function like an external memory layer.

What AI is genuinely good at offloading

Capturing weak signals before they disappear

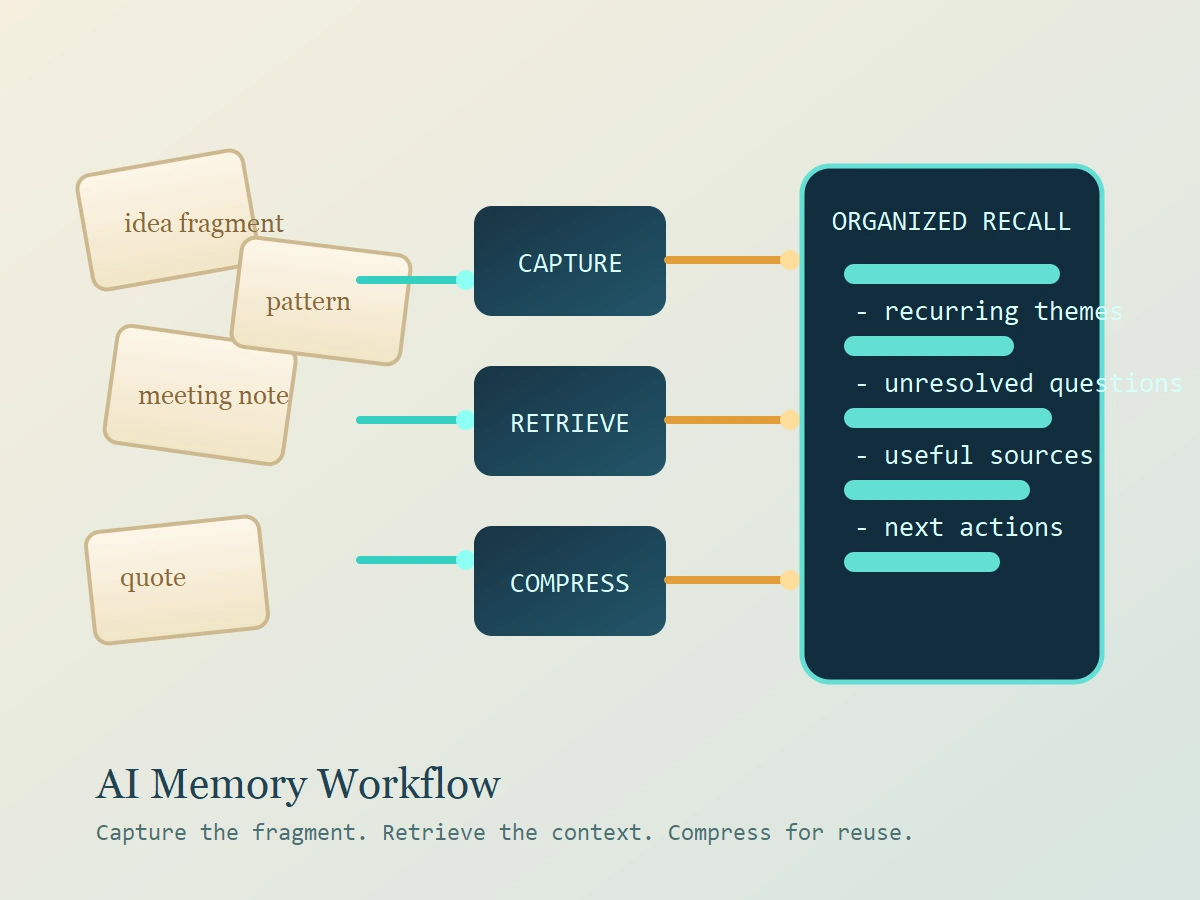

Most valuable ideas do not arrive fully formed. They show up as fragments: a phrase from a meeting, a sharp line from an article, a pattern you noticed during a walk, or a half-articulated objection that vanishes by the time you sit down to write. AI helps by lowering the friction of capture. It can turn rough voice notes into structured text, cluster related fragments, and tag material so future-you can actually recover it.

That matters because memory is lossy. Many good ideas are not forgotten because they were unimportant. They are forgotten because they were never stored in a usable form. If AI improves the odds that weak signals survive first contact with reality, it is already doing meaningful memory work.

Retrieving across messy personal archives

This is the strongest case for the “external hard drive” analogy. Storage is rarely the real bottleneck anymore. Retrieval is. Once your archive becomes large, folders start acting like graveyards. Keyword search fails when you remember the shape of an idea but not the exact phrase you used six months ago.

AI changes retrieval because it can match on meaning rather than exact wording. You can ask, “What was the argument I made last fall about pricing complaints?” or “Show me every note where I sounded uncertain about onboarding.” That is different from hunting through file names. The value is not that AI suddenly understands your life like a person. The value is that it lowers the cost of getting your own context back.

Compressing and resurfacing old context

The cognitive offloading literature clarifies why this helps. Evan Risko and Sam Gilbert describe offloading as the use of external tools to reduce internal memory and processing demands. That is not automatically a weakness. In many cases it is an efficient allocation of attention. Benjamin Storm and Sasha Stone found that saving information can improve learning of material encountered afterward, provided the saving process is reliable. Lifting part of the load frees resources for what comes next.

This is exactly where AI shines when used well. It can take fifty pages of notes and surface the recurring themes, unresolved questions, and action items. It can help you re-enter a dormant project without rereading everything line by line. Built over a trusted archive, AI becomes less like a replacement brain and more like a fast reactivation layer for one.

What should stay inside your own head

First principles, taste, and judgment

There is a line between offloading memory support and offloading agency. The things most worth keeping internal are the ones that shape decisions: first principles, taste, moral judgment, strategic priorities, and the mental models you want available even when no tool is open in front of you.

You do not need to memorize every article you read. You do need an internal sense of what good evidence looks like, which tradeoffs matter in your field, and what standards define your work. Those are not just facts. They are control systems.

High-stakes decisions and source verification

The 2011 “Google effects on memory” paper remains the most intuitive warning. Betsy Sparrow, Jenny Liu, and Daniel Wegner found that when people expect future access to information, they often remember where to find it better than the information itself. That shift can be rational. It becomes dangerous when the retrieval layer is fuzzy, generative, or opaque.

If an AI assistant gives you a polished answer without showing the source trail, you may remember the answer while forgetting that the answer was partly inferred, merged, or hallucinated. For high-stakes domains such as law, medicine, finance, security, and core business decisions, AI memory must be citation-first or it will train false confidence.

Skills you still need to practice

There is also a difference between offloading around a skill and offloading the skill itself. A calculator helps a mathematician move faster without replacing mathematical understanding. But if a learner never practices the underlying operation, nothing stable gets built. The same applies to writing, analysis, coding, and argumentation. AI can help you revisit and refine knowledge. It should not become the only place where the knowledge exists.

Why AI is more dangerous than a normal hard drive

The hard drive metaphor breaks down at the exact point where AI becomes most useful. A hard drive stores what you put into it. It does not rewrite your notes every time you open them. A chatbot effectively does, because it regenerates its account of your notes on every query. Even when it is accurate, it returns a compressed and reframed version of what you stored. Retrieval is also transformation.

That matters because human memory is already reconstructive. We do not replay the past like a video file. We rebuild it from cues, fragments, and interpretations. Add an AI layer that summarizes instead of quoting, merges distinct contexts, or fills gaps with plausible language, and your future recollection may absorb those distortions. Over time, you can end up remembering the assistant’s polished story rather than your original thought.

Research on offloading risk points the same way. Work by Risko and colleagues suggests that once memories are externally stored, they can become vulnerable to manipulation. In practical terms, if you trust the external system too much, altered material can flow back into internal memory. That warning matters more with generative AI than with ordinary file storage because the entire interface is built around fluent reinterpretation.

There is also a privacy problem. A real second brain contains half-finished ideas, embarrassing drafts, commercially sensitive details, and emotionally charged material. Dumping all of that into a general-purpose assistant without clear retention, access, and governance rules is not smart augmentation. It is reckless centralization.

Finally, there is the motivational risk. Cognitive offloading is often rational, and Sam Gilbert’s recent value-based framing helps explain why: people offload because they are balancing effort, expected payoff, and limited cognitive resources. The danger is not offloading itself. The danger is offloading the wrong things because short-term convenience makes reflective effort feel optional.

A safer architecture for an AI-powered second brain

Keep a canonical layer and a conversational layer

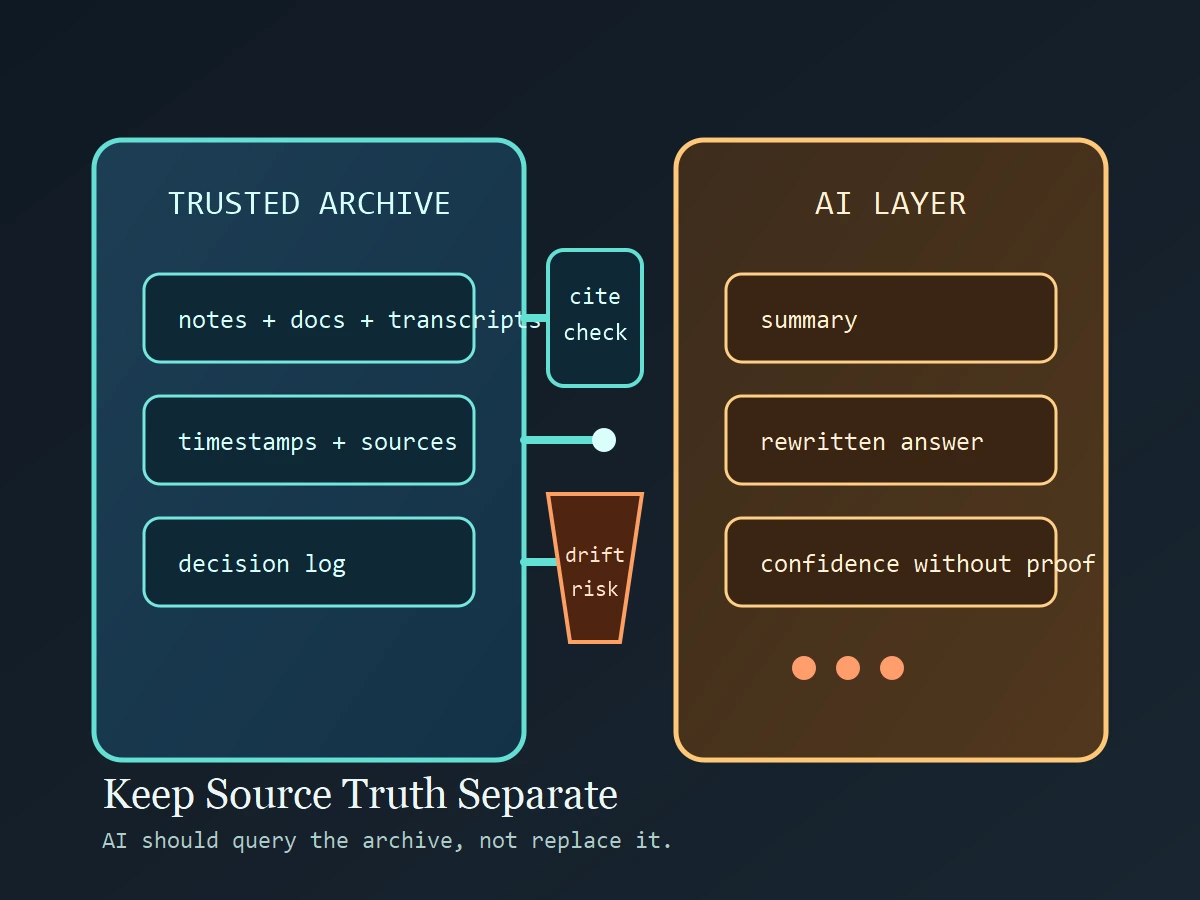

The safest pattern is simple. Keep a canonical layer that contains your actual archive: notes, documents, transcripts, bookmarks, highlights, and decision logs. Then put a conversational AI layer on top that queries, compares, summarizes, and surfaces material from that archive.

This distinction keeps the model from becoming the source of truth. Truth lives in the archive. AI is the interpreter that helps you navigate it.
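A minimal Python sketch of that separation, under stated assumptions: the `Note`, `Archive`, and `answer` names are hypothetical, and the keyword-overlap search is a crude stand-in for whatever semantic retrieval a real assistant would use. The only point it illustrates is that the conversational layer hands back note ids and verbatim text from the archive and never keeps its own version.

```python
from dataclasses import dataclass


@dataclass(frozen=True)  # frozen: a note cannot be mutated after creation
class Note:
    note_id: str
    text: str


class Archive:
    """Canonical layer (hypothetical): the only place original text lives."""

    def __init__(self, notes):
        self._notes = {note.note_id: note for note in notes}

    def get(self, note_id):
        return self._notes[note_id]

    def search(self, query):
        """Rank note ids by shared words; a stand-in for semantic search."""
        terms = set(query.lower().split())
        scored = []
        for note in self._notes.values():
            overlap = len(terms & set(note.text.lower().split()))
            if overlap:
                scored.append((overlap, note.note_id))
        # Highest overlap first; ties broken by note id for stable output.
        scored.sort(key=lambda pair: (-pair[0], pair[1]))
        return [note_id for _, note_id in scored]


def answer(archive, query):
    """Conversational layer: return (note_id, verbatim text) pairs only."""
    return [(note_id, archive.get(note_id).text) for note_id in archive.search(query)]


# Illustrative data, not taken from any real archive.
archive = Archive([
    Note("2023-10-pricing", "customers raised pricing complaints after the fall launch"),
    Note("2024-01-onboarding", "onboarding felt uncertain for new users"),
])
results = answer(archive, "pricing complaints")
```

Because every result carries a note id, any summary built on top of `results` can be traced back to the stored text it came from.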

Make the assistant cite the archive

If you ask AI to remind you what you think about pricing, onboarding, hiring, or product strategy, require citations. The answer should point back to notes, transcripts, or documents that support the claim. If it cannot, treat the response as brainstorming rather than memory retrieval. This one habit sharply reduces false recall.
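One way to make the citation habit mechanical is sketched below. It assumes the assistant can return its claims as `(note_id, quote)` pairs and that the archive is a plain dictionary of note id to text; both shapes, and the `classify_response` name, are assumptions for illustration rather than any product's API. A response counts as memory retrieval only if every quote appears verbatim in the note it cites.

```python
def classify_response(citations, archive):
    """Label a response by whether the archive actually supports it.

    citations: list of (note_id, quote) pairs supplied with the answer.
    archive:   dict mapping note_id -> original note text.
    """
    if not citations:
        return "brainstorming"  # nothing to check, so do not treat it as memory
    for note_id, quote in citations:
        source = archive.get(note_id)
        # Unknown note id, or a quote that is not in the cited note.
        if source is None or quote not in source:
            return "brainstorming"
    return "memory retrieval"


# Illustrative data only.
archive = {"2024-02-hiring": "We agreed to pause hiring until the Q2 budget is final."}

supported = classify_response(
    [("2024-02-hiring", "pause hiring until the Q2 budget")], archive
)
misquoted = classify_response(
    [("2024-02-hiring", "hire two engineers now")], archive
)
uncited = classify_response([], archive)
```

Exact substring matching is deliberately strict. It will reject honest paraphrases, which is the safer failure mode when the goal is to avoid false recall.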

Use recall before retrieval

Before asking the assistant for the answer, state the answer yourself. Then compare your own recollection with the archive-backed response. This preserves retrieval practice, which strengthens memory, while still giving you the benefit of external support. It also makes distortion easier to catch.
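The comparison step can be as simple as a word-level diff between what you wrote down from memory and what the archive says. The sketch below is a rough illustration, assuming plain-text notes; `compare_recall` is a hypothetical helper, and word overlap is a blunt measure that ignores synonyms and word order.

```python
import re


def tokens(text):
    """Lowercased set of words and numbers in the text."""
    return set(re.findall(r"[a-z0-9']+", text.lower()))


def compare_recall(recall, source):
    """Show what your recollection missed and what it added.

    recall: what you wrote from memory before consulting the archive.
    source: the archived text the assistant cited.
    """
    recalled, stored = tokens(recall), tokens(source)
    return {
        "missed": sorted(stored - recalled),       # in the archive, not in your recall
        "unsupported": sorted(recalled - stored),  # in your recall, not in the archive
    }


# Illustrative example only.
report = compare_recall(
    "We decided to raise prices in March",
    "We decided to delay the price change until June",
)
```

Here `report["unsupported"]` flags "raise" and "march", the two details the recollection got wrong. Writing the recall down first is what keeps the retrieval practice; the diff only makes the gaps visible.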

Review and reconsolidate

Do not let your best ideas live only as prompts and summaries. Periodically rewrite core insights in your own words. Build short evergreen notes. Convert repeated AI-assisted discoveries into compact internal models you can carry without the tool.

Limit sensitive data

Not every memory belongs in an AI system. Health details, legal matters, confidential client data, source code, and private emotional records deserve a much tighter standard. An external memory layer should be designed, not dumped into existence.

Final takeaway

AI can function as an external memory layer for the human mind, and the extended mind framework explains why that feels so natural. But the “external hard drive” metaphor is only half right. A hard drive stores. AI stores, retrieves, compresses, rewrites, and predicts. That makes it more useful than ordinary storage and more dangerous than ordinary storage.

Use AI to capture fragments, search your archive, and reactivate old context. Do not use it as a substitute for source verification, judgment, or first-principles understanding. The smartest AI-powered second brain is not one that thinks for you. It is one that helps you think with cleaner access to what you already know.

What to do this week

This week, audit one workflow you already offload to AI, such as meeting notes, reading highlights, or project retrospectives. Build a canonical archive for that workflow, then force your assistant to answer only from that archive with citations. If the system cannot show its sources, it is not memory support yet. It is just fluent guesswork.

Source Notes

- Andy Clark and David Chalmers, “The Extended Mind” (1998) for the foundational argument that external tools can become part of a cognitive system when they are reliably coupled to thinking.

- Daniel Wegner and colleagues on transactive memory for the idea that people often remember by knowing where knowledge resides.

- Betsy Sparrow, Jenny Liu, and Daniel Wegner, “Google Effects on Memory” (2011) for the finding that expected access changes what people remember.

- Evan Risko and Sam Gilbert, “Cognitive Offloading” (2016) for the broader framework of why humans use tools to reduce memory and processing demands.

- Benjamin Storm and Sasha Stone, “Saving-Enhanced Memory” (2015) for evidence that reliable saving can improve later learning.

- Evan Risko and colleagues on memory manipulation after offloading for the warning that externally stored memories can become vulnerable to contamination.

- Sam Gilbert, “Cognitive Offloading Is Value-Based Decision Making” (2024) for a current account of why humans strategically decide when to offload.