If you want to understand how technology is changing the human mind, the useful question is not whether phones are making us smarter or dumber. It is how tools keep shifting the division of labor between brain and environment. Writing, printed books, maps, notebooks, calculators, search engines, and smartphones all moved some part of memory or reasoning outside the skull. Brain-computer interfaces may move the next layer closer to the nervous system itself. By the end of this guide, you should have a practical way to think about external memory, cognitive offloading, smartphone-era attention, and what neural interfaces might really change next.

The short version is this: human cognition has always been partly tool-assisted. Smartphones accelerated that pattern by making external memory constant, portable, and socially connected. Neural interfaces may deepen the shift, but they do not remove the need for judgment, consent, and human control.

Human cognition has always extended beyond the skull

Why writing, reading, and diagrams already changed the mind

People often talk about smartphones as if they were the first technology to alter thought. They were not. Long before digital devices, humans used marks, symbols, and structured artifacts to store ideas outside biological memory. A grocery list lets you stop rehearsing every item in your head. A map lets you navigate space without memorizing every landmark in advance. A diagram lets you inspect relationships visually instead of carrying the whole structure mentally.

That matters because cognition is not just raw brainpower. It is also how the brain recruits outside supports. Cultural tools can even change the brain itself. In one study of adults learning to read, literacy training was associated with measurable changes in cortico-subcortical connectivity in the visual system. That is a strong reminder that a cultural technology like reading is not just a convenience layer. It can reorganize how the brain processes information.

So when people ask whether technology is changing human intelligence, the answer is yes, but not in a sudden smartphone-only way. The better framing is that humans have always built cognitive scaffolding around themselves. Each major tool changes what must be remembered internally, what can be stored externally, and how attention gets allocated.

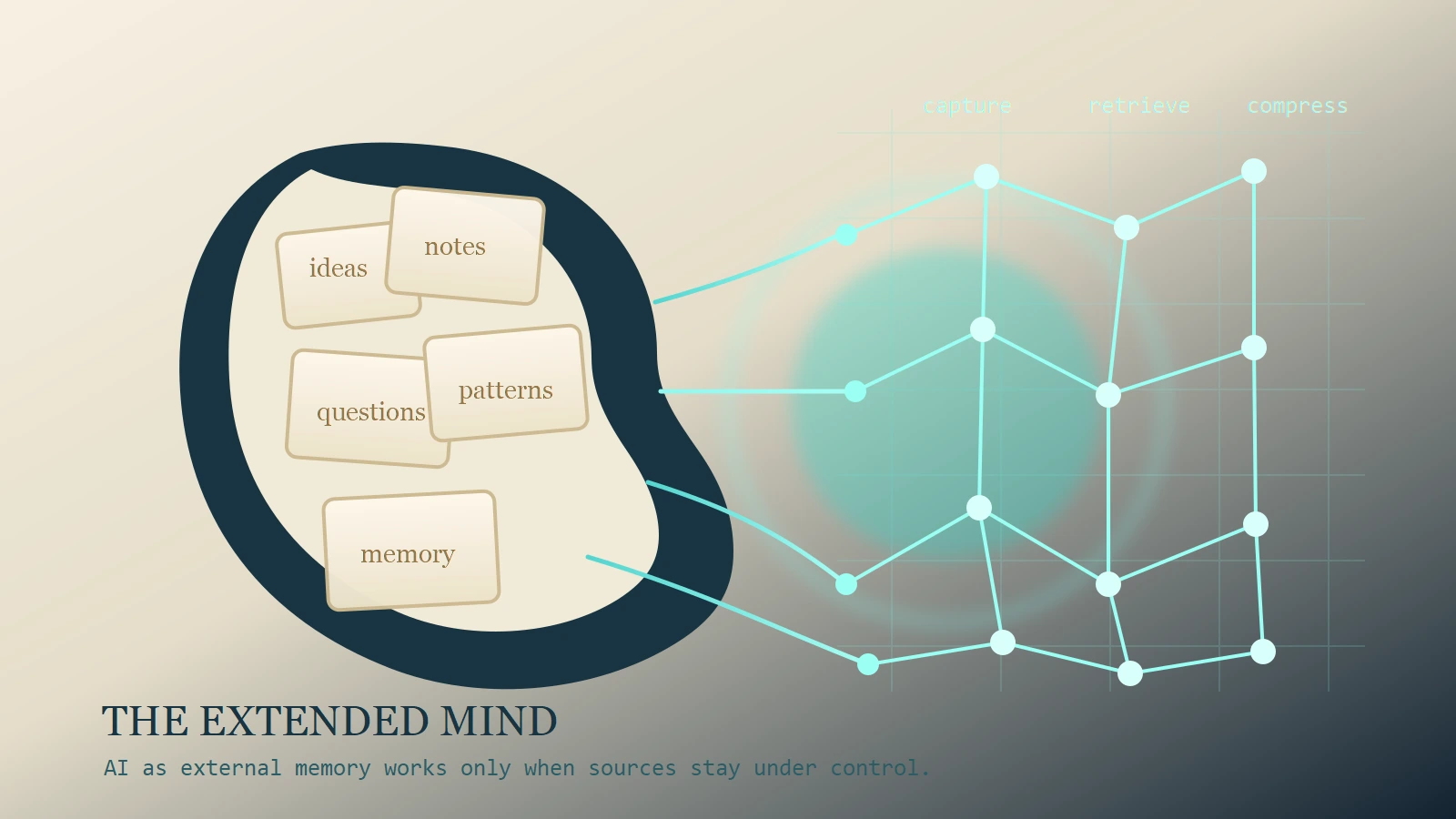

A plain-language definition of external memory

External memory means information stored outside your biological recall but still available to support thinking. A notebook is external memory. So is a whiteboard full of project notes. So is a digital calendar, a bookmark folder, a saved route, or a searchable archive of messages.

The easiest comparison is this:

- Internal memory is remembering your dentist appointment from recall.

- External memory is knowing that your calendar will surface it when needed.

Neither is automatically better. External memory reduces mental load. It also changes what your mind practices. If you always store a piece of information outside yourself, you may become less likely to encode it deeply. But you may also free up attention for more useful work.

What cognitive offloading actually is

How humans trade internal effort for tool-assisted recall

The research term for that trade is cognitive offloading. Risko and Gilbert describe it as using physical action or changes in the environment to reduce internal cognitive demand. In plain language, it means setting up the world so your brain does less storage or tracking work.

People do this constantly. You put your keys near the door so you do not have to remember where they are. You set reminders instead of mentally rehearsing a date all day. You ask a navigation app for turn-by-turn guidance instead of building the whole route from memory. None of that is strange or lazy. It is often efficient.

A simple example makes the idea concrete. Imagine planning a complicated week with travel, deadlines, and family obligations. You could try to hold every commitment in working memory, or you could build a calendar system with reminders, notes, and travel buffers. The second method does not mean you stopped thinking. It means you used a tool to reduce recall burden so you could focus on sequencing, tradeoffs, and decisions.

Example: remembering the fact versus remembering where to find it

One of the best-known studies in this area is Sparrow, Liu, and Wegner’s 2011 paper on what they called “Google effects on memory.” Their core finding was not that the internet destroys memory altogether. It was more specific: when people expect information to remain accessible externally, they become less likely to remember the information itself and more likely to remember where to find it.

That distinction matters. It suggests that digital tools can shift memory strategy from “store the content” to “store the path to the content.”

You can see the same shift in everyday life. Many people no longer memorize phone numbers the way they once did. That sounds like loss, and in one sense it is. But it is also a reallocation. The mind stops spending effort on one task because the environment now handles it reliably. The real question becomes whether that freed capacity gets used for higher-level reasoning or gets eaten by distraction.

What smartphones changed

The leap from occasional offloading to always-on offloading

A printed atlas, a filing cabinet, and a pocket notebook all support cognition, but they do so intermittently. A smartphone changed the frequency, speed, and emotional texture of that support. It is not just a memory aid. It is a calendar, search engine, map, camera, notebook, message archive, recommendation system, and social feed in one object.

That combination matters. The review by Firth and colleagues describes the “online brain” as an environment that may influence attention, memory, and social cognition all at once. Smartphones compress that environment into something we carry everywhere. The result is a shift from occasional cognitive offloading to nearly continuous offloading.

Think about navigation. With a paper map, you usually studied the route before leaving and then used the map when necessary. With a phone, you often consult the route in real time and let the device decide when the next turn matters. That reduces planning burden. It may also reduce route encoding because the brain does less independent spatial work.

The same logic applies to reminders, search, contact memory, and even social memory. A phone does not just store facts. It stores cues, contexts, photos, messages, and location histories that can stand in for parts of recall.

Attention costs are real, but not as simple as the headlines suggest

This is where public discussion often gets sloppy. It is tempting to say “smartphones ruin attention,” full stop. The evidence is more nuanced.

Some studies do find that the mere presence of a smartphone can compete for limited attentional resources. Skowronek, Seifert, and Lindberg reported reduced basal attentional performance when a smartphone was present in the environment. That supports the everyday intuition that a phone on the desk is not always a neutral object.

At the same time, direct replication work has been less tidy than popular summaries imply. Ruiz Pardo and Minda reexamined the well-known “brain drain” result from Ward et al. and did not recover the same effect cleanly. A later meta-analysis by Böttger, Poschik, and Zierer found an overall negative effect in the literature, but not one that justifies sweeping claims about universal cognitive collapse.

The useful conclusion is not that the problem is fake. It is that the effect depends on context, task type, habit, and how the device is positioned in the workflow. A phone can act like a quiet memory prosthetic in one moment and an attention tax in the next.

A concrete comparison helps:

- During deep reading, visible notifications and latent social expectations can fragment sustained attention.

- During travel, a phone can reduce working-memory load by handling route tracking, check-in details, and schedule changes.

The device is the same. The cognitive role is not.

This is a tradeoff, not a simple decline story

When offloading expands capability

The downside story gets more attention than the upside, but it is incomplete on its own. Offloading can expand capability when it frees the mind for better work.

A good analogy is arithmetic. Calculators reduced the need to perform certain computations mentally. They also made more complex quantitative work accessible in classrooms, engineering, finance, and everyday planning. The real gain was not just faster calculation. It was the ability to shift attention upward toward problem structure and interpretation.

External memory can work the same way. If a researcher no longer spends energy remembering every citation location, that effort can move into comparison and synthesis. If a team uses a shared note system well, fewer decisions disappear into chat history and more attention remains for actual judgment. In that sense, digital tools can widen effective cognitive reach.

This is why cognitive offloading should not be treated as a synonym for weakness. It is often a rational strategy. Humans have finite working memory, limited attentional bandwidth, and competing demands. Building supports around those limits is part of intelligence, not evidence against it.

When offloading weakens encoding or sustained focus

Still, there is a real cost when offloading becomes reflexive rather than strategic. If the device becomes the default answer to every small uncertainty, the brain may encode less, rehearse less, and tolerate less friction in the process of remembering.

That does not mean memory disappears. It means memory style changes. You may become better at cue-driven retrieval and worse at spontaneous recall. You may become faster at reaching information and slower at holding an argument in mind without interruption.

The Firth review is useful here because it does not make one sweeping claim. It suggests that the online environment may shape several domains at once, especially attention and memory retrieval. That is a better fit for lived experience. Many people are not forgetting everything. They are relying on a system where retrieval is external, fast, and fragmented.

The practical risk is not just weaker fact recall. It is reduced sustained thought. A person who constantly checks, switches, and reorients may still access enormous information, but struggle to stay with one difficult line of reasoning long enough to transform it into understanding.

That is why design matters. A phone used as a quiet memory layer is one thing. A phone used as a loop of alerts, feeds, and unresolved tabs is another.

From smartphones to synapses

What brain-computer interfaces can actually do today

The phrase “from smartphones to synapses” sounds dramatic, so it needs grounding. Brain-computer interfaces are not consumer mind-upgrade devices in any broad sense today. They are mainly specialized systems that record neural activity and translate it into useful outputs such as cursor movement, text, speech, or device control, often in assistive or clinical settings.

That distinction matters. A smartphone is an external interface you consult intentionally. A BCI creates a more direct path between neural signals and digital systems.

Recent progress is real. Nature Electronics noted in September 2024 that advances in sensors, electrodes, and probes are expanding BCI capabilities while raising wider ethical and societal questions. The 2023 Nature paper by Willett and colleagues showed a high-performance speech neuroprosthesis that restored rapid communication in a participant who could no longer speak intelligibly because of amyotrophic lateral sclerosis (ALS). Metzger and colleagues reported related work on speech decoding and avatar control.

Those are important milestones, but they are not proof that ordinary consumers will soon merge with cloud cognition. The current frontier is still mostly about restoring lost function, improving assistive communication, and pushing signal decoding farther than earlier systems could. Readers who want a dedicated breakdown of this field can continue with MindoxAI’s guide to brain-computer interfaces and the future of AI.

Why neural interfaces are not just “better smartphones”

It is tempting to think of a neural interface as a smartphone without the screen. That misses the real difference.

With a phone, you decide when to look, what to search, and whether to trust the result. The interface sits outside you. With a neural interface, the system gets closer to the signal layer of intention, motor planning, or communication. That changes the privacy, consent, and reliability stakes.

A comparison makes this clearer:

- A search engine query is an intentional request you type.

- A speech neuroprosthesis attempts to decode neural patterns tied to intended communication.

Both are tools. They do not pose the same design problem.

So the future arc is not simply more convenience. It is a shift in where cognition meets machinery. Smartphones changed retrieval and coordination from the outside. Neural interfaces may change communication and control from much closer in.

The future of humanity question is really about agency

Which cognitive functions should stay internal

Once the conversation moves from phones to synapses, the future-of-humanity question stops sounding abstract. It becomes practical very quickly.

Not every cognitive task should be externalized to the same degree. Outsourcing route memory to navigation software is relatively low-stakes. Outsourcing moral judgment, intimate preference formation, or interpretation of neural signals is different.

The useful dividing line is not natural versus artificial. It is agency. Which parts of cognition should remain meaningfully owned, interpreted, and revised by the person? Which parts are safe to automate, scaffold, or decode? Which parts require strong consent and clear boundaries?

Judgment is the clearest example. You can offload reminders, transcription, route planning, and document search without losing authorship of your decisions. But if a system begins to infer intention from neural data or shape choice through always-on cognitive mediation, the stakes change. That is no longer just a productivity tool. It becomes part of the architecture of agency itself.

This is also where the broader comparison between AI and human intelligence becomes useful. External tools can support cognition, but accountable judgment still belongs to people.

Privacy, inequality, and governance before enhancement

This is why the most serious BCI discussions are already about more than technical capability. They are about governance, privacy, and who benefits.

Neural data is not ordinary app telemetry. Even if current BCIs remain narrow and clinical, the closer technology gets to decoding intention, the stronger the case for strict limits around access, storage, interpretation, and commercial use. Nature Electronics was explicit that the field carries ethical, legal, and societal implications alongside engineering progress.

Inequality matters too. If advanced cognitive tools become expensive layers of enhancement for a small minority while basic digital dependence fragments everyone else’s attention, the result is not a uniform cognitive upgrade. It is stratification.

So the biggest question is not whether tools will keep changing cognition. They will. The question is whether we design that shift around human flourishing or around convenience without boundaries.

Final Thoughts

The evolution of human intelligence is not a story about the brain being replaced by gadgets. It is a story about how humans keep redistributing memory, attention, and coordination across brains, tools, and environments.

Writing changed that balance. Literacy changed it again. Search engines and smartphones changed it at a new speed and scale. Brain-computer interfaces may push the next step closer to the nervous system, but the underlying question remains the same: what should the tool handle, and what should remain under human judgment?

That is the right way to read the future. Not as a contest between pure biology and pure technology, but as an ongoing redesign of where thinking happens and who stays in charge of it. Readers who want the longer-form AI cognition angle can also read MindoxAI’s piece on why AGI still can’t think like a human mind.