If you are trying to judge AGI claims without getting pulled into hype, this article gives you a practical framework. In the next few minutes, you will see exactly where current AI can look human, where it still differs from human cognition, and why that distinction matters for students, founders, researchers, and professionals. The goal is clear thinking, not dramatic claims.

The short version is this: AI capability is improving quickly, but “AGI thinking like a human mind” is a deeper claim than strong benchmark scores or fluent answers. To evaluate that claim well, we need to separate three layers: performance, reasoning process, and consciousness.

If you want a baseline definition first, see this primer on AGI explained, then return here for the cognition-level analysis.

First, Define the Question Correctly

Most confusion starts with mixed definitions. So we begin there.

Artificial general intelligence (AGI) usually means broad competence across many tasks. It does not automatically mean human-like cognition in every sense. The DeepMind-led “Levels of AGI” paper sharpens this discussion by rating systems on performance (depth) and generality (breadth), and by treating autonomy as a separate question about how a system is deployed (Levels of AGI).

Human cognition means how minds build and use understanding through perception, memory, emotion, social context, goals, and causal models.

A simple comparison:

- A model can produce a polished explanation of how to ride a bicycle.

- A child can actually learn balance through physical trial, correction, and adaptation.

Both can produce useful knowledge, but the underlying process is different. One is mostly pattern synthesis in symbol space. The other is embodied learning in the world.

That is why we should ask a tighter question: Do current AGI-like systems merely produce human-like outputs, or do they form and use understanding the way people do?

How Human Cognition Works (and Why It Is Hard to Replicate)

Human thought has hard limits, but it also combines several flexible strengths that remain difficult to reproduce together in a single AI system.

Humans learn from sparse data plus rich context

People can generalize from very few examples when context is meaningful. Show a child once that a glass can shatter, and they often extend that lesson to other fragile objects.

Cognitive science points to ingredients behind this efficiency: intuitive physics, intuitive psychology, causality, and compositionality (Lake et al., 2017).

Comparison:

- Human learner: fewer examples, more prior structure.

- Model learner: many examples, weaker grounding unless explicitly engineered.

Humans build causal stories, not only correlations

A correlation says two things often appear together. A causal model says one thing helps produce another.

Example:

- Correlation-level claim: “People carry umbrellas when streets are wet.”

- Causal-level understanding: “Rain causes wet streets and causes umbrella use.”

Humans rely on this causal style for planning and counterfactual reasoning: “What happens if I change one variable?”
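To make the difference concrete, here is a minimal simulation sketch. It assumes a toy world in which rain is the only cause of both wet streets and umbrella use. The probabilities (30% rain, 90% and 5% umbrella rates) and the `force_wet_streets` intervention are illustrative assumptions, not measured values.

```python
import random

def simulate(n_days, rng, force_wet_streets=None):
    """Simulate days under a toy causal model: rain -> wet streets, rain -> umbrellas.

    If force_wet_streets is set, street wetness is fixed by intervention
    (for example, a street-cleaning truck) regardless of the weather.
    """
    days = []
    for _ in range(n_days):
        rain = rng.random() < 0.3  # assumed 30% chance of rain
        wet = rain if force_wet_streets is None else force_wet_streets
        umbrella = rng.random() < (0.9 if rain else 0.05)  # assumed umbrella rates
        days.append((rain, wet, umbrella))
    return days

def umbrella_rate(days, wet_value):
    """Share of umbrella days among the days whose street state equals wet_value."""
    matching = [umbrella for _, wet, umbrella in days if wet == wet_value]
    return sum(matching) / len(matching)

rng = random.Random(0)
observed = simulate(20_000, rng)                          # just watch the world
hosed = simulate(20_000, rng, force_wet_streets=True)     # change one variable

obs_wet = umbrella_rate(observed, True)
obs_dry = umbrella_rate(observed, False)
do_wet = umbrella_rate(hosed, True)

print(f"observed   P(umbrella | wet streets)     = {obs_wet:.2f}")
print(f"observed   P(umbrella | dry streets)     = {obs_dry:.2f}")
print(f"intervened P(umbrella | do(wet streets)) = {do_wet:.2f}")
```

Under these assumptions, observation shows umbrellas on roughly 90% of wet-street days and about 5% of dry ones, yet wetting the streets by hand leaves umbrella use near its overall base rate of about 30%. The correlation is real, but only the causal model predicts what happens when you change one variable.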

Human reasoning is limited, yet adaptive

Humans do not have unlimited working memory. A well-known result suggests active short-term capacity is often around four chunks (Cowan, 2001).

But people compensate through strategies: chunking, analogy, external tools, and social reasoning. So human cognition is not about perfect memory or raw speed. It is about adaptive control under constraints.

Where AGI Looks Human but Is Still Different

This section is the center of the debate. Modern systems can look strikingly human in conversation, coding help, and synthesis tasks. That visible fluency can hide deeper differences.

For a broader side-by-side overview, this companion article on AI vs human intelligence is a useful background read.

Benchmark success can overstate robust reasoning

Benchmark progress is real, and it matters. But benchmark scores are not identical to stable, human-like general reasoning.

The GSM-Symbolic study shows this clearly. Models that score well on a standard grade-school math benchmark can lose accuracy when only surface details such as names and numerical values change, even though the underlying reasoning steps stay the same (GSM-Symbolic).

Comparison:

- Human student: often transfers logic across wording variants.

- Model: may be sensitive to phrasing that should be irrelevant.

This is not a dismissal of model progress. It is a reminder that robust transfer is a different bar.
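A small sketch shows what testing for robust transfer can look like in practice. It is written in the spirit of templated benchmark variants, not taken from any study's code or data. The templates, the names, and the `add_all_numbers` solver are hypothetical: the solver stands in for a system that has learned a surface pattern ("add the numbers you see") instead of the problem's structure.

```python
import random
import re

BASE = "{name} has {a} apples and buys {b} more. How many apples does {name} have now?"
PERTURBED = ("{name} has {a} apples, {c} of which are green, and buys {b} more. "
             "How many apples does {name} have now?")
NAMES = ["Ava", "Noor", "Kenji", "Lucia"]  # illustrative values

def make_items(template, n, rng):
    """Fill the template with fresh names and numbers; return (question, answer) pairs."""
    items = []
    for _ in range(n):
        a, b = rng.randint(2, 60), rng.randint(2, 60)
        c = rng.randint(1, a)  # irrelevant detail: colour does not change the total
        question = template.format(name=rng.choice(NAMES), a=a, b=b, c=c)
        items.append((question, a + b))
    return items

def add_all_numbers(question):
    """Hypothetical pattern-matching solver: sums every number in the text."""
    return sum(int(token) for token in re.findall(r"\d+", question))

def accuracy(solver, items):
    """Fraction of items the solver answers correctly."""
    return sum(solver(question) == answer for question, answer in items) / len(items)

rng = random.Random(0)
base_acc = accuracy(add_all_numbers, make_items(BASE, 200, rng))
perturbed_acc = accuracy(add_all_numbers, make_items(PERTURBED, 200, rng))

print(f"base wording:      {base_acc:.2f}")       # 1.00
print(f"irrelevant clause: {perturbed_acc:.2f}")  # 0.00
```

The toy solver looks perfect on the base wording and fails completely once an irrelevant number appears. Real models are far less extreme, but the design point carries over: report accuracy across variants, not only on the canonical phrasing.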

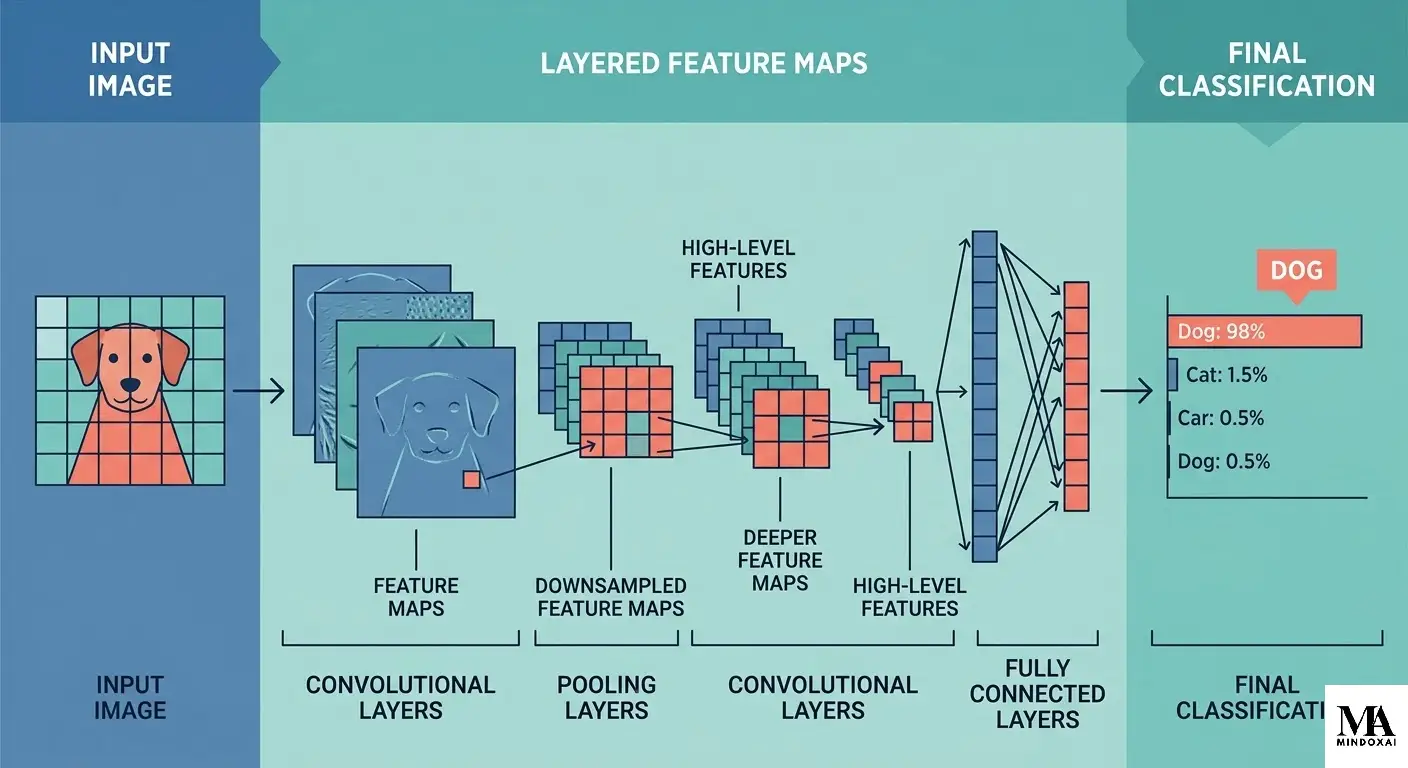

Next-token prediction is strong, but not equivalent to grounded understanding

Large language models are trained mainly to predict likely next tokens from large corpora. This can produce behavior that resembles explanation and reasoning.

Yet the objective itself does not guarantee grounded world models, stable causal inference, or consistent long-horizon reasoning in unfamiliar conditions.

The GPT-4 technical report describes impressive capability growth while also noting limitations and safety concerns that remain important in practice (GPT-4 Technical Report).

Concrete example:

- A model may produce an excellent medical-style summary.

- A clinician integrates body signs, patient history, uncertainty tolerance, and liability constraints in real time.

The output can look similar. The cognitive pipeline is not the same.

Fluency can create the illusion of understanding

When an answer is coherent and confident, people infer depth. That is a normal human response. It is also a reliability risk.

A useful mental rule:

- A readable answer means “the output is polished.”

- Reliable understanding means “the reasoning stays sound when conditions change.”

Those are related but separate properties.

The Hard Problems AGI Still Has

If we move from broad claims to concrete gaps, the picture becomes clearer and more useful.

1) Common-sense generalization in open environments

François Chollet defines the intelligence of a system as its skill-acquisition efficiency over a scope of tasks, relative to its priors, its experience, and the difficulty of generalization (On the Measure of Intelligence).

By that standard, one key challenge is adapting efficiently to unfamiliar situations without massive retraining.

Example:

- Human: enters a new kitchen, infers function from handles, heat cues, and social norms.

- Model: may describe likely tool use well in text, but can fail when interaction becomes physical, ambiguous, or safety-critical.

2) Stable causal reasoning under small perturbations

Human reasoning is imperfect, but causal logic often remains stable under modest wording changes. AI systems still show brittle zones where tiny prompt edits alter conclusions.

This matters in high-stakes settings. If a legal or medical recommendation flips because phrasing changed, that is not human-like robustness.
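One simple way to quantify this kind of brittleness, even without a ground-truth answer, is to ask the same question several ways and count how often the answer changes. The sketch below is illustrative only: `flip_rate` is a made-up metric name, and `keyword_model` is a hypothetical stand-in for a system that keys on a single surface word.

```python
from collections import Counter

def flip_rate(answers):
    """Share of answers that disagree with the most common one (0.0 means fully stable)."""
    if not answers:
        raise ValueError("need at least one answer")
    _, majority_count = Counter(answers).most_common(1)[0]
    return 1 - majority_count / len(answers)

def keyword_model(prompt):
    """Hypothetical brittle system: its answer depends on one surface word."""
    return "review today" if "urgent" in prompt.lower() else "can wait"

# Four phrasings of the same situation; only the first uses the word "urgent".
paraphrases = [
    "Urgent: the contract deadline is tomorrow. Should legal review it today?",
    "The contract deadline is tomorrow. Should legal review it today?",
    "Should legal review the contract today, given that it is due tomorrow?",
    "The contract is due tomorrow. Does legal need to look at it today?",
]

answers = [keyword_model(prompt) for prompt in paraphrases]
rate = flip_rate(answers)

print(answers)
print(f"flip rate: {rate:.2f}")  # 0.25
```

A flip rate of zero does not prove the reasoning is right, only that it is stable. A nonzero rate on paraphrases that should be equivalent is a direct, reportable sign of the brittleness described above.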

3) Integrated goal formation and value awareness

Humans generate goals from needs, values, relationships, and long-term identity. Current AGI-like systems execute given objectives; they do not show human-style motive formation.

Comparison:

- A human professional decides not only how to do a task, but whether the task should be done.

- A model optimizes within the instructions and guardrails it is given.

That distinction shapes accountability, trust, and deployment design.

What About Machine Consciousness?

People often ask a direct question: if AI sounds reflective, is it conscious?

Here, definitions are crucial.

- Behavioral competence: the system produces outputs that appear thoughtful or empathic.

- Subjective experience: the system has an inner felt point of view.

These are not the same claim.

Example:

- A model says, “I know this is painful, and I am here to help.”

- This can be supportive behavior.

- It is not direct evidence of felt experience.

A major interdisciplinary review proposes theory-linked indicators for machine consciousness and concludes that no current system is a strong candidate for consciousness, while finding no obvious technical barrier to building systems that satisfy those indicators (Butlin et al., 2023).

If you want a deeper philosophical walkthrough, this related post asks whether AI can be conscious.

So the balanced conclusion is simple: consciousness is not ruled out forever, but present claims should stay evidence-first.

A Quick Reader Test for Future AGI Claims

When you see a new AGI headline, run this five-point check.

- What exactly was demonstrated?
  - One benchmark, one domain, or broad transfer?

- What changed in the setup?
  - Did performance remain strong under perturbation?

- Is the claim about output or cognition?
  - “Sounds human” and “thinks like a human” are different claims.

- Is consciousness being implied without evidence?
  - Require explicit indicator-based support.

- Are failure modes reported clearly?
  - Serious research includes limits, not only wins.

This small checklist helps avoid both extremes: blind hype and blanket dismissal.

What Would Need to Change for AGI to Think More Like Humans?

If the target is closer human-like cognition, several research directions matter.

Better grounding through world interaction

Text-only scaling is powerful, but human-like cognition likely needs richer sensorimotor grounding.

Comparison:

- Reading recipe books gives symbolic knowledge.

- Cooking under time pressure teaches timing, smell, texture, and adaptation.

Stronger causal and compositional learning

Human learners combine reusable concepts into new structures quickly. Building this kind of compositional causal flexibility remains central in the cognitive science agenda (Lake et al., 2017).

Better evaluation culture in deployment

Organizations need evaluation discipline that tracks robustness, transfer quality, and failure behavior, not only headline benchmark gains.

The practical governance side supports this. Frameworks such as NIST AI RMF emphasize measurable risk management in real settings where people may over-trust fluent outputs (NIST AI RMF 1.0).

AGI vs Human Intelligence at a Glance

The easiest way to avoid confusion is to compare dimensions directly instead of asking one giant yes-or-no question.

| Dimension | AGI-like systems today | Human cognition |
|---|---|---|
| Learning source | Mostly large-scale training data and optimization | Lifelong embodied, social, and goal-directed learning |
| Generalization | Strong in familiar distributions, uneven in novelty | Often efficient in novelty through abstraction and context |
| Causal reasoning | Emerging, but can be brittle under perturbation | Imperfect but usually stable in everyday transfer |
| Motivation | Objective-driven from external task setup | Value- and identity-shaped internal goal systems |
| Consciousness evidence | No robust consensus evidence in current systems | Direct first-person experience is part of normal human life |

A concrete example makes the table practical. Imagine a founder deciding whether to launch a new healthcare feature next week:

- The AI assistant can synthesize regulations, summarize user feedback, and generate options in minutes.

- The human founder weighs trust, brand risk, legal exposure, team morale, and long-term values.

Both are doing cognition, but not the same kind. One is high-speed synthesis over learned statistical structure. The other integrates social responsibility, personal judgment, and lived accountability.

This is why “AGI vs human intelligence” is not a winner-loser scoreboard. It is a systems comparison. In many workflows, the best results come from combining machine speed with human judgment instead of forcing one-to-one equivalence.

Final Thoughts

AGI is advancing, and the progress is meaningful. But the phrase “AGI thinking like a human mind” should be treated as a high bar, not a casual headline.

The current evidence supports a calibrated view: modern AI can produce increasingly human-like outputs, yet still differs from human cognition in grounding, robust causal transfer, adaptive goal formation, and consciousness evidence.

That distinction improves decision quality. It helps researchers ask better questions, helps builders design safer systems, and helps readers avoid false certainty in both directions.

It becomes even more important when you evaluate longer-horizon capability claims around superintelligence risks.

Sources

- Levels of AGI: Operationalizing Progress on the Path to AGI

- Building machines that learn and think like people

- On the Measure of Intelligence

- GPT-4 Technical Report

- GSM-Symbolic: Understanding the Limitations of Mathematical Reasoning in Large Language Models

- Consciousness in Artificial Intelligence: Insights from the Science of Consciousness

- The magical number 4 in short-term memory

- AI Index Report 2025

- NIST AI RMF 1.0