If AGI headlines feel confusing, you are not alone. This guide explains what Artificial General Intelligence is, how it differs from today’s AI, and how to evaluate big AGI claims without guesswork. You will get plain-language definitions, concrete examples, and trusted sources. By the end, the meaning of AGI, the contrast between AGI and narrow AI, and the term strong AI should make practical sense, even if you are new to advanced AI topics.

What Is Artificial General Intelligence (AGI)?

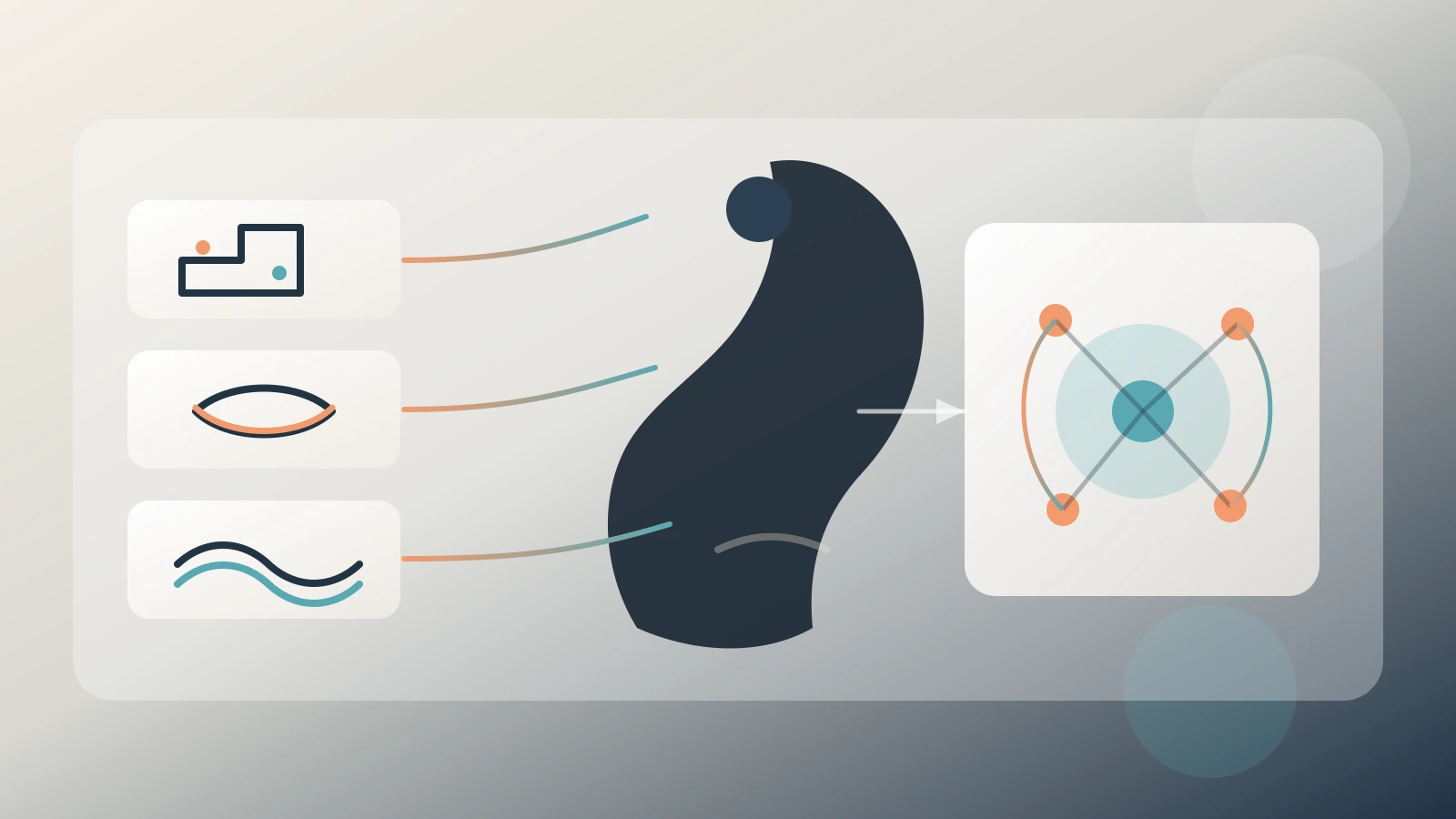

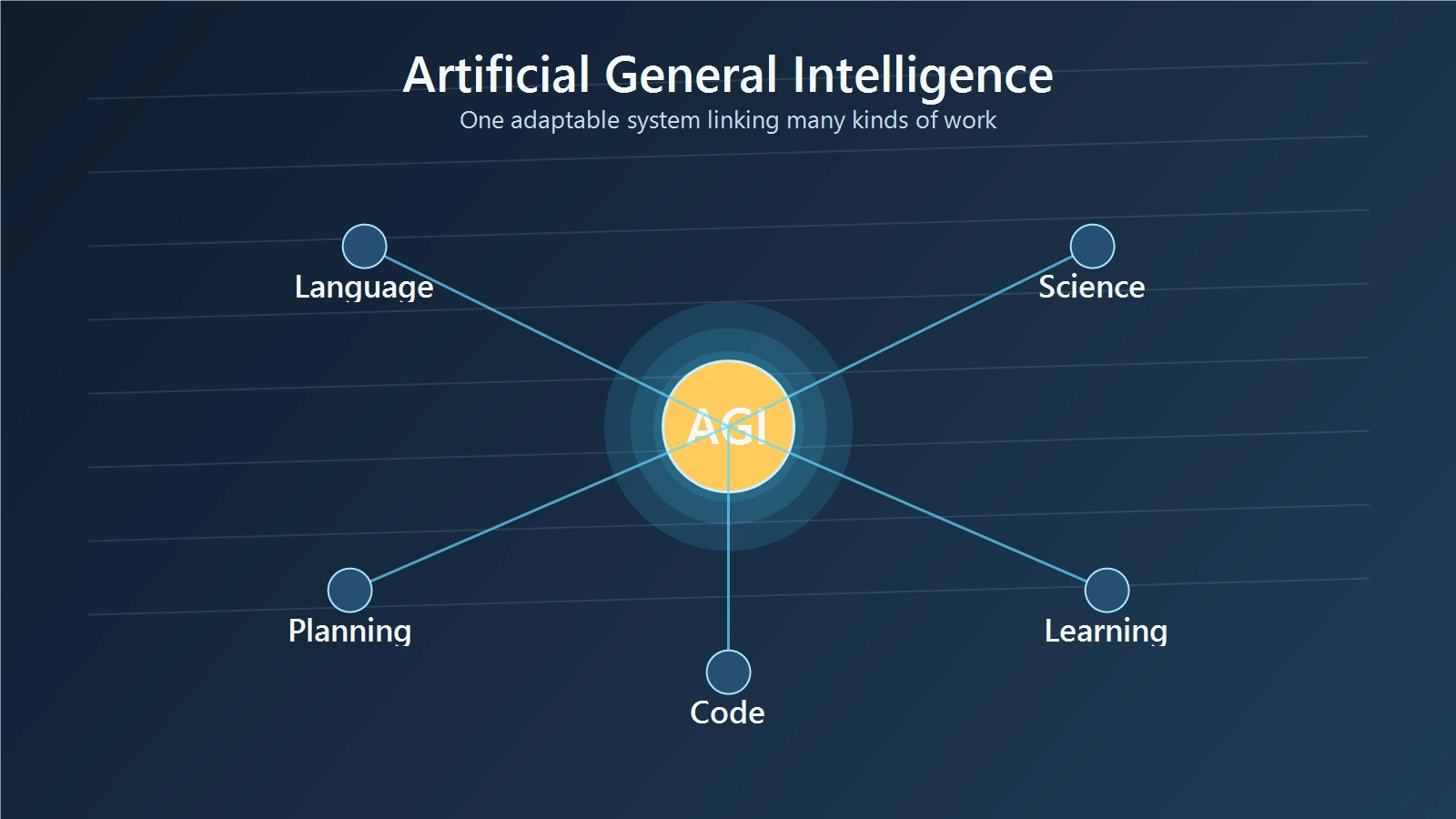

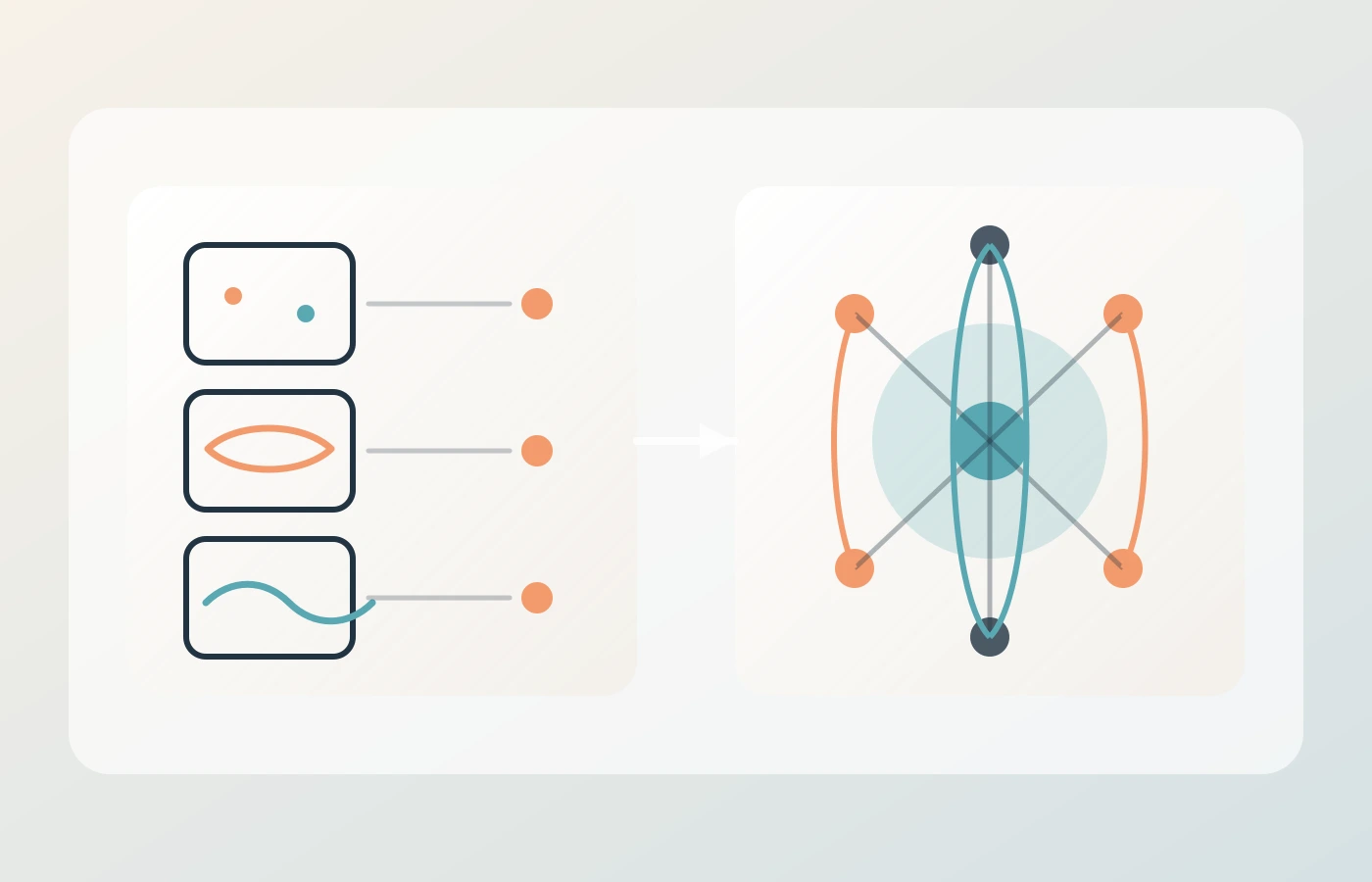

Artificial General Intelligence (AGI) is usually described as AI that can handle many different cognitive tasks at human-like or higher levels, including new tasks it was not narrowly trained for.

That sounds straightforward, but there is a key point: the field does not have one final definition. OpenAI’s charter defines AGI as highly autonomous systems that outperform humans at most economically valuable work. A DeepMind-led paper on AGI levels says directly that no consensus definition has been accepted across the field.

A simple comparison helps:

- Narrow AI is a specialist tool.

- AGI would be a flexible general problem-solver.

So when people ask for a plain explanation of AGI, the practical answer is this: AGI is not just a better chatbot. It is the idea of broad, adaptable intelligence.

AGI vs Narrow AI: The Difference That Matters

The difference between AGI and narrow AI becomes clear when you look at real systems.

Most AI in use today is narrow AI. It can be world-class in one domain while remaining limited outside that domain.

Examples:

- AlphaGo reached superhuman play in Go.

- AlphaFold predicts protein structures with high accuracy.

- Whisper performs robust speech recognition across many conditions.

These are major scientific and engineering wins. But each one is task-focused.

AlphaGo cannot run a hospital. AlphaFold cannot negotiate a legal contract. Whisper cannot design a physics experiment. Their strength is depth, not general breadth.

That is the core of AGI vs narrow AI:

- Narrow AI: excellent at bounded tasks.

- AGI: competent across many domains, with reliable transfer to unfamiliar tasks.

Think of narrow AI as a set of elite specialists. AGI would be closer to a capable generalist who can learn and switch contexts quickly.

Is AGI the Same as Strong AI?

People often use AGI and strong AI as interchangeable labels, but they come from slightly different discussions.

The phrase “strong AI” is tied to philosophy of mind debates, especially John Searle’s 1980 paper Minds, Brains, and Programs. In that context, the question is not only performance, but whether a machine genuinely understands.

AGI is more often used in capability language: can a system perform broad cognitive work across domains?

A clean way to avoid confusion:

- Use AGI for capability scope and generality.

- Use strong AI when the claim involves mind-like understanding or consciousness.

This distinction is useful for students and professionals because many AGI arguments mix technical claims with philosophical claims.

Do We Have AGI Yet?

Short answer: no broadly accepted evidence says AGI exists today.

Current frontier models are powerful and increasingly general in interface. They can write, summarize, code, and reason across many topics. But they still show reliability gaps. The GPT-4 technical report, for example, documents both broad capabilities and persistent limitations, including factual errors.

A model that looks general in conversation is not automatically general in robust real-world performance.

A concrete comparison:

- Passing many exam-style tasks shows breadth.

- Handling new, open-ended, high-stakes tasks with stable reliability shows deeper general intelligence.

Most systems today are stronger at the first than the second.
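The gap between these two kinds of evidence can be made concrete with a small sketch. It assumes each task was attempted several times and each attempt was recorded as a pass or a fail. The task names and results are invented for illustration and do not come from any real evaluation.

```python
# Hypothetical results: each task was attempted three times (True = pass).
# The task names and outcomes are made up for illustration.
results = {
    "summarize_report": [True, True, True],
    "write_sql_query": [True, False, True],
    "plan_lab_experiment": [False, True, False],
    "triage_support_ticket": [True, True, True],
}


def solved_at_least_once(results):
    """Fraction of tasks with at least one passing attempt (a breadth signal)."""
    return sum(any(attempts) for attempts in results.values()) / len(results)


def solved_every_time(results):
    """Fraction of tasks where every attempt passed (a reliability signal)."""
    return sum(all(attempts) for attempts in results.values()) / len(results)


breadth = solved_at_least_once(results)
reliability = solved_every_time(results)
print(f"solved at least once: {breadth:.2f}")
print(f"solved every time:    {reliability:.2f}")
```

In this made-up data every task is solved at least once, but only half are solved on every attempt. A system can look broad on the first measure while still being unreliable on the second.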

This is why “Do we have AGI?” is still open. If someone asks for examples of artificial general intelligence, the careful answer is: we have strong multi-capability AI systems, but no example that the whole field accepts as AGI.

How Researchers Track Progress Toward AGI

There is no single AGI finish line, so researchers rely on multiple lenses.

1) Historical test lens

The Turing Test asked whether a machine’s replies in a text conversation could be indistinguishable from a human’s. It is historically important, but most researchers now see it as insufficient on its own.

2) Generalization lens

Francois Chollet’s work argues that intelligence should include skill transfer to unseen tasks, not just performance on familiar benchmark patterns.

3) Benchmark lens

Projects like ARC-AGI try to stress abstraction and transfer. These tests are designed to reduce shortcut behavior and reward more general reasoning.

4) Capability-level lens

The DeepMind AGI levels paper proposes staged levels to classify system competence, from narrow systems to increasingly general ones.

A practical takeaway: do not trust one headline benchmark. Look for consistent progress across reliability, transfer, autonomy, and safety.

Think of it like judging a driver. Passing one closed-track test does not prove safe driving in every city, weather, and emergency. AGI claims need that broader standard.
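One way to apply that broader standard is to report the weakest dimension next to the average, so a single strong benchmark cannot hide a weak area. The sketch below assumes scores normalized to the range 0 to 1. The dimension names follow the takeaway above, and the numbers are placeholders rather than measurements of any real system.

```python
from statistics import mean

# Placeholder scores in [0, 1]; not measurements of any real system.
scores = {
    "reliability": 0.62,
    "transfer": 0.41,
    "autonomy": 0.55,
    "safety": 0.70,
    "headline_benchmark": 0.95,
}


def summarize(scores):
    """Return the average score, the weakest dimension, and its score."""
    weakest = min(scores, key=scores.get)
    return mean(scores.values()), weakest, scores[weakest]


average, weakest, weakest_score = summarize(scores)
print(f"average: {average:.2f}")
print(f"weakest: {weakest} ({weakest_score:.2f})")
```

Here the headline benchmark lifts the average, while the weakest dimension shows where the claim is least supported.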

Potential Benefits of AGI (If It Arrives)

Discussing the future of AGI is worthwhile, but only if the discussion stays concrete.

Potential upside could include:

- Faster scientific discovery by combining reasoning, simulation, and literature synthesis.

- Better adaptive tutoring that responds to each learner’s misunderstandings.

- Stronger decision support in medicine, logistics, and infrastructure when systems can connect multiple data streams.

Example scenario:

A research lab currently uses separate tools for searching papers, designing experiments, writing code, and analyzing results. A more general system could coordinate these steps while humans stay in control of goals and validation.

That kind of support could raise productivity. But productivity alone is not the same as trustworthy deployment.

Risks and Governance: Why AGI Debate Is Also a Policy Debate

AGI is not only a technology topic. It is also a governance topic.

Main risk areas include:

- Reliability failures in critical settings.

- Misuse at scale, including fraud, manipulation, and cyber abuse.

- Concentrated control over highly capable systems.

- Uneven social and labor impacts.

The policy base is already forming:

- NIST AI RMF 1.0 provides practical risk management guidance.

- NIST Generative AI Profile extends those controls for generative models.

- OECD AI Principles provide global guidance on trustworthy, human-centered AI.

- UNESCO Recommendation on the Ethics of AI sets rights-based ethical guardrails.

- EU AI Act creates legal obligations for classes of AI systems, including general-purpose AI models.

A useful comparison is aviation safety. Aircraft are judged by testing, incident handling, and certification processes, not just raw speed. AGI governance needs the same discipline: capability plus controls.

Future of AGI: Better Questions Than “When Will It Arrive?”

Timeline claims are easy to publish and hard to verify. Different experts make very different predictions.

For readers, better questions are:

- How broad is the system’s capability in real tasks, not demos?

- How reliably does it transfer to new situations?

- What failure modes are known, and how often do they appear?

- What controls exist before deployment in high-stakes use?

- Who is accountable when the system causes harm?

This checklist is more useful than any single dramatic date.

If you use it consistently, the meaning of AGI becomes practical: broad capability plus robust reliability plus governance.

How to Read AGI Headlines Without Getting Misled

Most confusion around AGI comes from mixed claims in one sentence. A headline might combine a benchmark result, a product demo, and a future prediction as if they prove the same thing. They do not.

A simple method is to separate each claim type:

- Capability claim: what the system did in a defined test or task.

- Generality claim: whether that result transfers to new domains.

- Deployment claim: whether it is safe and reliable in real settings.

Example: if a model solves a hard coding benchmark, that is meaningful progress. It still does not prove broad scientific reasoning, safe autonomy, or policy readiness by itself.

This three-part check keeps your reading grounded. It also helps professionals communicate clearly with teams and stakeholders who may hear the same AGI news but interpret it very differently.
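The three-part check can also be written down as a small record, which makes missing evidence obvious. This is a minimal sketch: the class, its field names, and the example headline are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class HeadlineCheck:
    """Evidence found for each claim type; None means no evidence was given."""
    headline: str
    capability: Optional[str] = None  # what the system did in a defined test or task
    generality: Optional[str] = None  # whether the result transfers to new domains
    deployment: Optional[str] = None  # whether it is safe and reliable in real settings

    def unsupported(self):
        """List the claim types the headline does not back with evidence."""
        claims = {
            "capability": self.capability,
            "generality": self.generality,
            "deployment": self.deployment,
        }
        return [name for name, evidence in claims.items() if evidence is None]


# Hypothetical headline, mirroring the coding-benchmark example above.
check = HeadlineCheck(
    headline="Model tops hard coding benchmark",
    capability="reported score on a defined coding benchmark",
)
print(check.unsupported())
```

For this example only the capability claim has evidence, so the generality and deployment claims remain open.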

Final Thoughts

The clearest answer to “What is Artificial General Intelligence?” is this: AGI is a research goal for broad, adaptable machine intelligence, not a settled achievement.

Today’s AI is advancing quickly, but fast progress does not remove the need for careful definitions and evidence. Keep three filters in view when evaluating AGI claims: breadth of capability, reliability under new conditions, and strength of safeguards. That approach will help you stay accurate as the field evolves.

References

- OpenAI Charter

- DeepMind et al., Levels of AGI

- Alan Turing (1950), Computing Machinery and Intelligence

- John Searle (1980), Minds, Brains, and Programs

- Francois Chollet (2019), On the Measure of Intelligence

- ARC Prize Foundation (2025), ARC-AGI-2 Technical Report

- OpenAI (2023), GPT-4 Technical Report

- Silver et al. (2016), AlphaGo

- Jumper et al. (2021), AlphaFold

- Radford et al. (2022), Whisper

- NIST AI RMF 1.0

- NIST Generative AI Profile

- OECD AI Principles

- UNESCO Recommendation on the Ethics of AI

- EU AI Act (Regulation (EU) 2024/1689)