If you keep hearing that artificial general intelligence is two years away, ten years away, or still decades out, the contradiction is real. Forecasts diverge because experts are often talking about different thresholds, different evidence, and different bottlenecks. This guide sorts that out. By the end, you will know what serious public forecasts say as of March 23, 2026, why they differ, what OpenAI’s AGI definition means, and which signals matter more than a single dramatic year on a countdown.

The short answer is that there is no single AGI date that every credible source agrees on. The serious range still runs from the late 2020s to the mid-2040s, depending on what counts as AGI. That may sound unsatisfying, but it is far more useful than pretending the debate is settled.

Why AGI timelines vary so much

Different groups mean different things by AGI

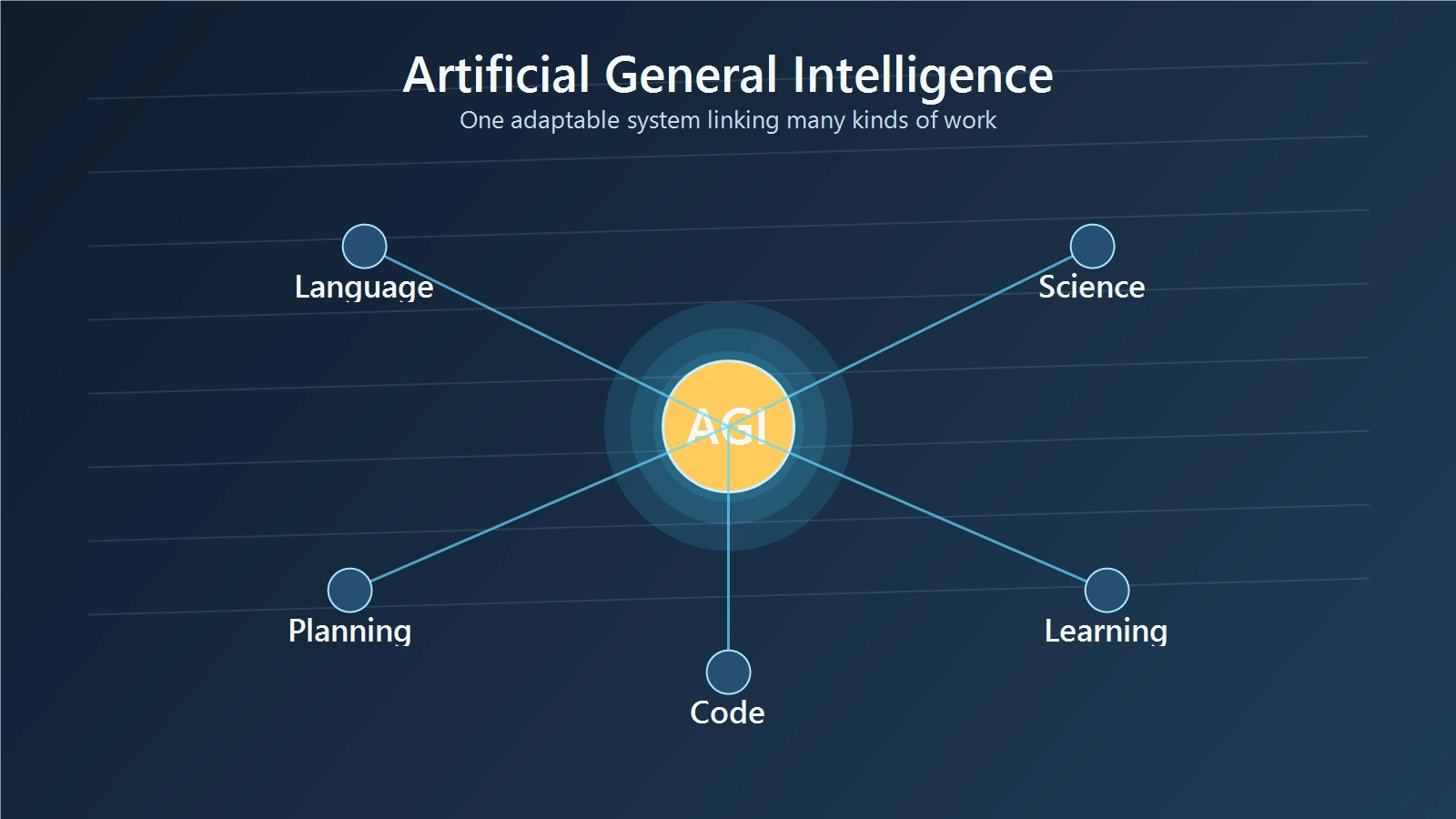

AGI stands for artificial general intelligence. In plain language, it usually means an AI system that can do a broad range of cognitive work at or above the level of skilled humans. The trouble is that there is no universal definition.

OpenAI’s charter gives one of the clearest public definitions: AGI means highly autonomous systems that outperform humans at most economically valuable work. Source That is not the same as saying “a machine that can do every possible human task,” “a conscious machine,” or “a robot that can replace all labor.” It is a practical economic threshold.

AI Impacts uses another term, high-level machine intelligence, or HLMI. Their 2023 survey defines it as the point where unaided machines can accomplish every task better and more cheaply than human workers. Source That is a tougher threshold than “excellent at many digital tasks.”

Google DeepMind’s paper on Levels of AGI is useful because it explains why these definitions collide. The authors argue that performance, generality, and autonomy should be treated as separate dimensions. Source A system can look strong on one axis without qualifying on the others.

The easiest comparison is this: a model that can generate strong code, summarize research, and answer legal-style questions from one chat window already feels broad. But that is still different from a system that can move from code review to contract analysis to lab planning with stable judgment, low supervision, and no task-specific scaffolding. If one forecaster means the first thing and another means the second, both can sound precise while talking about very different milestones.

AGI, human-level AI, and the Singularity are related but not identical

This is where a lot of readers get tripped up. AGI is a capability threshold. Human-level AI is often used as a rough synonym, though surveys define it differently. The Singularity is something else again. It usually refers to a feedback loop in which AI speeds up scientific and technological progress, which then speeds up AI itself.

That means the Singularity does not have to arrive on the same day as AGI. You can imagine systems that accelerate research before they meet a strict general-intelligence standard. You can also imagine AGI arriving without an immediate runaway jump in progress.

AI Impacts asked experts directly about this feedback-loop idea. On the question of whether AI-driven research and development could make technological progress more than an order of magnitude faster in less than five years, responses were spread across quite likely, likely, about even chance, and the corresponding skeptical bins. Source That is a much more grounded way to discuss the Singularity than treating it as either obvious destiny or obvious fantasy.

What the current forecasts actually say

As of March 2026, the most optimistic public forecasts still come from the leaders of frontier labs. Broader researcher surveys are later. Both matter.

The near-term camp from frontier labs

Sam Altman has been consistently aggressive in public. In Reflections, published at the start of 2025, he wrote that OpenAI was now confident it knew how to build AGI “as we have traditionally understood it” and that 2025 may be the year the first AI agents join the workforce and materially change company output. Source

Later in 2025, in The Gentle Singularity, Altman laid out an even more specific sequence: agents doing real cognitive work in 2025, systems producing novel insights in 2026, and robots doing real-world tasks in 2027. Source That is one of the clearest public statements of the aggressive late-2020s case.

Dario Amodei has been similarly forceful, though his wording is slightly different. In January 2025, he wrote that AI smarter than almost all humans at almost all things is most likely in 2026-2027. Source That points to something much closer to a broad digital intellect than to a narrow product feature.

Demis Hassabis has been later in public, but still well inside the next decade. Axios reported in May 2025 that Hassabis and Sergey Brin placed AGI around 2030, with Brin leaning just before and Hassabis just after. Source That is a slower clock than Amodei’s, but it is still a near-term forecast by historical standards.

Put together, these statements reveal a pattern. The people closest to the frontier labs often talk as if the decisive phase is already underway. They do not agree on the exact year, but they cluster around the late 2020s and early 2030s.

The broader-survey view is later

The strongest counterweight comes from the broader research community. AI Impacts’ 2023 Expert Survey on Progress in AI collected 2,778 researcher responses, and the aggregate forecast for HLMI gave a 50% chance by 2047. Source

That number matters for two reasons. First, it is much later than the most bullish CEO rhetoric. Second, it still moved forward by 13 years from the 2022 version of the survey, whose 50% HLMI estimate was 2060. So the survey result is not “AGI is far away.” It is “the median expert view shifted earlier, but not all the way into the late 2020s.”

This is one place where typical search results fall short. They often treat 2027 and 2047 as if one of them must be nonsense. A better reading is that the two forecasts are tied to different thresholds and different vantage points.

Plain-English takeaway from the spread

| Source or camp | Public stance | Rough window |
|---|---|---|
| Sam Altman | agents in 2025, novel insights in 2026, robots in 2027 | late 2020s |
| Dario Amodei | smarter than almost all humans at almost all things | 2026-2027 |
| Demis Hassabis | AGI around 2030 | around 2030 |
| AI Impacts survey median | 50% chance of HLMI | 2047 |

The useful interpretation is not that one side is serious and the other is not. It is that inside-view lab forecasts and broad probability medians are measuring different things. If you ask people building frontier systems whether broad digital intelligence may emerge this decade, you get much earlier dates. If you ask a large sample of researchers when machines will beat humans at every task more cheaply than workers, the midpoint lands much later.

Why some timelines have moved forward

The shorter forecasts did not appear out of nowhere. There are real reasons serious people have updated toward earlier AGI timelines.

Scaling, reasoning, and better interfaces all matter

One reason is that the systems themselves changed faster than many observers expected. In 2020, most public language models still felt like autocomplete with better grammar. In 2025 and 2026, frontier systems can reason across longer chains, call tools, work through software tasks, and stay useful across a much wider range of knowledge work.

Amodei’s January 2025 essay explains this from the inside. He argues that scaling laws, continuing algorithmic progress, and the newer reinforcement-learning-based reasoning paradigm all point in the same direction. Source The key point is not just that models are cheaper. It is that efficiency gains are usually poured back into stronger models, which keeps pushing the frontier forward.

Hassabis has made a similar point from a different angle. He has argued that the industry needs both maximum scaling of current techniques and new breakthroughs. Source That is a useful correction to the lazy debate between “just scale” and “only breakthroughs matter.” The likely path is both.

METR’s time-horizon data is one of the strongest capability signals

If you want something closer to a measurement than a quote, METR’s time-horizon research is one of the best public signals available. METR studies the autonomous task horizon of models, meaning roughly how long a task takes a human when the model can complete it at a given success rate. In plain language, it asks whether models are staying competent on longer and more demanding tasks.

In January 2026, METR reported that the frontier trend in human-equivalent time horizon had historically doubled about every seven months. Their updated TH1.1 analysis found even faster growth in the post-2024 subset, with the fitted doubling time dropping to about 89 days. Source That does not prove AGI is near. It does explain why timelines are compressing. If the amount of work a model can handle keeps stretching from short tasks to multi-hour tasks and beyond, then the economic relevance of these systems rises fast.

That is a better reason to update than a dazzling demo clip. A demo can mislead. A capability trend measured across multiple model generations is harder to wave away.
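To make the doubling-time figures above concrete, here is a minimal sketch of what the two rates imply if the trend were a clean exponential. The 60-minute starting horizon and the 8-hour target are hypothetical values chosen only for illustration, not METR measurements, and seven months is approximated as 213 days. Real trends are noisier and may not continue.

```python
import math

def horizon_after(start_minutes: float, doubling_days: float, elapsed_days: float) -> float:
    """Task horizon after elapsed_days, assuming clean exponential growth."""
    return start_minutes * 2 ** (elapsed_days / doubling_days)

def days_to_reach(start_minutes: float, target_minutes: float, doubling_days: float) -> float:
    """Days until the horizon grows from start_minutes to target_minutes."""
    return doubling_days * math.log2(target_minutes / start_minutes)

# Doubling times taken from the text; the day count for seven months is an approximation.
SEVEN_MONTH_DOUBLING_DAYS = 213.0
FAST_DOUBLING_DAYS = 89.0

# Hypothetical values for illustration only.
START_MINUTES = 60.0        # assumed one-hour task horizon today
TARGET_MINUTES = 8 * 60.0   # assumed target: a full eight-hour workday

for label, doubling in [("~7 months", SEVEN_MONTH_DOUBLING_DAYS),
                        ("~89 days", FAST_DOUBLING_DAYS)]:
    days = days_to_reach(START_MINUTES, TARGET_MINUTES, doubling)
    after_year = horizon_after(START_MINUTES, doubling, 365.0)
    print(f"doubling every {label}: {days:.0f} days to reach 8 hours, "
          f"{after_year / 60:.1f} hours after one year")
```

Going from one hour to eight hours takes three doublings under either assumption, so the difference between the two rates is roughly the difference between under a year and almost two years. That sensitivity to the assumed doubling time is why the same data can support noticeably different timelines.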

What could still delay AGI

Acceleration is real, but so are the bottlenecks.

Generality and reliability are still the hard parts

The biggest missing piece is not raw fluency. It is dependable transfer. A system can look broad in a chat interface and still fail when the environment gets messy, the goals shift, or the task chain grows long.

This is exactly why the DeepMind paper separates generality from performance and autonomy. Source A model can score highly on a benchmark while still needing heavy prompting, carefully prepared tools, and frequent human correction. That matters because most readers do not really mean “excellent at benchmark tasks” when they ask when AGI will arrive. They mean something closer to a reliable digital coworker that can adapt across domains.

The comparison here matters. Passing a difficult coding test is impressive. Running a week-long cross-functional project without falling apart is a different standard. Many AGI explainers collapse those ideas into one. They should not.

Physical-world work, energy, and chips may push some thresholds later

A second delay factor is physical infrastructure. Even the optimistic forecasters often describe digital capability first and robotics later. Altman’s own sequence does this: workforce agents first, novel-insight systems next, real-world robots after that. Source

Amodei is also explicit that extremely powerful AI will require millions of chips and tens of billions of dollars. Source That means supply chains, energy, data-center buildout, and geopolitics still matter. If your definition of AGI includes strong robotics or very broad physical-world competence, the path may be slower than the digital-only story.

This is one reason the question “When will AGI arrive?” never has a clean answer. One person is picturing a system that can do extraordinary research, coding, and analysis. Another is picturing full labor automation across physical and digital work. Those are not the same milestone.

Safety and governance can slow deployment even when capability rises

Capability is not the only clock. Deployment is another. Even if systems keep improving quickly, labs and governments may slow how much autonomy they allow in sensitive domains.

The AI Impacts survey is useful here too. When asked how much society should prioritize AI safety research relative to how much it is currently prioritized, a large majority of respondents chose more or much more. Source That does not tell you when AGI arrives, but it does tell you many researchers expect the governance problem to grow, not shrink.

If you want a related perspective, this site has already explored why AGI still cannot think like a human mind, as well as superintelligence and what comes next. Those pieces fit well with the timeline question because they focus on what capability alone does not settle.

A practical timeline for 2026-2035

This section is synthesis from the sources above, not a direct quote from any one source.

2026-2028: bounded agents become normal

This is the period where the strongest improvements are easiest to imagine because they are already underway. Expect more AI systems that can complete real cognitive work inside tightly scoped domains: code review, internal research, support triage, model evaluation, documentation, and workflow automation.

That will matter economically even if these systems do not satisfy a strict AGI definition. A company may feel as if it hired a new class of digital coworkers long before benchmark designers or philosophers agree that AGI has arrived.

2028-2031: the first serious AGI tests

If the late-2020s forecasts are right, this is the zone where the first convincing AGI arguments will emerge. Not universal agreement. Not necessarily full labor automation. But serious claims that one system can learn and transfer across a wide set of high-value digital tasks with much less scaffolding than today’s agents need.

This is also where the spread between Altman, Amodei, and Hassabis becomes informative instead of confusing. They are not all naming the same year, but they are pointing to the same broad window. If AGI-like systems appear this early, they will probably appear first in digital knowledge work rather than as general household robots.

2031-2035: either AGI arrives, or narrow systems become economically overwhelming

This is where the two camps partly converge. If the aggressive lab forecasts are wrong, AI may still become so capable across important domains that the economy changes dramatically before a consensus AGI label ever arrives. If the aggressive forecasts are right, then by the early 2030s the debate will shift from “Is AGI close?” to “How do institutions handle it?”

That is also the period where the Singularity discussion becomes more practical. You do not need a cinematic instant jump for it to matter. If AI starts making AI research, engineering, and science much faster year after year, then the future of computing changes quickly even without one ceremonial AGI day on the calendar.

My read, as of March 23, 2026, is that the late 2020s to early 2030s are now the serious high-variance zone for AGI-like digital systems. The mid-2040s survey median still matters, especially for stricter definitions. But the next decade is no longer a distant speculative window. It is the live window.

How to read AGI claims without getting fooled

Five questions to ask any AGI timeline claim

- What definition is being used? Ask whether the speaker means broad digital work, HLMI, full labor automation, or something stronger.
- Is this a capability claim or a deployment claim? A lab can show a capability before firms or regulators allow that capability to operate unattended in the real world.
- How much transfer is actually demonstrated? One system handling math, code, and writing from the same interface is promising. It is still not proof of robust transfer across open-ended domains.
- How much human scaffolding is still required? A system that looks autonomous in a demo may still rely on carefully built tools, retrieval layers, and human fallback paths.
- What evidence supports the claim? Serious forecasts connect to at least one of these: primary-source lab statements, expert surveys, capability trends, or real deployment evidence.

Those questions sound simple, but they cut through most weak AGI content immediately. They also pair well with a broader primer on AI vs human intelligence if you want the cognitive comparison behind the forecast debate.

Final Thoughts

The timeline to AGI is shorter than many people assumed a few years ago, but it is still not a settled date on a calendar. Frontier lab leaders are openly talking about the late 2020s. Broader expert-survey medians still land much later. The gap between those views is not noise. It reflects real disagreement about definitions, evidence, and bottlenecks.

If you want the practical takeaway, use this one: track the systems, not the slogans. Watch whether AI keeps stretching from narrow assistance into reliable cross-domain work, whether it needs less scaffolding over time, and whether deployment constraints keep pace with capability growth. That is where the answer to “When will AGI arrive?” will actually become visible.