If you are trying to understand what hardware AGI would actually need, skip the idea of a single miracle chip. The real constraints are compute throughput, high-bandwidth memory, chip-to-chip networking, and the power and cooling needed to keep large clusters stable. This guide explains where GPUs still lead, when TPUs and other AI accelerators make more sense, why GPU vs NPU is usually the wrong fight, and why quantum computing is still a side path rather than the core AGI stack. By the end, you should have a practical way to judge next-gen AI chip claims without getting trapped by marketing.

What AGI hardware requirements actually mean

The first thing to say plainly is that there is no agreed hardware checklist for AGI. There is not even universal agreement on what technical threshold would count as AGI. So the useful question is not, “What chip creates AGI?” It is, “What hardware constraints already shape the most capable AI systems, and how would those constraints tighten if systems become more capable?”

That shift matters because frontier AI progress has not come from one component alone. In OpenAI’s December 14, 2018 article on how AI training scales, the company argued that harder tasks and more powerful models can tolerate much larger batch sizes and much more parallel compute. In plain language, stronger systems tend to demand more hardware working together efficiently, not just a faster chip in isolation.
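
The paper behind that article builds its argument on a statistic it calls the gradient noise scale. In its simple form, B_simple = tr(Σ) / |G|², where G is the true gradient and Σ is the covariance of per-example gradients. Roughly, batches much larger than this number stop buying proportionally faster training. The sketch below is a naive plug-in estimate on made-up toy gradients, not the paper's estimator, and the function name is hypothetical.

```python
from statistics import fmean


def simple_noise_scale(per_example_grads):
    """Naive plug-in estimate of B_simple = tr(Sigma) / |G|^2.

    per_example_grads: list of equal-length gradient vectors (lists of floats).
    The true gradient G is approximated by the mean gradient, and tr(Sigma)
    by the sum of per-coordinate sample variances.
    """
    n = len(per_example_grads)
    if n < 2:
        raise ValueError("need at least two per-example gradients")
    dims = len(per_example_grads[0])
    mean_grad = [fmean(g[d] for g in per_example_grads) for d in range(dims)]
    # Trace of the covariance matrix = sum of the per-coordinate variances.
    trace_sigma = sum(
        sum((g[d] - mean_grad[d]) ** 2 for g in per_example_grads) / (n - 1)
        for d in range(dims)
    )
    grad_norm_sq = sum(m * m for m in mean_grad)
    return trace_sigma / grad_norm_sq


# Toy gradients (made up): examples that agree vs. examples that disagree.
quiet = [[1.0, 0.0], [1.1, 0.0], [0.9, 0.0]]
noisy = [[3.0, 0.0], [-1.0, 0.0], [1.0, 0.0]]
print(simple_noise_scale(quiet), simple_noise_scale(noisy))
```

When per-example gradients disagree more, the noise scale is larger, so much bigger batches, and therefore more parallel hardware, can still be used productively. The paper itself uses less biased estimators than this plug-in version.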

That leads to four constraints readers should keep in view.

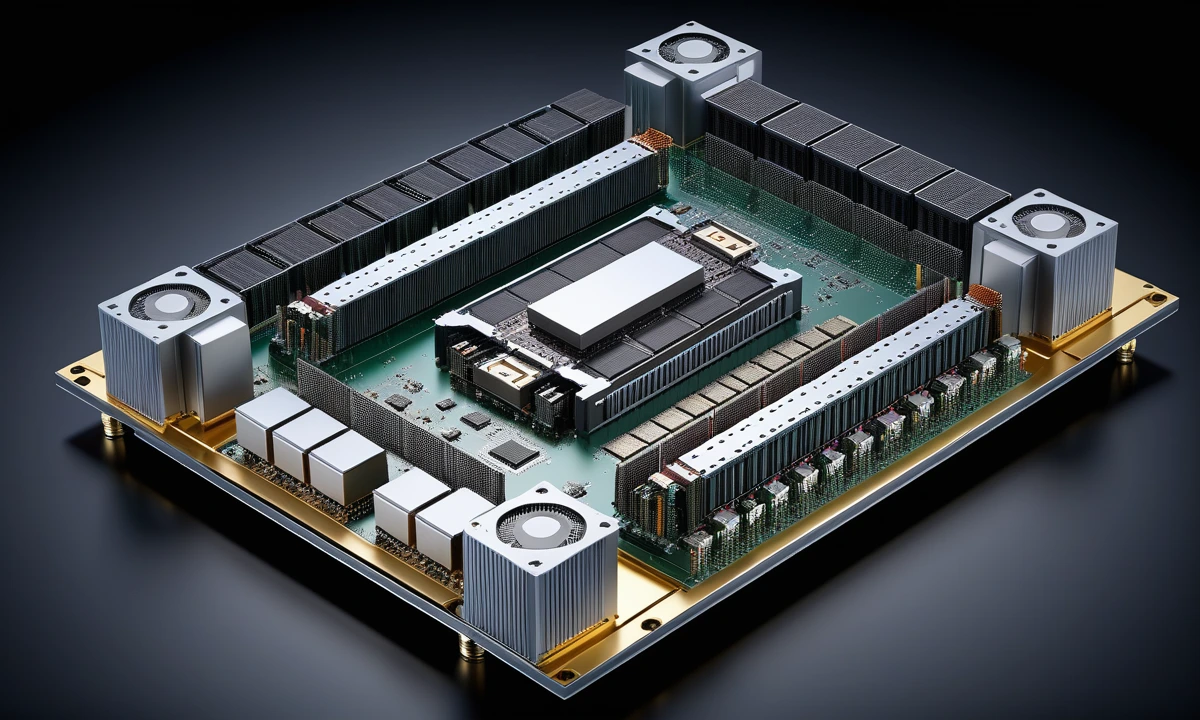

First is raw accelerator throughput: how much matrix math the system can perform. Second is memory capacity and memory bandwidth. High-bandwidth memory, or HBM, is stacked memory placed very close to the processor so the chip can be fed data fast enough. Third is interconnect, meaning the links that let chips share data quickly. Fourth is facility and system infrastructure: power delivery, cooling, data-center networking, and the software that keeps a large cluster busy instead of leaving expensive chips waiting on one another.
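
One rough way to see how the first three constraints interact is a step-time model: each training or serving step needs some math, some HBM traffic, and some chip-to-chip traffic, and whichever term dominates sets the pace. This is a minimal sketch under loudly simplified assumptions: compute and memory traffic overlap perfectly, communication does not overlap at all, power and facility limits are not modeled, and every number and name below is hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Accelerator:
    peak_flops: float      # sustained matrix math, FLOP/s
    mem_bandwidth: float   # HBM bandwidth, bytes/s
    link_bandwidth: float  # chip-to-chip bandwidth, bytes/s


def step_time(acc, flops, mem_bytes, comm_bytes):
    """Return (seconds, binding_constraint) for one training or serving step.

    Simplifying assumptions: compute and HBM traffic overlap perfectly,
    while chip-to-chip communication is not overlapped with either.
    """
    compute_s = flops / acc.peak_flops
    memory_s = mem_bytes / acc.mem_bandwidth
    comm_s = comm_bytes / acc.link_bandwidth
    local_s = max(compute_s, memory_s)
    if comm_s > local_s:
        binding = "interconnect"
    elif memory_s > compute_s:
        binding = "memory"
    else:
        binding = "compute"
    return local_s + comm_s, binding


# Hypothetical accelerator and workload figures, for illustration only.
chip = Accelerator(peak_flops=1e15, mem_bandwidth=8e12, link_bandwidth=1e12)
print(step_time(chip, flops=1e12, mem_bytes=16e9, comm_bytes=0.5e9))
```

In this example the step is memory-bound, so faster matrix units alone would not shorten it at all.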

A concrete comparison helps. A Copilot+ PC includes a 40+ TOPS NPU, or neural processing unit, for on-device AI features such as live captions and image effects, according to Microsoft's Copilot+ PC developer guidance. That is a real AI accelerator, but it is built for local, low-power inference. It is not trying to behave like a rack-scale training cluster. By contrast, NVIDIA's GB200 NVL72 connects 72 Blackwell GPUs and 36 Grace CPUs in one liquid-cooled rack, a design that reflects how frontier models are now limited by cluster design as much as by single-chip speed.

So when people talk about AGI hardware requirements, the serious version of the conversation is about systems engineering. The important questions are how much memory sits close to the compute, how fast chips talk to each other, how much energy the rack needs, and whether the software stack can use the hardware well enough to justify the cost.

Why GPUs still dominate frontier AI

GPUs still dominate frontier AI because they sit at the best intersection of flexibility, scale, and software maturity. A GPU, or graphics processing unit, started life as a graphics chip, but its highly parallel design turned out to be excellent for the matrix-heavy workloads used in deep learning. Over time, vendors built programming stacks, libraries, compilers, and networking ecosystems around that strength.

That software layer is easy to underrate. In practice, a chip with strong theoretical performance but weak framework support is hard to deploy at scale. Engineers need kernels, compilers, scheduling tools, debugging tools, and model libraries. Buyers need something their teams can use now, not just something that looks impressive on a benchmark slide.

NVIDIA’s current rack-scale systems show what the frontier market values. The GB200 NVL72 page describes a liquid-cooled rack with 72 Blackwell GPUs, 13.4 TB of HBM3E, 576 TB/s of memory bandwidth, and a 72-GPU NVLink domain. NVIDIA is not selling a story about one heroic chip. It is selling tightly linked accelerators, memory, and fabric that can behave more like one giant machine.
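
Dividing the rack totals by the GPU count makes them comparable with single-accelerator spec sheets. This sketch uses only the totals quoted above, assumes they split evenly across the 72 GPUs, and uses decimal units (1 TB = 1,000 GB); the helper name is illustrative.

```python
RACK_GPUS = 72
RACK_HBM_TB = 13.4        # HBM3E across the rack, as quoted above
RACK_HBM_BW_TBPS = 576.0  # aggregate memory bandwidth, TB/s, as quoted above


def per_gpu(total, gpus=RACK_GPUS):
    """Split a rack-level total evenly across its GPUs."""
    return total / gpus


hbm_per_gpu_gb = per_gpu(RACK_HBM_TB) * 1000  # decimal units
bw_per_gpu_tbps = per_gpu(RACK_HBM_BW_TBPS)

print(f"HBM per GPU: ~{hbm_per_gpu_gb:.0f} GB")
print(f"Memory bandwidth per GPU: {bw_per_gpu_tbps:.1f} TB/s")
```

That works out to roughly 186 GB and 8 TB/s per GPU, a useful baseline for the per-accelerator figures AMD quotes for its MI350 Series.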

AMD is competing on the same terrain. The AMD Instinct MI350 Series page highlights 288 GB of HBM3E and 8 TB/s of memory bandwidth per accelerator. That is a useful reminder that for large-model training and inference, memory capacity is often as important as raw math throughput. If the model weights, activations, or serving cache do not fit cleanly, the system pays a penalty elsewhere: the job must be split across more accelerators, which adds communication, or data must be moved through slower memory tiers.
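
A back-of-envelope check for "fitting cleanly" adds model weights to the KV cache (the per-token keys and values a transformer keeps while serving) and compares the total against per-accelerator HBM. This sketch assumes a hypothetical model and workload, uses the common KV-cache formula of 2 x layers x KV heads x head dimension x bytes per value per token, reuses the 288 GB figure above, and ignores activations, fragmentation, and runtime overhead beyond a hypothetical headroom factor.

```python
import math


def weights_bytes(params, bytes_per_param):
    """Memory for model weights at a given numeric precision."""
    return params * bytes_per_param


def kv_cache_bytes(layers, kv_heads, head_dim, tokens, bytes_per_value):
    """KV-cache size for `tokens` cached tokens across all sequences.

    The factor of 2 covers one key and one value vector per layer,
    KV head, and token.
    """
    return 2 * layers * kv_heads * head_dim * tokens * bytes_per_value


def accelerators_needed(total_bytes, hbm_bytes_per_accel, usable_fraction=0.9):
    """Minimum accelerator count if memory capacity were the only constraint.

    usable_fraction is a hypothetical headroom factor for runtime overhead.
    """
    return math.ceil(total_bytes / (hbm_bytes_per_accel * usable_fraction))


# Hypothetical model: 400B parameters at 1 byte each (FP8 weights),
# 100 layers, 8 KV heads of dimension 128, KV cache kept at 2 bytes per value.
weights = weights_bytes(400e9, 1)
for sequences in (8, 64):
    cache = kv_cache_bytes(100, 8, 128, sequences * 32_768, 2)
    n = accelerators_needed(weights + cache, 288e9)
    print(f"{sequences} sequences x 32K tokens: {n} accelerators")
```

In this sketch, serving more concurrent long-context requests, not a bigger model, is what pushes the job from two accelerators to five, and every extra split adds interconnect traffic.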

This is the practical lesson for readers comparing next-gen AI chips: peak FLOPS or TOPS alone are not enough. If one accelerator has impressive headline compute but weaker memory or a weaker cluster fabric, the real result may be worse on large workloads. A buyer choosing hardware for model serving should care about how many users or tokens the system can sustain under real memory pressure. An engineer training large models should care about how cleanly the system scales across many chips. An investor should care about whether the vendor owns enough of the surrounding stack to capture value beyond the silicon itself.
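
The standard way to formalize "peak compute is not enough" is the roofline model: attainable throughput is the smaller of peak compute and memory bandwidth times arithmetic intensity (FLOPs performed per byte moved). The two accelerators below are hypothetical, with invented numbers: chip A has the bigger headline, chip B has more bandwidth.

```python
def attainable_flops(peak_flops, mem_bandwidth, intensity):
    """Roofline bound: FLOP/s achievable at a given arithmetic intensity.

    intensity is FLOPs performed per byte moved from memory.
    """
    return min(peak_flops, mem_bandwidth * intensity)


# Hypothetical accelerators, not real products.
chip_a = {"peak": 2.0e15, "bw": 4.0e12}  # bigger headline compute
chip_b = {"peak": 1.5e15, "bw": 8.0e12}  # more memory bandwidth

for intensity in (50, 200, 1000):
    a = attainable_flops(chip_a["peak"], chip_a["bw"], intensity)
    b = attainable_flops(chip_b["peak"], chip_b["bw"], intensity)
    print(f"{intensity:>5} FLOP/byte: A={a / 1e15:.2f} PFLOP/s, B={b / 1e15:.2f} PFLOP/s")
```

Chip A only wins when the workload does a lot of math per byte. At low intensity, typical of small-batch inference, the higher-bandwidth chip B delivers more real throughput despite its lower headline number.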

When TPUs and custom AI ASICs make more sense

GPUs lead the broad market, but they are not the only serious answer. TPUs and other AI ASICs matter because some operators do not need a flexible accelerator for every possible workload. They need something tuned for a narrower set of model patterns, deployed in a controlled software environment, at hyperscale.

An ASIC is an application-specific integrated circuit. A TPU, or tensor processing unit, is Google’s custom AI ASIC family. The case for custom silicon is simple: if you know enough about the workloads, networking model, compiler stack, and data-center design, you can optimize the whole system rather than buying a general-purpose accelerator and adapting around it.

Google’s own numbers make that logic clear. In its May 15, 2024 Trillium announcement, Google said its sixth-generation TPU delivered 4.7x more peak compute per chip than TPU v5e, doubled HBM capacity and bandwidth, doubled interchip interconnect bandwidth, and could scale to hundreds of pods connecting tens of thousands of chips. That is not just a chip story. It is a vertically integrated system story.
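
Google's multipliers also hint at something the headline hides. Under simple roofline reasoning (this is an inference, not a Google statement), the arithmetic intensity a workload needs to stay compute-bound, the "ridge point", is peak compute divided by memory bandwidth. The sketch uses only the announced ratios relative to TPU v5e, not absolute specs.

```python
def ridge_point(peak_flops, mem_bandwidth):
    """Arithmetic intensity (FLOP/byte) where a chip stops being memory-bound."""
    return peak_flops / mem_bandwidth


# Relative to TPU v5e = 1.0, using only the multipliers Google announced:
# 4.7x peak compute per chip and 2x HBM bandwidth.
v5e_ridge = ridge_point(1.0, 1.0)
trillium_ridge = ridge_point(4.7, 2.0)
print(f"Ridge point shift: {trillium_ridge / v5e_ridge:.2f}x")
```

Under this model, workloads with low arithmetic intensity would see gains closer to the 2x memory improvement than the 4.7x compute improvement.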

Google continued that direction with Ironwood. In the Ironwood launch materials, Google framed its seventh-generation TPU as purpose-built for large-scale training, reinforcement learning, and high-volume inference, while also emphasizing efficiency and inference economics. That tells you where custom AI silicon becomes most compelling: controlled environments, repeatable workloads, and operators large enough to shape both hardware and software.

The comparison with GPUs is straightforward. GPUs are the most practical choice when you need broad model support, strong third-party tooling, and the option to shift between workloads. TPUs and other custom accelerators make more sense when the owner also controls the compiler, runtime, network, and deployment model. For many enterprise buyers, that means TPU is less a “chip purchase” and more a “cloud platform choice.”

GPU vs NPU is mostly a workload question

The phrase “GPU vs NPU” sounds like a clean head-to-head contest, but in most real deployments the two chips solve different problems.

An NPU is built for efficient AI inference under tight power and thermal limits. Microsoft’s current documentation says many Copilot+ PC features require a 40+ TOPS NPU because those systems need local AI acceleration for tasks like translation, image generation, and audio-video enhancements without draining battery life or leaning on the cloud for every step.

A data-center GPU has a different job. It must handle much larger models, much larger memory footprints, much heavier parallel workloads, and often both training and inference. That is why comparing a laptop NPU to a rack-scale GPU cluster is like comparing a delivery scooter to a freight train. Both move things. They do not move the same volume, on the same route, under the same constraints.

For AGI hardware discussions, this matters because people often overread the progress of consumer AI PCs. Strong NPUs will matter for local assistants, private inference, low-latency edge workloads, robotics, and always-on multimodal features. They are important. They are just not the same category as the hardware currently used to train and serve the largest frontier systems.

So the better question is not “Will NPUs replace GPUs?” It is “Which part of the AI stack are you optimizing?” If the goal is on-device inference at low power, the NPU is attractive. If the goal is frontier model training or cluster-scale inference, the GPU or another data-center accelerator remains the relevant comparison.

The hidden bottlenecks: HBM, packaging, networking, and power

This is the section most search results underserve. Once you understand current AI systems, it becomes obvious that the main bottleneck is not just transistor speed. It is feeding the compute and scaling the system around it.

HBM is the clearest example. Large AI models need enormous memory bandwidth because the accelerator has to pull weights, activations, and caches constantly. If the memory system cannot keep up, the math units wait. That is why both NVIDIA and AMD emphasize HBM so heavily in their product pages, and why Google highlighted doubled HBM capacity and bandwidth for Trillium.
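
A simple bound shows why bandwidth caps token generation. When a model decodes one sequence at batch size 1, each new token requires streaming roughly all of the weights from HBM, so tokens per second cannot exceed bandwidth divided by bytes read per token. This sketch assumes a hypothetical 70B-parameter model stored at 1 byte per parameter on one accelerator with 8 TB/s, ignores compute time, and only counts KV-cache reads if you pass them in.

```python
def max_decode_tokens_per_s(mem_bandwidth_bytes_s, weight_bytes, kv_bytes_per_token=0):
    """Upper bound on tokens/s for one sequence decoded at batch size 1.

    Assumes every generated token streams all weights (plus any KV-cache
    bytes read for that token) from HBM once, and nothing else is a bottleneck.
    """
    bytes_per_token = weight_bytes + kv_bytes_per_token
    return mem_bandwidth_bytes_s / bytes_per_token


# Hypothetical 70B-parameter model at 1 byte per parameter, 8 TB/s of HBM.
bound = max_decode_tokens_per_s(8e12, 70e9)
print(f"~{bound:.0f} tokens/s upper bound")
```

Batching raises total throughput because one pass over the weights serves every sequence in the batch, which is one reason serving systems batch requests aggressively.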

Advanced packaging is the next layer. In its 2025 Technology Symposium materials, TSMC said AI has an insatiable need for more logic and HBM and outlined plans to bring 9.5 reticle-size CoWoS into volume production in 2027, enabling packages with 12 HBM stacks or more. That is a strong signal that packaging is now a strategic bottleneck. If you cannot integrate enough memory close to the compute, you cannot keep scaling cleanly.

Networking is just as important. NVIDIA’s current pitch leans hard on fifth-generation NVLink and large NVLink domains. Google talks about interchip interconnect bandwidth and multi-pod scaling. The reason is simple: once models are spread across many accelerators, slow communication becomes dead time. A chip can look brilliant in isolation and disappoint in a cluster if the network is the real choke point.
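
The cost of slow links can be estimated with the textbook bandwidth model of a ring all-reduce, a collective often used to sum gradients across accelerators: each participant sends and receives about 2(N - 1)/N times the message size. This is a sketch under stated assumptions: it covers only the bandwidth term, ignores latency and overlap with compute, and the ring algorithm is an illustration, not a claim about how NVLink or Google's interchip interconnect schedule collectives. The message size and link speeds are hypothetical.

```python
def ring_allreduce_seconds(message_bytes, n_accels, link_bytes_per_s):
    """Bandwidth-only time estimate for a ring all-reduce.

    Each participant sends (and receives) 2 * (N - 1) / N * S bytes
    across a reduce-scatter phase and an all-gather phase.
    Latency and overlap with compute are ignored.
    """
    if n_accels < 2:
        return 0.0
    bytes_sent = 2 * (n_accels - 1) / n_accels * message_bytes
    return bytes_sent / link_bytes_per_s


# Hypothetical: 10 GB of gradients across 72 accelerators, two link speeds.
for link in (100e9, 900e9):
    t = ring_allreduce_seconds(10e9, 72, link)
    print(f"{link / 1e9:.0f} GB/s per link: {t * 1000:.1f} ms per all-reduce")
```

Because 2(N - 1)/N approaches 2 as N grows, per-link bandwidth rather than chip count sets the floor on this cost, which helps explain why vendors advertise link bandwidth so prominently.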

Power and cooling finish the picture. Frontier AI infrastructure is moving toward liquid-cooled, rack-scale systems because the thermal density keeps rising. NVIDIA’s GB200 and GB300 platforms make that visible, and Google now talks openly about water cooling and data-center infrastructure as part of its TPU story. For buyers, this means the right question is no longer just “What accelerator should I buy?” It is “Can my facility support the rack design, networking, and thermal profile this accelerator expects?”

A useful comparison is to think of older AI infrastructure as a server-buying problem and current frontier AI infrastructure as a mini power-plant problem. That is not hype. It is a clue about where the industry is actually spending money.
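
The facility question can be framed as a power budget. Power usage effectiveness (PUE) is total facility power divided by IT power, so cooling and distribution overhead shrink the power left for racks. This sketch uses a hypothetical 20 MW site and a hypothetical 120 kW rack, which is an illustrative figure rather than a quoted spec, and ignores per-row power distribution, cooling loop capacity, and floor loading.

```python
import math


def racks_supported(facility_mw, rack_kw, pue):
    """Number of racks a facility's power budget can feed.

    PUE (power usage effectiveness) = total facility power / IT power,
    so the power left for IT equipment is facility power divided by PUE.
    Ignores per-row power distribution, cooling loop capacity, and floor loading.
    """
    it_kw = facility_mw * 1000 / pue
    return math.floor(it_kw / rack_kw)


# Hypothetical 20 MW site hosting dense 120 kW racks.
for pue in (1.2, 1.5):
    print(f"PUE {pue}: {racks_supported(20, 120, pue)} racks")
```

In this example, worse cooling efficiency alone costs the site more than a fifth of its rack capacity.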

Where quantum computing fits, and where it does not

Quantum computing belongs in this conversation, but only in the right place.

The strongest near-term case for quantum is not “replace GPUs and train AGI differently.” The stronger case is that some future AI and scientific workloads may benefit from hybrid workflows where quantum processors work alongside classical high-performance computing systems. That is how major vendors describe the path today.

IBM’s quantum roadmap, updated in April 2025, repeatedly frames the near-term model as quantum plus HPC. It discusses mapping and profiling tools for quantum-plus-HPC workflows and places fault-tolerant systems later on the roadmap. That is not the language of a near-term drop-in replacement for current AI accelerators. It is the language of staged hybrid adoption.

Recent research points in the same direction. A 2026 paper in npj Quantum Information states that the prospect of direct quantum advantage in machine learning has remained questionable. That does not make quantum unimportant. It does mean readers should separate long-range research potential from near-term AGI infrastructure planning.

The plain comparison is this: in 2026, if you want to train or serve large transformer-style systems, you design around GPUs, TPUs, memory, interconnect, and facilities. If you want to explore future hybrid workflows for chemistry, optimization, or specialized subproblems, quantum becomes interesting. Those are not the same procurement decision.

So when someone says quantum computing will power AGI soon, the right follow-up is: which workload, which error-correction assumptions, and what classical infrastructure still has to sit beside it? Most of the time, the answer reveals that quantum is a research track attached to AI infrastructure, not the foundation that replaces it.

What hardware engineers, tech buyers, and investors should watch next

For hardware engineers, the checklist is brutally practical. Watch memory per accelerator, memory bandwidth, interconnect bandwidth, compiler maturity, and rack-level efficiency. If a platform looks impressive only in sparse or low-precision peak numbers, dig deeper.
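
"Dig deeper" can be made concrete by normalizing quoted peaks to a common footing. The sketch assumes two rules of thumb that are common on spec sheets but not universal: 2:4 structured sparsity is quoted at 2x the dense figure, and each halving of numeric precision doubles quoted peak. The function name and the example headline are hypothetical; always check the vendor's footnotes.

```python
# Hypothetical rule-of-thumb assumptions; verify against each vendor's footnotes.
SPARSITY_SPEEDUP = 2.0  # 2:4 structured sparsity often quoted at 2x dense
BITS_BY_FORMAT = {"fp16": 16, "bf16": 16, "fp8": 8, "fp4": 4}


def dense_equivalent(peak, fmt, sparse, baseline_fmt="fp16"):
    """Rescale a quoted peak to an estimated dense figure at baseline_fmt.

    Assumes throughput scales inversely with bit width, which is a
    simplification; real chips do not always follow it.
    """
    value = peak / SPARSITY_SPEEDUP if sparse else peak
    scale = BITS_BY_FORMAT[fmt] / BITS_BY_FORMAT[baseline_fmt]
    return value * scale


# Hypothetical headline: "20 PFLOPS" quoted at FP4 with sparsity.
print(dense_equivalent(20.0, "fp4", sparse=True))  # -> 2.5
```

The exact number matters less than the habit: two chips' headlines become comparable only after both are put on the same precision and sparsity basis.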

For tech buyers, start with workload fit. Are you training large models, serving them, running retrieval-heavy inference, or doing local edge inference? Then look at software support, availability, facility constraints, and total cost of ownership. A chip with a great benchmark can still be the wrong purchase if your team cannot deploy it easily or your site cannot support its cooling needs.
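
A rough total-cost sketch turns those factors into a cost per million tokens. All inputs are hypothetical, and the model leaves out networking, staff, software licenses, and facility construction; it also bills power at full load for every hour, which overstates idle power.

```python
HOURS_PER_YEAR = 24 * 365


def cost_per_million_tokens(capex, years, power_kw, price_per_kwh,
                            tokens_per_s, utilization):
    """Rough serving cost per million tokens, in the currency of the inputs.

    Hardware cost is amortized linearly over `years`. Power is billed for
    every hour at full load (a simplification), but only the `utilization`
    fraction of hours produces tokens.
    """
    hourly_capex = capex / (years * HOURS_PER_YEAR)
    hourly_power = power_kw * price_per_kwh
    tokens_per_hour = tokens_per_s * 3600 * utilization
    return (hourly_capex + hourly_power) / tokens_per_hour * 1e6


# Hypothetical server: 300,000 purchase price, 4-year life, 10 kW,
# 0.10 per kWh, 5,000 tokens/s when busy.
for util in (0.6, 0.3):
    cost = cost_per_million_tokens(300_000, 4, 10, 0.10, 5_000, util)
    print(f"utilization {util:.0%}: {cost:.2f} per million tokens")
```

In this model, halving utilization doubles the cost per token, which is why deployability and scheduling can matter as much as benchmark speed.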

For investors, one of the clearest lessons is that AI value is spreading beyond the accelerator die itself. HBM, advanced packaging, optical and electrical networking, liquid cooling, and software ecosystems are all part of the moat now. The winning stack may not belong to the company with the loudest headline number. It may belong to the company or supply chain that removes the most real bottlenecks.

A short rule of thumb helps:

- If the claim is about AGI, ask how the system scales.

- If the claim is about next-gen AI chips, ask what memory and networking sit beside the compute.

- If the claim is about efficiency, ask whether it holds at rack scale rather than on a single accelerator.

- If the claim is about quantum, ask what part of the workflow still runs on classical infrastructure.

That framework will not predict AGI timelines, but it will help you read the hardware landscape more clearly than a list of brand names ever will.
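
The efficiency question in particular can be checked with two ratios: scaling efficiency (measured cluster throughput divided by N times single-chip throughput) and throughput per watt counted across the whole rack rather than the accelerators alone. The figures and function names below are hypothetical.

```python
def scaling_efficiency(cluster_throughput, n_accels, single_throughput):
    """Fraction of ideal linear scaling achieved (1.0 means perfect)."""
    return cluster_throughput / (n_accels * single_throughput)


def rack_throughput_per_watt(throughput, accel_watts, n_accels, overhead_watts):
    """Throughput per watt counting the whole rack, not just accelerators.

    overhead_watts stands in for CPUs, switches, pumps, and other
    non-accelerator power drawn by the rack.
    """
    return throughput / (accel_watts * n_accels + overhead_watts)


# Hypothetical figures: one chip does 1,000 tokens/s at 1,000 W on its own
# (1.0 token/s per watt); a 72-chip rack is measured at 54,000 tokens/s.
print(scaling_efficiency(54_000, 72, 1_000))
print(rack_throughput_per_watt(54_000, 1_000, 72, 30_000))
```

Here a chip that looks like 1.0 token/s per watt on its own delivers about 0.53 at rack scale, the kind of gap a single-accelerator efficiency claim hides.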

Final Thoughts

The most useful way to think about AGI hardware requirements is to stop looking for a single winner chip. The next leap in intelligence, if it comes, will depend on whether compute, memory, networking, packaging, and power can scale together.

That is why the chip conversation is getting bigger rather than narrower. GPUs still lead because they combine flexibility with mature software and strong cluster design. TPUs and other custom accelerators matter because some operators can co-design the whole stack. NPUs matter because more inference is moving to the edge. Quantum matters as a research frontier, but not yet as the core engine of modern AI infrastructure.

The hardware story behind AI is becoming more physical, not less. That is the part many summaries miss, and it is the part engineers, buyers, and investors should keep in view.