Parents and tech ethicists do not need another futuristic argument about robot nannies. They need a practical way to judge what is safe, useful, and off-limits right now. This guide explains where humanoid robots in education can genuinely help, where an AI nanny becomes a developmental or privacy risk, and what standards to check before letting a child use one regularly. The short version is this: a robot can assist learning in narrow, supervised ways, but that is very different from letting a machine take over the work of raising a child.

The first distinction parents need: helper or caregiver?

The most important distinction in this whole debate is not whether the robot looks human. It is whether the robot is acting as a helper or as a caregiver.

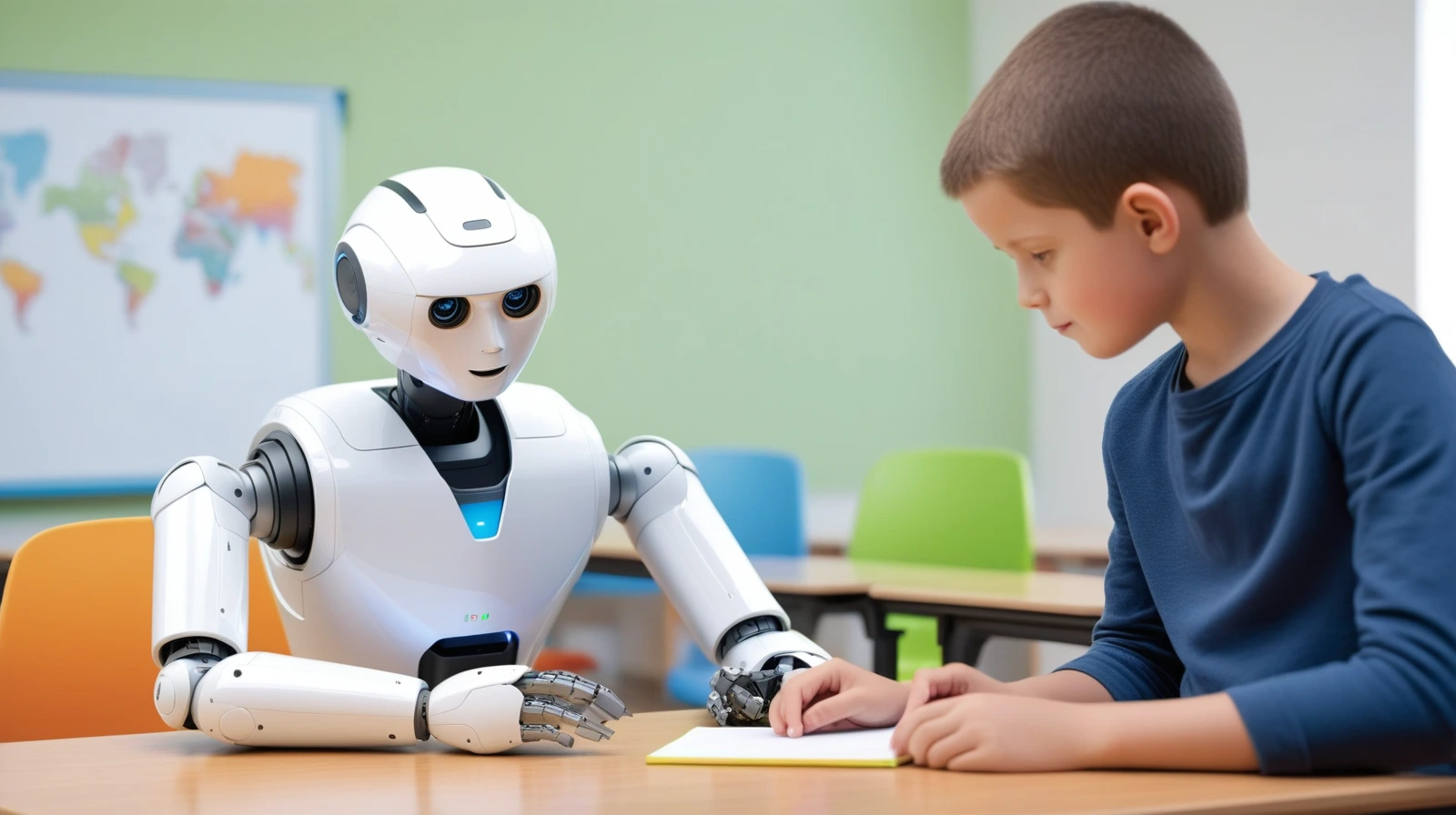

A helper supports a narrow task. Think of a humanoid robot leading a short pronunciation exercise, prompting turn-taking during a classroom activity, or helping a teacher keep one child engaged during a structured lesson. In those cases, the adult stays responsible for the child, the goal is specific, and the interaction is bounded.

A caregiver substitute is different. That is a system expected to comfort a child when they are upset, decide when a warning should turn into discipline, interpret a sibling conflict, or carry enough emotional weight that the child begins to rely on it as a primary attachment figure. At that point, the question is no longer about educational tech. It is about development, trust, and responsibility.

The strongest public sources draw the same line. The OECD’s chapter on social robots as educators treats tutor or teaching assistant as the most pragmatic role for these robots. UNICEF Serbia’s October 24, 2024 note on humanoid robots in learning takes the same approach. It frames them as assistive technology that enhances teacher-led inclusive education rather than replacing adults.

This is why the phrase “AI nanny” causes trouble. It bundles monitoring, tutoring, companionship, supervision, and emotional care under one product label, even though those are different functions. A robot that helps a child rehearse vocabulary for 15 minutes is one thing. A robot that handles bedtime reassurance, social conflict, or daily emotional regulation is something else.

If you want one plain-English rule, use this: the closer the robot gets to acting like a stand-in adult, the higher the safety bar should rise.

Four safety tests that matter more than the demo

Most robot demos are built to answer one question: can the machine perform the task? Parents need different questions. They need to know whether the use is safe for the child’s development, privacy, physical environment, and everyday decision-making.

Developmental safety: can it support, not replace, human interaction?

The Harvard Center on the Developing Child’s serve-and-return guidance is useful here because it reduces a complicated topic to one core pattern. A child sends a cue. A caregiver notices it, responds, and keeps the exchange going. That repeated back-and-forth helps build language, trust, self-regulation, and social understanding.

Now compare two scenarios. In the first, a teacher uses a humanoid robot for a 10-minute reading activity, then discusses the lesson with the child afterward. In the second, a parent relies on the robot to handle routine soothing, storytelling, reminders, and emotional reassurance every evening. The first use may support an adult-led learning process. The second starts to displace it.

That is the central developmental concern. A robot can simulate responsiveness, but it does not understand family context, emotional ambiguity, or the moral weight of a difficult moment. It can generate the right-sounding sentence. That is not the same thing as raising a child through a real relationship.

The evidence base is also incomplete, which matters. The 2023 review Ethical considerations in child-robot interactions notes that social robots are moving into schools, homes, and clinics, but that little is known about their long-term socio-emotional effects. That does not prove harm. It does mean parents should be wary of any product that asks them to outsource ordinary caregiving.

Privacy safety: what data does the robot collect, store, and share?

A child-facing robot is often a sensor system before it is anything else. It may use cameras, microphones, facial detection, speech recognition, usage logs, personalization, app connectivity, and cloud processing. That is one reason parents should treat childcare robots differently from ordinary toys.

UNICEF’s 2025 guidance on AI and children puts safety, privacy, explainability, fairness, and child well-being at the center of child-rights-respecting AI. That is the right frame. If a robot is operating around children, the practical questions are immediate:

- Does it record audio or video?

- Is data processed locally or sent to the cloud?

- Can parents inspect, export, or delete what is stored?

- Does the system profile the child over time?

- Does it rely on third-party analytics or embedded software development kits (SDKs) that parents cannot see?

The risk is not theoretical. In September 2025, the FTC took action against robot toy maker Apitor over allegations that its app allowed a third party to collect children’s geolocation data without parental consent. That case is a strong reminder that a robot for kids is also a data pipeline, and weak governance can turn a friendly device into a privacy problem fast.

Physical and operational safety: what happens when the system fails?

Parents naturally worry about whether a robot could bump into a child or behave unpredictably around stairs, pets, or furniture. That matters, but it is only part of the operational picture.

The deeper issue is failure handling. What happens if speech recognition misfires? What happens if the network drops? What happens if the robot interprets horseplay as aggression, or misses the difference between a joke and distress? Is there a clear human override? Does the robot stop, escalate to an adult, or keep acting with false confidence?

A supervised classroom tool has a human in the loop who can step in immediately. A pseudo-caregiver in the home creates a harder problem because the robot is being trusted in precisely the situations where judgment matters most.

Accountability safety: who is responsible when the robot is wrong?

Every child-facing system has an accountability problem hidden inside it. If a teacher uses a robot as a classroom aid, responsibility still sits clearly with the school and the adult running the lesson. If a parent treats a robot like a semi-autonomous nanny, responsibility becomes blurred.

Who is accountable if the robot gives harmful advice, mishandles a conflict, normalizes manipulative behavior, or quietly encourages emotional dependence? The device itself cannot carry moral responsibility. That is why transparency and explainability matter so much in the UNICEF framework. A system that cannot clearly explain its role should not be allowed to shape a child’s routine in intimate ways.

Where humanoid robots in education can genuinely help

The case against robot nannies is not the same thing as a case against all educational robots. Some uses look defensible, and in a few settings genuinely helpful, when the robot is narrow, supervised, and clearly subordinate to adult goals.

One promising area is structured tutoring. The OECD notes that the most realistic role for social robots in education is as tutor: a system that gives a child extra practice, supports a small-group activity, or offers patient repetition without replacing the teacher. Language learning is one of the clearer examples because pronunciation drills, vocabulary rehearsal, and turn-based prompts can be structured well.

The contrast is telling: a robot is far more plausible as a pronunciation coach than as a bedtime confidant.

Another credible use is inclusive education. UNICEF Serbia’s humanoid-robot initiative explicitly frames these systems as assistive technology for children who need additional educational support inside teacher-led classrooms. That is a much stronger use case than general childcare because the robot is part of a designed educational environment rather than a replacement adult at home.

There is also broader evidence that robot-based education can help in some contexts. A 2025 meta-analysis in Humanities and Social Sciences Communications found that robot-based education can improve some learning outcomes compared with non-robot approaches. But the authors also note strong heterogeneity across studies, effects tied to teacher training and infrastructure differences, and the need for more evidence on long-term impact. That caveat matters: the robot is not a magic ingredient, and results depend on how it is used.

Repetition-heavy tasks are another reasonable fit. A humanoid robot may be useful for drill-based practice, predictable turn-taking, or short motivation boosts where patience and consistency matter more than deep judgment. In plain language, robots do better when the task is structured and the stakes are low.

This supplement-not-replacement framing lines up with a point already made on MindoxAI in Augmented Intelligence: AI as a Co-Processor. That piece argues that AI tools are usually strongest when they extend human work rather than quietly substitute for the hard part of it.

Where AI nanny claims break down fast

The strongest claims for an AI nanny usually fail at the point where caregiving becomes personal, messy, and morally loaded.

Take emotional care. A child who is ashamed after being scolded, frightened after a nightmare, or confused by conflict with a friend is not only looking for words. The child is reading tone, trust, history, and whether the adult truly understands what happened. A robot can produce a script. It cannot carry the full human meaning of the moment.

Take conflict resolution. If two children are fighting over a toy, the right response may depend on fairness, fatigue, past behavior, family norms, and whether one child is quietly distressed rather than openly upset. Those judgments are hard to hand over reliably in real family life, even if a rule-based system can imitate them in narrow cases.

This is why the phrase “let a robot raise your child” should trigger resistance. Raising a child is not only about reminders, schedules, or repeating facts. It is about modeling care, interpreting ambiguity, setting boundaries, and building trust over years.

The evidence does not justify panic, but it does justify caution. The review Do Robotic Tutors Compromise the Social-Emotional Development of Children? concludes that responsible educational uses appear to pose little threat based on current evidence. That is reassuring as far as it goes. But the same literature does not prove that extended, unsupervised nanny-like use is safe. Those are different claims.

Attachment is the other reason to slow down. The OECD chapter notes that personalization and social presence can increase engagement. That may help learning in short, structured settings. But it also means designers can make a robot feel unusually compelling. High engagement is not the same thing as a healthy attachment, and it can shade into dependence.

If you want the wider cognitive version of this distinction, MindoxAI’s explainers on AI vs Human Intelligence and Why AGI Still Can’t Think Like a Human Mind make the underlying point clearly: competent output is not the same as human understanding.

A practical decision framework for parents and schools

The safest way to think about childcare robots and educational tech is to sort use cases into green-light, yellow-light, and red-line zones.

Green-light use cases

These are the strongest candidates:

- short, supervised learning sessions

- drill-based language or reading practice

- assistive support in inclusive classrooms

- structured activities where an adult sets the goal, monitors the interaction, and reviews the outcome

The common feature is clear adult control.

Yellow-light use cases

These uses are not automatically wrong, but they deserve stricter review:

- home robots that use personalization, memory, or emotional language to increase engagement

- robots connected to apps or cloud dashboards that store child interaction history

- devices marketed as companions rather than clearly described educational tools

- systems used daily for long stretches without regular adult observation

These cases may be workable, but only if privacy controls, override mechanisms, and limits on use are explicit.

Red-line use cases

These are the uses parents and schools should treat with the most skepticism:

- replacing core babysitting or caregiving with a robot

- relying on the robot for discipline, emotional soothing, or moral guidance

- leaving a young child alone with a semi-autonomous robot as if it were a trusted adult

- buying a system whose vendor cannot clearly explain data handling, failure handling, and human oversight
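For readers who like the sorting rule spelled out, the three zones above can be sketched as a short checklist function. This is a minimal Python sketch, not a validated assessment tool. The names `RobotUse`, `classify`, and every field name are hypothetical and made up for illustration. The rule that any single red-line answer overrides everything else is an assumption drawn from the lists above, not a published standard.

```python
from dataclasses import dataclass
from enum import Enum


class Zone(Enum):
    GREEN = "green-light"
    YELLOW = "yellow-light"
    RED = "red-line"


@dataclass
class RobotUse:
    """Hypothetical yes/no answers describing one planned use of a robot."""

    # Red-line signals (from the red-line list)
    replaces_core_caregiving: bool = False
    used_for_discipline_soothing_or_moral_guidance: bool = False
    young_child_left_alone_with_robot: bool = False
    vendor_explains_data_failure_and_oversight: bool = True

    # Yellow-light signals (from the yellow-light list)
    uses_personalization_memory_or_emotional_language: bool = False
    stores_child_history_in_app_or_cloud: bool = False
    marketed_as_companion: bool = False
    long_daily_use_without_adult_observation: bool = False

    # Green-light requirement: an adult sets the goal, monitors, and reviews
    adult_sets_goal_monitors_and_reviews: bool = True


def classify(use: RobotUse) -> Zone:
    """Return the most cautious zone that any single answer triggers."""
    red_flags = [
        use.replaces_core_caregiving,
        use.used_for_discipline_soothing_or_moral_guidance,
        use.young_child_left_alone_with_robot,
        not use.vendor_explains_data_failure_and_oversight,
    ]
    if any(red_flags):
        return Zone.RED

    yellow_flags = [
        use.uses_personalization_memory_or_emotional_language,
        use.stores_child_history_in_app_or_cloud,
        use.marketed_as_companion,
        use.long_daily_use_without_adult_observation,
        # Assumption: a use without clear adult control cannot be green,
        # so this sketch sends it to yellow-light review at minimum.
        not use.adult_sets_goal_monitors_and_reviews,
    ]
    if any(yellow_flags):
        return Zone.YELLOW

    return Zone.GREEN


if __name__ == "__main__":
    examples = {
        "supervised tutoring": RobotUse(),
        "cloud-connected companion": RobotUse(
            marketed_as_companion=True,
            stores_child_history_in_app_or_cloud=True,
        ),
        "robot nanny": RobotUse(replaces_core_caregiving=True),
    }
    for name, use in examples.items():
        print(f"{name} -> {classify(use).value}")
```

The ordering is the point of the sketch. A single red-line answer places a use in the red zone no matter how many green-light features it has. That reflects the rule that the safety bar rises as the robot moves closer to acting like a stand-in adult.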

For schools, the checklist should be just as direct:

- Define the task narrowly.

- Require teacher control and override.

- Audit data collection and retention.

- Test for distraction, not just engagement.

- Review whether the robot saves teacher time or quietly adds workload.

If a vendor cannot answer those questions cleanly, the product is not ready for child-facing deployment.

Final thoughts

Humanoid robots in education deserve a more precise conversation than they usually get. The useful question is not whether robots are good or bad for children in the abstract. It is whether the robot is being used as a supervised tool for a narrow purpose, or as a substitute for the human work of raising and guiding a child.

That is where the safety line should sit. A robot may help with tutoring, repetition, accessibility, or engagement. It should not be trusted to carry the emotional, moral, and relational load of caregiving. Safe helpers are plausible. Safe nannies are a much harder case, and right now the evidence does not justify pretending otherwise.